mirror of

https://github.com/langgenius/dify-docs.git

synced 2026-03-27 13:28:32 +07:00

Docs: update metadata content english

This commit is contained in:

44

docs.json

44

docs.json

@@ -140,21 +140,38 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"group": "Knowledge Base",

|

||||

"group": "Knowledge",

|

||||

"pages": [

|

||||

"en-us/user-guide/knowledge-base/readme",

|

||||

{

|

||||

"group": "Create Knowledge Base",

|

||||

"group": "Create Knowledge",

|

||||

"pages": [

|

||||

"en-us/user-guide/knowledge-base/knowledge-base-creation/upload-documents",

|

||||

"en-us/user-guide/knowledge-base/knowledge-base-creation/sync-from-notion",

|

||||

"en-us/user-guide/knowledge-base/knowledge-base-creation/sync-from-website",

|

||||

"en-us/user-guide/knowledge-base/knowledge-base-creation/connect-external-knowledge"

|

||||

"en-us/user-guide/knowledge-base/knowledge-base-creation/introduction",

|

||||

{

|

||||

"group": "1. Import Text Data",

|

||||

"pages": [

|

||||

"en-us/user-guide/knowledge-base/create-knowledge-and-upload-documents/import-content-data/readme",

|

||||

"en-us/user-guide/knowledge-base/create-knowledge-and-upload-documents/import-content-data/sync-from-notion",

|

||||

"en-us/user-guide/knowledge-base/create-knowledge-and-upload-documents/import-content-data/sync-from-website"

|

||||

]

|

||||

},

|

||||

"en-us/user-guide/knowledge-base/create-knowledge-and-upload-documents/chunking-and-cleaning-text",

|

||||

"en-us/user-guide/knowledge-base/create-knowledge-and-upload-documents/setting-indexing-methods"

|

||||

]

|

||||

},

|

||||

"en-us/user-guide/knowledge-base/retrieval-test-and-citation",

|

||||

"en-us/user-guide/knowledge-base/knowledge-and-documents-maintenance",

|

||||

{

|

||||

"group": "Manage Knowledge",

|

||||

"pages": [

|

||||

"en-us/user-guide/knowledge-base/knowledge-and-documents-maintenance/introduction",

|

||||

"en-us/user-guide/knowledge-base/knowledge-and-documents-maintenance/maintain-knowledge-documents",

|

||||

"en-us/user-guide/knowledge-base/knowledge-and-documents-maintenance/maintain-dataset-via-api"

|

||||

]

|

||||

},

|

||||

"en-us/user-guide/knowledge-base/metadata",

|

||||

"en-us/user-guide/knowledge-base/integrate-knowledge-within-application",

|

||||

"en-us/user-guide/knowledge-base/faq"

|

||||

"en-us/user-guide/knowledge-base/retrieval-test-and-citation",

|

||||

"en-us/user-guide/knowledge-base/connect-external-knowledge-base",

|

||||

"en-us/user-guide/knowledge-base/api-documentation/external-knowledge-api-documentation"

|

||||

]

|

||||

},

|

||||

{

|

||||

@@ -367,7 +384,7 @@

|

||||

"zh-cn/user-guide/knowledge-base/metadata",

|

||||

"zh-cn/user-guide/knowledge-base/integrate-knowledge-within-application",

|

||||

"zh-cn/user-guide/knowledge-base/retrieval-test-and-citation",

|

||||

"zh-cn/user-guide/knowledge-base/knowledge-base-creation/connect-external-knowledge-base",

|

||||

"zh-cn/user-guide/knowledge-base/connect-external-knowledge-base",

|

||||

"zh-cn/user-guide/knowledge-base/api-documentation/external-knowledge-api-documentation"

|

||||

]

|

||||

},

|

||||

@@ -677,7 +694,12 @@

|

||||

"label": "Support",

|

||||

"href": "mailto:support@dify.ai"

|

||||

}

|

||||

]

|

||||

],

|

||||

"primary": {

|

||||

"type": "button",

|

||||

"label": "Dify.AI",

|

||||

"href": "https://cloud.dify.ai"

|

||||

}

|

||||

},

|

||||

"footer": {

|

||||

"socials": {

|

||||

|

||||

@@ -1,46 +0,0 @@

|

||||

# Welcome to Dify

|

||||

|

||||

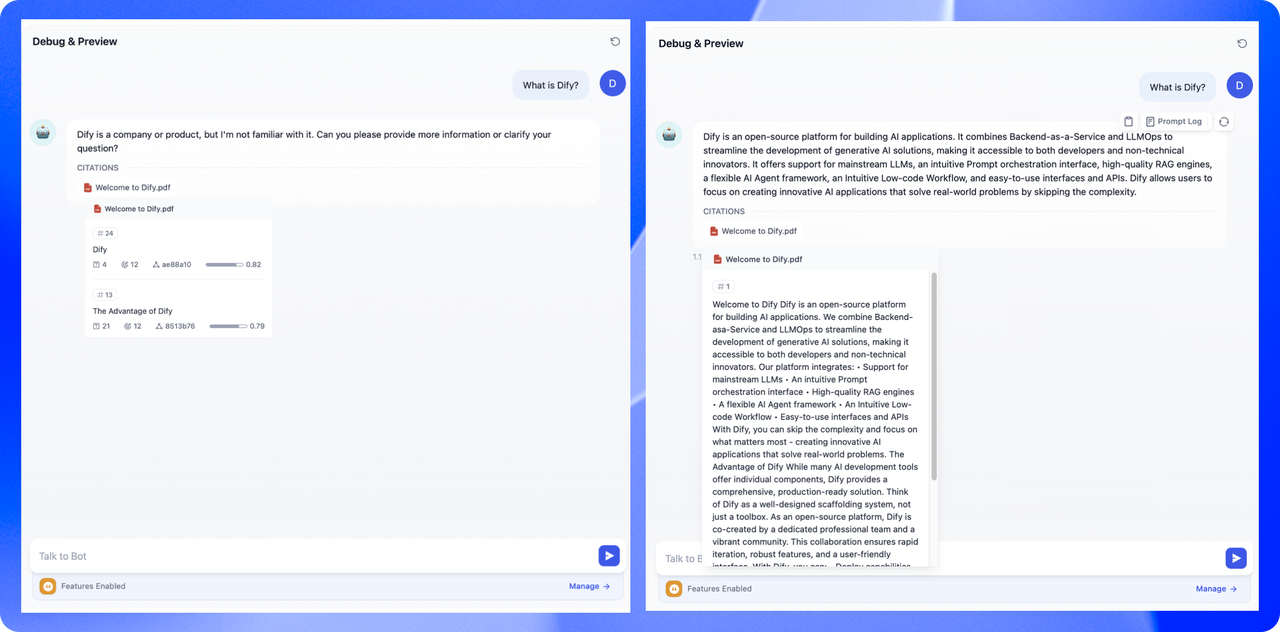

Dify is an open-source platform for building AI applications. We combine Backend-as-a-Service and LLMOps to streamline the development of generative AI solutions, making it accessible to both developers and non-technical innovators.

|

||||

|

||||

Our platform integrates:

|

||||

|

||||

- Support for mainstream LLMs

|

||||

- An intuitive Prompt orchestration interface

|

||||

- High-quality RAG engines

|

||||

- A flexible AI Agent framework

|

||||

- An Intuitive Low-code Workflow

|

||||

- Easy-to-use interfaces and APIs

|

||||

|

||||

With Dify, you can skip the complexity and focus on what matters most - creating innovative AI applications that solve real-world problems.

|

||||

|

||||

### The Advantage of Dify

|

||||

|

||||

While many AI development tools offer individual components, Dify provides a comprehensive, production-ready solution. Think of Dify as a well-designed scaffolding system, not just a toolbox.

|

||||

|

||||

As an open-source platform, Dify is co-created by a dedicated professional team and a vibrant community. This collaboration ensures rapid iteration, robust features, and a user-friendly interface.

|

||||

|

||||

With Dify, you can:

|

||||

|

||||

- Deploy capabilities similar to Assistants API and GPTs using any model

|

||||

- Maintain full control over your data with flexible security options

|

||||

- Leverage an intuitive interface for easy management and deployment

|

||||

|

||||

### Dify

|

||||

|

||||

{% hint style="info" %} The name Dify comes from "Define + Modify", referring to defining and continuously improving your AI applications. It's made for you. {% endhint %}

|

||||

|

||||

Here's how various groups are leveraging Dify:

|

||||

|

||||

1. **Startups**: Rapidly prototype and iterate on AI ideas, accelerating both successes and failures. Numerous teams have used Dify to build MVPs, secure funding, and win customer contracts.

|

||||

2. **Established Businesses**: Enhance existing applications with LLM capabilities. Use Dify's RESTful APIs to separate prompts from business logic, while utilizing our management interface to track data, costs, and usage.

|

||||

3. **Enterprise AI infrastructure**: Banks and tech companies are deploying Dify as an internal LLM gateway, facilitating GenAI adoption with centralized governance.

|

||||

4. **AI Enthusiasts and Learners**: Practice prompt engineering and explore agent technologies with ease. Over 60,000 developers built their first AI app on Dify even before GPTs were introduced. Since then, our community has grown significantly, now boasting over 180,000 developers and supporting 59,000+ end users.

|

||||

|

||||

Whether you're a startup founder, an enterprise developer, or an AI enthusiast, Dify is designed to meet your needs and accelerate your AI journey!

|

||||

|

||||

### Next Steps

|

||||

|

||||

- Read [**Quick Start**](https://docs.dify.ai/application/creating-an-application) for an overview of Dify’s application building workflow.

|

||||

- Learn how to [**self-deploy Dify** ](https://docs.dify.ai/getting-started/install-self-hosted)to your servers and [**integrate open source models**](https://docs.dify.ai/advanced/model-configuration)**.**

|

||||

- Understand Dify’s [**specifications and roadmap**](https://docs.dify.ai/getting-started/readme/features-and-specifications)**.**

|

||||

- [**Star us on GitHub**](https://github.com/langgenius/dify) and read our **Contributor Guidelines.**

|

||||

61

en-us/user-guide/build-app/agent.mdx

Normal file

61

en-us/user-guide/build-app/agent.mdx

Normal file

@@ -0,0 +1,61 @@

|

||||

---

|

||||

title: Agent

|

||||

---

|

||||

|

||||

## Definition

|

||||

|

||||

An Agent Assistant can leverage the reasoning abilities of large language models (LLMs). It independently sets goals, simplifies complex tasks, operates tools, and refines processes to complete tasks autonomously.

|

||||

|

||||

## Usage Instructions

|

||||

|

||||

To facilitate quick learning and use, application templates for the Agent Assistant are available in the 'Explore' section. You can integrate these templates into your workspace. The new Dify 'Studio' also allows the creation of a custom Agent Assistant to suit individual requirements. This assistant can assist in analyzing financial reports, composing reports, designing logos, and organizing travel plans.

|

||||

|

||||

|

||||

|

||||

The task completion ability of the Agent Assistant depends on the inference capabilities of the model selected. We recommend using a more powerful model series like GPT-4 when employing Agent Assistant to achieve more stable task completion results.

|

||||

|

||||

|

||||

|

||||

You can write prompts for the Agent Assistant in 'Instructions'. To achieve optimal results, you can clearly define its task objectives, workflow, resources, and limitations in the instructions.

|

||||

|

||||

|

||||

|

||||

## Adding Tools for the Agent Assistant

|

||||

|

||||

In the "Context" section, you can incorporate knowledge base tools that the Agent Assistant can utilize for information retrieval. This will assist in providing it with external background knowledge.

|

||||

|

||||

In the "Tools" section, you are able to add tools that are required for use. These tools can enhance the capabilities of LLMs, such as internet searches, scientific computations, or image creation, thereby enriching the LLM's ability to interact with the real world. Dify offers two types of tools: **built-in tools and custom tools.**

|

||||

|

||||

You have the option to directly use built-in tools in Dify, or you can easily import custom API tools (currently supporting OpenAPI/Swagger and OpenAI Plugin standards).

|

||||

|

||||

|

||||

|

||||

The **Tools** feature allows you to create more powerful AI applications on Dify. For example, you can orchestrate suitable tools for Agent Assistant, enabling it to complete complex tasks through reasoning, step decomposition, and tool invocation.

|

||||

|

||||

Additionally, the tool simplifies the integration of your application with other systems or services, enabling interactions with the external environment, such as executing code or accessing proprietary information sources. Simply mention the name of the tool you want to invoke in the chat box, and it will be automatically activated.

|

||||

|

||||

|

||||

|

||||

## Agent Settings

|

||||

|

||||

On Dify, two inference modes are provided for Agent Assistant: Function Calling and ReAct. Models like GPT-3.5 and GPT-4 that support Function Calling have demonstrated better and more stable performance. For model series that do not support Function Calling, we have implemented the ReAct inference framework to achieve similar effects.

|

||||

|

||||

In the Agent settings, you can modify the iteration limit of the Agent.

|

||||

|

||||

|

||||

|

||||

## Configuring the Conversation Opener

|

||||

|

||||

You can set up a conversation opener and initial questions for your Agent Assistant. The configured conversation opener will be displayed at the beginning of each user's first interaction, showcasing the types of tasks the Agent can perform, along with examples of questions that can be asked.

|

||||

|

||||

|

||||

|

||||

## Debugging and Preview

|

||||

|

||||

After orchestrating the intelligent assistant, you can debug and preview it before publishing as an application to verify the assistant's task completion effectiveness.

|

||||

|

||||

|

||||

|

||||

## Publish Application

|

||||

|

||||

|

||||

81

en-us/user-guide/build-app/chatbot.mdx

Normal file

81

en-us/user-guide/build-app/chatbot.mdx

Normal file

@@ -0,0 +1,81 @@

|

||||

---

|

||||

title: Chatbot Application

|

||||

---

|

||||

|

||||

Conversation applications use a one-question-one-answer mode to have a continuous conversation with the user.

|

||||

|

||||

### Applicable scenarios

|

||||

|

||||

Conversation applications can be used in fields such as customer service, online education, healthcare, financial services, etc. These applications can help organizations improve work efficiency, reduce labor costs, and provide a better user experience.

|

||||

|

||||

### How to compose

|

||||

|

||||

Conversation applications supports: prompts, variables, context, opening remarks, and suggestions for the next question.

|

||||

|

||||

Here, we use a interviewer application as an example to introduce the way to compose a conversation applications.

|

||||

|

||||

#### Step 1 Create an application

|

||||

|

||||

Click the "Create Application" button on the homepage to create an application. Fill in the application name, and select **"Chat App"** as the application type.

|

||||

|

||||

|

||||

|

||||

#### Step 2: Compose the Application

|

||||

|

||||

After the application is successfully created, it will automatically redirect to the application overview page. Click on the button on the left menu: **"Orchestrate"** to compose the application.

|

||||

|

||||

|

||||

|

||||

**2.1 Fill in Prompts**

|

||||

|

||||

Prompt phrases are used to guide AI in providing professional responses, making the replies more precise. You can utilize the built-in prompt generator to craft suitable prompts. Prompts support the insertion of form variables, such as `{{input}}`. The values in the prompt variables will be replaced with the values filled in by the user.

|

||||

|

||||

Example:

|

||||

|

||||

1. Enter the interview scenario command.

|

||||

2. The prompt will automatically generate on the right side content box.

|

||||

3. You can insert custom variables within the prompt to tailor it to specific needs or details.

|

||||

|

||||

For a better experience, we will add an opening dialogue: `"Hello, {{name}}. I'm your interviewer, Bob. Are you ready?"`

|

||||

|

||||

To add the opening dialogue, click the "Add Feature" button in the upper left corner, and enable the "Conversation remarkers" feature:

|

||||

|

||||

|

||||

|

||||

And then edit the opening remarks:

|

||||

|

||||

|

||||

|

||||

**2.2 Adding Context**

|

||||

|

||||

If an application wants to generate content based on private contextual conversations, it can use our [knowledge](/en-us/knowledge-base/introduction) feature. Click the "Add" button in the context to add a knowledge base.

|

||||

|

||||

|

||||

|

||||

**2.3 Debugging**

|

||||

|

||||

Enter user inputs on the right side and check the respond content.

|

||||

|

||||

|

||||

|

||||

If the results are not satisfactory, you can adjust the prompts and model parameters. Click on the model name in the upper right corner to set the parameters of the model:

|

||||

|

||||

|

||||

|

||||

**Debugging with multiple models:**

|

||||

|

||||

If debugging with a single model feels inefficient, you can utilize the **Debug as Multiple Models** feature to batch-test the models’ response effectiveness.

|

||||

|

||||

|

||||

|

||||

Supports adding up to 4 LLMs at the same time.

|

||||

|

||||

|

||||

|

||||

> ⚠️ When using the multi-model debugging feature, if only some large models are visible, it is because other large models’ keys have not been added yet. You can manually add multiple models’ keys in [“Add New Provider”](/en-us/user-guide/models/new-provider).

|

||||

|

||||

**2.4 Publish App**

|

||||

|

||||

After debugging your application, click the **"Publish"** button in the top right corner to create a standalone AI application. In addition to experiencing the application via a public URL, you can also perform secondary development based on APIs, embed it into websites, and more. For details, please refer to [Publishing](/en-us/user-guide/application-publishing/launch-your-webapp-quickly/web-app-settings).

|

||||

|

||||

If you want to customize the application that you share, you can Fork our open source [WebApp template](https://github.com/langgenius/webapp-conversation). Based on the template, you can modify the application to meet your specific needs and style requirements.

|

||||

130

en-us/user-guide/build-app/flow-app/additional-features.mdx

Normal file

130

en-us/user-guide/build-app/flow-app/additional-features.mdx

Normal file

@@ -0,0 +1,130 @@

|

||||

---

|

||||

title: Additional Features

|

||||

version: 'English'

|

||||

---

|

||||

|

||||

Both Workflow and Chatflow applications support enabling additional features to enhance the user interaction experience. For example, adding a file upload entry, giving the LLM application a self-introduction, or using a welcome message can provide users with a richer interactive experience.

|

||||

|

||||

Click the **"Features"** button in the upper right corner of the application to add more functionality.

|

||||

|

||||

<iframe

|

||||

src="https://app.arcade.software/share/a0tbwuEIT5I3y5RdHsJp"

|

||||

frameborder="0"

|

||||

width="100%"

|

||||

height="500px"

|

||||

allowfullscreen

|

||||

></iframe>

|

||||

|

||||

#### Workflow

|

||||

|

||||

Workflow type applications only support the **"Image Upload"** feature. When enabled, an image upload entry will appear on the usage page of the Workflow application.

|

||||

|

||||

<iframe

|

||||

src="https://app.arcade.software/share/DqlK9RV79K25ElxMq1BJ"

|

||||

frameborder="0"

|

||||

width="100%"

|

||||

height="500px"

|

||||

allowfullscreen

|

||||

></iframe>

|

||||

|

||||

**Usage:**

|

||||

|

||||

**For application users:** Applications with image upload enabled will display an upload button on the usage page. Click the button or paste a file link to complete the image upload. You will receive the LLM's response to the image.

|

||||

|

||||

**For application developers:** After enabling the image upload feature, the uploaded image files will be stored in the `sys.files` variable. Next, add an LLM node, select a large model with vision capabilities, and enable the VISION feature within it. Choose the `sys.files` variable to allow the LLM to read the image file.

|

||||

|

||||

Finally, select the output variable of the LLM node in the END node to complete the setup.

|

||||

|

||||

<Frame caption="Enabling visual analysis capabilities">

|

||||

<img src="/ja-jp/user-guide/.gitbook/assets/image (7).png" alt="Setting interface for enabling visual analysis capabilities in LLM nodes" />

|

||||

</Frame>

|

||||

|

||||

#### Chatflow

|

||||

|

||||

Chatflow type applications support the following features:

|

||||

|

||||

* **Conversation Opener**

|

||||

|

||||

Allow AI to proactively send a message, which can be a welcome message or AI self-introduction, to bring it closer to the user.

|

||||

* **Follow-up**

|

||||

|

||||

Automatically add suggestions for the next question after the conversation is complete, to increase the depth and frequency of dialogue topics.

|

||||

* **Text-to-Speech**

|

||||

|

||||

Add an audio playback button in the Q&A text box, using a TTS service (needs to be set up in Model Providers) to read out the text.

|

||||

* **File Upload**

|

||||

|

||||

Supports the following file types: documents, images, audio, video, and other file types. After enabling this feature, application users can upload and update files at any time during the application dialogue. A maximum of 10 files can be uploaded simultaneously, with a size limit of 15MB per file.

|

||||

|

||||

<Frame caption="ファイルのアップロード機能">

|

||||

<img src="/ja-jp/user-guide/.gitbook/assets/image (8).png" alt="Chatflow应用中文件上传功能的设置界面" />

|

||||

</Frame>

|

||||

|

||||

* **Citation and Attribution**

|

||||

|

||||

Commonly used in conjunction with the "Knowledge Retrieval" node to display the reference source documents and attribution parts of the LLM's responses.

|

||||

* **Content Moderation**

|

||||

|

||||

Supports using moderation APIs to maintain a sensitive word library, ensuring that the LLM can respond and output safe content. For detailed instructions, please refer to Sensitive Content Moderation.

|

||||

|

||||

**Usage:**

|

||||

|

||||

Except for the **File Upload** feature, the usage of other features in Chatflow applications is relatively simple. Once enabled, they can be intuitively used on the application interaction page.

|

||||

|

||||

This section will mainly introduce the specific usage of the **File Upload** feature:

|

||||

|

||||

**For application users:** Chatflow applications with file upload enabled will display a "paperclip" icon on the right side of the dialogue box. Click it to upload files and interact with the LLM.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-8.png" alt="Upload file" />

|

||||

</Frame>

|

||||

|

||||

**For application developers:**

|

||||

|

||||

After enabling the file upload feature, the files users send will be uploaded in the `sys.files` variable. It will be updated after the user sends a new message in the same conversation turn.

|

||||

|

||||

Different types of files correspond to different application orchestration methods based on the uploaded file differences.

|

||||

|

||||

* **Document Files**

|

||||

|

||||

LLMs do not have the ability to directly read document files, so a Document Extractor node is needed to preprocess the files in the `sys.files` variable. The orchestration steps are as follows:

|

||||

|

||||

1. Enable the Features function and only check "Documents" in the file types.

|

||||

2. Select the `sys.files` variable in the input variables of the Document Extractor node.

|

||||

3. Add an LLM node and select the output variable of the document extractor node in the system prompt.

|

||||

4. Add a "Direct Reply" node at the end, filling in the output variable of the LLM node.

|

||||

|

||||

Chatflow applications built using this method cannot remember the content of uploaded files. Application users need to upload document files in the chat box for each conversation. If you want the application to remember uploaded files, please refer to File Upload: Adding Variables in the Start Node.

|

||||

|

||||

* **Image Files**

|

||||

|

||||

Some LLMs support directly obtaining information from images, so no additional nodes are needed to process images.

|

||||

|

||||

The orchestration steps are as follows:

|

||||

|

||||

1. Enable the Features function and only check "Images" in the file types.

|

||||

2. Add an LLM node, enable the VISION feature, and select the `sys.files` variable.

|

||||

3. Add a "Answer" node at the end, filling in the output variable of the LLM node.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-9.png" alt="Enable vision" />

|

||||

</Frame>

|

||||

|

||||

* **Mixed File Types**

|

||||

|

||||

If you want the application to have the ability to process both document files and image files simultaneously, you need to use the List Operation node to preprocess the files in the `sys.files` variable, extract more refined variables, and send them to the corresponding processing nodes. The orchestration steps are as follows:

|

||||

|

||||

1. Enable the Features function and check both "Images" and "Document Files" types.

|

||||

2. Add two list operation nodes, extracting image and document variables in the "Filter" condition.

|

||||

3. Extract document file variables and pass them to the "Document Extractor" node; extract image file variables and pass them to the "LLM" node.

|

||||

4. Add a "Direct Reply" node at the end, filling in the output variable of the LLM node.

|

||||

|

||||

After the application user uploads both document files and images, document files are automatically diverted to the document extractor node, and image files are automatically diverted to the LLM node to achieve joint processing of files.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-10.png" alt="Mixed File Types" />

|

||||

</Frame>

|

||||

|

||||

* **Audio and Video Files**

|

||||

|

||||

LLMs do not yet support direct reading of audio and video files, and the Dify platform has not yet built-in related file processing tools. Application developers can refer to [External Data Tools](/extension/api-based-extension/external-data-tool) to integrate tools for processing file information themselves.

|

||||

38

en-us/user-guide/build-app/flow-app/concepts.mdx

Normal file

38

en-us/user-guide/build-app/flow-app/concepts.mdx

Normal file

@@ -0,0 +1,38 @@

|

||||

---

|

||||

title: Concepts

|

||||

version: 'English'

|

||||

---

|

||||

|

||||

### Nodes

|

||||

|

||||

**Nodes are the key components of a workflow**. By connecting nodes with different functionalities, you can execute a series of operations within the workflow.

|

||||

|

||||

For core workflow nodes, please refer to [Node - Start](/en-us/user-guide/build-app/flow-app/nodes/start).

|

||||

|

||||

***

|

||||

|

||||

### Variables

|

||||

|

||||

**Variables are used to link the input and output of nodes within a workflow**, enabling complex processing logic throughout the process. Fore more details, please take refer to [Variables](/en-us/user-guide/build-app/flow-app/variables).

|

||||

|

||||

***

|

||||

|

||||

### Chatflow and Workflow

|

||||

|

||||

**Application Scenarios**

|

||||

|

||||

* **Chatflow**: Designed for conversational scenarios, including customer service, semantic search, and other conversational applications that require multi-step logic in response construction.

|

||||

* **Workflow**: Geared towards automation and batch processing scenarios, suitable for high-quality translation, data analysis, content generation, email automation, and more.

|

||||

|

||||

**Usage Entry Points**

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

**Differences in Available Nodes**

|

||||

|

||||

1. The End node is an ending node for Workflow and can only be selected at the end of the process.

|

||||

2. The Answer node is specific to Chatflow, used for streaming text output, and can output at intermediate steps in the process.

|

||||

3. Chatflow has built-in chat memory (Memory) for storing and passing multi-turn conversation history, which can be enabled in nodes like LLM and question classifiers. Workflow does not have Memory-related configurations and cannot enable them.

|

||||

4. Built-in variables for Chatflow's start node include: `sys.query`, `sys.files`, `sys.conversation_id`, `sys.user_id`. Built-in variables for Workflow's start node include: `sys.files`, `sys_id`.

|

||||

35

en-us/user-guide/build-app/flow-app/create-flow-app.mdx

Normal file

35

en-us/user-guide/build-app/flow-app/create-flow-app.mdx

Normal file

@@ -0,0 +1,35 @@

|

||||

---

|

||||

title: Creating Applications

|

||||

version: 'English'

|

||||

---

|

||||

|

||||

## Chatflow

|

||||

|

||||

**Use Cases:**

|

||||

|

||||

Suitable for conversational scenarios, including customer service, semantic search, and other dialogue-based applications that require multiple logical steps when constructing responses. The distinctive feature of this type of application is its support for multi-round conversational interactions with generated results, allowing for adjustment of the generated content.

|

||||

|

||||

Common interaction path: Give instructions → Generate content → Multiple discussions about the content → Regenerate results → End.

|

||||

|

||||

On the "Studio" page, click "Create Blank Application" on the left, then select "Workflow Orchestration" under "Chat Assistant."

|

||||

|

||||

|

||||

|

||||

## Workflow

|

||||

|

||||

**Use Cases:**

|

||||

|

||||

Suitable for automation and batch processing scenarios, ideal for high-quality translation, data analysis, content generation, email automation, and similar applications. This type of application does not support multi-round conversational interactions with generated results.

|

||||

|

||||

Common interaction path: Give instructions → Generate content → End

|

||||

|

||||

On the "Studio" page, click "Create Blank Application" on the left, then select "Workflow" to complete the creation.

|

||||

|

||||

|

||||

|

||||

## Differences Between the Two Application Type

|

||||

|

||||

1. The End node belongs to Workflow as a termination node and can only be selected at the end of the process.

|

||||

2. The Answer node belongs to Chatflow, used for streaming text content output and supports output during intermediate steps of the process.

|

||||

3. Chatflow has built-in chat memory (Memory) for storing and transmitting historical messages from multiple rounds of conversation, which can be enabled in LLM, question classification, and other nodes. Workflow has no Memory-related configuration and cannot be enabled.

|

||||

4. Chatflow's start node built-in variables include: `sys.query`, `sys.files`, `sys.conversation_id`, `sys.user_id`. Workflow's start node built-in variables include: `sys.files`, `sys.user_id`

|

||||

151

en-us/user-guide/build-app/flow-app/file-upload.mdx

Normal file

151

en-us/user-guide/build-app/flow-app/file-upload.mdx

Normal file

@@ -0,0 +1,151 @@

|

||||

---

|

||||

title: File Upload

|

||||

version: 'English'

|

||||

---

|

||||

|

||||

Compared to chat text, document files can contain vast amounts of information, such as academic reports and legal contracts. However, Large Language Models (LLMs) are inherently limited to processing only text or images, making it challenging to extract the rich contextual information within these files. As a result, application users often resort to manually copying and pasting large amounts of information to converse with LLMs, significantly increasing unnecessary operational overhead.

|

||||

|

||||

The file upload feature addresses this limitation by allowing files to be uploaded, parsed, referenced, and downloaded as File variables within workflow applications. **This empowers developers to easily construct complex workflows capable of understanding and processing various media types, including images, audio, and video.**

|

||||

|

||||

## Application Scenarios

|

||||

|

||||

1. **Document Analysis**: Upload academic research reports for LLMs to quickly summarize key points and answer related questions based on the file content.

|

||||

2. **Code Review**: Developers can upload code files to receive optimization suggestions and bug detection.

|

||||

3. **Learning Assistance**: Students can upload assignments or study materials for personalized explanations and guidance.

|

||||

4. **Legal Aid**: Upload complete contract texts for LLMs to assist in reviewing clauses and identifying potential risks.

|

||||

|

||||

## Difference Between File Upload and Knowledge Base

|

||||

|

||||

Both file upload and knowledge base provide additional contextual information for LLMs, but they differ significantly in usage scenarios and functionality:

|

||||

|

||||

1. **Information Source**:

|

||||

* File Upload: Allows end-users to dynamically upload files during conversations, providing immediate, personalized contextual information.

|

||||

* Knowledge Base: Pre-set and managed by application developers, containing a relatively fixed set of information.

|

||||

2. **Usage Flexibility**:

|

||||

* File Upload: More flexible, users can upload different types of files based on specific needs.

|

||||

* Knowledge Base: Content is relatively fixed but can be reused across multiple sessions.

|

||||

3. **Information Processing**:

|

||||

* File Upload: Requires document extractors or other tools to convert file content into text that LLMs can understand.

|

||||

* Knowledge Base: Usually pre-processed and indexed, ready for direct retrieval.

|

||||

4. **Application Scenarios**:

|

||||

* File Upload: Suitable for scenarios that need to process user-specific documents, such as document analysis, personalized learning assistance, etc.

|

||||

* Knowledge Base: Suitable for scenarios that require access to a large amount of preset information, such as customer service, product inquiries, etc.

|

||||

5. **Data Persistence**:

|

||||

* File Upload: Typically for temporary use, not stored long-term in the system.

|

||||

* Knowledge Base: Exists as a long-term part of the application, can be continuously updated and maintained.

|

||||

|

||||

## Quick Start

|

||||

|

||||

Dify supports file uploads in both [ChatFlow](/en-us/user-guide/build-app/flow-app/create-flow-app#chatflow) and [WorkFlow](/en-us/user-guide/build-app/flow-app/create-flow-app#workflow) type applications, processing them through variables for LLMs. Application developers can refer to the following methods to enable file upload functionality:

|

||||

|

||||

* In Workflow applications:

|

||||

* Add file variables in the ["Start Node"](/en-us/user-guide/build-app/flow-app/nodes/start)

|

||||

* In ChatFlow applications:

|

||||

* Enable file upload in ["Additional Features"](/en-us/user-guide/build-app/flow-app/additional-features) to allow direct file uploads in the chat window

|

||||

* Add file variables in the "[Start Node"](/en-us/user-guide/build-app/flow-app/nodes/start)

|

||||

* Note: These two methods can be configured simultaneously and are independent of each other. The file upload settings in additional features (including upload method and quantity limit) do not affect the file variables in the start node. For example, if you only want to create file variables through the start node, you don't need to enable the file upload feature in additional features.

|

||||

|

||||

These two methods provide flexible file upload options for applications to meet the needs of different scenarios.

|

||||

|

||||

**File Types**

|

||||

|

||||

| File Type | Supported Formats |

|

||||

|-----------|------------------|

|

||||

| Documents | TXT, MARKDOWN, PDF, HTML, XLSX, XLS, DOCX, CSV, EML, MSG, PPTX, PPT, XML, EPUB |

|

||||

| Images | JPG, JPEG, PNG, GIF, WEBP, SVG |

|

||||

| Audio | MP3, M4A, WAV, WEBM, AMR |

|

||||

| Video | MP4, MOV, MPEG, MPGA |

|

||||

| Others | Custom file extension support |

|

||||

|

||||

**Method 1: Enable File Upload in Application Chat Box (Chatflow Only)**

|

||||

|

||||

1. Click the **"Features"** button in the upper right corner of the Chatflow application to add more functionality to the application. After enabling this feature, application users can upload and update files at any time during the application dialogue. A maximum of 10 files can be uploaded simultaneously, with a size limit of 15MB per file.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-1-3.png" alt="File upload feature" />

|

||||

</Frame>

|

||||

|

||||

Enabling this feature does not grant LLMs the ability to directly read files. A **Document Extractor** is still needed to parse documents into text for LLM comprehension.

|

||||

|

||||

* For audio files, models like `gpt-4o-audio-preview` that support multimodal input can process audio directly without additional extractors.

|

||||

* For video and other file types, there are currently no corresponding extractors. Application developers need to [integrate external tools](/en-us/user-guide/tools/extensions/api-based/external-data-tool) for processing.

|

||||

|

||||

2. Add a Document Extractor node, and select the `sys.files` variable in the input variables.

|

||||

3. Add an LLM node and select the output variable of the Document Extractor node in the system prompt.

|

||||

4. Add an "Answer" node at the end, filling in the output variable of the LLM node.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-2-4.png" alt="Document extractor workflow" />

|

||||

</Frame>

|

||||

|

||||

Once enabled, users can upload files and engage in conversations in the dialogue box. However, with this method, the LLM application does not have the ability to remember file contents, and files need to be uploaded for each conversation.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-3-3.png" alt="Chat interface" />

|

||||

</Frame>

|

||||

|

||||

If you want the LLM to remember file contents during conversations, please refer to Method 2.

|

||||

|

||||

**Method 2: Enable File Upload by Adding File Variables**

|

||||

|

||||

**1. Add File Variables in the "Start" Node**

|

||||

|

||||

Add input fields in the application's "Start" node, choosing either **"Single File"** or **"File List"** as the field type for the variable.

|

||||

|

||||

* **Single File**: Allows the application user to upload only one file.

|

||||

* **File List**: Allows the application user to batch upload multiple files at once.

|

||||

|

||||

> For ease of operation, we will use a single file variable as an example.

|

||||

|

||||

**File Parsing**

|

||||

|

||||

There are two main ways to use file variables:

|

||||

|

||||

1. Using tool nodes to convert file content:

|

||||

* For document-type files, you can use the "Document Extractor" node to convert file content into text form.

|

||||

* This method is suitable for cases where file content needs to be parsed into a format that the model can understand (such as string, array[string], etc.).

|

||||

2. Using file variables directly in LLM nodes:

|

||||

* For certain types of files (such as images), you can use file variables directly in LLM nodes.

|

||||

* For example, for file variables of image type, you can enable the vision feature in the LLM node and then directly reference the corresponding file variable in the variable selector.

|

||||

|

||||

**2. Add Document Extractor Node**

|

||||

|

||||

After uploading, files are stored in single file variables, which LLMs cannot directly read. Therefore, a **"Document Extractor"** node needs to be added first to extract content from uploaded document files and send it to the LLM node for information processing.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-4.png" alt="Document Extractor configuration" />

|

||||

</Frame>

|

||||

|

||||

Fill in the output variable of the "Document Extractor" node in the system prompt of the LLM node.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-5.png" alt="LLM node configuration" />

|

||||

</Frame>

|

||||

|

||||

**Referencing File Variables in LLM Nodes**

|

||||

|

||||

For certain file types (such as images), file variables can be directly used within LLM nodes. This method is particularly suitable for scenarios requiring visual analysis. Here are the specific steps:

|

||||

|

||||

1. In the LLM node, enable the vision functionality.

|

||||

2. In the variable selector of the LLM node, directly reference the previously created file variable.

|

||||

3. In the system prompt, guide the model on how to process the image input.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-6.png" alt="LLM node with vision" />

|

||||

</Frame>

|

||||

|

||||

**File Download**

|

||||

|

||||

Placing file variables in answer nodes or end nodes will provide a file download card in the conversation box when the application reaches that node.

|

||||

|

||||

<Frame>

|

||||

<img src="/images/assets/image-7.png" alt="File download interface" />

|

||||

</Frame>

|

||||

|

||||

## Advanced Usage

|

||||

|

||||

If you want the application to support uploading multiple types of files, such as allowing users to upload document files, images, and audio/video files simultaneously, you need to add a "File List" variable in the "Start Node" and use the "List Operation" node to process different file types. For detailed instructions, please refer to the List Operation node.

|

||||

|

||||

<Frame caption="Handling multiple file types">

|

||||

<img src="/ja-jp/user-guide/.gitbook/assets/image (3).png" alt="Diagram of workflow for processing multiple file types" />

|

||||

</Frame>

|

||||

7

en-us/user-guide/build-app/flow-app/nodes/README.md

Normal file

7

en-us/user-guide/build-app/flow-app/nodes/README.md

Normal file

@@ -0,0 +1,7 @@

|

||||

# Node Description

|

||||

|

||||

**Nodes are the key components of a workflow**, enabling the execution of a series of operations by connecting nodes with different functionalities.

|

||||

|

||||

### Core Nodes

|

||||

|

||||

<table data-view="cards"><thead><tr><th></th><th></th><th></th></tr></thead><tbody><tr><td><a href="start.md"><strong>Start</strong></a></td><td>Defines the initial parameters for starting a workflow process.</td><td></td></tr><tr><td><a href="end.md"><strong>End</strong></a></td><td>Defines the final output content for ending a workflow process.</td><td></td></tr><tr><td><a href="answer.md"><strong>Answer</strong></a></td><td>Defines the response content in a Chatflow process.</td><td></td></tr><tr><td><a href="llm.md"><strong>Large Language Model (LLM)</strong></a></td><td>Calls a large language model to answer questions or process natural language.</td><td></td></tr><tr><td><a href="knowledge_retrieval.md"><strong>Knowledge Retrieval</strong></a></td><td>Retrieves text content related to user questions from a knowledge base, which can serve as context for downstream LLM nodes.</td><td></td></tr><tr><td><a href="question-classifier.md"><strong>Question Classifier</strong></a></td><td>By defining classification descriptions, the LLM can select the matching classification based on user input.</td><td></td></tr><tr><td><a href="ifelse.md"><strong>IF/ELSE</strong></a></td><td>Allows you to split the workflow into two branches based on if/else conditions.</td><td></td></tr><tr><td><a href="code.md"><strong>Code Execution</strong></a></td><td>Runs Python/NodeJS code to execute custom logic such as data transformation within the workflow.</td><td></td></tr><tr><td><a href="template.md"><strong>Template</strong></a></td><td>Enables flexible data transformation and text processing using Jinja2, a Python templating language.</td><td></td></tr><tr><td><a href="variable-assigner.md"><strong>Variable Aggregator</strong></a></td><td>Aggregates variables from multiple branches into one variable for unified configuration of downstream nodes.</td><td></td></tr><tr><td><a href="variable-assignment.md"><strong>Variable Assigner</strong></a></td><td>The variable assigner node is used to assign values to writable variables.</td><td></td></tr><tr><td><a href="parameter-extractor.md"><strong>Parameter Extractor</strong></a></td><td>Uses LLM to infer and extract structured parameters from natural language for subsequent tool calls or HTTP requests.</td><td></td></tr><tr><td><a href="iteration.md"><strong>Iteration</strong></a></td><td>Executes multiple steps on list objects until all results are output.</td><td></td></tr><tr><td><a href="http-request.md"><strong>HTTP Request</strong></a></td><td>Allows sending server requests via the HTTP protocol, suitable for retrieving external results, webhooks, generating images, and other scenarios.</td><td></td></tr><tr><td><a href="tools.md"><strong>Tools</strong></a></td><td>Enables calling built-in Dify tools, custom tools, sub-workflows, and more within the workflow.</td><td></td></tr></tbody></table>

|

||||

24

en-us/user-guide/build-app/flow-app/nodes/answer.mdx

Normal file

24

en-us/user-guide/build-app/flow-app/nodes/answer.mdx

Normal file

@@ -0,0 +1,24 @@

|

||||

---

|

||||

title: 'Answer'

|

||||

version: 'English'

|

||||

---

|

||||

|

||||

# Direct Reply

|

||||

|

||||

Defining Reply Content in a Chatflow Process. In a text editor, you have the flexibility to determine the reply format. This includes crafting a fixed block of text, utilizing output variables from preceding steps as the reply content, or merging custom text with variables for the response.

|

||||

|

||||

Answer node can be seamlessly integrated at any point to dynamically deliver content into the dialogue responses. This setup supports a live-editing configuration mode, allowing for both text and image content to be arranged together. The configurations include:

|

||||

|

||||

1. Outputting the reply content from a Language Model (LLM) node.

|

||||

2. Outputting generated images.

|

||||

3. Outputting plain text.

|

||||

|

||||

Example 1: Output plain text.

|

||||

|

||||

|

||||

|

||||

Example 2: Output image and LLM reply.

|

||||

|

||||

|

||||

|

||||

|

||||

98

en-us/user-guide/build-app/flow-app/nodes/code.mdx

Normal file

98

en-us/user-guide/build-app/flow-app/nodes/code.mdx

Normal file

@@ -0,0 +1,98 @@

|

||||

---

|

||||

title: Code Execution

|

||||

---

|

||||

|

||||

## Table of Contents

|

||||

|

||||

* [Introduction](#introduction)

|

||||

* [Usage Scenarios](#usage-scenarios)

|

||||

* [Local Deployment](#local-deployment)

|

||||

* [Security Policies](#security-policies)

|

||||

|

||||

## Introduction

|

||||

|

||||

The code node supports running Python/NodeJS code to perform data transformations within a workflow. It can simplify your workflow and is suitable for scenarios such as arithmetic operations, JSON transformations, text processing, and more.

|

||||

|

||||

This node significantly enhances the flexibility for developers, allowing them to embed custom Python or JavaScript scripts within the workflow and manipulate variables in ways that preset nodes cannot achieve. Through configuration options, you can specify the required input and output variables and write the corresponding execution code:

|

||||

|

||||

.png)

|

||||

|

||||

## Configuration

|

||||

|

||||

If you need to use variables from other nodes in the code node, you must define the variable names in the `input variables` and reference these variables. You can refer to [Variable References](../key-concept.md#variables).

|

||||

|

||||

## Usage Scenarios

|

||||

|

||||

Using the code node, you can perform the following common operations:

|

||||

|

||||

### Structured Data Processing

|

||||

|

||||

In workflows, you often have to deal with unstructured data processing, such as parsing, extracting, and transforming JSON strings. A typical example is data processing from an HTTP node. In common API return structures, data may be nested within multiple layers of JSON objects, and you need to extract certain fields. The code node can help you perform these operations. Here is a simple example that extracts the `data.name` field from a JSON string returned by an HTTP node:

|

||||

|

||||

```python

|

||||

def main(http_response: str) -> str:

|

||||

import json

|

||||

data = json.loads(http_response)

|

||||

return {

|

||||

# Note to declare 'result' in the output variables

|

||||

'result': data['data']['name']

|

||||

}

|

||||

```

|

||||

|

||||

### Mathematical Calculations

|

||||

|

||||

When you need to perform complex mathematical calculations in a workflow, you can also use the code node. For example, calculating a complex mathematical formula or performing some statistical analysis on data. Here is a simple example that calculates the variance of an array:

|

||||

|

||||

```python

|

||||

def main(x: list) -> float:

|

||||

return {

|

||||

# Note to declare 'result' in the output variables

|

||||

'result': sum([(i - sum(x) / len(x)) ** 2 for i in x]) / len(x)

|

||||

}

|

||||

```

|

||||

|

||||

### Data Concatenation

|

||||

|

||||

Sometimes, you may need to concatenate multiple data sources, such as multiple knowledge retrievals, data searches, API calls, etc. The code node can help you integrate these data sources together. Here is a simple example that merges data from two knowledge bases:

|

||||

|

||||

```python

|

||||

def main(knowledge1: list, knowledge2: list) -> list:

|

||||

return {

|

||||

# Note to declare 'result' in the output variables

|

||||

'result': knowledge1 + knowledge2

|

||||

}

|

||||

```

|

||||

|

||||

## Local Deployment

|

||||

|

||||

If you are a local deployment user, you need to start a sandbox service to ensure that malicious code is not executed. This service requires the use of Docker. You can find specific information about the sandbox service [here](https://github.com/langgenius/dify/tree/main/docker/docker-compose.middleware.yaml). You can also start the service directly via `docker-compose`:

|

||||

|

||||

```bash

|

||||

docker-compose -f docker-compose.middleware.yaml up -d

|

||||

```

|

||||

|

||||

## Security Policies

|

||||

|

||||

Both Python and JavaScript execution environments are strictly isolated (sandboxed) to ensure security. This means that developers cannot use functions that consume large amounts of system resources or may pose security risks, such as direct file system access, making network requests, or executing operating system-level commands. These limitations ensure the safe execution of the code while avoiding excessive consumption of system resources.

|

||||

|

||||

### FAQ

|

||||

|

||||

**Why can't I save the code it in the code node?**

|

||||

|

||||

Please check if the code contains potentially dangerous behaviors. For example:

|

||||

|

||||

```python

|

||||

def main() -> dict:

|

||||

return {

|

||||

"result": open("/etc/passwd").read(),

|

||||

}

|

||||

```

|

||||

|

||||

This code snippet has the following issues:

|

||||

|

||||

* **Unauthorized file access:** The code attempts to read the "/etc/passwd" file, which is a critical system file in Unix/Linux systems that stores user account information.

|

||||

* **Sensitive information disclosure:** The "/etc/passwd" file contains important information about system users, such as usernames, user IDs, group IDs, home directory paths, etc. Direct access could lead to information leakage.

|

||||

|

||||

Dangerous code will be automatically blocked by Cloudflare WAF. You can check if it's been blocked by looking at the "Network" tab in your browser's "Web Developer Tools".

|

||||

|

||||

|

||||

62

en-us/user-guide/build-app/flow-app/nodes/doc-extractor.mdx

Normal file

62

en-us/user-guide/build-app/flow-app/nodes/doc-extractor.mdx

Normal file

@@ -0,0 +1,62 @@

|

||||

---

|

||||

title: Doc Extractor

|

||||

---

|

||||

|

||||

#### Definition

|

||||

|

||||

LLMs cannot directly read or interpret document contents. Therefore, it's necessary to parse and read information from user-uploaded documents through a document extractor node, convert it to text, and then pass the content to the LLM to process the file contents.

|

||||

|

||||

#### Application Scenarios

|

||||

|

||||

* Building LLM applications that can interact with files, such as ChatPDF or ChatWord;

|

||||

* Analyzing and examining the contents of user-uploaded files;

|

||||

|

||||

#### Node Functionality

|

||||

|

||||

The document extractor node can be understood as an information processing center. It recognizes and reads files in the input variables, extracts information, and converts it into string-type output variables for downstream nodes to call.

|

||||

|

||||

.png)

|

||||

|

||||

The document extractor node structure is divided into input variables and output variables.

|

||||

|

||||

**Input Variables**

|

||||

|

||||

The document extractor only accepts variables with the following data structures:

|

||||

|

||||

* `File`, a single file

|

||||

* `Array[File]`, multiple files

|

||||

|

||||

The document extractor can only extract information from document-type files, such as the contents of TXT, Markdown, PDF, HTML, DOCX format files. It cannot process image, audio, video, or other file formats.

|

||||

|

||||

**Output Variables**

|

||||

|

||||

The output variable is fixed and named as text. The type of output variable depends on the input variable:

|

||||

|

||||

* If the input variable is `File`, the output variable is `string`

|

||||

* If the input variable is `Array[File]`, the output variable is `array[string]`

|

||||

|

||||

> Array variables generally need to be used in conjunction with list operation nodes. For detailed instructions, please refer to list-operator.

|

||||

|

||||

#### Configuration Example

|

||||

|

||||

In a typical file interaction Q\&A scenario, the document extractor can serve as a preliminary step for the LLM node, extracting file information from the application and passing it to the downstream LLM node to answer user questions about the file.

|

||||

|

||||

This section will introduce the usage of the document extractor node through a typical ChatPDF example workflow template.

|

||||

|

||||

.png)

|

||||

|

||||

**Configuration Process:**

|

||||

|

||||

1. Enable file upload for the application. Add a **single file variable** in the "Start" node and name it `pdf`.

|

||||

2. Add a document extractor node and select the `pdf` variable in the input variables.

|

||||

3. Add an LLM node and select the output variable of the document extractor node in the system prompt. The LLM can read the contents of the file through this output variable.

|

||||

|

||||

.png)

|

||||

|

||||

Configure the end node by selecting the output variable of the LLM node in the end node.

|

||||

|

||||

.png)

|

||||

|

||||

After configuration, the application will have file upload functionality, allowing users to upload PDF files and engage in conversation.

|

||||

|

||||

<Note>To learn how to upload files in chat conversations and interact with the LLM, please refer to Additional Features.</Note>

|

||||

29

en-us/user-guide/build-app/flow-app/nodes/end.mdx

Normal file

29

en-us/user-guide/build-app/flow-app/nodes/end.mdx

Normal file

@@ -0,0 +1,29 @@

|

||||

---

|

||||

title: End

|

||||

---

|

||||

|

||||

### 1 Definition

|

||||

|

||||

Define the final output content of a workflow. Every workflow needs at least one end node after complete execution to output the final result.

|

||||

|

||||

The end node is a termination point in the process; no further nodes can be added after it. In a workflow application, results are only output when the end node is reached. If there are conditional branches in the process, multiple end nodes need to be defined.

|

||||

|

||||

The end node must declare one or more output variables, which can reference any upstream node's output variables.

|

||||

|

||||

<Note>End nodes are not supported within Chatflow.</Note>

|

||||

|

||||

***

|

||||

|

||||

### 2 Scenarios

|

||||

|

||||

In the following long story generation workflow, the variable `Output` declared by the end node is the output of the upstream code node. This means the workflow will end after the Code node completes execution and will output the execution result of Code.

|

||||

|

||||

|

||||

|

||||

**Single Path Execution Example:**

|

||||

|

||||

|

||||

|

||||

**Multi-Path Execution Example:**

|

||||

|

||||

|

||||

43

en-us/user-guide/build-app/flow-app/nodes/http-request.mdx

Normal file

43

en-us/user-guide/build-app/flow-app/nodes/http-request.mdx

Normal file

@@ -0,0 +1,43 @@

|

||||

---

|

||||

title: HTTP Request

|

||||

---

|

||||

|

||||

### Definition

|

||||

|

||||

Allows sending server requests via the HTTP protocol, suitable for scenarios such as retrieving external data, webhooks, generating images, and downloading files. It enables you to send customized HTTP requests to specified web addresses, achieving interconnectivity with various external services.

|

||||

|

||||

This node supports common HTTP request methods:

|

||||

|

||||

* **GET**: Used to request the server to send a specific resource.

|

||||

* **POST**: Used to submit data to the server, typically for submitting forms or uploading files.

|

||||

* **HEAD**: Similar to GET requests, but the server only returns the response headers without the resource body.

|

||||

* **PATCH**: Used to apply partial modifications to a resource.

|

||||

* **PUT**: Used to upload resources to the server, typically for updating an existing resource or creating a new one.

|

||||

* **DELETE**: Used to request the server to delete a specified resource.

|

||||

|

||||

You can configure various aspects of the HTTP request, including URL, request headers, query parameters, request body content, and authentication information.

|

||||

|

||||

|

||||

|

||||

***

|

||||

|

||||

### Scenarios

|

||||

|

||||

* **Send Application Interaction Content to a Specific Server**

|

||||

|

||||

One practical feature of this node is the ability to dynamically insert variables into different parts of the request based on the scenario. For example, when handling customer feedback requests, you can embed variables such as username or customer ID, feedback content, etc., into the request to customize automated reply messages or fetch specific customer information and send related resources to a designated server.

|

||||

|

||||

|

||||

|

||||

The return values of an HTTP request include the response body, status code, response headers, and files. Notably, if the response contains a file, this node can automatically save the file for use in subsequent steps of the workflow. This design not only improves processing efficiency but also makes handling responses with files straightforward and direct.

|

||||

|

||||

* **Send File**

|

||||

|

||||

You can use an HTTP PUT request to send files from the application to other API services. In the request body, you can select the file variable within the `binary`. This method is commonly used in scenarios such as file transfer, document storage, or media processing.

|

||||

|

||||

Example: Suppose you are developing a document management application and need to send a user-uploaded PDF file to a third-party service. You can use an HTTP request node to pass the file variable.

|

||||

|

||||

Here is a configuration example:

|

||||

|

||||

.png)

|

||||

|

||||

46

en-us/user-guide/build-app/flow-app/nodes/ifelse.mdx

Normal file

46

en-us/user-guide/build-app/flow-app/nodes/ifelse.mdx

Normal file

@@ -0,0 +1,46 @@

|

||||

---

|

||||

title: Conditional Branch IF/ELSE

|

||||

---

|

||||

|

||||

### Definition

|

||||

|

||||

Allows you to split the workflow into two branches based on if/else conditions.

|

||||

|

||||

A conditional branching node has three parts:

|

||||

|

||||

* IF Condition: Select a variable, set the condition, and specify the value that satisfies the condition.

|

||||

* IF condition evaluates to `True`, execute the IF path.

|

||||

* IF condition evaluates to `False`, execute the ELSE path.

|

||||

* If the ELIF condition evaluates to `True`, execute the ELIF path;

|

||||

* If the ELIF condition evaluates to `False`, continue to evaluate the next ELIF path or execute the final ELSE path.

|

||||

|

||||

**Condition Types**

|

||||

|

||||

* Contains

|

||||

* Not contains

|

||||

* Starts with

|

||||

* Ends with

|

||||

* Is

|

||||

* Is not

|

||||

* Is empty

|

||||

* Is not empty

|

||||

|

||||

***

|

||||

|

||||

### Scenario

|

||||

|

||||

|

||||

|

||||

Taking the above **Text Summary Workflow** as an example:

|

||||

|

||||

* IF Condition: Select the `summarystyle` variable from the start node, with the condition **Contains** `technical`.

|

||||

* IF condition evaluates to `True`, follow the IF path by querying technology-related knowledge through the knowledge retrieval node, then respond via the LLM node (as shown in the upper half of the diagram);

|

||||

* IF condition evaluates to `False`, but an `ELIF` condition is added, where the input for the `summarystyle` variable **does not include** `technology`, yet the `ELIF` condition includes `science`, check if the condition in `ELIF` is `True`, then execute the steps defined within that path;

|

||||

* If the condition within `ELIF` is `False`, meaning the input variable contains neither `technology` nor `science`, continue to evaluate the next `ELIF` condition or execute the final `ELSE` path;

|

||||

* IF condition evaluates to `False`, i.e., the `summarystyle` variable input **does not contain** `technical`, execute the ELSE path, responding via the LLM2 node (lower part of the diagram).

|

||||

|

||||

**Multiple Condition Judgments**

|

||||

|

||||

For complex condition judgments, you can set multiple condition judgments and configure **AND** or **OR** between conditions to take the **intersection** or **union** of the conditions, respectively.

|

||||

|

||||

.png)

|

||||

163

en-us/user-guide/build-app/flow-app/nodes/iteration.mdx

Normal file

163

en-us/user-guide/build-app/flow-app/nodes/iteration.mdx

Normal file

@@ -0,0 +1,163 @@

|

||||

---

|

||||

title: Iteration

|

||||

---

|

||||

|

||||

### Definition

|

||||

|

||||

Execute multiple steps on an array until all results are output.

|

||||

|

||||

The iteration step performs the same steps on each item in a list. To use iteration, ensure that the input value is formatted as a list object. The iteration node allows AI workflows to handle more complex processing logic. It is a user-friendly version of the loop node, making some compromises in customization to allow non-technical users to quickly get started.

|

||||

|

||||

***

|

||||

|

||||

### Scenarios

|

||||

|

||||

#### **Example 1: Long Article Iteration Generator**

|

||||

|

||||

|

||||

|

||||

1. Enter the story title and outline in the **Start Node**.

|

||||

2. Use a **Generate Subtitles and Outlines Node** to use LLM to generate the complete content from user input.

|

||||

3. Use a **Extract Subtitles and Outlines Node** to convert the complete content into an array format.

|

||||

4. Use an **Iteration Node** to wrap an **LLM Node** and generate content for each chapter through multiple iterations.

|

||||

5. Add a **Direct Answer Node** inside the iteration node to achieve streaming output after each iteration.

|

||||

|

||||

**Detailed Configuration Steps**

|

||||

|

||||

1. Configure the story title (title) and outline (outline) in the **Start Node**.

|

||||

|

||||

|

||||

|

||||

2. Use a **Generate Subtitles and Outlines Node** to convert the story title and outline into complete text.

|

||||

|

||||

|

||||

|

||||

3. Use a **Extract Subtitles and Outlines Node** to convert the story text into an array (Array) structure. The parameter to extract is `sections`, and the parameter type is `Array[Object]`.

|

||||

|

||||

|

||||

|

||||

<Note>

|

||||

The effectiveness of parameter extraction is influenced by the model's inference capability and the instructions given. Using a model with stronger inference capabilities and adding examples in the **instructions** can improve the parameter extraction results.

|

||||

</Note>

|

||||

|

||||

4. Use the array-formatted story outline as the input for the iteration node and process it within the iteration node using an **LLM Node**.

|

||||

|

||||

|

||||

|

||||

Configure the input variables `GenerateOverallOutline/output` and `Iteration/item` in the LLM Node.

|

||||

|

||||

|

||||

|

||||

<Note>

|

||||

Built-in variables for iteration: `items[object]` and `index[number]`.

|

||||

|

||||

`items[object]` represents the input item for each iteration;

|

||||

|

||||

`index[number]` represents the current iteration round;

|

||||

</Note>

|

||||

|

||||

1. Configure a **Direct Reply Node** inside the iteration node to achieve streaming output after each iteration.

|

||||

|

||||

|

||||

|

||||

6. Complete debugging and preview.

|

||||

|

||||

|

||||

|

||||

#### **Example 2: Long Article Iteration Generator (Another Arrangement)**

|

||||

|

||||

|

||||

|

||||

* Enter the story title and outline in the **Start Node**.

|

||||

* Use an **LLM Node** to generate subheadings and corresponding content for the article.

|

||||

* Use a **Code Node** to convert the complete content into an array format.

|

||||

* Use an **Iteration Node** to wrap an **LLM Node** and generate content for each chapter through multiple iterations.

|

||||

* Use a **Template Conversion** Node to convert the string array output from the iteration node back to a string.

|

||||