.

8

.github/ISSUE_TEMPLATE/config.yml

vendored

Normal file

@@ -0,0 +1,8 @@

|

||||

blank_issues_enabled: false

|

||||

contact_links:

|

||||

- name: "\U0001F4A1 Model Providers & Plugins"

|

||||

url: "https://github.com/langgenius/dify-official-plugins/issues/new/choose"

|

||||

about: Report issues with official plugins or model providers, you will need to provide the plugin version and other relevant details.

|

||||

- name: "\U0001F4E7 Discussions"

|

||||

url: https://github.com/langgenius/dify/discussions/categories/general

|

||||

about: General discussions and seek help from the community

|

||||

68

.github/ISSUE_TEMPLATE/docs.yml

vendored

Normal file

@@ -0,0 +1,68 @@

|

||||

name: Documentation Change Request

|

||||

description: Suggest changes or improvements to the documentation

|

||||

title: "[DOCS]: "

|

||||

labels: ["documentation"]

|

||||

body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

Thanks for taking the time to help us improve our documentation!

|

||||

|

||||

- type: dropdown

|

||||

id: type

|

||||

attributes:

|

||||

label: Type of Documentation Change

|

||||

options:

|

||||

- Error/Typo fix

|

||||

- Content update

|

||||

- New documentation

|

||||

- Translation

|

||||

- Reorganization

|

||||

- Other

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: input

|

||||

id: page

|

||||

attributes:

|

||||

label: Documentation Page URL or Path

|

||||

description: Please provide the URL or file path of the documentation page you're referring to.

|

||||

placeholder: e.g., https://docs.dify.ai/getting-started or /getting-started.md

|

||||

validations:

|

||||

required: false

|

||||

|

||||

- type: textarea

|

||||

id: current

|

||||

attributes:

|

||||

label: Current Content

|

||||

description: What does the documentation currently say?

|

||||

placeholder: Paste the current content here...

|

||||

validations:

|

||||

required: false

|

||||

|

||||

- type: textarea

|

||||

id: suggestion

|

||||

attributes:

|

||||

label: Suggested Changes

|

||||

description: What would you like to be changed or added?

|

||||

placeholder: Describe your suggested changes...

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

id: reason

|

||||

attributes:

|

||||

label: Reason for Change

|

||||

description: Why do you think this change would improve the documentation?

|

||||

placeholder: Explain why this change would be helpful...

|

||||

validations:

|

||||

required: false

|

||||

|

||||

- type: checkboxes

|

||||

id: terms

|

||||

attributes:

|

||||

label: Code of Conduct

|

||||

description: By submitting this issue, you agree to follow our contribution guidelines

|

||||

options:

|

||||

- label: I agree to follow Dify's documentation [contribution guidelines](https://github.com/langgenius/dify/blob/0277a37fcad5ad86aeb239485c27fffd5cd90043/CONTRIBUTING.md)

|

||||

required: true

|

||||

157

.github/workflows/translate.yml

vendored

Normal file

@@ -0,0 +1,157 @@

|

||||

name: Auto Translate Docs

|

||||

|

||||

on:

|

||||

workflow_run:

|

||||

workflows: ["Process Documentation"]

|

||||

types:

|

||||

- completed

|

||||

branches-ignore:

|

||||

- 'main'

|

||||

push:

|

||||

branches-ignore:

|

||||

- 'main'

|

||||

paths-ignore:

|

||||

- '.github/workflows/**'

|

||||

|

||||

jobs:

|

||||

translate:

|

||||

runs-on: ubuntu-latest

|

||||

# Only run if the workflow_run event was successful, or if it's a direct push

|

||||

if: github.event_name == 'push' || github.event.workflow_run.conclusion == 'success'

|

||||

permissions:

|

||||

contents: write

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v4

|

||||

with:

|

||||

fetch-depth: 0 # Fetches all history for git diff

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

# For workflow_run events, checkout the head of the triggering workflow

|

||||

ref: ${{ github.event_name == 'workflow_run' && github.event.workflow_run.head_sha || github.sha }}

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v4

|

||||

with:

|

||||

python-version: '3.9'

|

||||

|

||||

- name: Install dependencies

|

||||

run: pip install httpx aiofiles python-dotenv

|

||||

|

||||

- name: Get changed markdown files

|

||||

id: changed-files

|

||||

run: |

|

||||

# Get the list of newly added files between the current and previous commit

|

||||

# We filter for .md and .mdx files that are inside the language directories

|

||||

# Only include added (A) files, skip modified (M) and deleted (D) files

|

||||

|

||||

# Determine the commit SHA to use based on event type

|

||||

if [[ "${{ github.event_name }}" == "workflow_run" ]]; then

|

||||

current_sha="${{ github.event.workflow_run.head_sha }}"

|

||||

echo "Using workflow_run head_sha: $current_sha"

|

||||

else

|

||||

current_sha="${{ github.sha }}"

|

||||

echo "Using github.sha: $current_sha"

|

||||

fi

|

||||

|

||||

# Try different approaches to get the diff

|

||||

if [[ -n "${{ github.event.before }}" && "${{ github.event_name }}" == "push" ]]; then

|

||||

echo "Using github.event.before: ${{ github.event.before }}"

|

||||

files=$(git diff --name-status ${{ github.event.before }} $current_sha | grep -E '^A\s+' | cut -f2 | grep -E '^(en|en-us|zh-hans|ja-jp|plugin-dev-en|plugin-dev-zh|plugin-dev-ja|versions)/.*(\.md|\.mdx)$' || true)

|

||||

else

|

||||

echo "Using HEAD~1 for comparison"

|

||||

files=$(git diff --name-status HEAD~1 $current_sha | grep -E '^A\s+' | cut -f2 | grep -E '^(en|en-us|zh-hans|ja-jp|plugin-dev-en|plugin-dev-zh|plugin-dev-ja|versions)/.*(\.md|\.mdx)$' || true)

|

||||

fi

|

||||

|

||||

echo "Detected files (Added only):"

|

||||

echo "$files"

|

||||

|

||||

# Filter out files that don't actually exist

|

||||

existing_files=""

|

||||

if [[ -n "$files" ]]; then

|

||||

while IFS= read -r file; do

|

||||

if [[ -n "$file" && -f "$file" ]]; then

|

||||

if [[ -z "$existing_files" ]]; then

|

||||

existing_files="$file"

|

||||

else

|

||||

existing_files="$existing_files"$'\n'"$file"

|

||||

fi

|

||||

else

|

||||

echo "Skipping non-existent file: $file"

|

||||

fi

|

||||

done <<< "$files"

|

||||

fi

|

||||

|

||||

echo "Final files to translate:"

|

||||

echo "$existing_files"

|

||||

|

||||

if [[ -z "$existing_files" ]]; then

|

||||

echo "No new markdown files to translate."

|

||||

echo "files=" >> $GITHUB_OUTPUT

|

||||

else

|

||||

# The script expects absolute paths, but we run it from the root, so relative is fine.

|

||||

echo "files<<EOF" >> $GITHUB_OUTPUT

|

||||

echo "$existing_files" >> $GITHUB_OUTPUT

|

||||

echo "EOF" >> $GITHUB_OUTPUT

|

||||

fi

|

||||

|

||||

- name: Run translation script

|

||||

if: steps.changed-files.outputs.files

|

||||

env:

|

||||

DIFY_API_KEY: ${{ secrets.DIFY_API_KEY }}

|

||||

run: |

|

||||

echo "Files to translate:"

|

||||

echo "${{ steps.changed-files.outputs.files }}"

|

||||

|

||||

# Create temporary file list

|

||||

echo "${{ steps.changed-files.outputs.files }}" > /tmp/files_to_translate.txt

|

||||

|

||||

# Start all translation processes in parallel

|

||||

pids=()

|

||||

while IFS= read -r file; do

|

||||

if [[ -n "$file" ]]; then

|

||||

echo "Starting translation for $file..."

|

||||

python tools/translate/main.py "$file" "$DIFY_API_KEY" &

|

||||

pids+=($!)

|

||||

fi

|

||||

done < /tmp/files_to_translate.txt

|

||||

|

||||

# Wait for all background processes to complete

|

||||

echo "Waiting for ${#pids[@]} translation processes to complete..."

|

||||

failed=0

|

||||

for pid in "${pids[@]}"; do

|

||||

if ! wait "$pid"; then

|

||||

echo "Translation process $pid failed"

|

||||

failed=1

|

||||

fi

|

||||

done

|

||||

|

||||

if [ $failed -eq 1 ]; then

|

||||

echo "Some translations failed"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

echo "All translations completed successfully"

|

||||

|

||||

- name: Commit and push changes

|

||||

run: |

|

||||

git config --global user.name 'github-actions[bot]'

|

||||

git config --global user.email 'github-actions[bot]@users.noreply.github.com'

|

||||

# Check if there are any changes to commit

|

||||

if [[ -n $(git status --porcelain) ]]; then

|

||||

git add .

|

||||

git commit -m "docs: auto-translate documentation"

|

||||

# Push to the appropriate branch based on event type

|

||||

if [[ "${{ github.event_name }}" == "workflow_run" ]]; then

|

||||

# For workflow_run events, push to the head branch of the triggering workflow

|

||||

branch_ref="${{ github.event.workflow_run.head_branch }}"

|

||||

echo "Pushing to workflow_run head branch: $branch_ref"

|

||||

git push origin HEAD:$branch_ref

|

||||

else

|

||||

# For push events, push to the same branch the workflow was triggered from

|

||||

echo "Pushing to current branch: ${{ github.ref_name }}"

|

||||

git push origin HEAD:${{ github.ref_name }}

|

||||

fi

|

||||

echo "Translated files have been pushed to the branch."

|

||||

else

|

||||

echo "No new translations to commit."

|

||||

fi

|

||||

BIN

dify-logo.png

Normal file

|

After Width: | Height: | Size: 6.0 KiB |

@@ -3,20 +3,27 @@ title: "10-Minute Quick Start"

|

||||

description: "Dive into Dify through a quick example app"

|

||||

---

|

||||

|

||||

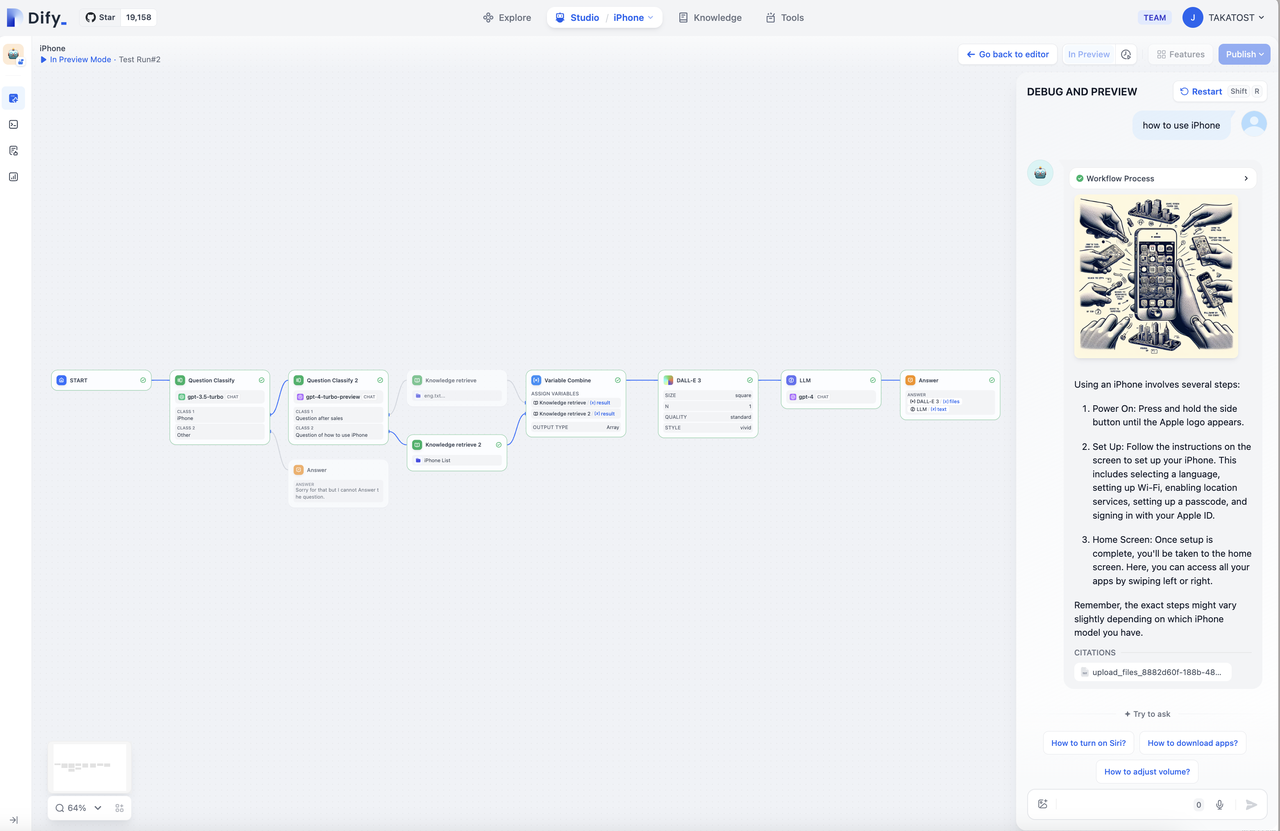

The real value of Dify lies in how easily you can build, deploy, and scale an idea no matter how complex. It’s built for fast prototyping, smooth iteration, and reliable deployment at any level.

|

||||

The real value of Dify lies in how easily you can build, deploy, and scale an idea no matter how complex. It's built for fast prototyping, smooth iteration, and reliable deployment at any level.

|

||||

|

||||

Let's start by learning reliable LLM integration into your applications. In this guide, you'll build a simple chatbot that classifies the user’s question, respond directly using the LLM, and enhance the response with a country-specific fun fact.

|

||||

Let's start by learning reliable LLM integration into your applications. In this guide, you'll build a simple chatbot that classifies the user's question, respond directly using the LLM, and enhance the response with a country-specific fun fact.

|

||||

|

||||

<iframe className="w-full aspect-video rounded-xl" src="https://www.youtube.com/embed/opKZRpfd80k?si=HEkyjRpiYheMyrZ0" title="Dify Quick Start Video" frameBorder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowFullScreen />

|

||||

<iframe

|

||||

className="w-full aspect-video rounded-xl"

|

||||

src="https://www.youtube.com/embed/opKZRpfd80k?si=HEkyjRpiYheMyrZ0"

|

||||

title="Dify Quick Start Video"

|

||||

frameBorder="0"

|

||||

allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture"

|

||||

allowFullScreen

|

||||

/>

|

||||

|

||||

## Step 1: Create a New Workflow (2 min)

|

||||

|

||||

1. Go to `Studio`**\> ** `Workflow`**\> ** `Create from Blank`**\> ** `Orchestrate`**\> ** `New Chatflow`**\> ** `Create`

|

||||

1. Go to **Studio** > **Workflow** > **Create from Blank** > **Orchestrate** > **New Chatflow** > **Create**

|

||||

|

||||

## Step 2: Add Workflow Nodes (6 min)

|

||||

|

||||

<Tip>

|

||||

IMPORTANT: When you want to reference any variable, type `{` or `/`first and you can see the different variables available in your workflow.

|

||||

When you want to reference any variable, type `{` or `/` first and you can see the different variables available in your workflow.

|

||||

</Tip>

|

||||

|

||||

### 1. LLM Node and Output: Understand and Answer the Question

|

||||

@@ -25,43 +32,85 @@ Let's start by learning reliable LLM integration into your applications. In this

|

||||

`LLM` node sends a prompt to a language model to generate a response based on user input. It abstracts away the complexity of API calls, rate limits, and infrastructure, so you can just focus on designing logic.

|

||||

</Info>

|

||||

|

||||

- Create an LLM node using the `Add Node` button and connect it to your Start node

|

||||

- Choose a default model

|

||||

- Paste this into `System Prompt`**:**

|

||||

<Steps>

|

||||

<Step title="Create LLM Node">

|

||||

Create an LLM node using the `Add Node` button and connect it to your Start node

|

||||

</Step>

|

||||

|

||||

<Step title="Configure Model">

|

||||

Choose a default model

|

||||

</Step>

|

||||

|

||||

<Step title="Set System Prompt">

|

||||

Paste this into the System Prompt field:

|

||||

|

||||

> The user will ask a question about a country. The question is {{sys.query}} Tasks: 1. Identify the country mentioned. 2. Rephrase the question clearly. 3. Answer the question using general knowledge. \

|

||||

> \

|

||||

> Respond in the following JSON format: { "country": "\<country name\>","question": "\<rephrased question\>","answer": "\<direct answer to the question\>" }

|

||||

- **_Enable Structured Output_** allows you to easily control what the LLM will return and ensure consistent, machine-readable outputs for downstream use in precise data extraction or conditional logic.\_

|

||||

- Toggle Output Variables Structured ON \> `Configure` and click `Import from JSON`

|

||||

- Paste:

|

||||

|

||||

> { "country": "string", "question": "string", "answer": "string" }

|

||||

```text

|

||||

The user will ask a question about a country. The question is {{sys.query}}

|

||||

Tasks:

|

||||

1. Identify the country mentioned.

|

||||

2. Rephrase the question clearly.

|

||||

3. Answer the question using general knowledge.

|

||||

|

||||

Respond in the following JSON format:

|

||||

{

|

||||

"country": "<country name>",

|

||||

"question": "<rephrased question>",

|

||||

"answer": "<direct answer to the question>"

|

||||

}

|

||||

```

|

||||

</Step>

|

||||

|

||||

<Step title="Enable Structured Output">

|

||||

**Enable Structured Output** allows you to easily control what the LLM will return and ensure consistent, machine-readable outputs for downstream use in precise data extraction or conditional logic.

|

||||

|

||||

- Toggle Output Variables Structured ON > `Configure` and click `Import from JSON`

|

||||

- Paste:

|

||||

|

||||

```json

|

||||

{

|

||||

"country": "string",

|

||||

"question": "string",

|

||||

"answer": "string"

|

||||

}

|

||||

```

|

||||

</Step>

|

||||

</Steps>

|

||||

|

||||

### 2. Code Block: Get Fun Fact

|

||||

|

||||

<Info>

|

||||

`Code` node _executes custom logic using code. It lets you inject code exactly where needed—within a visual workflow—saving you from wiring up an entire backend._

|

||||

`Code` node executes custom logic using code. It lets you inject code exactly where needed—within a visual workflow—saving you from wiring up an entire backend.

|

||||

</Info>

|

||||

|

||||

- Create a `Code` Node using the `Add Node` button and connect to LLM block

|

||||

- Change one `Input Variable` name to "country" and set the variable to `structured_output`**\>** `country`

|

||||

<Steps>

|

||||

<Step title="Create Code Node">

|

||||

Create a `Code` Node using the `Add Node` button and connect to LLM block

|

||||

</Step>

|

||||

|

||||

<Step title="Configure Input Variable">

|

||||

Change one `Input Variable` name to "country" and set the variable to `structured_output` > `country`

|

||||

</Step>

|

||||

|

||||

<Step title="Add Python Code">

|

||||

Paste this code into `PYTHON3`:

|

||||

|

||||

Paste this code into `PYTHON3`:

|

||||

|

||||

```python expandable

|

||||

def main(country: str) -> dict:

|

||||

country_name = country.lower()

|

||||

fun_facts = {

|

||||

"japan": "Japan has more than 5 million vending machines.",

|

||||

"france": "France is the most visited country in the world.",

|

||||

"italy": "Italy has more UNESCO World Heritage sites than any other country."

|

||||

}

|

||||

fun_fact = fun_facts.get(country_name, f"No fun fact available for {country.title()}.")

|

||||

return {"fun_fact": fun_fact}

|

||||

```

|

||||

|

||||

- Change output variable`result`into `fun_fact` to have a better labeled variable

|

||||

```python

|

||||

def main(country: str) -> dict:

|

||||

country_name = country.lower()

|

||||

fun_facts = {

|

||||

"japan": "Japan has more than 5 million vending machines.",

|

||||

"france": "France is the most visited country in the world.",

|

||||

"italy": "Italy has more UNESCO World Heritage sites than any other country."

|

||||

}

|

||||

fun_fact = fun_facts.get(country_name, f"No fun fact available for {country.title()}.")

|

||||

return {"fun_fact": fun_fact}

|

||||

```

|

||||

</Step>

|

||||

|

||||

<Step title="Rename Output Variable">

|

||||

Change output variable `result` to `fun_fact` to have a better labeled variable

|

||||

</Step>

|

||||

</Steps>

|

||||

|

||||

### 3. Answer Node: Final Answer to User

|

||||

|

||||

@@ -69,19 +118,30 @@ def main(country: str) -> dict:

|

||||

`Answer` Node creates a clean final output to return.

|

||||

</Info>

|

||||

|

||||

- Create an`Answer`Node using the `Add Node` button.

|

||||

- Paste into the `Answer Field`**:**

|

||||

<Steps>

|

||||

<Step title="Create Answer Node">

|

||||

Create an `Answer` Node using the `Add Node` button

|

||||

</Step>

|

||||

|

||||

<Step title="Configure Answer Field">

|

||||

Paste into the Answer Field:

|

||||

|

||||

> Q: {{ structured_output.question }}

|

||||

>

|

||||

> A: {{ structured_output.answer }}

|

||||

>

|

||||

> Fun Fact: {{ fun_fact }}

|

||||

```text

|

||||

Q: {{ structured_output.question }}

|

||||

|

||||

A: {{ structured_output.answer }}

|

||||

|

||||

Fun Fact: {{ fun_fact }}

|

||||

```

|

||||

</Step>

|

||||

</Steps>

|

||||

|

||||

End Workflow:

|

||||

|

||||

<img

|

||||

style={{ width:"1850%" }}

|

||||

src="/images/quick-start-workflow-overview.png"

|

||||

alt="Complete workflow diagram showing LLM, Code, and Answer nodes connected"

|

||||

style={{ width: "100%" }}

|

||||

/>

|

||||

|

||||

---

|

||||

@@ -90,13 +150,13 @@ End Workflow:

|

||||

|

||||

Click `Preview`, then ask:

|

||||

|

||||

- “What is the capital of France?”

|

||||

- “Tell me about Japanese cuisine”

|

||||

- “Describe the culture in Italy”

|

||||

- "What is the capital of France?"

|

||||

- "Tell me about Japanese cuisine"

|

||||

- "Describe the culture in Italy"

|

||||

- Any other questions

|

||||

|

||||

Make sure your Bot works as expected\!

|

||||

Make sure your Bot works as expected!

|

||||

|

||||

## You’ve Completed the Bot\!

|

||||

## You've Completed the Bot!

|

||||

|

||||

This guide showed how to integrate language models reliably and scalably without reinventing infrastructure. With Dify’s visual workflows and modular nodes, you’re not just building faster, you’re adopting a clean, production-ready architecture for LLM-powered apps.

|

||||

This guide showed how to integrate language models reliably and scalably without reinventing infrastructure. With Dify's visual workflows and modular nodes, you're not just building faster, you're adopting a clean, production-ready architecture for LLM-powered apps.

|

||||

221

en/getting-started/readme/features-and-specifications.mdx

Normal file

@@ -0,0 +1,221 @@

|

||||

---

|

||||

title: Features and Specifications

|

||||

description: For those already familiar with LLM application tech stacks, this document serves as a shortcut to understand Dify's unique advantages

|

||||

---

|

||||

|

||||

|

||||

We adopt transparent policies around product specifications to ensure decisions are made based on complete understanding. Such transparency not only benefits your technical selection, but also promotes deeper comprehension within the community for active contributions.

|

||||

|

||||

### Project Basics

|

||||

|

||||

<table>

|

||||

<thead>

|

||||

<tr>

|

||||

<th>Attribute</th>

|

||||

<th>Details</th>

|

||||

</tr>

|

||||

</thead>

|

||||

<tbody>

|

||||

<tr>

|

||||

<td>Established</td>

|

||||

<td>March 2023</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Open Source License</td>

|

||||

<td>[Apache License 2.0 with commercial licensing](../../policies/open-source)</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Official R&D Team</td>

|

||||

<td>Over 15 full-time employees</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Community Contributors</td>

|

||||

<td>Over [290](https://ossinsight.io/analyze/langgenius/dify#overview) people (As of Q2 2024)</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Backend Technology</td>

|

||||

<td>Python/Flask/PostgreSQL</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Frontend Technology</td>

|

||||

<td>Next.js</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Codebase Size</td>

|

||||

<td>Over 130,000 lines</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Release Frequency</td>

|

||||

<td>Average once per week</td>

|

||||

</tr>

|

||||

</tbody>

|

||||

</table>

|

||||

|

||||

### Technical Features

|

||||

|

||||

<table>

|

||||

<thead>

|

||||

<tr>

|

||||

<th>Feature</th>

|

||||

<th>Details</th>

|

||||

</tr>

|

||||

</thead>

|

||||

<tbody>

|

||||

<tr>

|

||||

<td>LLM Inference Engines</td>

|

||||

<td>Dify Runtime (LangChain removed since v0.4)</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Commercial Models Supported</td>

|

||||

<td>

|

||||

<p><strong>10+</strong>, including OpenAI and Anthropic</p>

|

||||

<p>Onboard new mainstream models within 48 hours</p>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>MaaS Vendor Supported</td>

|

||||

<td><strong>7</strong>, Hugging Face, Replicate, AWS Bedrock, NVIDIA, GroqCloud, together.ai, OpenRouter</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Local Model Inference Runtimes Supported</td>

|

||||

<td><strong>6</strong>, Xoribits (recommended), OpenLLM, LocalAI, ChatGLM, Ollama, NVIDIA TIS</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>OpenAI Interface Standard Model Integration Supported</td>

|

||||

<td><strong>∞</strong></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Multimodal Capabilities</td>

|

||||

<td>

|

||||

<p>ASR Models</p>

|

||||

<p>Rich-text models up to GPT-4o specs</p>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Built-in App Types</td>

|

||||

<td>Text generation, Chatbot, Agent, Workflow, Chatflow</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Prompt-as-a-Service Orchestration</td>

|

||||

<td>

|

||||

<p>Visual orchestration interface widely praised, modify Prompts and preview effects in one place.</p>

|

||||

<p><strong>Orchestration Modes</strong></p>

|

||||

<ul>

|

||||

<li>Simple orchestration</li>

|

||||

<li>Assistant orchestration</li>

|

||||

<li>Flow orchestration</li>

|

||||

</ul>

|

||||

<p><strong>Prompt Variable Types</strong></p>

|

||||

<ul>

|

||||

<li>String</li>

|

||||

<li>Radio enum</li>

|

||||

<li>External API</li>

|

||||

<li>File (Q3 2024)</li>

|

||||

</ul>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Agentic Workflow Features</td>

|

||||

<td>

|

||||

<p>Industry-leading visual workflow orchestration interface, live-editing node debugging, modular DSL, and native code runtime, designed for building more complex, reliable, and stable LLM applications.</p>

|

||||

<p><strong>Supported Nodes</strong></p>

|

||||

<ul>

|

||||

<li>LLM</li>

|

||||

<li>Knowledge Retrieval</li>

|

||||

<li>Question Classifier</li>

|

||||

<li>IF/ELSE</li>

|

||||

<li>CODE</li>

|

||||

<li>Template</li>

|

||||

<li>HTTP Request</li>

|

||||

<li>Tool</li>

|

||||

</ul>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>RAG Features</td>

|

||||

<td>

|

||||

<p>Industry-first visual knowledge base management interface, supporting snippet previews and recall testing.</p>

|

||||

<p><strong>Indexing Methods</strong></p>

|

||||

<ul>

|

||||

<li>Keywords</li>

|

||||

<li>Text vectors</li>

|

||||

<li>LLM-assisted question-snippet model</li>

|

||||

</ul>

|

||||

<p><strong>Retrieval Methods</strong></p>

|

||||

<ul>

|

||||

<li>Keywords</li>

|

||||

<li>Text similarity matching</li>

|

||||

<li>Hybrid Search</li>

|

||||

<li>N choose 1 (Legacy)</li>

|

||||

<li>Multi-path retrieval</li>

|

||||

</ul>

|

||||

<p><strong>Recall Optimization</strong></p>

|

||||

<ul>

|

||||

<li>Rerank models</li>

|

||||

</ul>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>ETL Capabilities</td>

|

||||

<td>

|

||||

<p>Automated cleaning for TXT, Markdown, PDF, HTML, DOC, CSV formats. Unstructured service enables maximum support.</p>

|

||||

<p>Sync Notion docs as knowledge bases.</p>

|

||||

<p>Sync Webpages as knowledge bases.</p>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Vector Databases Supported</td>

|

||||

<td>Qdrant (recommended), Weaviate, Zilliz/Milvus, Pgvector, Pgvector-rs, Chroma, OpenSearch, TiDB, Tencent Cloud VectorDB, Oracle, Relyt, Analyticdb, Couchbase, OceanBase, Tablestore, Lindorm, OpenGauss, Matrixone</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Agent Technologies</td>

|

||||

<td>

|

||||

<p>ReAct, Function Call.</p>

|

||||

<p><strong>Tooling Support</strong></p>

|

||||

<ul>

|

||||

<li>Invoke OpenAI Plugin standard tools</li>

|

||||

<li>Directly load OpenAPI Specification APIs as tools</li>

|

||||

</ul>

|

||||

<p><strong>Built-in Tools</strong></p>

|

||||

<ul>

|

||||

<li>40+ tools (As of Q2 2024)</li>

|

||||

</ul>

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Logging</td>

|

||||

<td>Supported, annotations based on logs</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Annotation Reply</td>

|

||||

<td>Based on human-annotated Q&As, used for similarity-based replies. Exportable as data format for model fine-tuning.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Content Moderation</td>

|

||||

<td>OpenAI Moderation or external APIs</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Team Collaboration</td>

|

||||

<td>Workspaces, multi-member management</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>API Specs</td>

|

||||

<td>RESTful, most features covered</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>Deployment Methods</td>

|

||||

<td>Docker, Helm</td>

|

||||

</tr>

|

||||

</tbody>

|

||||

</table>

|

||||

|

||||

{/*

|

||||

Contributing Section

|

||||

DO NOT edit this section!

|

||||

It will be automatically generated by the script.

|

||||

*/}

|

||||

|

||||

---

|

||||

|

||||

[Edit this page](https://github.com/langgenius/dify-docs/edit/main/en/getting-started/readme/features-and-specifications.mdx) | [Report an issue](https://github.com/langgenius/dify-docs/issues/new?template=docs.yml)

|

||||

|

||||

58

en/guides/workflow/README.mdx

Normal file

@@ -0,0 +1,58 @@

|

||||

---

|

||||

title: Introduction

|

||||

---

|

||||

|

||||

### Basic Introduction

|

||||

|

||||

Workflows reduce system complexity by breaking down complex tasks into smaller steps (nodes), reducing reliance on prompt engineering and model inference capabilities, and enhancing the performance of LLM applications for complex tasks. This also improves the system's interpretability, stability, and fault tolerance.

|

||||

|

||||

Dify workflows are divided into two types:

|

||||

|

||||

* **Chatflow**: Designed for conversational scenarios, including customer service, semantic search, and other conversational applications that require multi-step logic in response construction.

|

||||

* **Workflow**: Geared towards automation and batch processing scenarios, suitable for high-quality translation, data analysis, content generation, email automation, and more.

|

||||

|

||||

|

||||

|

||||

To address the complexity of user intent recognition in natural language input, Chatflow provides question understanding nodes. Compared to Workflow, it adds support for Chatbot features such as conversation history (Memory), annotated replies, and Answer nodes.

|

||||

|

||||

To handle complex business logic in automation and batch processing scenarios, Workflow offers a variety of logic nodes, such as code nodes, IF/ELSE nodes, template transformation, iteration nodes, and more. Additionally, it provides capabilities for timed and event-triggered actions, facilitating the construction of automated processes.

|

||||

|

||||

### Common Use Cases

|

||||

|

||||

* Customer Service

|

||||

|

||||

By integrating LLM into your customer service system, you can automate responses to common questions, reducing the workload of your support team. LLM can understand the context and intent of customer queries and generate helpful and accurate answers in real-time.

|

||||

|

||||

* Content Generation

|

||||

|

||||

Whether you need to create blog posts, product descriptions, or marketing materials, LLM can assist by generating high-quality content. Simply provide an outline or topic, and LLM will use its extensive knowledge base to produce engaging, informative, and well-structured content.

|

||||

|

||||

* Task Automation

|

||||

|

||||

LLM can be integrated with various task management systems like Trello, Slack, and Lark to automate project and task management. Using natural language processing, LLM can understand and interpret user input, create tasks, update statuses, and assign priorities without manual intervention.

|

||||

|

||||

* Data Analysis and Reporting

|

||||

|

||||

LLM can analyze large datasets and generate reports or summaries. By providing relevant information to LLM, it can identify trends, patterns, and insights, transforming raw data into actionable intelligence. This is particularly valuable for businesses looking to make data-driven decisions.

|

||||

|

||||

* Email Automation

|

||||

|

||||

LLM can be used to draft emails, social media updates, and other forms of communication. By providing a brief outline or key points, LLM can generate well-structured, coherent, and contextually relevant messages. This saves significant time and ensures your responses are clear and professional.

|

||||

|

||||

### How to Get Started

|

||||

|

||||

* Start by building a workflow from scratch or use system templates to help you get started.

|

||||

* Get familiar with basic operations, including creating nodes on the canvas, connecting and configuring nodes, debugging workflows, and viewing run history.

|

||||

* Save and publish a workflow.

|

||||

* Run the published application or call the workflow through an API.

|

||||

|

||||

{/*

|

||||

Contributing Section

|

||||

DO NOT edit this section!

|

||||

It will be automatically generated by the script.

|

||||

*/}

|

||||

|

||||

---

|

||||

|

||||

[Edit this page](https://github.com/langgenius/dify-docs/edit/main/en/guides/workflow/README.mdx) | [Report an issue](https://github.com/langgenius/dify-docs/issues/new?template=docs.yml)

|

||||

|

||||

127

en/resources/about-dify/dify-brand-guidelines.mdx

Normal file

@@ -0,0 +1,127 @@

|

||||

---

|

||||

title: Dify Brand Guidelines

|

||||

---

|

||||

|

||||

The Dify identity is more than a logo—it's a statement of thoughtful construction, system-level clarity, and open collaboration.

|

||||

When you are authorized or recognized as part of the Dify ecosystem, you represent the values we stand for.

|

||||

By using Dify Brand Assets in an official or affiliate capacity, you share in the responsibility of upholding our design system and product philosophy.

|

||||

Use of Dify Brand Assets must comply with our Brand Guidelines and be consistent with the principles outlined in the Dify Brand Usage Terms.

|

||||

Any use that implies endorsement, partnership, or certification without explicit permission is not allowed.

|

||||

Dify may revoke usage rights at any time if misuse occurs.

|

||||

|

||||

Refer to: [Dify Brand Usage Terms](/en/resources/about-dify/dify-brand-usage-terms).

|

||||

|

||||

## Our Logo

|

||||

|

||||

|

||||

|

||||

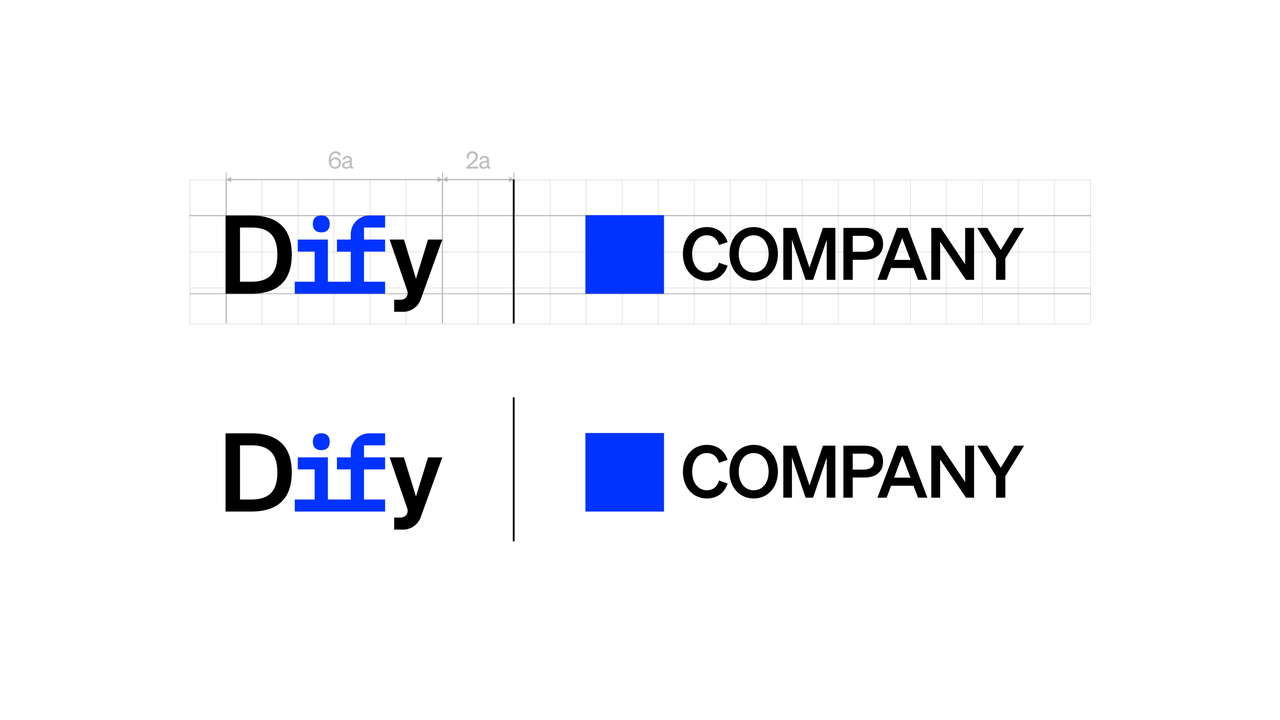

## Using Our Logo

|

||||

|

||||

The Dify logo signifies more than product—it signals trust, clarity, and technical credibility.

|

||||

To maintain brand integrity, it must be used in accordance with our guidelines.

|

||||

Misuse or casual placement may imply endorsement or affiliation where none exists.

|

||||

Please use thoughtfully and only in approved contexts.

|

||||

|

||||

### Examples of appropriate logo usage

|

||||

|

||||

- In co-branded visuals with official partners

|

||||

- In event materials or pages where Dify is a sponsor or participant

|

||||

- In a group of logos alongside other tools (“logo garden”)

|

||||

- In documentation, presentations, or integrations referencing Dify technologies

|

||||

|

||||

## Use the Right Color

|

||||

|

||||

Whenever possible, use the official two-tone Dify logo.

|

||||

|

||||

This version best represents the balance between clarity and structure in our brand.

|

||||

|

||||

Alternative color variations may be used when:

|

||||

|

||||

- Background colors limit contrast

|

||||

- Specific print or digital limitations apply

|

||||

- Layout demands mono or reversed options

|

||||

|

||||

|

||||

|

||||

## Keep It Legible

|

||||

|

||||

To ensure visibility and clarity, the Dify logo must always be surrounded by a minimum area of clear space.This prevents visual interference from nearby text, images, or other graphic elements.

|

||||

- More space is recommended wherever possible to enhance legibility.

|

||||

- Do not crowd the logo. Respecting this spacing is essential to maintain its impact and recognizability across all applications.

|

||||

|

||||

|

||||

|

||||

## Co-Branding Guidance

|

||||

|

||||

|

||||

|

||||

When pairing the Dify logo with another brand, always follow the co-branding layout shown here to maintain clarity and balance.

|

||||

- Use a thin vertical divider to separate the Dify logo from the partner brand.

|

||||

- Always maintain the relative positioning and proportions between the Dify and partner logos.

|

||||

- Do not rearrange the layout, change alignment, or scale elements independently.

|

||||

|

||||

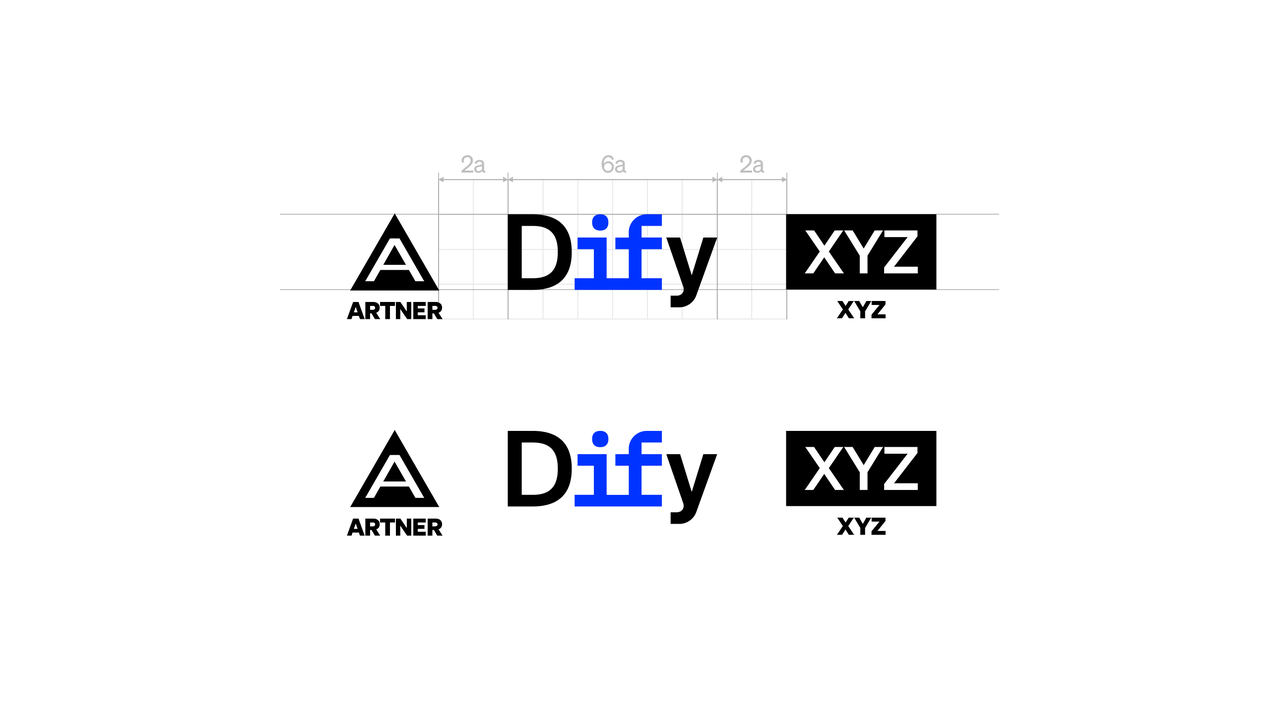

## Multiple Logo Lockup Guidelines

|

||||

|

||||

|

||||

|

||||

When Dify appears alongside two or more partner logos, follow these rules to ensure clarity and visual order:

|

||||

|

||||

- Do not use dividers between logos.

|

||||

- Maintain consistent and equal spacing between all logos.

|

||||

- If the initiative is partner-led, the partner’s logo should appear first. All other logos, including Dify, should follow in alphabetical order.

|

||||

- All logos must be visually aligned to the same height. Do not scale or distort individual marks.

|

||||

- Preserve each brand’s official colors, adequate whitespace, and consistent spacing throughout.

|

||||

|

||||

## Dify Partner Badge

|

||||

|

||||

|

||||

|

||||

The Dify Partner Badge is used to indicate an official relationship with Dify.

|

||||

It can be included in marketing materials, presentations, documentation, and event assets owned by the partner, as long as the content refers to Dify’s products, integrations, or support.

|

||||

- Ensure minimum clear space so the design doesn't appear cluttered.

|

||||

- Do not change the badge color.

|

||||

- Do not change the proportions of the logo.

|

||||

- Do not alter or replace the text in the badge.

|

||||

- Do not make the badge bigger than the partner logo.

|

||||

|

||||

## Powered by Dify Badge

|

||||

|

||||

|

||||

|

||||

The “Powered by Dify” badge indicates that your project, product, or content integrates Dify’s open-source capabilities or platform services.

|

||||

|

||||

It is a lightweight attribution that highlights the origin of the tooling—not an endorsement or partnership.

|

||||

|

||||

### Where You Can Use It

|

||||

|

||||

- On websites, applications, or systems that include Dify-powered features

|

||||

- In open-source project documentation, README files, demos, or footers

|

||||

- In educational materials, presentations, blog posts, or talks that showcase Dify's technology stack

|

||||

|

||||

### Badge Usage Rules

|

||||

|

||||

- Use the official “Powered by Dify” badge provided by Dify

|

||||

- Do not alter the badge’s color, typography, proportions, or wording

|

||||

- The badge must be clearly visible and not distorted, compressed, or placed on visually disruptive backgrounds

|

||||

|

||||

### Usage Restrictions

|

||||

|

||||

- Do not imply endorsement, certification, or official partnership with Dify

|

||||

- Do not use the badge in commercial marketing, paid training, or monetized courses without prior written permission

|

||||

|

||||

[Download Dify Design Kit](https://assets-docs.dify.ai/2025/05/5f547d3718bf32067849d9a83d9c92cf.zip)

|

||||

|

||||

---

|

||||

|

||||

© LangGenius, Inc. All rights reserved. Unauthorized use of Dify brand assets is strictly prohibited.

|

||||

|

||||

{/*

|

||||

Contributing Section

|

||||

DO NOT edit this section!

|

||||

It will be automatically generated by the script.

|

||||

*/}

|

||||

|

||||

---

|

||||

|

||||

[Edit this page](https://github.com/langgenius/dify-docs/edit/main/en/resources/about-dify/dify-brand-guidelines.mdx) | [Report an issue](https://github.com/langgenius/dify-docs/issues/new?template=docs.yml)

|

||||

|

||||

123

en/resources/about-dify/dify-brand-usage-terms.mdx

Normal file

@@ -0,0 +1,123 @@

|

||||

---

|

||||

title: Dify Brand Usage Terms

|

||||

---

|

||||

|

||||

These Brand Usage Terms ("Terms") govern the proper use of trademarks, logos, names, designs, and other brand elements (collectively, "Brand Assets") owned by LangGenius, Inc. ("Dify," "we," "our," or "us"). By using Dify Brand Assets, you agree to comply with these Terms.

|

||||

|

||||

---

|

||||

## 1. Permitted Uses (No Prior Approval Required)

|

||||

|

||||

You may use Dify Brand Assets without prior approval in the following cases, provided you adhere to the Dify Brand Guidelines:

|

||||

|

||||

### Community & Content Creation

|

||||

|

||||

- In blog posts, articles, documentation, social media, forums, and presentations to reference or discuss Dify.

|

||||

- In open-source projects (e.g., GitHub repositories, README files, and code examples) to indicate integration or support for Dify, as long as it is clear that your project is not officially affiliated with or endorsed by Dify.

|

||||

- In YouTube videos, podcasts, and developer talks for educational or informational purposes.

|

||||

|

||||

### Open-Source Projects

|

||||

|

||||

- You may display "Powered by Dify" in non-commercial open-source projects to acknowledge the use of Dify.

|

||||

- Linking to the official Dify website or GitHub repository is encouraged.

|

||||

|

||||

### Media & News Coverage

|

||||

|

||||

- Journalists, bloggers, and media outlets may use Dify Brand Assets in news articles or reports, provided the content is factually accurate and does not imply endorsement or sponsorship by Dify.

|

||||

|

||||

---

|

||||

|

||||

## 2. Restricted Uses (Approval Required)

|

||||

|

||||

The following uses require prior written approval from Dify:

|

||||

|

||||

### Commercial Use & Marketing

|

||||

|

||||

- Resellers, partners, and SaaS platforms integrating Dify into commercial products or services.

|

||||

- Using Dify Brand Assets in advertisements, promotional materials, or sales collateral.

|

||||

- Using Dify Brand Assets in paid training, consulting, or courses that suggest official endorsement.

|

||||

|

||||

### Official Partnership & Endorsement

|

||||

|

||||

- You may not use any Dify Brand Assets in a way that implies endorsement, certification, sponsorship, or partnership with Dify without explicit written authorization.

|

||||

|

||||

- Dify Partner badges are exclusively available to officially contracted partners. Each badge tier (e.g., Partner, Preferred Partner, Elite Partner) requires separate written approval and may only be used by partners who have been expressly granted that specific designation. Unauthorized use of any badge, or use of a badge outside your approved tier, is strictly prohibited.

|

||||

|

||||

### Merchandise & Physical Goods

|

||||

|

||||

- You may not use Dify Brand Assets on T-shirts, stickers, posters, or any merchandise without written permission.

|

||||

|

||||

### Modifications & Brand Combinations

|

||||

|

||||

- You may not alter the Dify Logo, including changes to colors, fonts, proportions, or effects.

|

||||

- You may not combine the Dify Logo with other logos or symbols to create a new or composite mark.

|

||||

|

||||

---

|

||||

|

||||

## 3. Brand Usage Guidelines

|

||||

|

||||

When using Dify Brand Assets, you must comply with our Brand Guidelines, which include:

|

||||

|

||||

- Maintain logo integrity: Do not distort, stretch, or rotate the logo.

|

||||

- Use official logo versions: Do not use unofficial or outdated versions.

|

||||

- Provide sufficient spacing: Ensure clear visual separation around the logo.

|

||||

- Do not alter colors: The logo must be used in the official Dify color scheme.

|

||||

|

||||

---

|

||||

|

||||

## 4. Trademark & Copyright Notice

|

||||

|

||||

- "Dify" and all related logos and brand elements are trademarks or registered trademarks of LangGenius, Inc.

|

||||

- These Terms do not grant any ownership or licensing rights to you. Dify retains all rights to its Brand Assets.

|

||||

|

||||

---

|

||||

|

||||

## 5. Misuse & Enforcement

|

||||

|

||||

- Dify reserves the right to revoke usage rights at any time and may require you to cease using the Brand Assets if they are used improperly.

|

||||

- Unauthorized or misleading use of the Brand Assets may result in legal action.

|

||||

|

||||

---

|

||||

|

||||

## 6. How to Request Permission?

|

||||

|

||||

If your use case requires approval (e.g., commercial use, partnerships, merchandising), please submit a request to: [business@dify.ai](mailto:business@dify.ai)

|

||||

|

||||

Your request should include:

|

||||

|

||||

- Your name / company name

|

||||

- A detailed description of how you intend to use the Dify Brand Assets

|

||||

- Relevant materials (e.g., mockups, website links)

|

||||

|

||||

---

|

||||

## 7. Reporting Brand Misuse

|

||||

|

||||

If you believe that Dify Brand Assets are being misused in violation of these Terms—such as unauthorized commercial use, misleading endorsements, or counterfeit merchandise—you may report it to us.

|

||||

|

||||

To submit a report, contact [brand@dify.ai](mailto:brand@dify.ai) with the following details:

|

||||

|

||||

- A description of the issue (e.g., unauthorized commercial use, misleading branding, counterfeit products)

|

||||

- Relevant evidence (e.g., screenshots, links, documents)

|

||||

- Your contact information (optional, in case we need further details)

|

||||

|

||||

Dify will review all reports and take appropriate action as necessary, which may include legal enforcement or requiring the violating party to cease unauthorized use. We appreciate your support in protecting the Dify brand.

|

||||

|

||||

---

|

||||

## 8. Updates to These Terms

|

||||

|

||||

Dify may update these Brand Usage Terms periodically. Any changes will be published on this page, and by continuing to use the Brand Assets, you agree to the latest version.

|

||||

|

||||

Latest Version: 2025/03/04

|

||||

|

||||

---

|

||||

© LangGenius, Inc. All rights reserved. Unauthorized use of Dify Brand Assets is strictly prohibited.

|

||||

|

||||

{/*

|

||||

Contributing Section

|

||||

DO NOT edit this section!

|

||||

It will be automatically generated by the script.

|

||||

*/}

|

||||

|

||||

---

|

||||

|

||||

[Edit this page](https://github.com/langgenius/dify-docs/edit/main/en/resources/about-dify/dify-brand-usage-terms.mdx) | [Report an issue](https://github.com/langgenius/dify-docs/issues/new?template=docs.yml)

|

||||

|

||||

201

en/resources/termbase.mdx

Normal file

@@ -0,0 +1,201 @@

|

||||

---

|

||||

title: Termbase

|

||||

---

|

||||

|

||||

## A

|

||||

### Agent

|

||||

An autonomous AI system capable of making decisions and executing tasks based on environmental information. In the Dify platform, agents combine the comprehension capabilities of large language models with the ability to interact with external tools, automatically completing a series of operations ranging from simple to complex, such as searching for information, calling APIs, or generating content.

|

||||

|

||||

### Agentic Workflow

|

||||

A task orchestration method that allows AI systems to autonomously solve complex problems through multiple steps. For example, an agentic workflow can first understand a user's question, then query a knowledge base, call computational tools, and finally integrate information to generate a complete answer, all without human intervention.

|

||||

|

||||

### Automatic Speech Recognition (ASR)

|

||||

Technology that converts human speech into text and serves as the foundation for voice interaction applications. This technology allows users to interact with AI systems by speaking rather than typing, and is widely used in scenarios such as voice assistants, meeting transcription, and accessibility services.

|

||||

|

||||

## B

|

||||

### Backbone of Thought (BoT)

|

||||

A structured thinking framework that provides the main structure for reasoning in large language models. It helps models maintain a clear thinking path when processing complex problems, similar to the outline of an academic paper or the skeleton of a decision tree.

|

||||

|

||||

## C

|

||||

### Chunking

|

||||

A processing technique that splits long text into smaller content blocks, enabling retrieval systems to find relevant information more precisely. A good chunking strategy considers both the semantic integrity of the content and the context window limitations of language models, thereby improving the quality of retrieval and generation.

|

||||

|

||||

### Citation and Attribution

|

||||

Features that allow AI systems to clearly indicate the sources of information, increasing the credibility and transparency of responses. When the system generates answers based on knowledge base content, it can automatically annotate the referenced document name, page number, or URL, enabling users to understand the origin of the information.

|

||||

|

||||

### Chain of Thought (CoT)

|

||||

A prompting technique that guides large language models to display their step-by-step thinking process. For example, when solving a math problem, the model first lists the known conditions, then follows reasoning steps to solve it one by one, and finally reaches a conclusion. The entire process resembles human thinking.

|

||||

|

||||

## D

|

||||

### Domain-Specific Language (DSL)

|

||||

A programming language or configuration format designed for a specific application domain. Dify DSL is an application engineering file standard based on YAML format, used to define various configurations of AI applications, including model parameters, prompt design, and workflow orchestration, allowing non-professional developers to build complex AI applications.

|

||||

|

||||

## E

|

||||

### Extract, Transform, Load (ETL)

|

||||

A classic data processing workflow: extracting raw data, transforming it into a format suitable for analysis, and then loading it into the target system. In AI document processing, ETL may include extracting text from PDFs, cleaning formats, splitting content, calculating embedding vectors, and finally loading into a vector database, preparing for RAG systems.

|

||||

|

||||

## F

|

||||

### Frequency Penalty

|

||||

A text generation control parameter that increases output diversity by reducing the probability of generating frequently occurring vocabulary. The higher the value, the more the model tends to use diverse vocabulary and expressions; at a value of 0, the model will not specifically avoid reusing the same vocabulary.

|

||||

|

||||

### Function Calling

|

||||

The capability of large language models to recognize when to call specific functions and provide the required parameters. For example, when a user asks about the weather, the model can automatically call a weather API, construct the correct parameter format (city, date), and then generate a response based on the API's returned results.

|

||||

|

||||

## G

|

||||

### General Chunking Pattern

|

||||

A simple text splitting strategy that divides documents into mutually independent content blocks. This pattern is suitable for documents with clear structures and relatively independent paragraphs, such as product manuals or encyclopedia entries, where each chunk can be understood independently without heavily relying on context.

|

||||

|

||||

### Graph of Thought (GoT)

|

||||

A method of representing the thinking process as a network structure, capturing complex relationships between concepts. Unlike the linear Chain of Thought, the Graph of Thought can express branching, cyclical, and multi-path thinking patterns, suitable for dealing with complex problems that have multiple interrelated factors.

|

||||

|

||||

## H

|

||||

### Hybrid Search

|

||||

A search method that combines the advantages of keyword matching and semantic search to provide more comprehensive retrieval results. For example, when searching for "apple nutritional components," hybrid search can find both documents containing the keywords "apple" and "nutrition," as well as content discussing related semantic concepts like "fruit health value," selecting the optimal results through weight adjustment or reranking.

|

||||

|

||||

## I

|

||||

### Inverted Index

|

||||

A core data structure of search engines that records which documents each word appears in. Unlike traditional indexes that find content from documents, inverted indexes find documents from vocabulary, greatly improving full-text retrieval speed. For example, the index entry for the term "artificial intelligence" would list all document IDs and positions containing this term.

|

||||

|

||||

## K

|

||||

### Keyword Search

|

||||

A search method based on exact matching that finds documents containing specific vocabulary. This method is computationally efficient and suitable for scenarios where users clearly know the terms they want to find, such as product models, proper nouns, or specific commands, but may miss content expressed using synonyms or related concepts.

|

||||

|

||||

### Knowledge Base

|

||||

A database that stores structured information in AI applications, providing a source of professional knowledge for models. In the Dify platform, knowledge bases can contain various documents (PDF, Word, web pages, etc.), which are processed for AI retrieval and used to generate accurate, well-founded answers, particularly suitable for building domain expert applications.

|

||||

|

||||

### Knowledge Retrieval

|

||||

The process of finding information from a knowledge base that is most relevant to a user's question, and is a key component of RAG systems. Effective knowledge retrieval not only finds relevant content but also controls the amount of information returned, avoiding irrelevant content that could interfere with the model, while providing sufficient background to ensure accurate and complete answers.

|

||||

|

||||

## L

|

||||

### Large Language Model (LLM)

|

||||

An AI model trained on massive amounts of text that can understand and generate human language. Modern LLMs (such as the GPT series, Claude, etc.) can write articles, answer questions, write code, and even conduct reasoning. They are the core engines of various AI applications, especially suitable for scenarios requiring language understanding and generation.

|

||||

|

||||

### Local Model Inference

|

||||

The process of running AI models on a user's own device rather than relying on cloud services. This approach provides better privacy protection (data does not leave the local environment) and lower latency (no network transmission required), making it suitable for processing sensitive data or scenarios requiring offline work, though it is typically limited by the computational capacity of local devices.

|

||||

|

||||

## M

|

||||

### Model-as-a-Service (MaaS)

|

||||

A cloud service model where providers offer access to pre-trained models through APIs. Users don't need to worry about training, deploying, or maintaining models; they simply call the API and pay for usage, significantly lowering the development threshold and infrastructure costs of AI applications. It's suitable for quickly validating ideas or building prototypes.

|

||||

|

||||

### Max_tokens

|

||||

A parameter that controls the maximum number of characters the model generates in a single response. One token is approximately equivalent to 4 characters or 3/4 of an English word. Setting a reasonable maximum token count can control the length of the answer, avoid overly verbose output, and ensure complete expression of necessary information. For example, a brief summary might be set to 200 tokens, while a detailed report might require 2000 tokens.

|

||||

|

||||

### Memory

|

||||

The ability of AI systems to save and use historical interaction information, keeping multi-turn conversations coherent. Effective memory mechanisms enable AI to understand contextual references, remember user preferences, and track long-term goals, thereby providing personalized and continuous user experiences, avoiding repeatedly asking for information that has already been provided.

|

||||

|

||||

### Metadata Filtering

|

||||

A technique that utilizes document attribute information (such as title, author, date, classification tags) for content filtering. For example, users can restrict retrieval to technical documents within a specific date range, or only query reports from a specific department, thereby narrowing the scope before retrieval, improving search efficiency and result relevance.

|

||||

|

||||

### Multimodal Model

|

||||

A model capable of processing multiple types of input data, such as text, images, audio, etc. These models break the single-perception limitations of traditional AI and can understand image content, analyze video scenes, recognize voice emotions, creating possibilities for more comprehensive information understanding, suitable for complex application scenarios requiring cross-media understanding.

|

||||

|

||||

### Multi-tool-call

|

||||

The ability of a model to call multiple different tools in a single response. For example, when processing a request like "Compare tomorrow's weather in Beijing and Shanghai and recommend suitable clothing," the model can simultaneously call weather APIs for both cities, then provide reasonable suggestions based on the returned results, improving the efficiency of handling complex tasks.

|

||||

|

||||

### Multi-path Retrieval

|

||||

A strategy for obtaining information in parallel through multiple retrieval methods. For example, the system can simultaneously use keyword search, semantic matching, and knowledge graph queries, then merge and filter the results, improving the coverage and accuracy of information retrieval, particularly suitable for handling complex or ambiguous user queries.

|

||||

|

||||

## P

|

||||

### Parent-Child Chunking

|

||||

An advanced text splitting strategy that creates two levels of content blocks: parent blocks retain the complete context, while child blocks provide precise matching points. The system first uses child blocks to determine the location of relevant content, then retrieves the corresponding parent blocks to provide complete background, balancing retrieval precision and context completeness, suitable for processing complex documents such as research papers or technical manuals.

|

||||

|

||||

### Presence Penalty

|

||||

A parameter setting that prevents language models from repeating content. It encourages models to explore new expressions by reducing the probability of generating vocabulary that has already appeared. The higher the parameter value, the less likely the model is to repeat previously generated content, helping to avoid common circular arguments or repetitive problem statements in AI responses.

|

||||

|

||||

### Predefined Model

|

||||

A ready-made model trained and provided by AI vendors that users can directly call without training themselves. These closed-source models (such as GPT-4, Claude, etc.) are typically trained and optimized on a large scale, powerful and easy to use, suitable for rapid application development or teams lacking independent training resources.

|

||||

|

||||

### Prompt

|

||||

Input text that guides AI models to generate specific responses. Well-designed prompts can significantly improve output quality, including elements such as clear instructions, providing examples, setting format requirements, etc. For example, different prompts can guide the same model to generate academic articles, creative stories, or technical analysis, making them one of the most critical factors affecting AI output.

|

||||

|

||||

## Q

|

||||

### Q&A Mode

|

||||

A special indexing strategy that automatically generates question-answer pairs for document content, implementing "question-to-question" matching. When a user asks a question, the system looks for semantically similar pre-generated questions and returns the corresponding answers. This mode is particularly suitable for FAQ content or structured knowledge points, providing a more precise question-answering experience.

|

||||

|

||||

## R

|

||||

### Retrieval-Augmented Generation (RAG)

|

||||

A technical architecture that combines external knowledge retrieval and language generation. The system first retrieves information from a knowledge base related to the user's question, then provides this information as context to the language model, generating well-founded, accurate answers. RAG overcomes the limited knowledge and hallucination problems of language models, particularly suitable for application scenarios requiring the latest or specialized knowledge.

|

||||

|

||||

### Reasoning and Acting (ReAct)

|

||||

An AI agent framework that enables models to alternate between thinking and executing operations. In the problem-solving process, the model first analyzes the current state, formulates a plan, then calls appropriate tools (such as search engines, calculators), and thinks about the next step based on the tool's returned results, forming a thinking-action-thinking cycle until the problem is solved. It is suitable for complex tasks requiring multiple steps and external tools.

|

||||

|

||||

### ReRank

|

||||

A technique for secondary sorting of preliminary retrieval results to improve the relevance of final results. For example, the system might first quickly retrieve a large number of candidate content through efficient algorithms, then use more complex but precise models to reevaluate and sort these results, placing the most relevant content first, balancing retrieval efficiency and result quality.

|

||||

|

||||

### Rerank Model

|

||||

A model specifically designed to evaluate the relevance of retrieval results to queries and reorder them. Unlike preliminary retrieval, these models typically use more complex algorithms, consider more semantic factors, and can more accurately determine how well content matches user intent. For example, models like Cohere Rerank and BGE Reranker can significantly improve the quality of search and recommendation system results.

|

||||

|

||||

### Response_format

|

||||

A specification of the structure type for model output, such as plain text, JSON, or HTML. Setting a specific response format can make AI output easier to process by programs or integrate into other systems. For example, requiring the model to answer in JSON format ensures the output has a consistent structure, facilitating direct parsing and display by frontend applications.

|

||||

|

||||

### Reverse Calling

|

||||

A bidirectional mechanism for plugins to interact with platforms, allowing plugins to actively call platform functionality. In Dify, this means third-party plugins can not only be called by AI but can also use Dify's core features in return, such as triggering workflows or calling other plugins, greatly enhancing the system's extensibility and flexibility.

|

||||

|

||||

### Retrieval Test

|

||||