Example Use Case

:::tip **Temporary Chat Session**
diff --git a/docs/features/document-extraction/docling.md b/docs/features/document-extraction/docling.md
index f2256e61..cb6487f8 100644
--- a/docs/features/document-extraction/docling.md
+++ b/docs/features/document-extraction/docling.md
@@ -42,6 +42,21 @@ docker run --gpus all -p 5001:5001 -e DOCLING_SERVE_ENABLE_UI=true quay.io/docli
 * Update the context extraction engine URL to `http://host.docker.internal:5001`.
 * Save the changes.
+### (Optional) Step 3: Configure Docling's picture description features
+
+* On the `Documents` tab:
+* Activate the `Describe Pictures in Documents` button.
+* Below, choose a description mode: `local` or `API`
+  * `local`: the vision model runs in the same context as Docling itself
+  * `API`: Docling calls an external service/container (e.g. Ollama)
+* Fill in an **object value** as described at https://github.com/docling-project/docling-serve/blob/main/docs/usage.md#picture-description-options
+* Save the changes.
+
+ #### Make sure the object value is valid JSON! Working examples below:
+
+
+
+
 Verifying Docling in Docker
 =====================================
diff --git a/docs/features/openldap.mdx b/docs/features/openldap.mdx
new file mode 100644
index 00000000..cefb6f0b
--- /dev/null
+++ b/docs/features/openldap.mdx
@@ -0,0 +1,208 @@
+---
+sidebar_position: 9
+---
+
+# OpenLDAP Integration
+
+This guide walks through setting up OpenLDAP authentication with Open WebUI. It covers creating a test OpenLDAP server using Docker, seeding it with sample users, configuring Open WebUI to connect to it, and troubleshooting common issues.
+
+## 1. Setting Up OpenLDAP with Docker
+
+The easiest way to get a test OpenLDAP server running is by using Docker. This `docker-compose.yml` file will create an OpenLDAP container and an optional phpLDAPadmin container for a web-based GUI.
+
+```yaml title="docker-compose.yml"
+version: "3.9"
+services:
+  ldap:
+    image: osixia/openldap:1.5.0
+    container_name: openldap
+    environment:
+      LDAP_ORGANISATION: "Example Inc"
+      LDAP_DOMAIN: "example.org"
+      LDAP_ADMIN_PASSWORD: admin
+      LDAP_TLS: "false"
+    volumes:
+      - ./ldap/var:/var/lib/ldap
+      - ./ldap/etc:/etc/ldap/slapd.d
+    ports:
+      - "389:389"
+    networks: [ldapnet]
+
+  phpldapadmin:
+    image: osixia/phpldapadmin:0.9.0
+    environment:
+      PHPLDAPADMIN_LDAP_HOSTS: "ldap"
+    ports:
+      - "6443:443"
+    networks: [ldapnet]
+
+networks:
+  ldapnet:
+    driver: bridge
+```
+
+- The `osixia/openldap` image automatically creates a basic organization structure with just `LDAP_DOMAIN` and `LDAP_ADMIN_PASSWORD`.
+- The `osixia/phpldapadmin` image provides a web interface for managing your LDAP directory, accessible at `https://localhost:6443`.
+
+Run `docker-compose up -d` to start the containers. Confirm that the LDAP server has started by checking the logs: `docker logs openldap`. You should see a "started slapd" message.
+
+## 2. Seeding a Sample User (LDIF)
+
+To test the login, you need to add a sample user to the LDAP directory. Create a file named `seed.ldif` with the following content:
+
+```ldif title="seed.ldif"
+dn: ou=users,dc=example,dc=org
+objectClass: organizationalUnit
+ou: users
+
+dn: uid=jdoe,ou=users,dc=example,dc=org
+objectClass: inetOrgPerson
+cn: John Doe
+sn: Doe
+uid: jdoe
+mail: jdoe@example.org
+userPassword: {PLAIN}password123
+```
+
+**Note on Passwords:** The `userPassword` field is set to a plain text value for simplicity in this test environment. In production, you should always use a hashed password. You can generate a hashed password using `slappasswd` or `openssl passwd`. For example:
+
+```bash
+# Using slappasswd (inside the container)
+docker exec openldap slappasswd -s your_password
+
+# Using openssl
+openssl passwd -6 your_password
+```
+
+Copy the LDIF file into the container and use `ldapadd` to add the entry:
+
+```bash
+docker cp seed.ldif openldap:/seed.ldif
+docker exec openldap ldapadd -x -D "cn=admin,dc=example,dc=org" -w admin -f /seed.ldif
+```
+
+A successful operation will show an "adding new entry" message.
+
+## 3. Verifying the LDAP Connection
+
+Before configuring Open WebUI, verify that the LDAP server is accessible and the user exists.
+
+```bash
+ldapsearch -x -H ldap://localhost:389 \
+  -D "cn=admin,dc=example,dc=org" -w admin \
+  -b "dc=example,dc=org" "(uid=jdoe)"
+```
+
+If the command returns the `jdoe` user entry, your LDAP server is ready.
+
+## 4. Configuring Open WebUI
+
+Now, configure your Open WebUI instance to use the LDAP server for authentication.
+
+### Environment Variables
+
+Set the following environment variables for your Open WebUI instance.
+
+:::info
+Open WebUI reads these environment variables only on the first startup. Subsequent changes must be made in the Admin settings panel of the UI unless you have `ENABLE_PERSISTENT_CONFIG=false`.
+:::
+
+```env
+# Enable LDAP
+ENABLE_LDAP="true"
+
+# --- Server Settings ---
+LDAP_SERVER_LABEL="OpenLDAP"
+LDAP_SERVER_HOST="localhost"  # Or the IP/hostname of your LDAP server
+LDAP_SERVER_PORT=389  # Unquoted integer; use 389 for plaintext/StartTLS, 636 for LDAPS
+LDAP_USE_TLS="false"  # Set to "true" for LDAPS or StartTLS
+LDAP_VALIDATE_CERT="false"  # Set to "true" in production with valid certs
+
+# --- Bind Credentials ---
+LDAP_APP_DN="cn=admin,dc=example,dc=org"
+LDAP_APP_PASSWORD="admin"
+
+# --- User Schema ---
+LDAP_SEARCH_BASE="dc=example,dc=org"
+LDAP_ATTRIBUTE_FOR_USERNAME="uid"
+LDAP_ATTRIBUTE_FOR_MAIL="mail"
+LDAP_SEARCH_FILTER="(uid=%(user)s)"  # More secure and performant
+```
+
+### UI Configuration
+
+Alternatively, you can configure these settings directly in the UI:
+
+1. Log in as an administrator.
+2. Navigate to **Settings** > **General**.
+3. Enable **LDAP Authentication**.
+4. Fill in the fields corresponding to the environment variables above.
+5. Save the settings and restart Open WebUI.
+
+## 5. Logging In
+
+Open a new incognito browser window and navigate to your Open WebUI instance.
+
+- **Login ID:** `jdoe`
+- **Password:** `password123` (or the password you set)
+
+Upon successful login, a new user account with the "User" role will be created automatically in Open WebUI. An administrator can later elevate the user's role if needed.
+
+## 6. Troubleshooting Common Errors
+
+Here are solutions to common errors encountered during LDAP integration.
+
+### `port must be an integer`
+
+```
+ERROR | open_webui.routers.auths:ldap_auth:447 - LDAP authentication error: port must be an integer - {}
+```
+
+**Cause:** The `LDAP_SERVER_PORT` environment variable is being passed as a string with quotes (e.g., `"389"`).
+
+**Solution:**
+- Ensure the `LDAP_SERVER_PORT` value in your `.env` file or export command has no quotes: `LDAP_SERVER_PORT=389`.
+- Remove the protocol (`http://`, `ldap://`) from `LDAP_SERVER_HOST`. It should just be the hostname or IP (e.g., `localhost`).
+
+### `[SSL: UNEXPECTED_EOF_WHILE_READING] EOF occurred`
+
+```
+ERROR | LDAP authentication error: ("('socket ssl wrapping error: [SSL: UNEXPECTED_EOF_WHILE_READING] EOF occurred in violation of protocol (_ssl.c:1016)',)",) - {}
+```
+
+**Cause:** This is a TLS handshake failure. The client (Open WebUI) is trying to initiate a TLS connection, but the server (OpenLDAP) is not configured for it or is expecting a different protocol.
+
+**Solution:**
+- **If you don't intend to use TLS:** Set `LDAP_USE_TLS="false"` and connect to the standard plaintext port `389`.
+- **If you intend to use LDAPS:** Ensure your LDAP server is configured for TLS and listening on port `636`. Set `LDAP_SERVER_PORT=636` and `LDAP_USE_TLS="true"`.
+- **If you intend to use StartTLS:** Your LDAP server must support the StartTLS extension. Connect on port `389` and set `LDAP_USE_TLS="true"`.
+
+### `err=49 text=` (Invalid Credentials)
+
+```
+openldap | ... conn=1001 op=0 RESULT tag=97 err=49 text=
+```
+
+**Cause:** The LDAP server rejected the bind attempt because the distinguished name (DN) or the password was incorrect. This happens during the second bind attempt, where Open WebUI tries to authenticate with the user's provided credentials.
+
+**Solution:**
+1. **Verify the Password:** Ensure you are using the correct plaintext password. The `userPassword` value in the LDIF file is what the server expects. If it's a hash, you must provide the original plaintext password.
+2. **Check the User DN:** The DN used for the bind (`uid=jdoe,ou=users,dc=example,dc=org`) must be correct.
+3. **Test with `ldapwhoami`:** Verify the credentials directly against the LDAP server to isolate the issue from Open WebUI.
+    ```bash
+    ldapwhoami -x -H ldap://localhost:389 \
+      -D "uid=jdoe,ou=users,dc=example,dc=org" -w "password123"
+    ```
+    If this command fails with `ldap_bind: Invalid credentials (49)`, the problem is with the credentials or the LDAP server's password configuration, not Open WebUI.
+4. **Reset the Password:** If you don't know the password, reset it using `ldapmodify` or `ldappasswd`. It's often easiest to use a `{PLAIN}` password for initial testing and then switch to a secure hash like `{SSHA}`.
+
+    **Example LDIF to change password:**
+    ```ldif title="change_password.ldif"
+    dn: uid=jdoe,ou=users,dc=example,dc=org
+    changetype: modify
+    replace: userPassword
+    userPassword: {PLAIN}newpassword
+    ```
+    Apply it with:
+    ```bash
+    docker exec openldap ldapmodify -x -D "cn=admin,dc=example,dc=org" -w admin -f /path/to/change_password.ldif
+    ```
diff --git a/docs/features/plugin/index.mdx b/docs/features/plugin/index.mdx
index 90a68478..42b321e1 100644
--- a/docs/features/plugin/index.mdx
+++ b/docs/features/plugin/index.mdx
@@ -17,7 +17,7 @@ Getting started with Tools and Functions is easy because everything’s already
 ## What are "Tools" and "Functions"?

-Let's start by thinking of **Open WebUI** as a "base" software that can do many tasks related to using Large Language Models (LLMs). But sometimes, you need extra features or abilities that don't come *out of the box*—this is where **tools** and **functions** come into play.
+Let's start by thinking of **Open WebUI** as a "base" software that can do many tasks related to using Large Language Models (LLMs). But sometimes, you need extra features or abilities that don't come _out of the box_—this is where **tools** and **functions** come into play.

 ### Tools

@@ -32,6 +32,7 @@ Imagine you're chatting with an LLM and you want it to give you the latest weath
 Tools are essentially **abilities** you’re giving your AI to help it interact with the outside world.
By adding these, the LLM can "grab" useful information or perform specialized tasks based on the context of the conversation. #### Examples of Tools (extending LLM’s abilities): + 1. **Real-time weather predictions** 🛰️. 2. **Stock price retrievers** 📈. 3. **Flight tracking information** ✈️. @@ -40,19 +41,29 @@ Tools are essentially **abilities** you’re giving your AI to help it interact While **tools** are used by the AI during a conversation, **functions** help extend or customize the capabilities of Open WebUI itself. Imagine tools are like adding new ingredients to a dish, and functions are the process you use to control the kitchen! 🚪 -#### Let's break that down: +#### Let's break that down: - **Functions** give you the ability to tweak or add **features** inside **Open WebUI** itself. - You’re not giving new abilities to the LLM, but instead, you’re extending the **interface, behavior, or logic** of the platform itself! For instance, maybe you want to: + 1. Add a new AI model like **Anthropic** to the WebUI. 2. Create a custom button in your toolbar that performs a frequently used command. 3. Implement a better **filter** function that catches inappropriate or **spammy messages** from the incoming text. Without functions, these would all be out of reach. But with this framework in Open WebUI, you can easily extend these features! +### Where to Find and Manage Functions + +Functions are not located in the same place as Tools. + +- **Tools** are about model access and live in your **Workspace tabs** (where you add models, prompts, and knowledge collections). They can be added by users if granted permissions. +- **Functions** are about **platform customization** and are found in the **Admin Panel**. + They are configured and managed only by admins who want to extend the platform interface or behavior for all users. 
+ ### Summary of Differences: + - **Tools** are things that allow LLMs to **do more things** outside their default abilities (such as retrieving live info or performing custom tasks based on external data). - **Functions** help the WebUI itself **do more things**, like adding new AI models or creating smarter ways to filter data. @@ -64,17 +75,17 @@ And then, we have **Pipelines**… Here’s where things start to sound pretty t **Pipelines** are part of an Open WebUI initiative focused on making every piece of the WebUI **inter-operable with OpenAI’s API system**. Essentially, they extend what both **Tools** and **Functions** can already do, but now with even more flexibility. They allow you to turn features into OpenAI API-compatible formats. 🧠 -### But here’s the thing… +### But here’s the thing… You likely **won't need** pipelines unless you're dealing with super-advanced setups. - **Who are pipelines for?** Typically, **experts** or people running more complicated use cases. - **When do you need them?** If you're trying to offload processing from your primary Open WebUI instance to a different machine (so you don’t overload your primary system). - + In most cases, as a beginner or even an intermediate user, you won’t have to worry about pipelines. Just focus on enjoying the benefits that **tools** and **functions** bring to your Open WebUI experience! ## Want to Try? 🚀 Jump into Open WebUI, head over to the community section, and try importing a tool like **weather updates** or maybe adding a new feature to the toolbar with a function. Exploring these tools will show you how powerful and flexible Open WebUI can be! -🌟 There's always more to learn, so stay curious and keep experimenting! \ No newline at end of file +🌟 There's always more to learn, so stay curious and keep experimenting! 
diff --git a/docs/getting-started/advanced-topics/observability.md b/docs/getting-started/advanced-topics/observability.md
new file mode 100644
index 00000000..38b5a024
--- /dev/null
+++ b/docs/getting-started/advanced-topics/observability.md
@@ -0,0 +1,145 @@
+---
+sidebar_position: 7
+title: "📊 Observability & OpenTelemetry"
+---
+
+# 📊 Observability with OpenTelemetry
+
+Open WebUI (v0.4.0+) supports **distributed tracing and metrics** export via the OpenTelemetry (OTel) protocol (OTLP). This enables integration with monitoring systems like Grafana LGTM Stack, Jaeger, Tempo, and Prometheus to monitor request flows, database/Redis queries, response times, and more in real-time.
+
+## 🚀 Quick Start with Docker Compose
+
+The fastest way to get started with observability is using the pre-configured Docker Compose setup:
+
+```bash
+# Minimal setup: WebUI + Grafana LGTM all-in-one
+docker compose -f docker-compose.otel.yaml up -d
+```
+
+The `docker-compose.otel.yaml` file starts the following services:
+
+| Service | Port | Description |
+|---------|------|-------------|
+| **grafana/otel-lgtm** | 3000 (UI), 4317 (OTLP gRPC), 4318 (OTLP HTTP) | Loki + Grafana + Tempo + Mimir all-in-one |
+| **open-webui** | 8080 → 8080 | WebUI with OTEL environment variables configured |
+
+After startup, visit `http://localhost:3000` and log in with `admin` / `admin` to access the Grafana dashboard.
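As a quick sanity check after startup, a minimal Python sketch (not part of Open WebUI) can confirm that the ports listed in the table above accept TCP connections. The host `localhost`, the `SERVICE_PORTS` mapping, and the helper name `port_is_open` are assumptions for illustration; adjust them to your own setup.

```python
import socket

# Ports taken from the service table above; the host used below is an
# assumption for a local Docker setup.
SERVICE_PORTS = {
    "Grafana UI": 3000,
    "OTLP gRPC": 4317,
    "OTLP HTTP": 4318,
    "Open WebUI": 8080,
}


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds (hypothetical helper)."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    for name, port in SERVICE_PORTS.items():
        state = "reachable" if port_is_open("localhost", port) else "not reachable"
        print(f"{name} (port {port}): {state}")
```

A reachable port only shows that something is listening; it does not prove that traces or metrics are actually being exported.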
+
+## ⚙️ Environment Variables
+
+Configure OpenTelemetry using these environment variables:
+
+| Variable | Default | Description |
+|----------|---------|-------------|
+| `ENABLE_OTEL` | **false** | Set to `true` to enable trace export |
+| `OTEL_EXPORTER_OTLP_ENDPOINT` | `http://localhost:4317` | OTLP gRPC/HTTP Collector URL |
+| `OTEL_EXPORTER_OTLP_INSECURE` | `true` | Disable TLS (for local testing) |
+| `OTEL_SERVICE_NAME` | `open-webui` | Service name tag in resource attributes |
+| `OTEL_BASIC_AUTH_USERNAME` / `OTEL_BASIC_AUTH_PASSWORD` | _(empty)_ | Basic Auth credentials for Collector if required |
+| `ENABLE_OTEL_METRICS` | **true** | Enable FastAPI HTTP metrics export |
+
+> You can override these in your `.env` file or `docker-compose.*.yaml` configuration.
+
+## 📊 Data Collection
+
+### Distributed Tracing
+
+The `utils/telemetry/instrumentors.py` module automatically instruments the following libraries:
+
+* **FastAPI** (request routes) · **SQLAlchemy** · **Redis** · External calls via `requests` / `httpx` / `aiohttp`
+* Span attributes include:
+  * `db.instance`, `db.statement`, `redis.args`
+  * `http.url`, `http.method`, `http.status_code`
+  * Error details: `error.message`, `error.kind` when exceptions occur
+
+Open WebUI creates worker threads only when needed, minimizing overhead while providing efficient trace export.
+
+### Metrics Collection
+
+The `utils/telemetry/metrics.py` module exports the following metrics:
+
+| Instrument | Type | Unit | Labels |
+|------------|------|------|--------|
+| `http.server.requests` | Counter | 1 | `http.method`, `http.route`, `http.status_code` |
+| `http.server.duration` | Histogram | ms | Same as above |
+
+Metrics are pushed to the Collector (OTLP) every 10 seconds and can be visualized in Prometheus → Grafana.
+
+## 📈 Grafana Dashboard Setup
+
+The example LGTM image has pre-configured data source UIDs: `tempo`, `prometheus`, and `loki`.
+
+### Importing Dashboard Configuration
+
+1. **Dashboards → Import → Upload JSON**
+2. Paste the provided JSON configuration (`docs/dashboards/ollama.json`) → Import
+3. An "Ollama" dashboard will be created in the `Open WebUI` folder
+
+
+
+For persistent dashboard provisioning, mount the provisioning directories:
+
+```yaml
+grafana:
+  volumes:
+    - ./grafana/dashboards:/etc/grafana/dashboards:ro
+    - ./grafana/provisioning/dashboards:/etc/grafana/provisioning/dashboards:ro
+```
+
+### Exploring Metrics with Prometheus
+
+You can explore and query metrics directly using Grafana's Explore feature:
+
+
+
+This allows you to:
+- Run custom PromQL queries to analyze API call patterns
+- Monitor request rates, error rates, and response times
+- Create custom visualizations for specific metrics
+
+
+
+## 🔧 Custom Collector Setup
+
+If you're running your own OpenTelemetry Collector instead of the provided `docker-compose.otel.yaml`:
+
+```bash
+# Set your collector endpoint
+export OTEL_EXPORTER_OTLP_ENDPOINT=http://your-collector:4317
+export ENABLE_OTEL=true
+
+# Start Open WebUI
+docker run -d --name open-webui \
+  -p 8080:8080 \
+  -e ENABLE_OTEL=true \
+  -e OTEL_EXPORTER_OTLP_ENDPOINT=http://your-collector:4317 \
+  -v open-webui:/app/backend/data \
+  ghcr.io/open-webui/open-webui:main
+```
+
+## 🚨 Troubleshooting
+
+### Common Issues
+
+**Traces not appearing in Grafana:**
+- Verify `ENABLE_OTEL=true` is set
+- Check collector connectivity: `curl http://localhost:4317`
+- Review Open WebUI logs for OTLP export errors
+
+**High overhead:**
+- Reduce sampling rate using `OTEL_TRACES_SAMPLER_ARG`
+- Disable metrics with `ENABLE_OTEL_METRICS=false` if not needed
+
+**Authentication issues:**
+- Set `OTEL_BASIC_AUTH_USERNAME` and `OTEL_BASIC_AUTH_PASSWORD` for authenticated collectors
+- Verify TLS settings with `OTEL_EXPORTER_OTLP_INSECURE`
+
+## 🌟 Best Practices
+
+1. **Start Simple:** Use the provided `docker-compose.otel.yaml` for initial setup
+2. **Monitor Resource Usage:** Track CPU and memory impact of telemetry
+3. **Adjust Sampling:** Reduce sampling in high-traffic production environments
+4. **Custom Dashboards:** Create application-specific dashboards for your use cases
+5. **Alert Setup:** Configure alerts for error rates and response time thresholds
+
+---
diff --git a/docs/getting-started/env-configuration.md b/docs/getting-started/env-configuration.md
index a208651a..73f7d560 100644
--- a/docs/getting-started/env-configuration.md
+++ b/docs/getting-started/env-configuration.md
@@ -952,7 +952,7 @@ directly. Ensure that no users are present in the database if you intend to turn
 :::info

-When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the WEBUI_SECRET_KEY value is the same across all instances in order to enable users to continue working if a node is recycled or their session is transferred to a different node. Without it, they will need to sign in again each time the underlying node changes.
+When deploying Open WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the WEBUI_SECRET_KEY value is the same across all instances in order to enable users to continue working if a node is recycled or their session is transferred to a different node. Without it, they will need to sign in again each time the underlying node changes.

 :::

@@ -965,16 +965,20 @@ When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, y
 :::info

 **Disabled when enabled:**
+
 - Automatic version update checks
 - Downloads of embedding models from Hugging Face Hub
   - If you did not download an embedding model prior to activating `OFFLINE_MODE` any RAG, web search and document analysis functionality may not work properly
 - Update notifications in the UI

 **Still functional:**
+
 - External LLM API connections (OpenAI, etc.)
 - OAuth authentication providers
 - Web search and RAG with external APIs

+Read more about `offline mode` in this [guide](/docs/tutorials/offline-mode.md).
+
 :::

 #### `RESET_CONFIG_ON_START`
@@ -2974,6 +2978,13 @@ If `OAUTH_PICTURE_CLAIM` is set to `''` (empty string), then the OAuth picture c
 - Description: Enables or disables enforced temporary chats for users.
 - Persistence: This environment variable is a `PersistentConfig` variable.

+#### `USER_PERMISSIONS_CHAT_SYSTEM_PROMPT`
+
+- Type: `str`
+- Default: `True`
+- Description: Allows or disallows users to set a custom system prompt for all of their chats.
+- Persistence: This environment variable is a `PersistentConfig` variable.
+
 ### Feature Permissions

 #### `USER_PERMISSIONS_FEATURES_DIRECT_TOOL_SERVERS`
@@ -3173,6 +3184,8 @@ These variables are not specific to Open WebUI but can still be valuable in cert
 Supports SQLite and Postgres. Changing the URL does not migrate data between databases.
 Documentation on the URL scheme is available [here](https://docs.sqlalchemy.org/en/20/core/engines.html#database-urls).

+If your database password contains special characters, please ensure they are properly URL-encoded. For example, a password like `p@ssword` should be encoded as `p%40ssword`.
+
 :::

 #### `DATABASE_SCHEMA`
@@ -3184,8 +3197,8 @@ Documentation on the URL scheme is available available [here](https://docs.sqlal
 #### `DATABASE_POOL_SIZE`

 - Type: `int`
-- Default: `0`
-- Description: Specifies the size of the database pool. A value of `0` disables pooling.
+- Default: `None`
+- Description: Specifies the pooling strategy and size of the database pool. By default, SQLAlchemy automatically chooses the proper pooling strategy for the selected database connection. A value of `0` disables pooling. A value larger than `0` sets the pooling strategy to `QueuePool` and the pool size accordingly.
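To illustrate the URL-encoding note for the database password above, here is a minimal Python sketch using the standard library's `urllib.parse.quote`. The helper name `build_database_url` and the user, host, port, and database values are hypothetical placeholders; only the `p@ssword` to `p%40ssword` example comes from the text.

```python
from urllib.parse import quote


def build_database_url(user: str, password: str, host: str, port: int, name: str) -> str:
    """Build a Postgres-style URL with a percent-encoded password (hypothetical helper)."""
    # safe="" also encodes "/" so that no reserved character is left
    # unescaped inside the password part of the URL.
    encoded_password = quote(password, safe="")
    return f"postgresql://{user}:{encoded_password}@{host}:{port}/{name}"


if __name__ == "__main__":
    # All connection values below are placeholders for illustration.
    print(build_database_url("webui", "p@ssword", "localhost", 5432, "openwebui"))
```

Encoding only the password keeps the rest of the URL readable, and the same approach should apply to a username that contains reserved characters.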
#### `DATABASE_POOL_MAX_OVERFLOW` @@ -3229,11 +3242,12 @@ More information about this setting can be found [here](https://docs.sqlalchemy. - Type: `str` - Example: `redis://localhost:6379/0` -- Description: Specifies the URL of the Redis instance for the app-state. +- Example with TLS: `rediss://localhost:6379/0` +- Description: Specifies the URL of the Redis instance for the app state. :::info -When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the REDIS_URL value is set. Without it, session, persistency and consistency issues in the app-state will occur as the workers would be unable to communicate. +When deploying Open WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the REDIS_URL value is set. Without it, session, persistency and consistency issues in the app state will occur as the workers would be unable to communicate. ::: @@ -3256,7 +3270,7 @@ When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, y :::info -When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the ENABLE_WEBSOCKET_SUPPORT value is set. Without it, websocket consistency and persistency issues will occur. +When deploying Open WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the ENABLE_WEBSOCKET_SUPPORT value is set. Without it, websocket consistency and persistency issues will occur. ::: @@ -3268,7 +3282,7 @@ When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, y :::info -When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the WEBSOCKET_MANAGER value is set and a key-value NoSQL database like Redis is used. Without it, websocket consistency and persistency issues will occur. 
+When deploying Open WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the WEBSOCKET_MANAGER value is set and a key-value NoSQL database like Redis is used. Without it, websocket consistency and persistency issues will occur. ::: @@ -3280,7 +3294,7 @@ When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, y :::info -When deploying Open-WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the WEBSOCKET_REDIS_URL value is set and a key-value NoSQL database like Redis is used. Without it, websocket consistency and persistency issues will occur. +When deploying Open WebUI in a multi-node/worker cluster with a load balancer, you must ensure that the WEBSOCKET_REDIS_URL value is set and a key-value NoSQL database like Redis is used. Without it, websocket consistency and persistency issues will occur. ::: diff --git a/docs/getting-started/quick-start/tab-docker/DockerCompose.md b/docs/getting-started/quick-start/tab-docker/DockerCompose.md index 99bc092e..a3765c21 100644 --- a/docs/getting-started/quick-start/tab-docker/DockerCompose.md +++ b/docs/getting-started/quick-start/tab-docker/DockerCompose.md @@ -13,7 +13,6 @@ Docker Compose requires an additional package, `docker-compose-v2`. Here is an example configuration file for setting up Open WebUI with Docker Compose: ```yaml -version: '3' services: openwebui: image: ghcr.io/open-webui/open-webui:main diff --git a/docs/intro.mdx b/docs/intro.mdx index d427cabe..e856d9ce 100644 --- a/docs/intro.mdx +++ b/docs/intro.mdx @@ -13,6 +13,9 @@ import { SponsorList } from "@site/src/components/SponsorList"; **Open WebUI is an [extensible](https://docs.openwebui.com/features/plugin/), feature-rich, and user-friendly self-hosted AI platform designed to operate entirely offline.** It supports various LLM runners like **Ollama** and **OpenAI-compatible APIs**, with **built-in inference engine** for RAG, making it a **powerful AI deployment solution**. 
+Passionate about open-source AI? [Join our team →](https://careers.openwebui.com/) + +    @@ -23,6 +26,10 @@ import { SponsorList } from "@site/src/components/SponsorList"; [](https://discord.gg/5rJgQTnV4s) [](https://github.com/sponsors/tjbck) + + + +  :::tip diff --git a/docs/license.mdx b/docs/license.mdx index c8de7721..4a0dcb4c 100644 --- a/docs/license.mdx +++ b/docs/license.mdx @@ -29,6 +29,8 @@ We take your trust seriously. We want Open WebUI to stay **empowering and access ## Open WebUI License: Explained + + **Effective with v0.6.6 (April 19, 2025):** Open WebUI’s license is now: @@ -43,6 +45,10 @@ Open WebUI’s license is now: - **All code submitted/merged to the codebase up to and including release v0.6.5 remains BSD-3 licensed** (no branding requirement applies for that legacy code). - **CLA required for new contributions** after v0.6.5 (v0.6.6+) under the updated license. +:::tip +**TL;DR: Want to use Open WebUI for free? Just keep the branding.** +::: + This is not legal advice—refer to the full [LICENSE](https://github.com/open-webui/open-webui/blob/main/LICENSE) for exact language. --- @@ -184,15 +190,37 @@ Anything pre-0.6.6 is pure BSD-3—**these branding limits didn’t exist**. The Otherwise, you must retain branding and clearly say your fork isn't the official version. -### **10. I’m an academic/non-profit researcher. Can I customize or remove branding for a research study?** +### **10. I’m an individual academic/non-profit researcher. Can I customize or remove branding for a research study?** -**Absolutely—academic research matters to us!** If you are a non-profit or academic institution running a research study and require branding changes (removal, customization, or white-labeling) for study purposes, **please email us at [hello@openwebui.com](mailto:hello@openwebui.com) with details about your project**. We review these requests on a case-by-case basis. 
We’re committed to supporting research and education, and _in almost all cases, we gladly grant permission for branding customizations or exemptions to facilitate your academic study—just ask!_ -Please ensure your request includes your institution, the research purpose, and expected duration/scale of your deployment. We’re here to help you advance open, reproducible science! +:::info +Please note: _This exemption is intended **exclusively for research studies**, not for general-purpose or ongoing institutional use._ +::: -### **TL;DR: Want to use Open WebUI for free? Just keep the branding.** +**Absolutely—academic research matters to us!** If you’re a researcher at a non-profit or academic institution conducting a **specific, time-limited research project** (for example: a user study, clinical trial, or classroom experiment), you may request permission to **remove or customize Open WebUI branding** for the duration of your study. **This is intended for single, well-defined research projects**—not for regular, day-to-day platform use across a department or lab. -- **No hidden costs, no trickery.** -- **If you want to remove or rebrand, let's talk—contributions and partnerships welcomed.** +To apply: +Please email us at [research-study@openwebui.com](mailto:research-study@openwebui.com) and use the subject line: +`Research Exemption Request – [Your Institution]` +- Your institution/department +- The purpose and description of your research study +- The planned start/end dates or study duration +- The expected scale (example: number of participants, research group size) + +We review requests individually and, in almost all cases, will grant permission for branding changes to support your research. 
+ +**Example approved use cases:** +- Conducting a one-quarter psychology experiment involving students +- Running a clinical user acceptance study within a hospital for five weeks +- Field-testing model interaction in a history course for one semester + +**Not eligible:** +- Removing branding for ongoing departmental use, internal tooling, or public university portals +- “White-labeling” for all organization users beyond a research study scope + +We’re happy to help make Open WebUI accessible for your research—just ask! + + +--- #### Example Fork Branding Disclosure @@ -210,3 +238,61 @@ If you are a business that needs private or custom branding, advanced white-labe **Contact [sales@openwebui.com](mailto:sales@openwebui.com) for more information about commercial options.** + +--- + +## Branding, Attribution, and Customization Guidelines: What’s Allowed (and What’s Not) + +We want to make it as straightforward as possible for everyone, users, businesses, integrators, and contributors, to follow Open WebUI’s fair-use branding policy. **Transparency is key.** Here’s a simple, practical guide: + +- The Open WebUI NAME and LOGO must remain in their original, prominent locations throughout the application and user interface, including, but not limited to, the site header, sidebar, login screens, about page, and any other area where Open WebUI branding appears by default. +- ALL visual “Open WebUI” elements, logos, wordmarks, “About”/“Help” links, and any system/credits screens, must remain unmodified and fully visible. +- You must NOT obscure, overlay, or minimize Open WebUI branding in any way that de-emphasizes its identity, or suggests your build is the “official” one when it isn’t. +- Side-by-side branding: If, with approval, you add your own logo next to “Open WebUI,” the Open WebUI logo must always appear first (left or top), be fully visible, and your logo must be smaller and secondary. This is rarely allowed without a commercial license. 
+
+### **What is (Typically) ALLOWED Without a License**
+
+| Scenario | Allowed Without License? | Guidance / Notes |
+|---|:---:|---|
+| **Use Open WebUI “as is” with branding** | ✅ | Just use the software as provided; no changes to logo, name, or identity. Easiest option! |
+| **Add custom features, UI extensions, plugins while keeping Open WebUI branding fully intact** | ✅ | Absolutely encouraged! You can add new buttons, workflows, extensions, etc.—just don’t remove or alter Open WebUI’s own name and logo. |
+| **Deploy to any number of internal users within your own organization (with all official branding kept)** | ✅ | Scale however you like! No per-user limits for internal/staff/company-wide use as long as Open WebUI branding is always present and prominent. |
+| **Integrate Open WebUI with internal tools, scripts, or enterprise authentication** | ✅ | SSO, LDAP, custom backends—all welcomed! Integration is wide open, provided branding remains. |
+| **Add a small, unobtrusive info banner at top, bottom, or side (e.g., “Managed by YourOrg”)** | ✅ | Banner must be visually secondary/subordinate (footer, bottom-right, etc.), not obscuring or distracting from Open WebUI’s branding. |
+| **Add a legal or company link/notice in the About modal/dialog** | ✅ | Feel free to provide an additional legal link, team contact, or credits section—just never replace or remove Open WebUI credits. |
+| **Add monitoring, analytics, or custom backend features** | ✅ | Backend integrations and observability are totally permitted. No restrictions if branding/UI is untouched. |
+| **Create and maintain your own public fork (with all branding, plus “fork disclosure” notice)** | ✅ | As long as you keep branding as required and clearly state it’s “a fork of Open WebUI, not official.” |
+| **Link to your company/project/github in the footer or About box** | ✅ | No problem if subordinate—must not give impression of replacing or rebranding the app. |
+| **For deployments ≤50 users/rolling 30-day period** | ✅ | “Small scale” deployments may remove Open WebUI branding, but only under the explicit user count threshold—see policy for details. |
+
+
+### **What is NOT ALLOWED Without an Enterprise/Proprietary License**
+
+| Scenario | Allowed Without License? | Why NOT Allowed/Details |
+|--------------------------------------|:------------------------:|----------------------------------------------------------------------|
+| Removing **ANY** Open WebUI logo/name from the UI | ❌ | This is a clear violation, even if you add “Powered by Open WebUI” somewhere else. |
+| Modifying/changing the Open WebUI logo or color scheme to your branding | ❌ | The identity must remain clear and intact. No recoloring or swaps. |
+| Adding your logo side-by-side/equal in size or priority | ❌ | Your logo must NEVER be bigger or more prominent than Open WebUI. If you place them adjacent, we must approve it, and Open WebUI always comes first, both visually and hierarchically. |
+| Calling your fork “Open WebUI Enterprise” or misleadingly similar | ❌ | Don’t use our project’s name or branding in a way that could confuse users about source or official status. |
+| Major UI modifications that move, minimize, or obscure original Open WebUI branding | ❌ | Moving the logo, shrinking it, hiding it, or pushing it offscreen violates the terms. |
+| White-labelling for your business/SaaS | ❌ | You MUST get an enterprise or proprietary license before removing our branding for mass redistribution or resale. |
+| “Powered by Open WebUI” credit ONLY (if all native branding is hidden/removed) | ❌ | This is NOT enough and does not meet license terms. |
+
+### **How to Add Custom Branding Responsibly**
+
+- **DO NOT remove, shrink, relocate, or recolor Open WebUI branding.**
+- **If allowed (within limits!), your addition must appear in a secondary/footer area**—always clearly subordinate in visual hierarchy to Open WebUI’s own logo and name, and never replacing or overshadowing our original branding.
+- **If you want to add a “Managed by Company X” banner**, it must remain clearly distinct, unobtrusive, and subordinate to Open WebUI’s own visual hierarchy.
+- **Don’t “co-brand” or sandwich your logo next to ours** without explicit written approval. For nearly all non-trivial cases, you will need a commercial or custom license.
+- **Always show us a screenshot before launch!** If in doubt, email [hello@openwebui.com](mailto:hello@openwebui.com)—we’re happy to review and advise.
+
+### **Still Unsure?**
+
+If you’re ever unsure, please feel free to contact us and share your planned deployment (even just a screenshot or Figma mockup) before launching. Our goal is to work with everyone and support honest, respectful uses—we’re always happy to offer guidance or help you with the right license if needed. Openness and fairness for all is important to us, so let’s collaborate to make it work for everyone.
+
+---
+
+*This page is provided for informational purposes only and does not constitute legal advice. For exact and legally binding terms, refer to the full text of the [LICENSE](https://github.com/open-webui/open-webui/blob/main/LICENSE).*
+
+
+**Thank you for respecting the Open WebUI community, our contributors, and our project’s future.**
\ No newline at end of file
diff --git a/docs/security.mdx b/docs/security.mdx
new file mode 100644
index 00000000..a8a7ee79
--- /dev/null
+++ b/docs/security.mdx
@@ -0,0 +1,68 @@
+---
+sidebar_position: 1500
+title: "🔒 Security Policy"
+---
+
+import { TopBanners } from "@site/src/components/TopBanners";
+

+

+

+

+## Setting up Open WebUI to use `Chatterbox TTS API`

+

+We recommend running with the frontend interface so you can upload the audio files for the voices you'd like to use before configuring Open WebUI's settings. If started correctly (see guide above), you can visit `http://localhost:4321` to access the frontend.

+

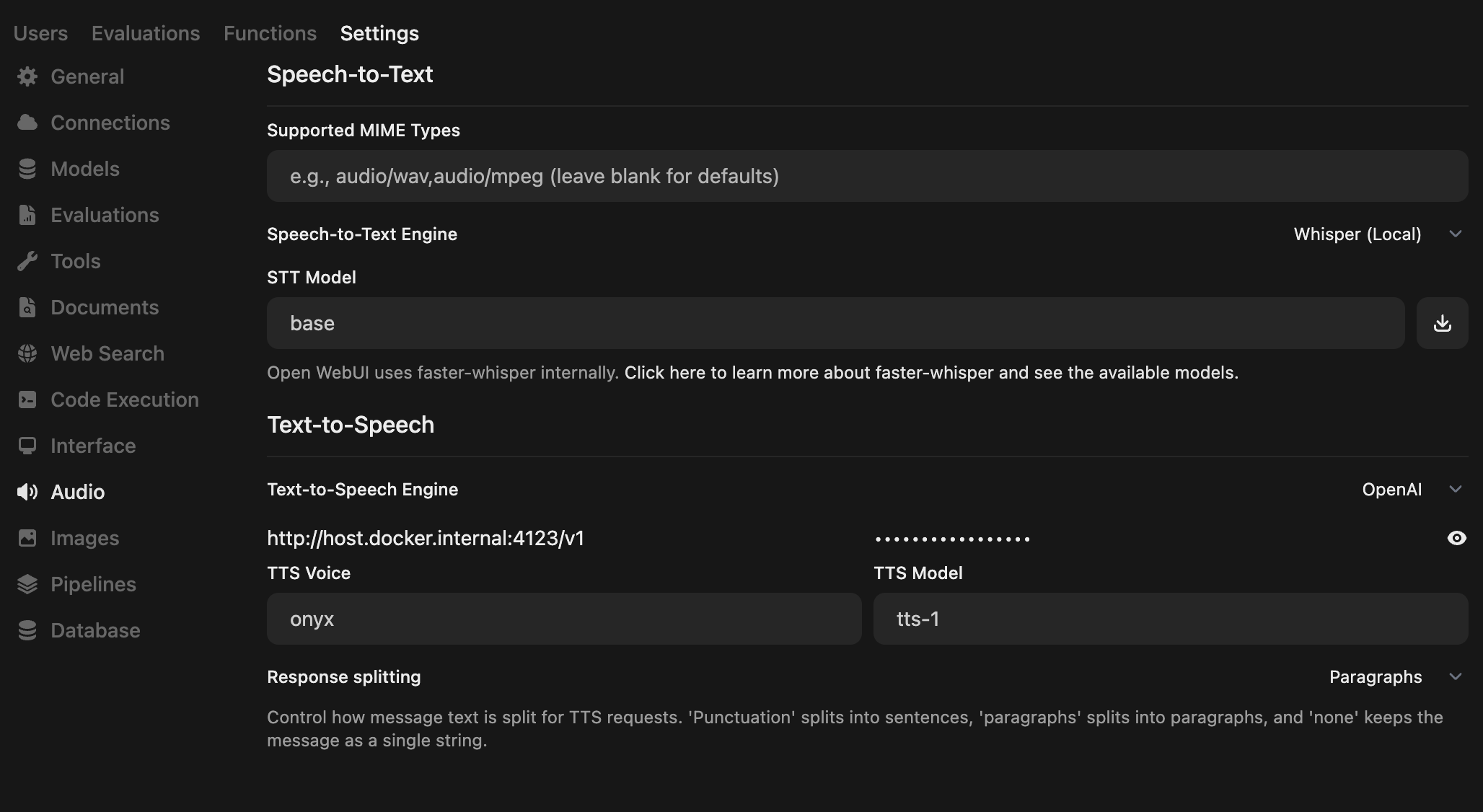

+To use Chatterbox TTS API with Open WebUI, follow these steps:

+

+- Open the Admin Panel and go to `Settings` -> `Audio`

+- Set your TTS Settings to match the following:

+ - Text-to-Speech Engine: OpenAI

+ - API Base URL: `http://localhost:4123/v1` (alternatively, if Open WebUI itself runs in Docker, try `http://host.docker.internal:4123/v1`)

+ - API Key: `none`

+ - TTS Model: `tts-1` or `tts-1-hd`

+ - TTS Voice: Name of the voice you've cloned (can also include aliases, defined in the frontend)

+ - Response splitting: `Paragraphs`

+

+:::info

+The default API key is the string `none` (no API key is required).

+:::
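
Before relying on these settings inside Open WebUI, it can help to send one request straight to the TTS server. The sketch below is a minimal example under stated assumptions: that Chatterbox TTS API serves the OpenAI-style `POST /audio/speech` route under the base URL above and accepts `model`, `input`, and `voice` JSON fields. The voice name `my-voice` and the output path `speech_test.wav` are placeholders, and the actual audio format depends on the server. Check the project's API documentation if the request is rejected.

```python
import json
import urllib.request

# Assumption: the same base URL that was entered in Open WebUI's Audio settings.
API_BASE_URL = "http://localhost:4123/v1"


def build_speech_request(text, voice, base_url=API_BASE_URL,
                         model="tts-1", api_key="none"):
    """Build (but do not send) an OpenAI-style text-to-speech request."""
    payload = {"model": model, "input": text, "voice": voice}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/audio/speech",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # The guide's default key is the literal string "none".
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def synthesize_to_file(text, voice, out_path="speech_test.wav"):
    """Send the request and write the returned audio bytes to out_path.

    out_path is a placeholder name; the server decides the real audio format.
    """
    request = build_speech_request(text, voice)
    with urllib.request.urlopen(request, timeout=120) as response:
        audio = response.read()
    with open(out_path, "wb") as audio_file:
        audio_file.write(audio)
    return out_path
```

Calling `synthesize_to_file("Hello from Open WebUI.", voice="my-voice")` with the server running should leave an audio file on disk. If the call fails with a connection error, the base URL is the first thing to check.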

+

+

+

+

+# Please ⭐️ star the [repo on GitHub](https://github.com/travisvn/chatterbox-tts-api) to support development

+

+

+## Need help?

+

+Chatterbox can be challenging to get running the first time, and you may want to try different install options if you run into issues with a particular one.

+

+For more information on `chatterbox-tts-api`, you can visit the [GitHub repo](https://github.com/travisvn/chatterbox-tts-api).

+

+- 📖 **Documentation**: See [API Documentation](https://github.com/travisvn/chatterbox-tts-api/blob/main/docs/API_README.md) and [Docker Guide](https://github.com/travisvn/chatterbox-tts-api/blob/main/docs/DOCKER_README.md)

+- 💬 **Discord**: [Join the Discord for this project](http://chatterboxtts.com/discord)

diff --git a/src/components/Testimonals.tsx b/src/components/Testimonals.tsx

new file mode 100644

index 00000000..5423b7de

--- /dev/null

+++ b/src/components/Testimonals.tsx

@@ -0,0 +1,37 @@

+import { TopBanner } from "@site/src/components/Sponsors/TopBanner";

+import { useEffect, useState } from "react";

+

+export const Testimonals = ({bannerClassName = 'h-18', label= true, description= true, mobile = true }) => {

+ const items = [

+ {

+ imgSrc: "https://avatars.githubusercontent.com/u/5860369?v=4",

+ url: "https://github.com/Ithanil/",

+ name: "Jan Kessler, AI Architect",

+ company: "JGU Mainz",

+ content:

+ "Deploying a self-hosted AI chat stack for a large university like the Johannes Gutenberg University Mainz demands scalable and seamlessly integrable solutions. As the AI Architect at the university's Data Center, I chose Open WebUI as our chat frontend, impressed by its out-of-the-box readiness for enterprise environments and its rapidly paced, community-driven development. Now, our fully open-source stack – comprising LLMs, proxy/loadbalancer, and frontend – is successfully serving our user base of 30,000+ students and 5,000+ employees, garnering very positive feedback. Open WebUI’s user-centric design, rich feature set, and adaptability have solidified it as an outstanding choice for our institution.",

+ },

+ ];

+

+

+ return (

+ <>

+ {items.map((item, index) => (

+ 🚀 Running with the Frontend Interface

+
+This project includes an optional React-based web UI. Use Docker Compose profiles to easily opt in or out of the frontend:
+
+### With Docker Compose Profiles
+
+```bash
+# API only (default behavior)
+docker compose -f docker/docker-compose.yml up -d
+
+# API + Frontend + Web UI (with --profile frontend)
+docker compose -f docker/docker-compose.yml --profile frontend up -d
+
+# Or use the convenient helper script for fullstack:
+python start.py fullstack
+
+# Same pattern works with all deployment variants:
+docker compose -f docker/docker-compose.gpu.yml --profile frontend up -d # GPU + Frontend
+docker compose -f docker/docker-compose.uv.yml --profile frontend up -d # uv + Frontend
+docker compose -f docker/docker-compose.cpu.yml --profile frontend up -d # CPU + Frontend
+```
+
+### Local Development
+
+For local development, you can run the API and frontend separately:
+
+```bash
+# Start the API first (follow earlier instructions)
+# Then run the frontend:
+cd frontend && npm install && npm run dev
+```
+
+Click the link provided from Vite to access the web UI.
+
+### Build for Production
+
+Build the frontend for production deployment:
+
+```bash
+cd frontend && npm install && npm run build
+```
+
+You can then access it directly from your local file system at `/dist/index.html`.
+
+### Port Configuration
+
+- **API Only**: Accessible at `http://localhost:4123` (direct API access)
+- **With Frontend**: Web UI at `http://localhost:4321`, API requests routed via proxy
+
+The frontend uses a reverse proxy to route requests, so when running with `--profile frontend`, the web interface will be available at `http://localhost:4321` while the API runs behind the proxy.
+
+

+

+

+ ))}

+

+ )

+}

\ No newline at end of file

diff --git a/static/images/tutorial_image_generation_2.png b/static/images/tutorial_image_generation_2.png

new file mode 100644

index 00000000..3b174300

Binary files /dev/null and b/static/images/tutorial_image_generation_2.png differ

diff --git a/static/images/tutorials/otel/dashboards-config-import.png b/static/images/tutorials/otel/dashboards-config-import.png

new file mode 100644

index 00000000..cd5adb25

Binary files /dev/null and b/static/images/tutorials/otel/dashboards-config-import.png differ

diff --git a/static/images/tutorials/otel/explore-prometheus.png b/static/images/tutorials/otel/explore-prometheus.png

new file mode 100644

index 00000000..d435337d

Binary files /dev/null and b/static/images/tutorials/otel/explore-prometheus.png differ

{item.content}

+