` for assistance if your educational email expires.

+Your verification remains valid while your educational email is active. Email [support@dify.ai](mailto:support@dify.ai) for assistance if your educational email expires.

- **I don’t have an educational email. How can I apply for educational verification?**

diff --git a/en/getting-started/install-self-hosted/aa-panel.mdx b/en/getting-started/install-self-hosted/aa-panel.mdx

index 32c826f7..b6f5e981 100644

--- a/en/getting-started/install-self-hosted/aa-panel.mdx

+++ b/en/getting-started/install-self-hosted/aa-panel.mdx

@@ -34,7 +34,7 @@ title: Deploy with aaPanel

2. The first time you will be prompted to install the `Docker` and `Docker Compose` services, click Install Now. If it is already installed, please ignore it.

-3. After the installation is complete, find `Dify` in `One-Click Install` and click `install`

+3. After the installation is complete, find `Dify` in `One-Click Install` and click `install`

4. Configure basic information such as the domain name and ports to complete the installation.

> \[!IMPORTANT]

@@ -48,8 +48,11 @@ title: Deploy with aaPanel

- Allow external access: If you need direct access through `IP+Port`, please check. If you have set up a domain name, please do not check here.

- Port: Default `8088`, can be modified by yourself

+6. After submission, the panel will automatically initialize the application, which will take about `1-3` minutes. It can be accessed after the initialization is completed.

-6. After submission, the panel will automatically initialize the application, which will take about `1-3` minutes. It can be accessed after the initialization is completed. Access administrator initialization page to set up the admin account:

+### Access Dify

+

+Access the administrator initialization page to set up the admin account:

```bash

# If you have set domain

@@ -65,11 +68,6 @@ Dify web interface address:

# If you have set domain

http://yourdomain/

-# If you choose to access through `IP+Port`

-http://your_server_ip```bash

-# If you have set domain

-http://yourdomain/

-

# If you choose to access through `IP+Port`

http://your_server_ip:8088/

-```

\ No newline at end of file

+```

diff --git a/en/getting-started/install-self-hosted/docker-compose.mdx b/en/getting-started/install-self-hosted/docker-compose.mdx

index 1b03c8bc..1b657e75 100644

--- a/en/getting-started/install-self-hosted/docker-compose.mdx

+++ b/en/getting-started/install-self-hosted/docker-compose.mdx

@@ -41,7 +41,7 @@ title: Deploy with Docker Compose

> \[!IMPORTANT]

>

-> Dify 0.6.12 has introduced significant enhancements to Docker Compose deployment, designed to improve your setup and update experience. For more information, read the [README.md](https://github.com/langgenius/dify/blob/main/docker/README.md).

+> Dify 0.6.12 has introduced significant enhancements to Docker Compose deployment, designed to improve your setup and update experience. For more information, read the [README.md](https://github.com/langgenius/dify/blob/main/docker/README).

### Clone Dify

diff --git a/en/getting-started/install-self-hosted/environments.mdx b/en/getting-started/install-self-hosted/environments.mdx

index 4725abe4..276cd25e 100644

--- a/en/getting-started/install-self-hosted/environments.mdx

+++ b/en/getting-started/install-self-hosted/environments.mdx

@@ -413,6 +413,10 @@ Used to store uploaded data set files, team/tenant encryption keys,`https://cons

For example: `http://unstructured:8000/general/v0/general`

+- TOP_K_MAX_VALUE

+

+ The maximum top-k value of RAG, default 10.

+

#### Multi-modal Configuration

- MULTIMODAL_SEND_IMAGE_FORMAT

  The format of the image sent when the multi-modal model is input. The default is `base64`; `url` is optional. The delay of the call in `url` mode will be lower than that in `base64` mode. It is generally recommended to use the more compatible `base64` mode. If configured as `url`, you need to configure `FILES_URL` as an externally accessible address so that the multi-modal model can access the image.

@@ -585,7 +589,7 @@ Used to set the browser policy for session cookies used for identity verificatio

### Chunk Length Configuration

-#### MAXIMUM_CHUNK_TOKEN_LENGTH

+#### INDEXING_MAX_SEGMENTATION_TOKENS_LENGTH

Configuration for document chunk length. It is used to control the size of text segments when processing long documents. Default: 500. Maximum: 4000.
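As a rough illustration of how the renamed variable might be consumed, the sketch below reads `INDEXING_MAX_SEGMENTATION_TOKENS_LENGTH` from the environment, falls back to the documented default of 500, and caps the value at the documented maximum of 4000. The helper name `resolve_chunk_length`, the constant names, and the clamping behavior are assumptions made for this example, not Dify's actual implementation.

```python
import os

# Values taken from the text above; the constant names are hypothetical.
DEFAULT_CHUNK_TOKENS = 500
MAX_CHUNK_TOKENS = 4000


def resolve_chunk_length(env=None):
    """Return the chunk length in tokens, honoring the documented default and maximum.

    Assumption: malformed values fall back to the default and out-of-range
    values are clamped. Dify's real behavior may differ.
    """
    env = os.environ if env is None else env
    raw = env.get("INDEXING_MAX_SEGMENTATION_TOKENS_LENGTH")
    if raw is None:
        return DEFAULT_CHUNK_TOKENS
    try:
        value = int(raw)
    except ValueError:
        return DEFAULT_CHUNK_TOKENS
    # Keep the value within 1..MAX_CHUNK_TOKENS.
    return max(1, min(value, MAX_CHUNK_TOKENS))
```

Passing a plain dictionary instead of `os.environ` makes the helper easy to try out without touching the real environment.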

diff --git a/en/getting-started/install-self-hosted/readme.mdx b/en/getting-started/install-self-hosted/readme.mdx

index b0add896..0ccc1ccd 100644

--- a/en/getting-started/install-self-hosted/readme.mdx

+++ b/en/getting-started/install-self-hosted/readme.mdx

@@ -12,4 +12,4 @@ Dify, an open-source project on [GitHub](https://github.com/langgenius/dify), ca

To maintain code quality, we require all code contributions - even from those with direct commit access - to be submitted as pull requests. These must be reviewed and approved by the core development team before merging.

-We welcome contributions from everyone! If you're interested in helping, please check our [Contribution Guide](https://github.com/langgenius/dify/blob/main/CONTRIBUTING.md) for more information on getting started.

+We welcome contributions from everyone! If you're interested in helping, please check our [Contribution Guide](https://github.com/langgenius/dify/blob/main/CONTRIBUTING) for more information on getting started.

diff --git a/en/getting-started/install-self-hosted/start-the-frontend-docker-container.mdx b/en/getting-started/install-self-hosted/start-the-frontend-docker-container.mdx

index 9b9625e3..c9336cb0 100644

--- a/en/getting-started/install-self-hosted/start-the-frontend-docker-container.mdx

+++ b/en/getting-started/install-self-hosted/start-the-frontend-docker-container.mdx

@@ -1,8 +1,7 @@

---

-title: Start the frontend Docker container separately

+title: Start Frontend Docker Container Separately

---

-

When developing the backend separately, you may only need to start the backend service from source code without building and launching the frontend locally. In this case, you can directly start the frontend service by pulling the Docker image and running the container. Here are the specific steps:

#### Pull the Docker image for the frontend service from DockerHub:

diff --git a/en/getting-started/readme/features-and-specifications.mdx b/en/getting-started/readme/features-and-specifications.mdx

index 9fdf9854..deaea297 100644

--- a/en/getting-started/readme/features-and-specifications.mdx

+++ b/en/getting-started/readme/features-and-specifications.mdx

@@ -22,7 +22,7 @@ We adopt transparent policies around product specifications to ensure decisions

| Open Source License |

- Apache License 2.0 with commercial licensing |

+ [Apache License 2.0 with commercial licensing](../../policies/open-source) |

| Official R&D Team |

@@ -30,7 +30,7 @@ We adopt transparent policies around product specifications to ensure decisions

| Community Contributors |

- Over 290 people (As of Q2 2024) |

+ Over [290](https://ossinsight.io/analyze/langgenius/dify#overview) people (As of Q2 2024) |

| Backend Technology |

diff --git a/en/guides/application-orchestrate/agent.mdx b/en/guides/application-orchestrate/agent.mdx

index 329c5cdc..7fc1480a 100644

--- a/en/guides/application-orchestrate/agent.mdx

+++ b/en/guides/application-orchestrate/agent.mdx

@@ -50,6 +50,14 @@ You can set up a conversation opener and initial questions for your Agent Assist

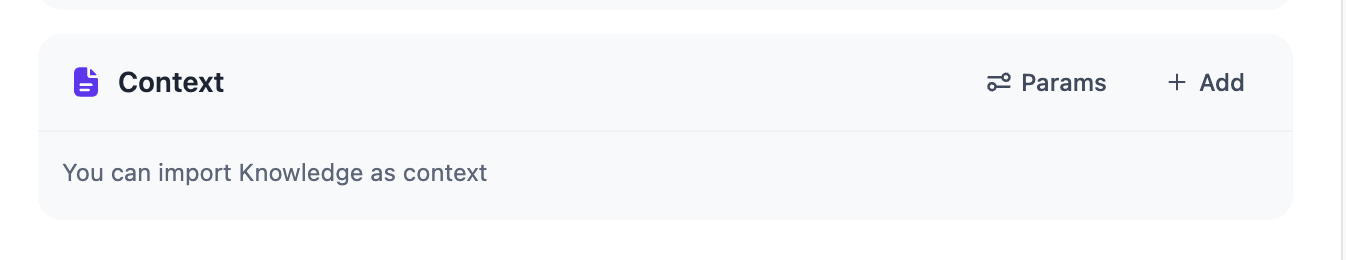

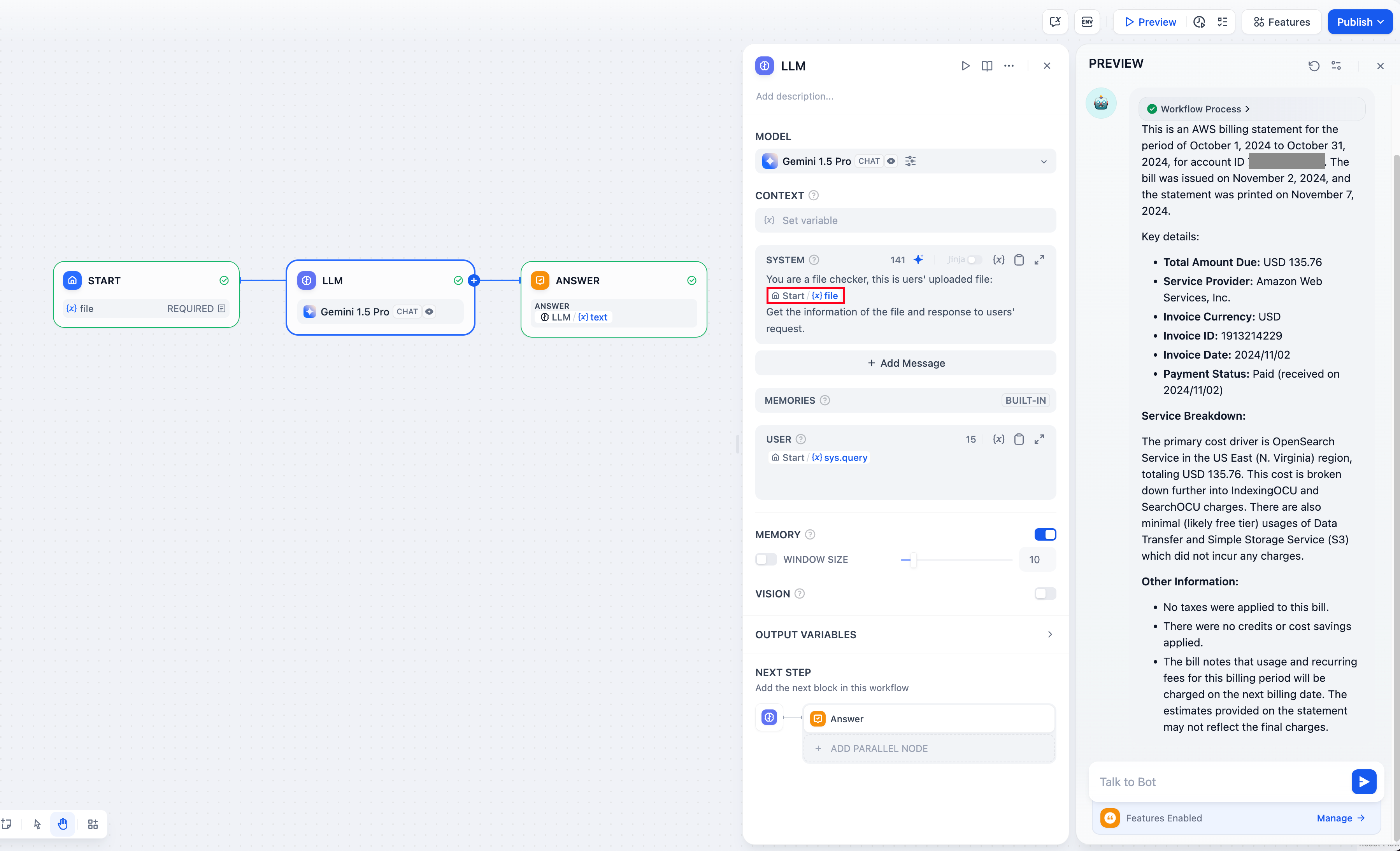

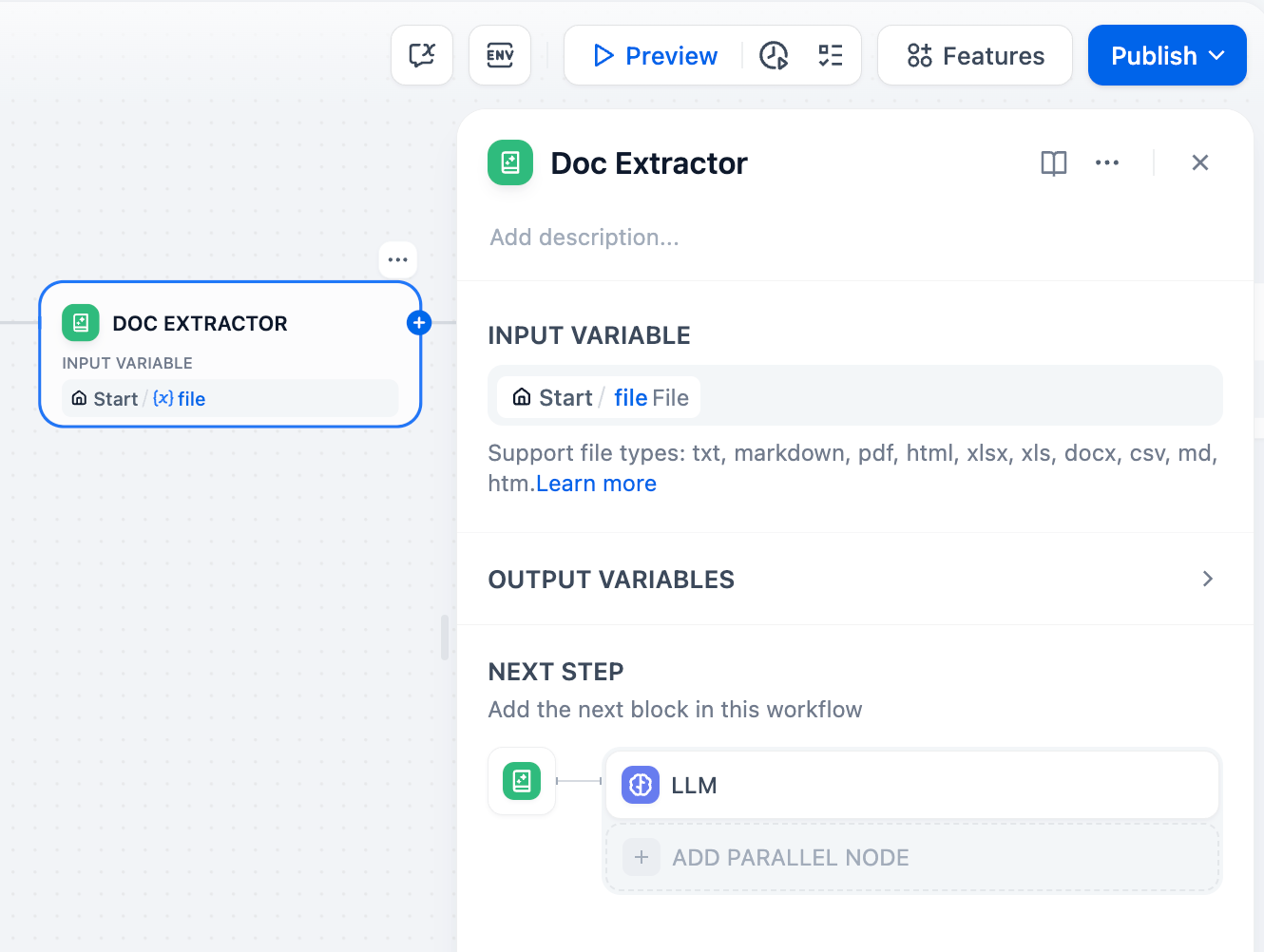

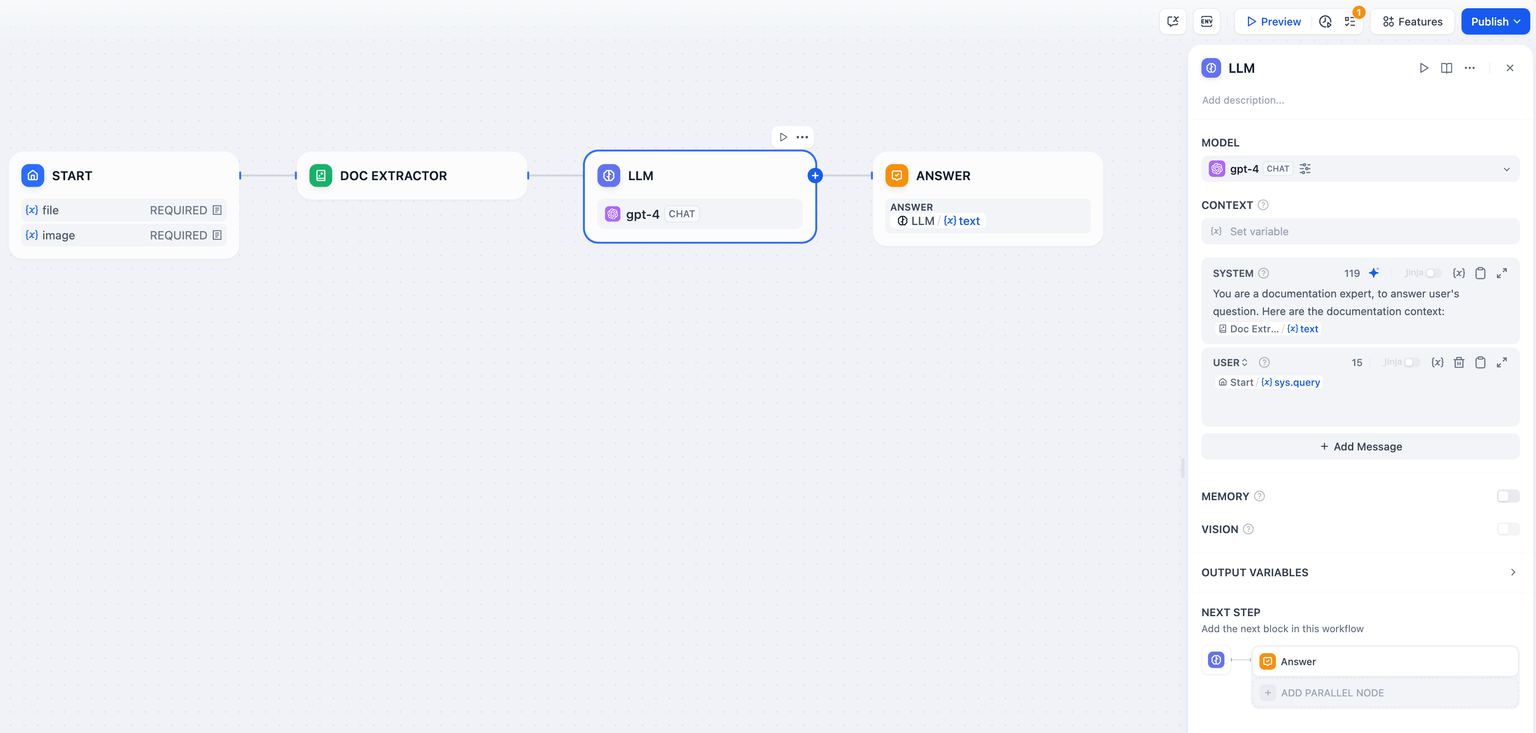

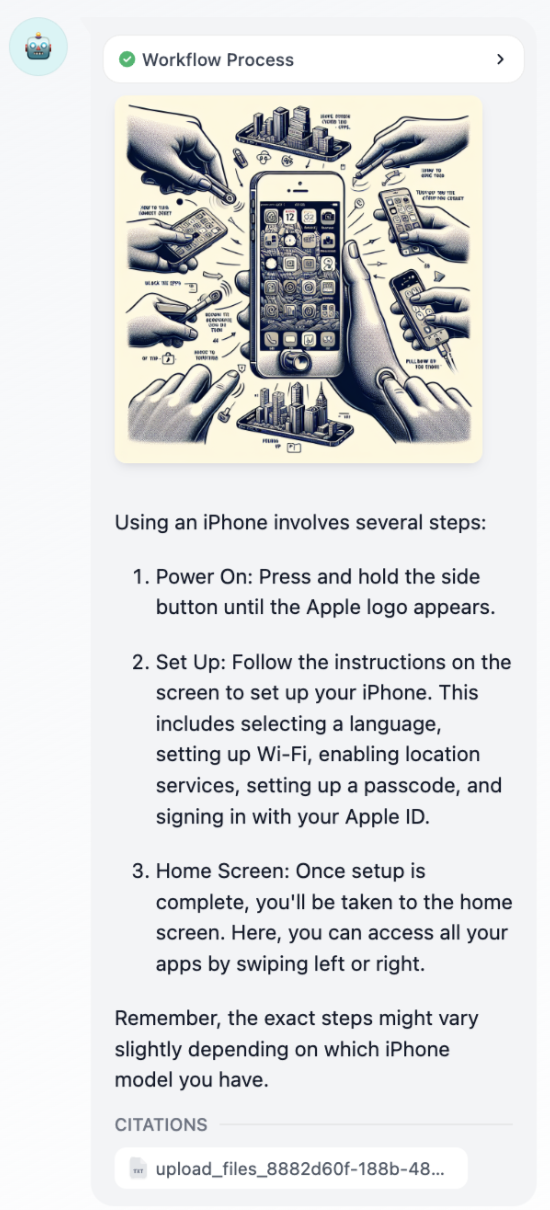

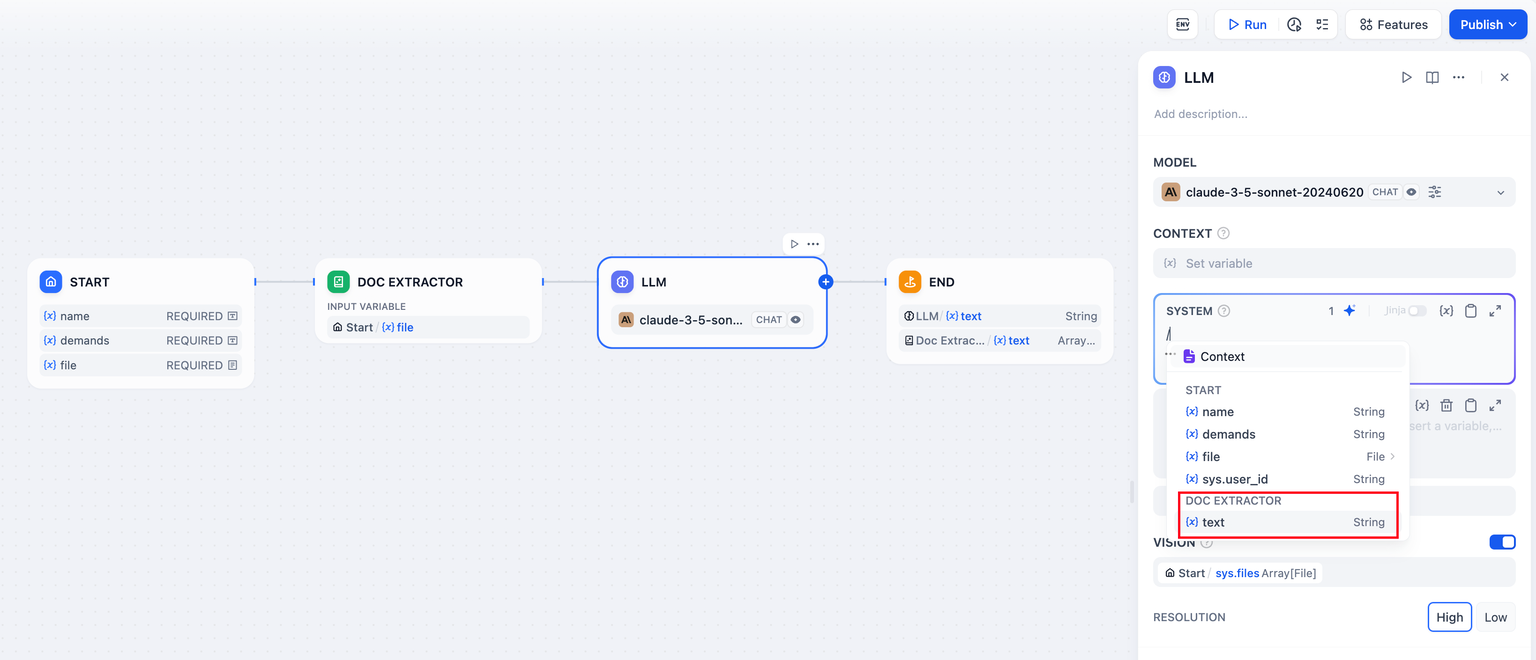

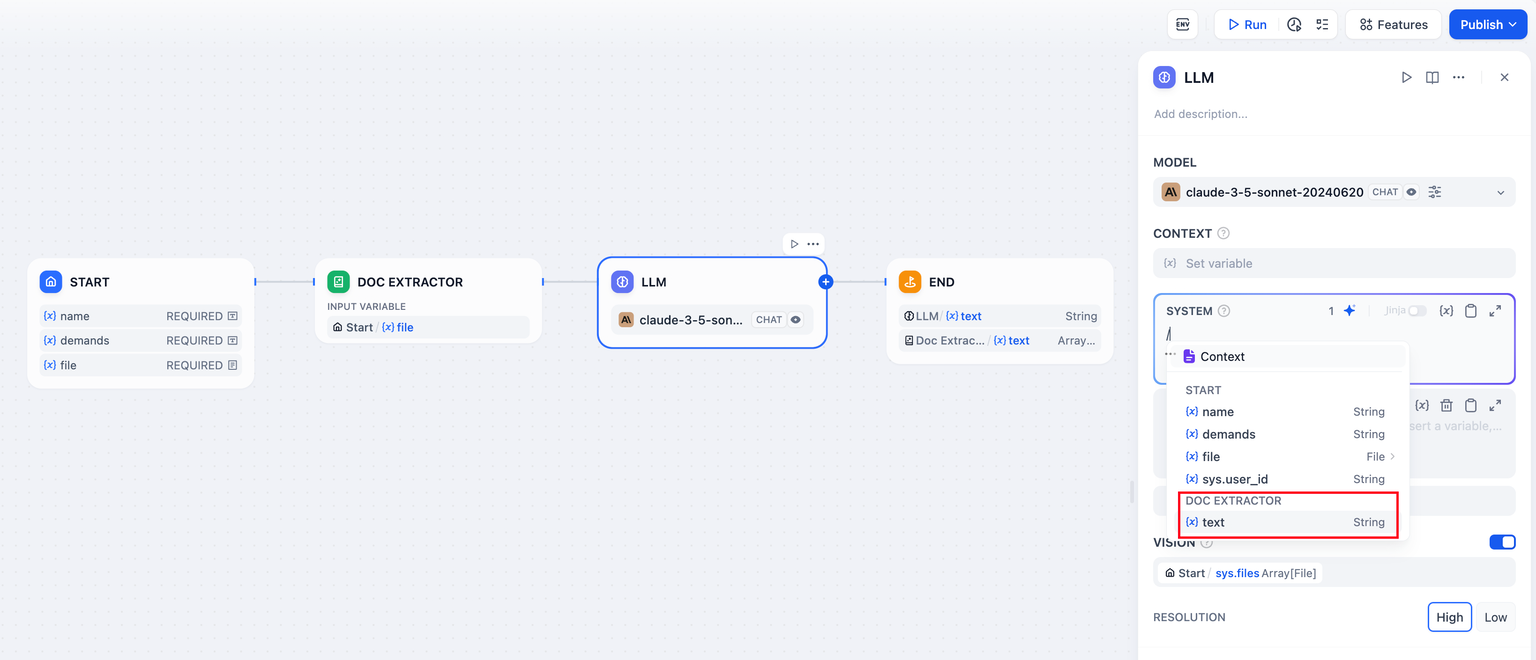

+## Uploading Documentation File

+

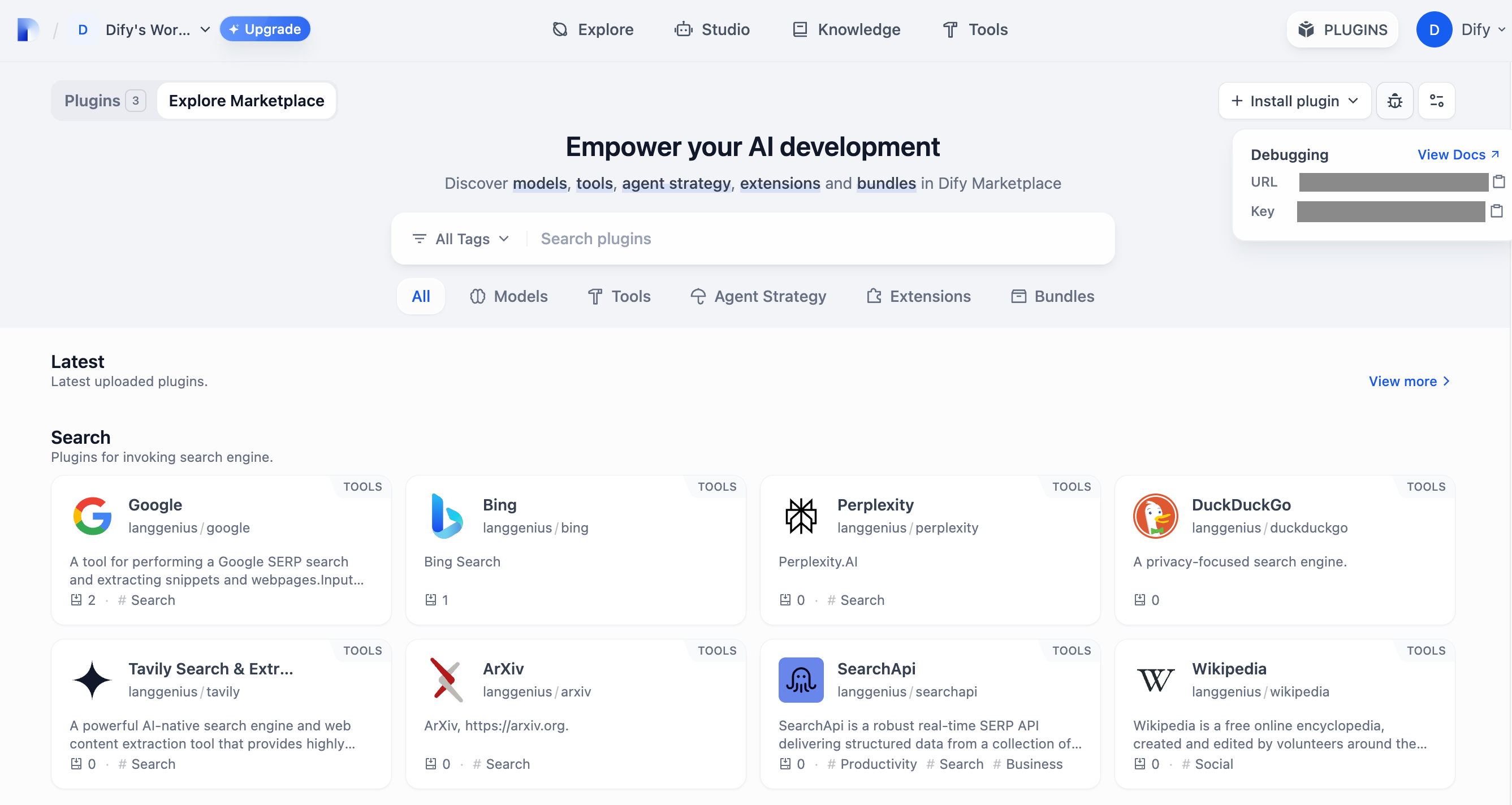

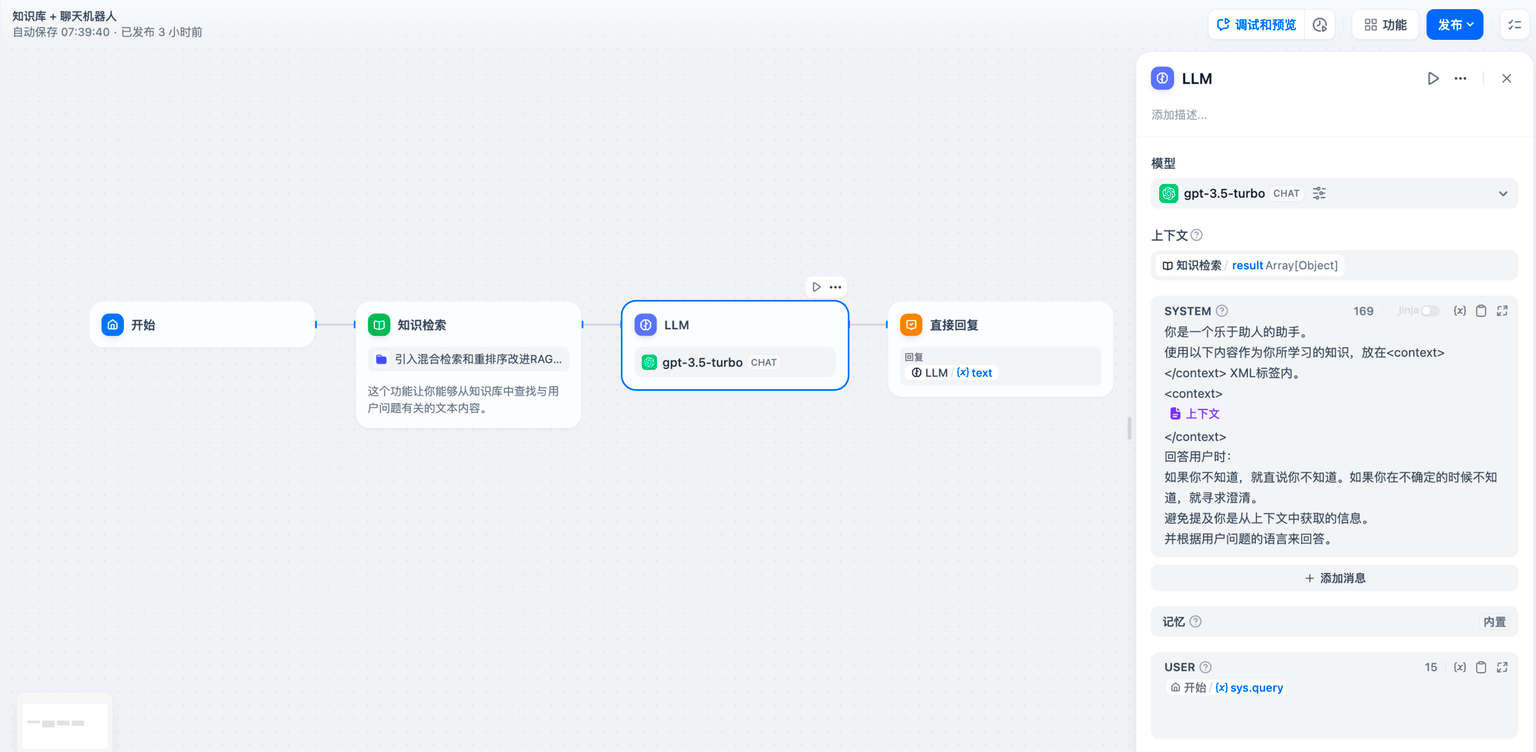

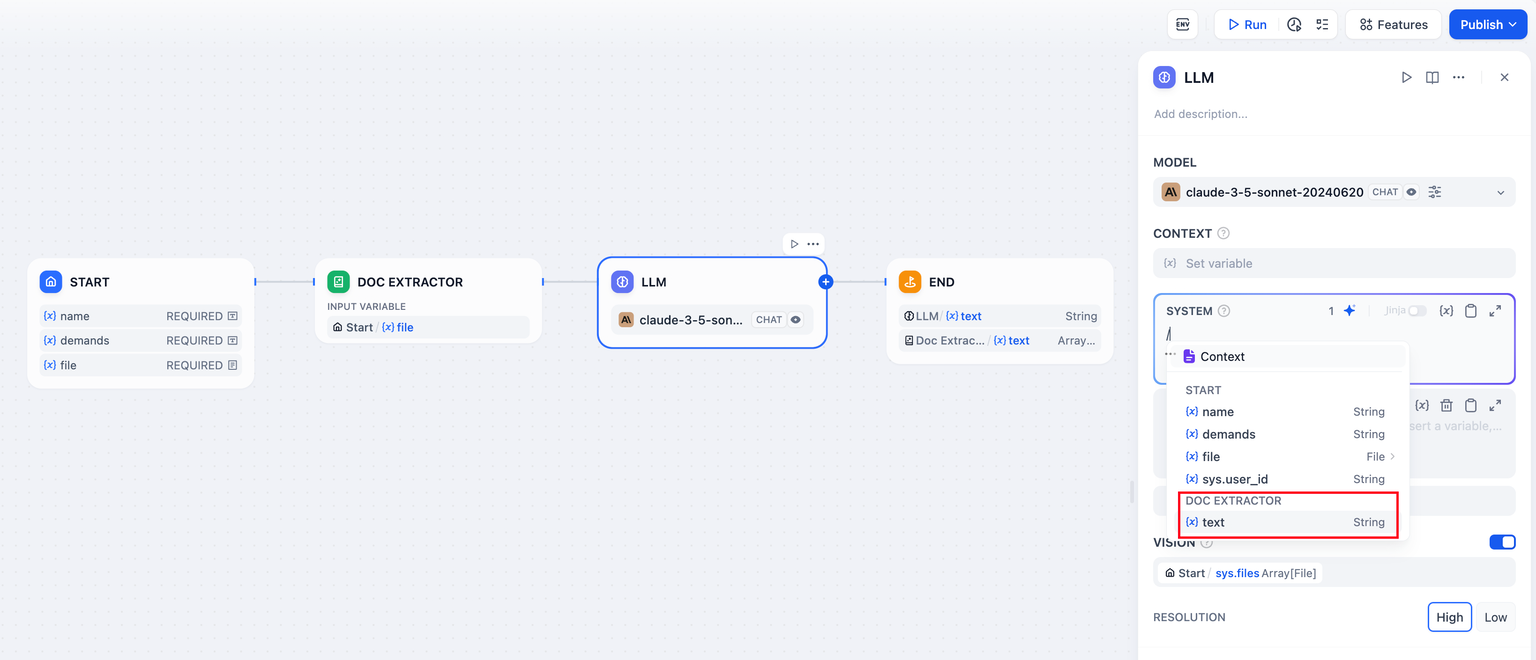

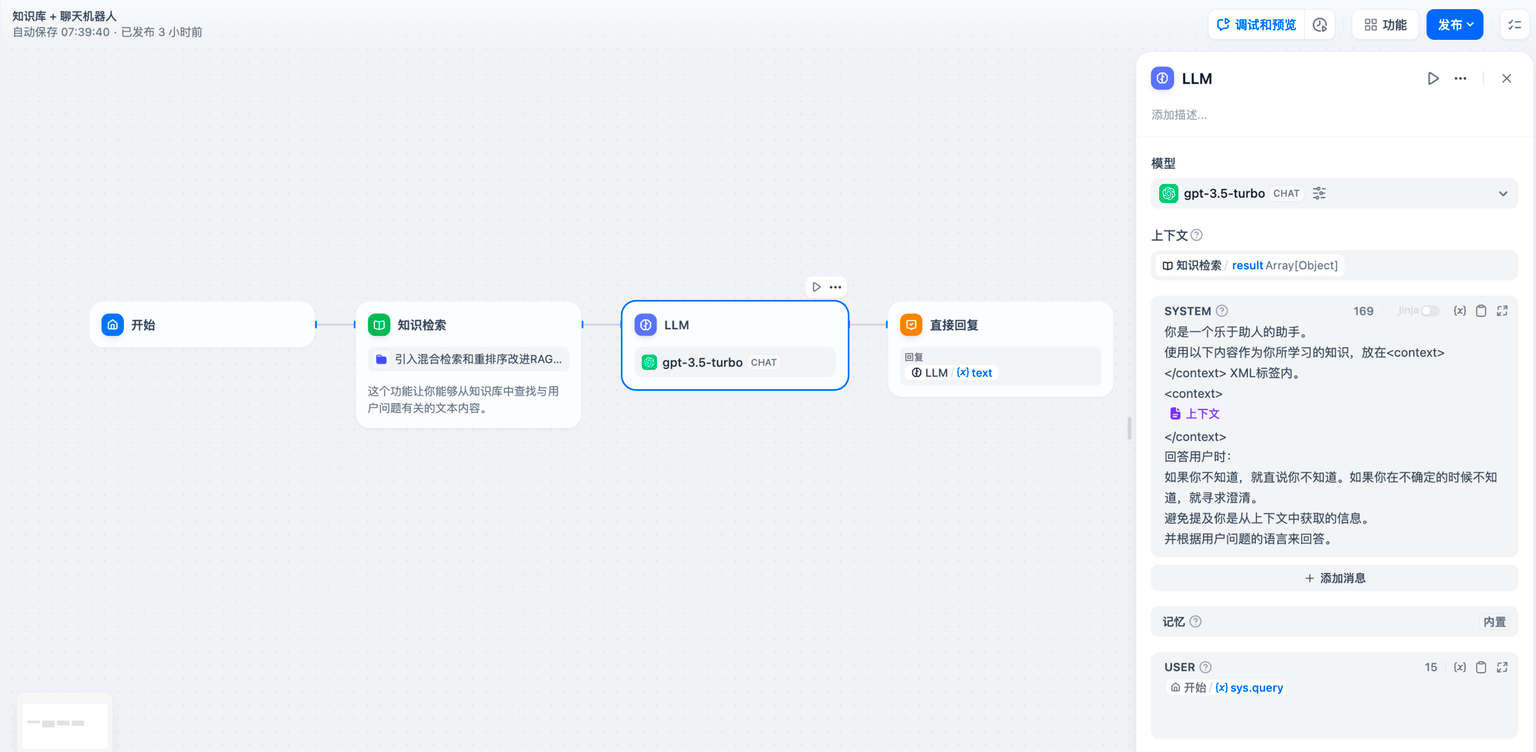

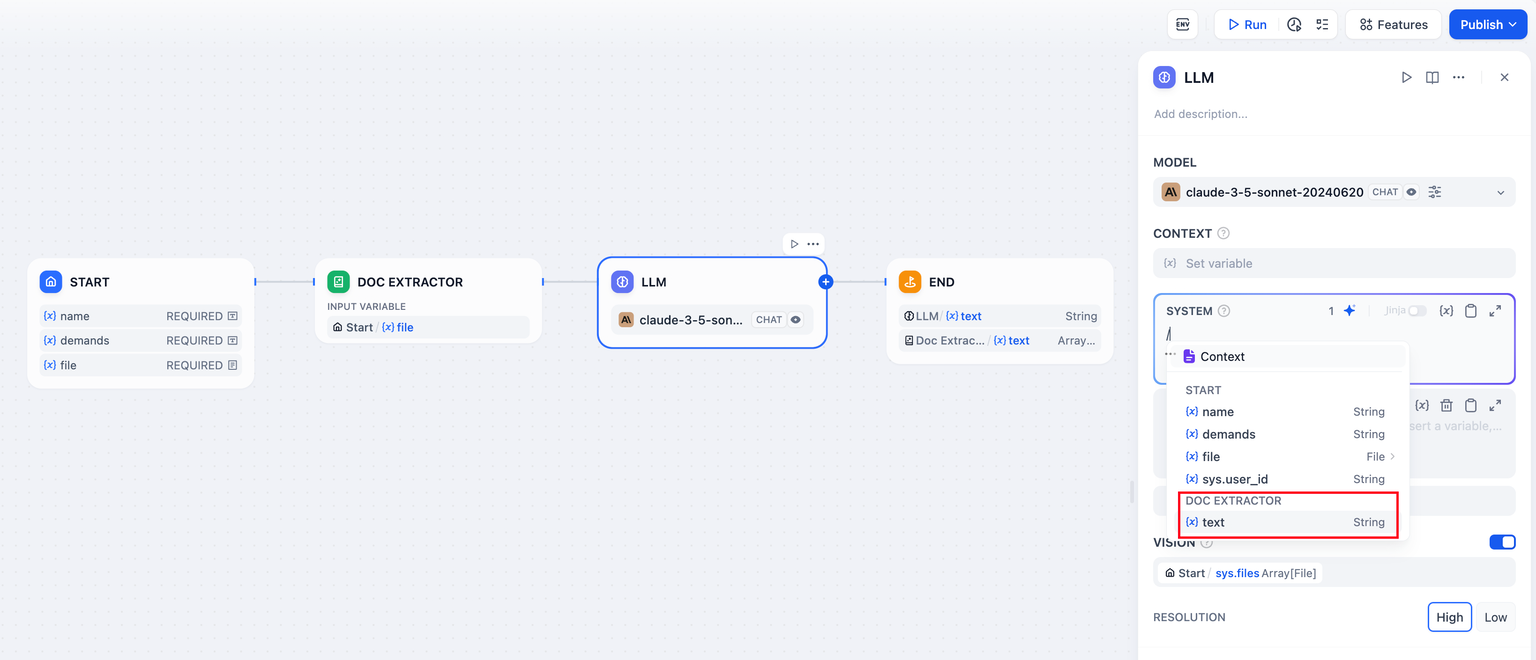

+Some LLMs now natively support file processing, such as [Claude 3.5 Sonnet](https://docs.anthropic.com/en/docs/build-with-claude/pdf-support) and [Gemini 1.5 Pro](https://ai.google.dev/api/files). You can check the LLMs' websites for details on their file upload capabilities.

+

+Select an LLM that supports file reading and enable the "Documentation" feature. This enables the Chatbot to recognize files without complex configurations.

+

+

+

## Debugging and Preview

After orchestrating the intelligent assistant, you can debug and preview it before publishing as an application to verify the assistant's task completion effectiveness.

diff --git a/en/guides/application-orchestrate/chatbot-application.mdx b/en/guides/application-orchestrate/chatbot-application.mdx

index 00077365..70da5543 100644

--- a/en/guides/application-orchestrate/chatbot-application.mdx

+++ b/en/guides/application-orchestrate/chatbot-application.mdx

@@ -2,29 +2,29 @@

title: Chatbot Application

---

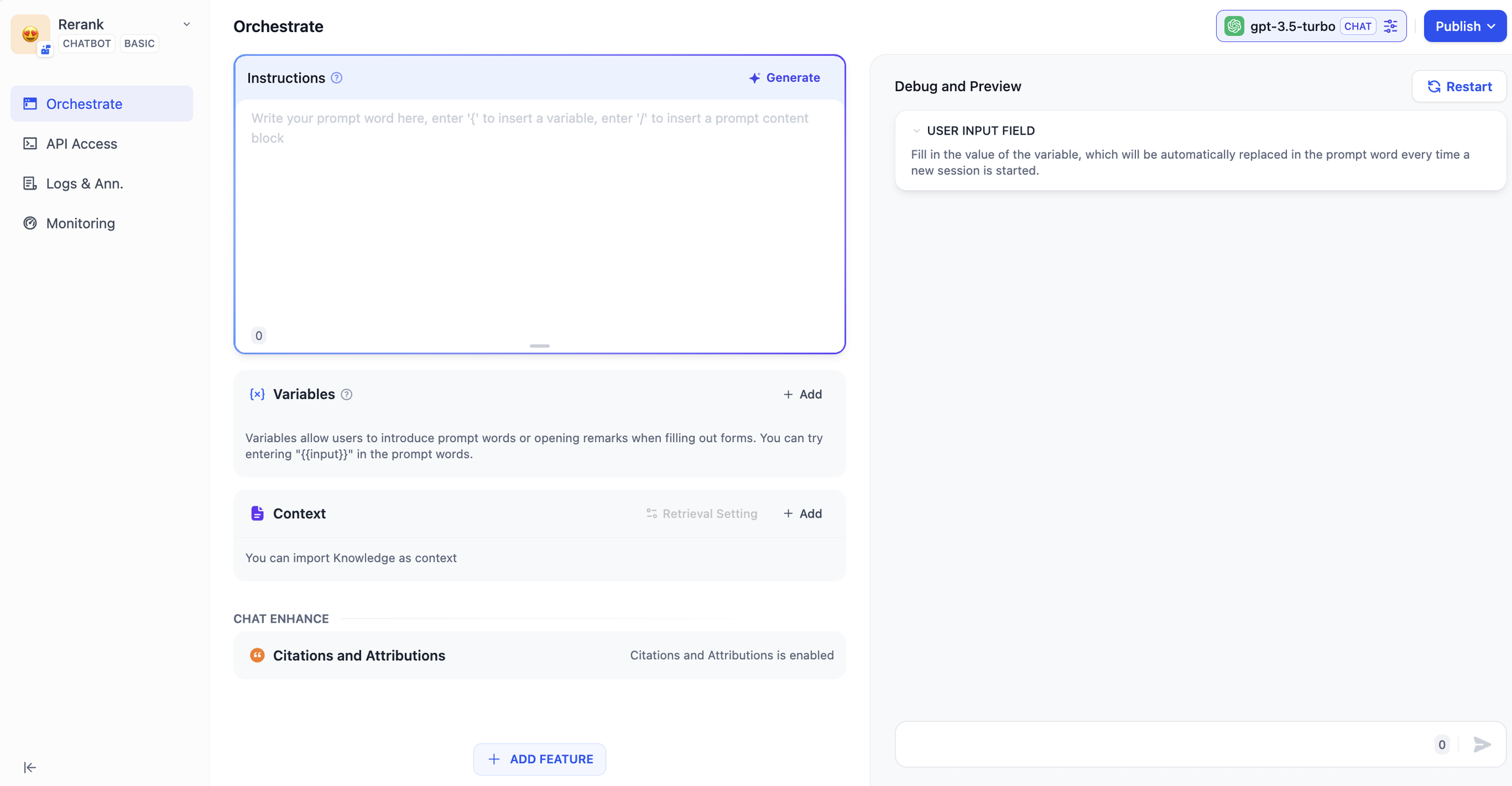

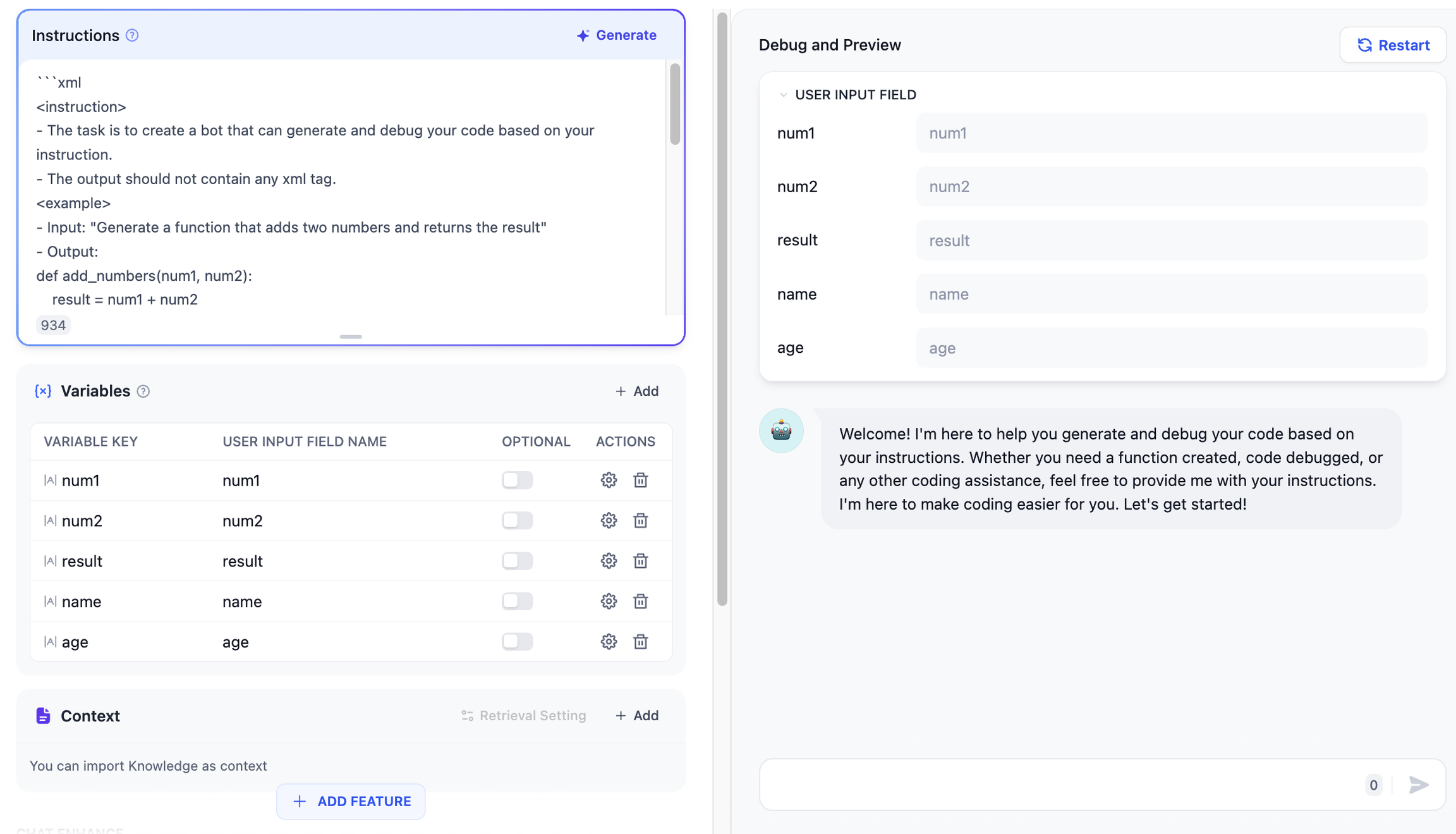

-Conversation applications use a one-question-one-answer mode to have a continuous conversation with the user.

+Chatbot applications use a one-question-one-answer mode to have a continuous conversation with the user.

### Applicable scenarios

-Conversation applications can be used in fields such as customer service, online education, healthcare, financial services, etc. These applications can help organizations improve work efficiency, reduce labor costs, and provide a better user experience.

+Chatbot applications can be used in fields such as customer service, online education, healthcare, financial services, etc. These applications can help organizations improve work efficiency, reduce labor costs, and provide a better user experience.

### How to compose

-Conversation applications supports: prompts, variables, context, opening remarks, and suggestions for the next question.

+Chatbot applications support: prompts, variables, context, opening remarks, and suggestions for the next question.

-Here, we use a interviewer application as an example to introduce the way to compose a conversation applications.

+Here, we use an interviewer application as an example to show how to compose a Chatbot application.

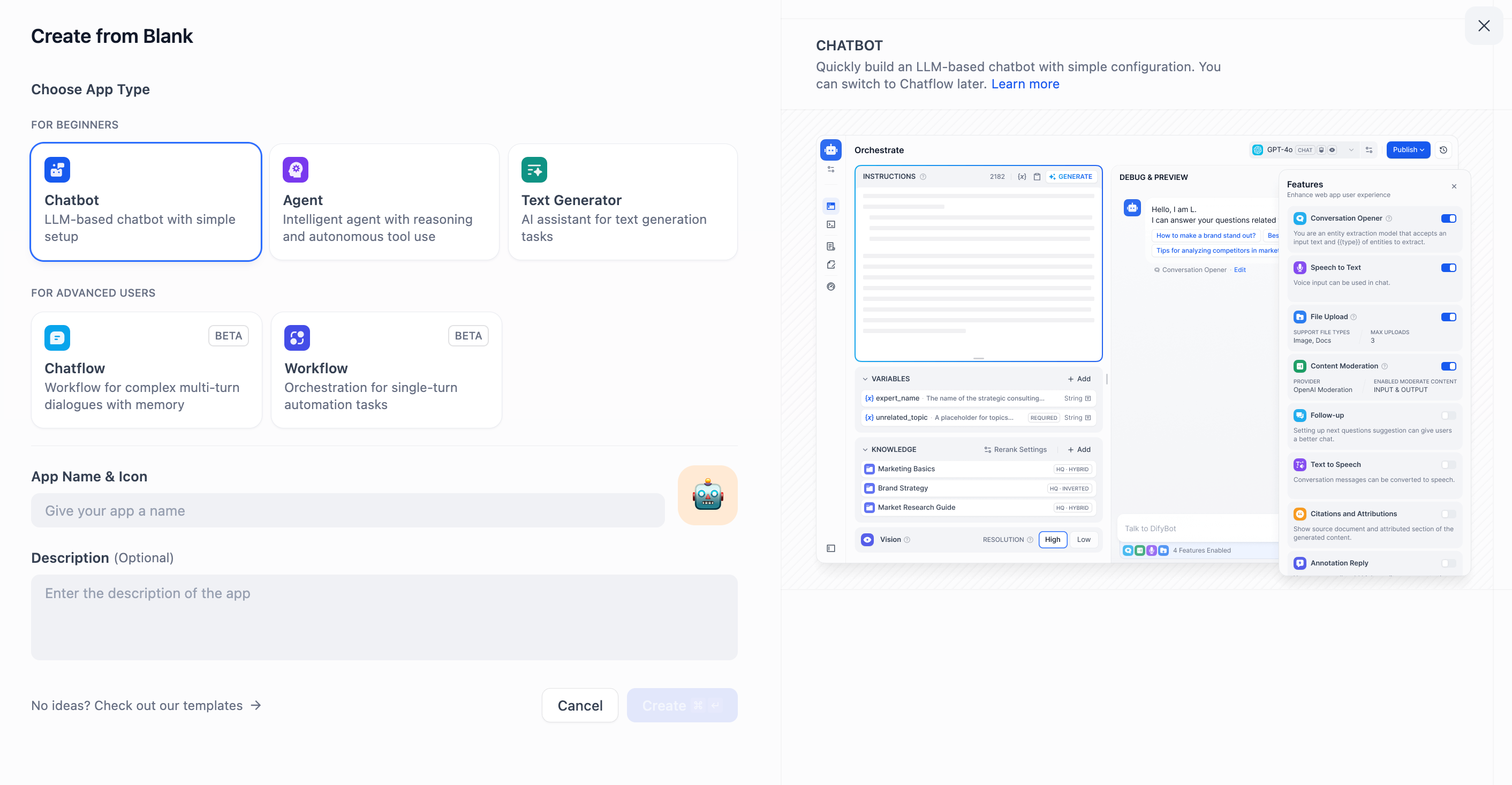

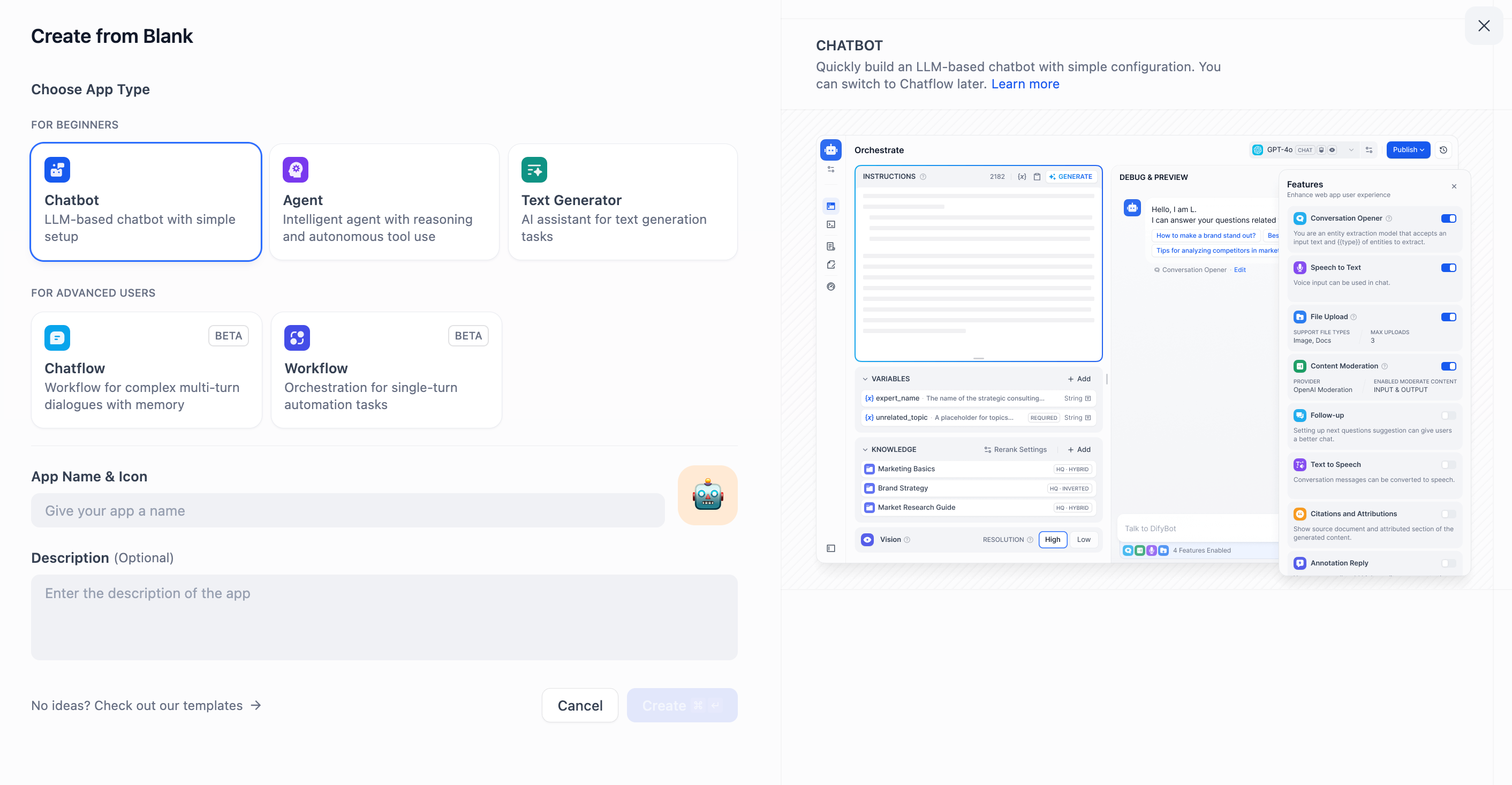

#### Step 1 Create an application

-Click the "Create Application" button on the homepage to create an application. Fill in the application name, and select **"Chat App"** as the application type.

+Click the "Create Application" button on the homepage to create an application. Fill in the application name, and select **"Chatbot"**.

-

+

#### Step 2: Compose the Application

After the application is successfully created, it will automatically redirect to the application overview page. Click on the button on the left menu: **"Orchestrate"** to compose the application.

-

+

**2.1 Fill in Prompts**

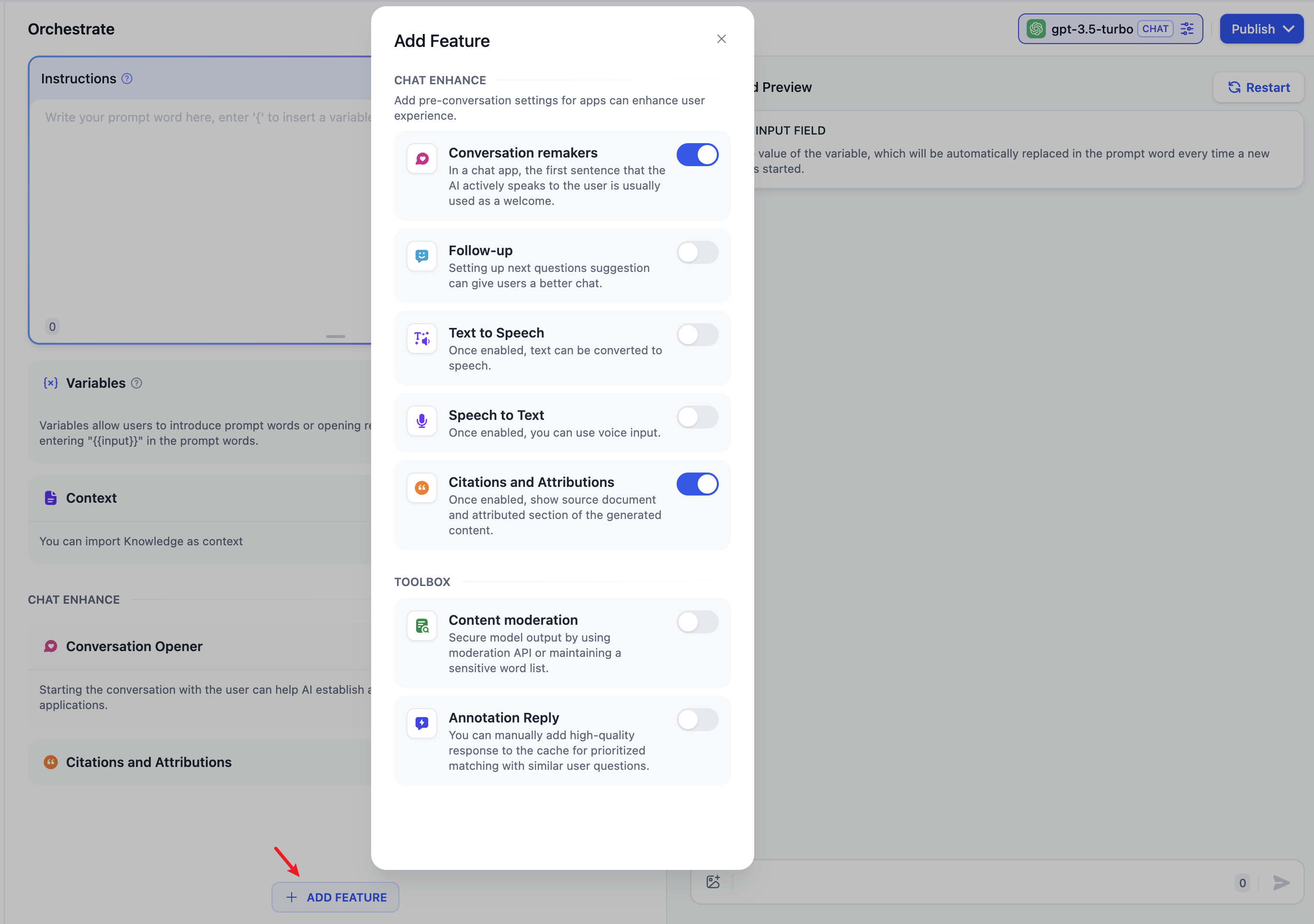

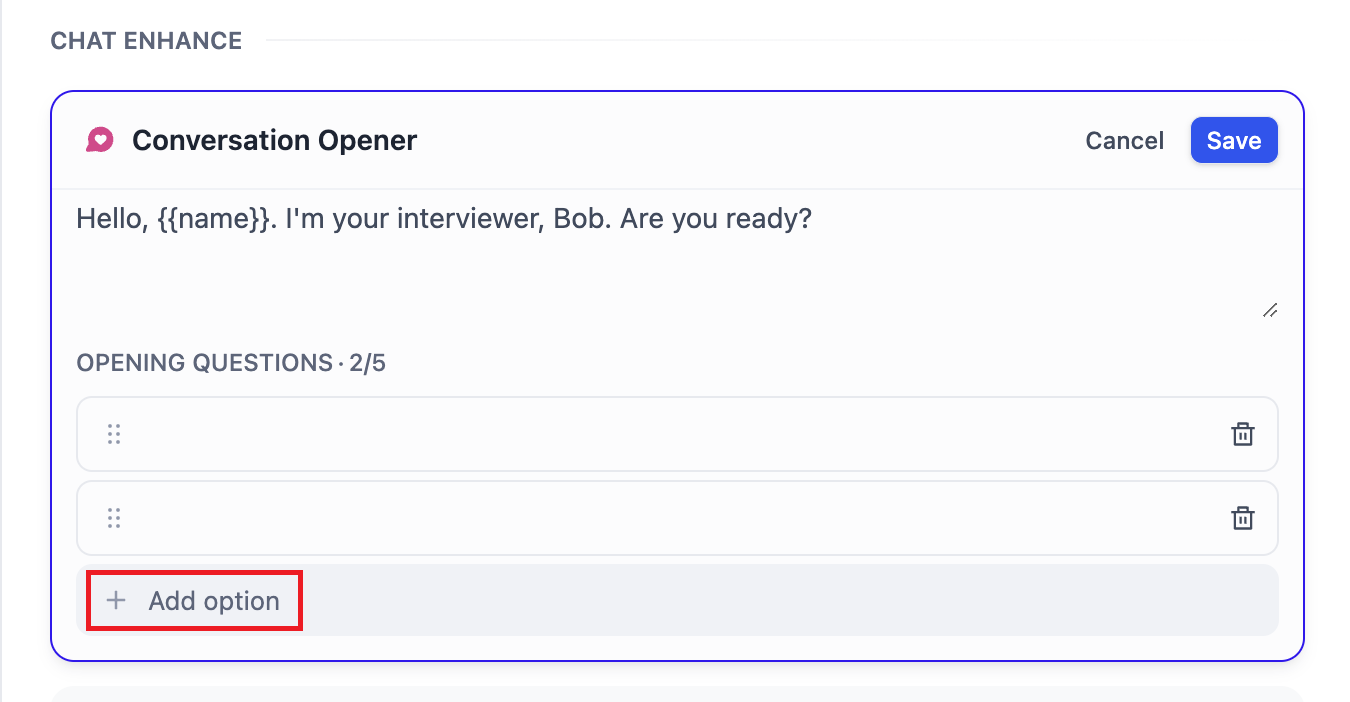

@@ -40,42 +40,52 @@ For a better experience, we will add an opening dialogue: `"Hello, {{name}}. I'm

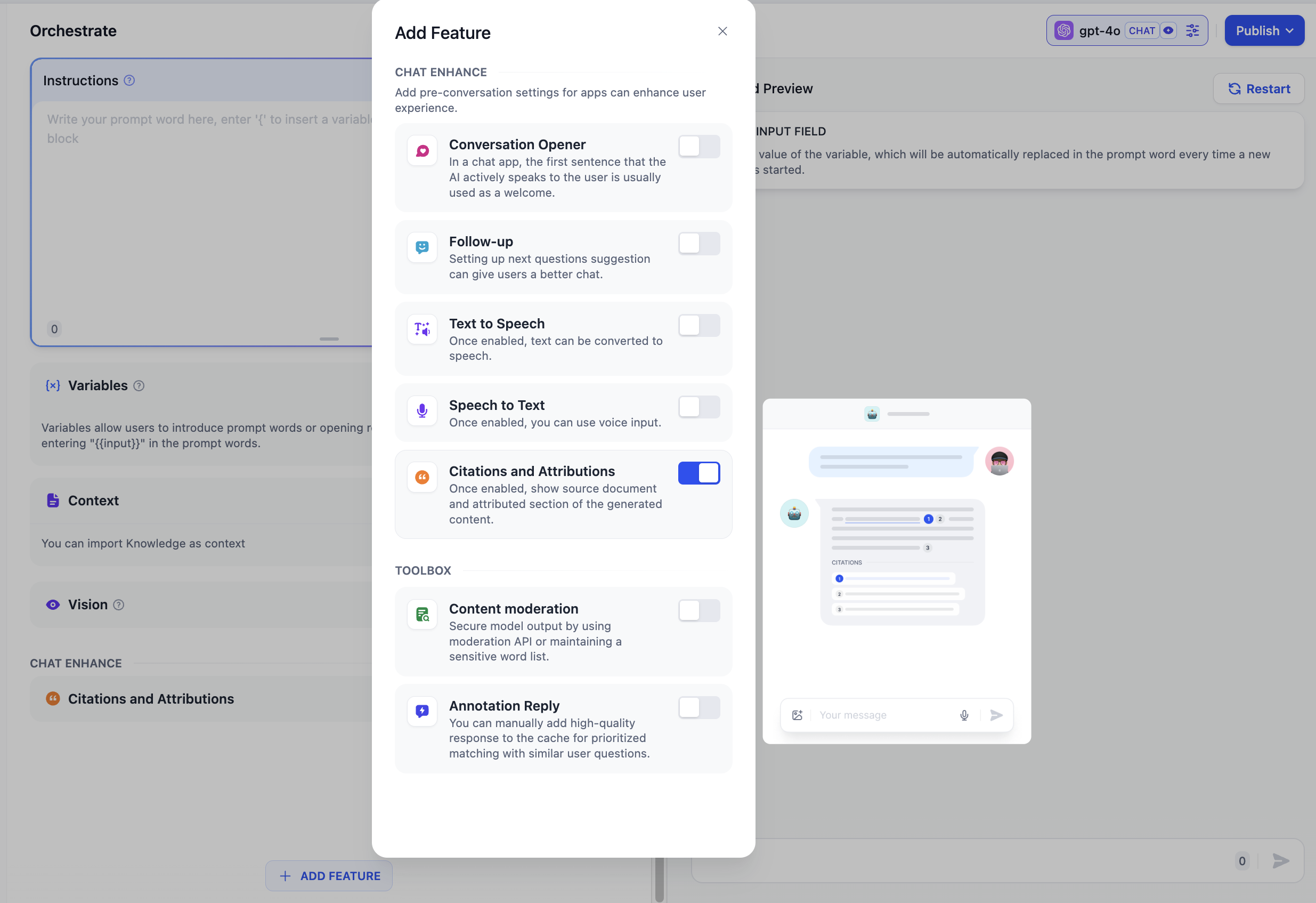

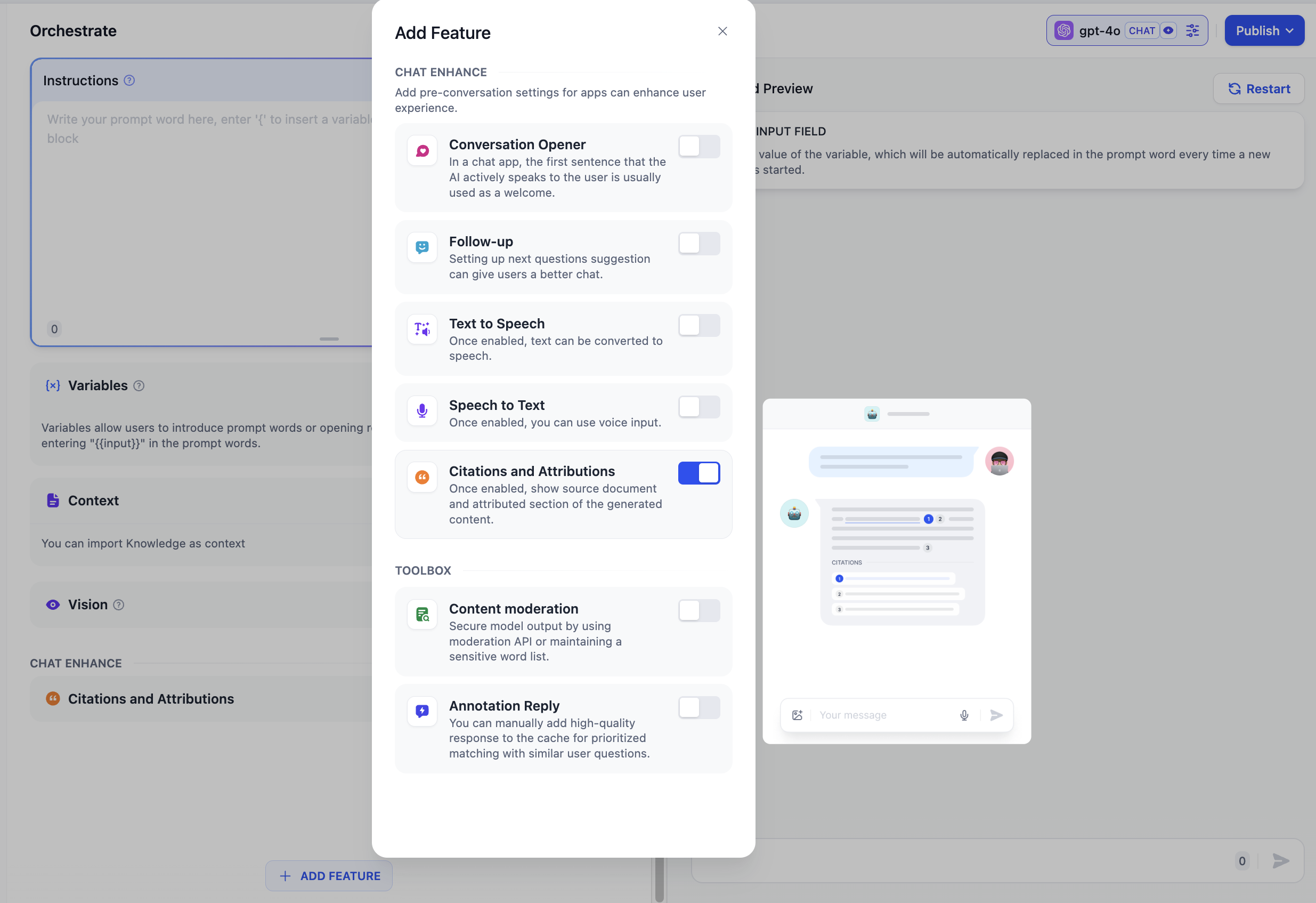

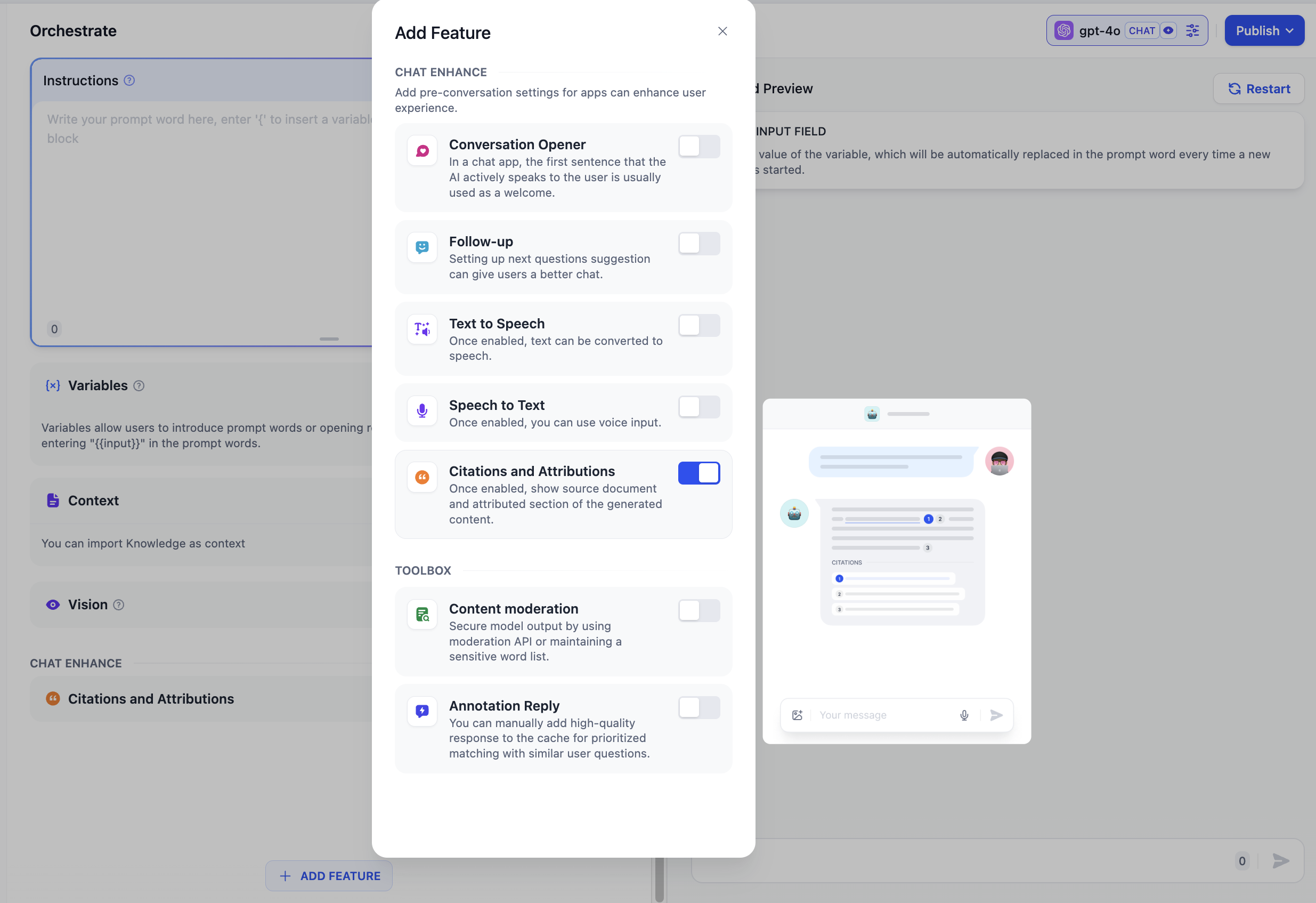

To add the opening dialogue, click the "Add Feature" button in the upper left corner, and enable the "Conversation remarkers" feature:

-

+

And then edit the opening remarks:

-

+

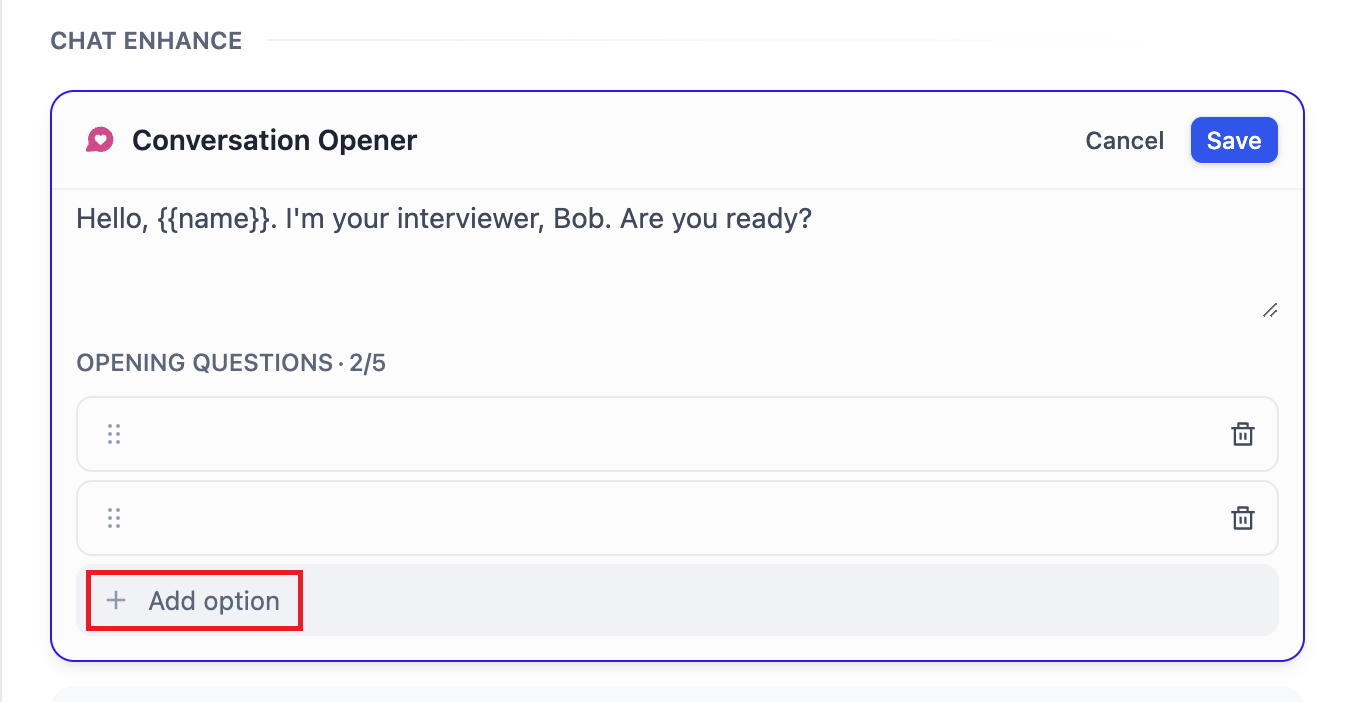

**2.2 Adding Context**

-If an application wants to generate content based on private contextual conversations, it can use our [knowledge](/en/guides/knowledge-base/readme) feature. Click the "Add" button in the context to add a knowledge base.

+If an application wants to generate content based on private contextual conversations, it can use our [knowledge](../knowledge-base/) feature. Click the "Add" button in the context to add a knowledge base.

-

+

-**2.3 Debugging**

+**2.3 Uploading Documentation File**

+

+Some LLMs now natively support file processing, such as [Claude 3.5 Sonnet](https://docs.anthropic.com/en/docs/build-with-claude/pdf-support) and [Gemini 1.5 Pro](https://ai.google.dev/api/files). You can check the LLMs' websites for details on their file upload capabilities.

+

+Select an LLM that supports file reading and enable the "Documentation" feature. This enables the Chatbot to recognize files without complex configurations.

+

+

+

+**2.4 Debugging**

Enter user inputs on the right side and check the response content.

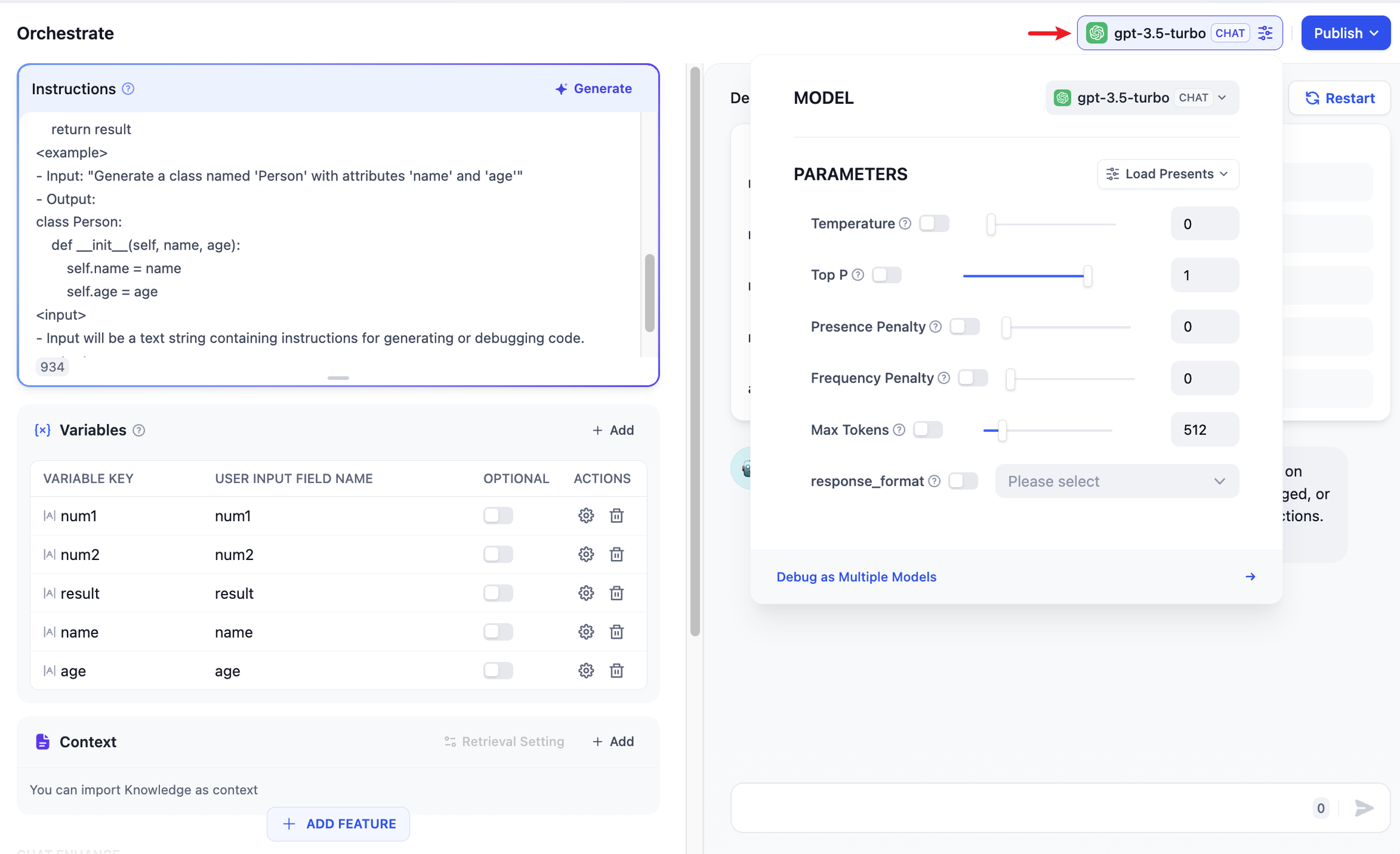

-

+

If the results are not satisfactory, you can adjust the prompts and model parameters. Click on the model name in the upper right corner to set the parameters of the model:

-

+

-**Debugging with multiple models:**

+**Multiple Model Debugging:**

-If debugging with a single model feels inefficient, you can utilize the **Debug as Multiple Models** feature to batch-test the models' response effectiveness.

-

-

-

-Supports adding up to 4 LLMs at the same time.

-

-

-

-> ⚠️ When using the multi-model debugging feature, if only some large models are visible, it is because other large models' keys have not been added yet. You can manually add multiple models' keys in ["Add New Provider"](/en/guides/model-configuration/new-provider).

+If the LLM’s response is unsatisfactory, you can refine the prompt or switch to different underlying models for comparison. To simultaneously observe how multiple models respond to the same question, see [Multiple Model Debugging](./multiple-llms-debugging).

**2.5 Publish App**

-After debugging your application, click the **"Publish"** button in the top right corner to create a standalone AI application. In addition to experiencing the application via a public URL, you can also perform secondary development based on APIs, embed it into websites, and more. For details, please refer to [Publishing](/en/guides/application-publishing/launch-your-webapp-quickly/web-app-settings).

+After debugging your application, click the **"Publish"** button in the top right corner to create a standalone AI application. In addition to experiencing the application via a public URL, you can also perform secondary development based on APIs, embed it into websites, and more. For details, please refer to [Publishing](https://docs.dify.ai/guides/application-publishing).

-If you want to customize the application that you share, you can Fork our open source [WebApp template](https://github.com/langgenius/webapp-conversation). Based on the template, you can modify the application to meet your specific needs and style requirements.

\ No newline at end of file

+If you want to customize the application that you share, you can Fork our open source [WebApp template](https://github.com/langgenius/webapp-conversation). Based on the template, you can modify the application to meet your specific needs and style requirements.

+

+### FAQ

+

+**How to add a third-party tool within the chatbot?**

+

+The chatbot app does not support adding third-party tools. You can add third-party tools within your [agent](../application-orchestrate/agent).

+

+**How to use metadata filtering when creating a Chatbot?**

+

+For instructions, see **Metadata Filtering > Chatbot** in *[Integrate Knowledge Base within Application](https://docs.dify.ai/guides/knowledge-base/integrate-knowledge-within-application)*.

diff --git a/en/guides/application-orchestrate/creating-an-application.mdx b/en/guides/application-orchestrate/creating-an-application.mdx

index be1b7dbe..713a1ccd 100644

--- a/en/guides/application-orchestrate/creating-an-application.mdx

+++ b/en/guides/application-orchestrate/creating-an-application.mdx

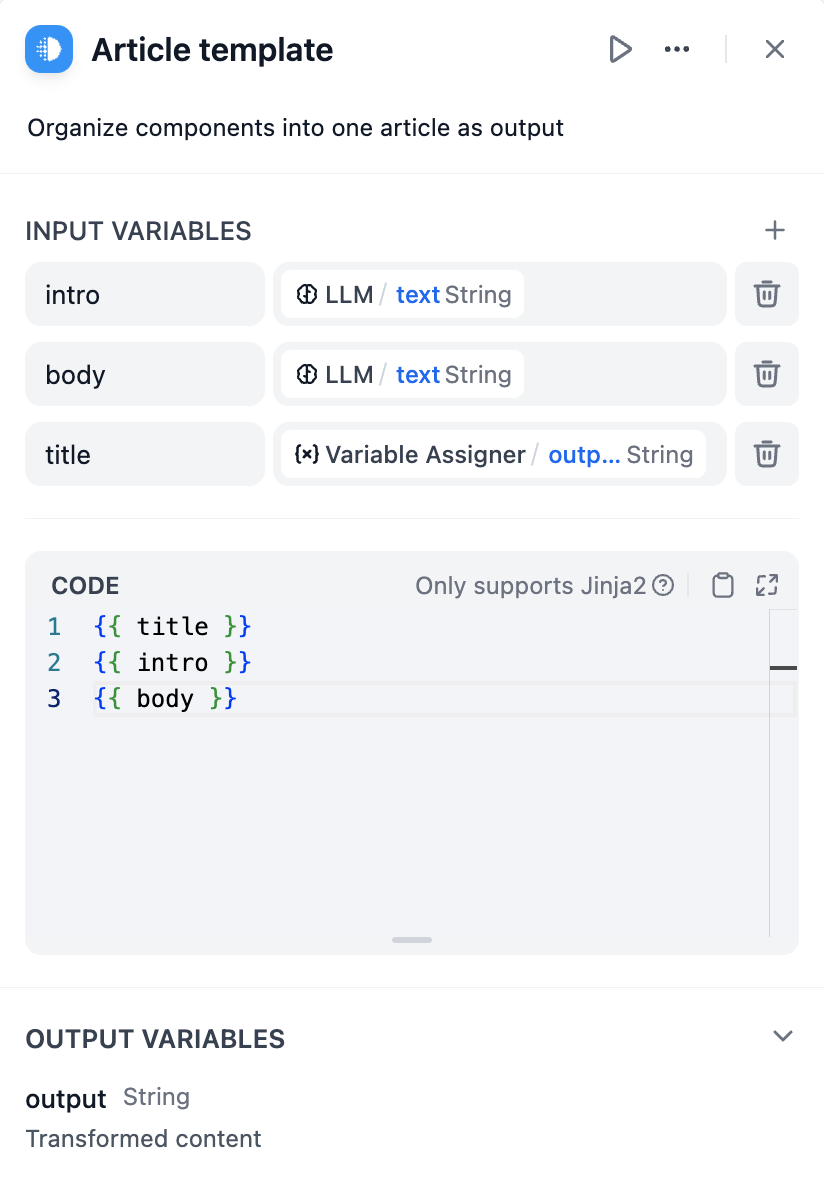

@@ -28,6 +28,13 @@ If you need to create a blank application on Dify, you can select "Studio" from

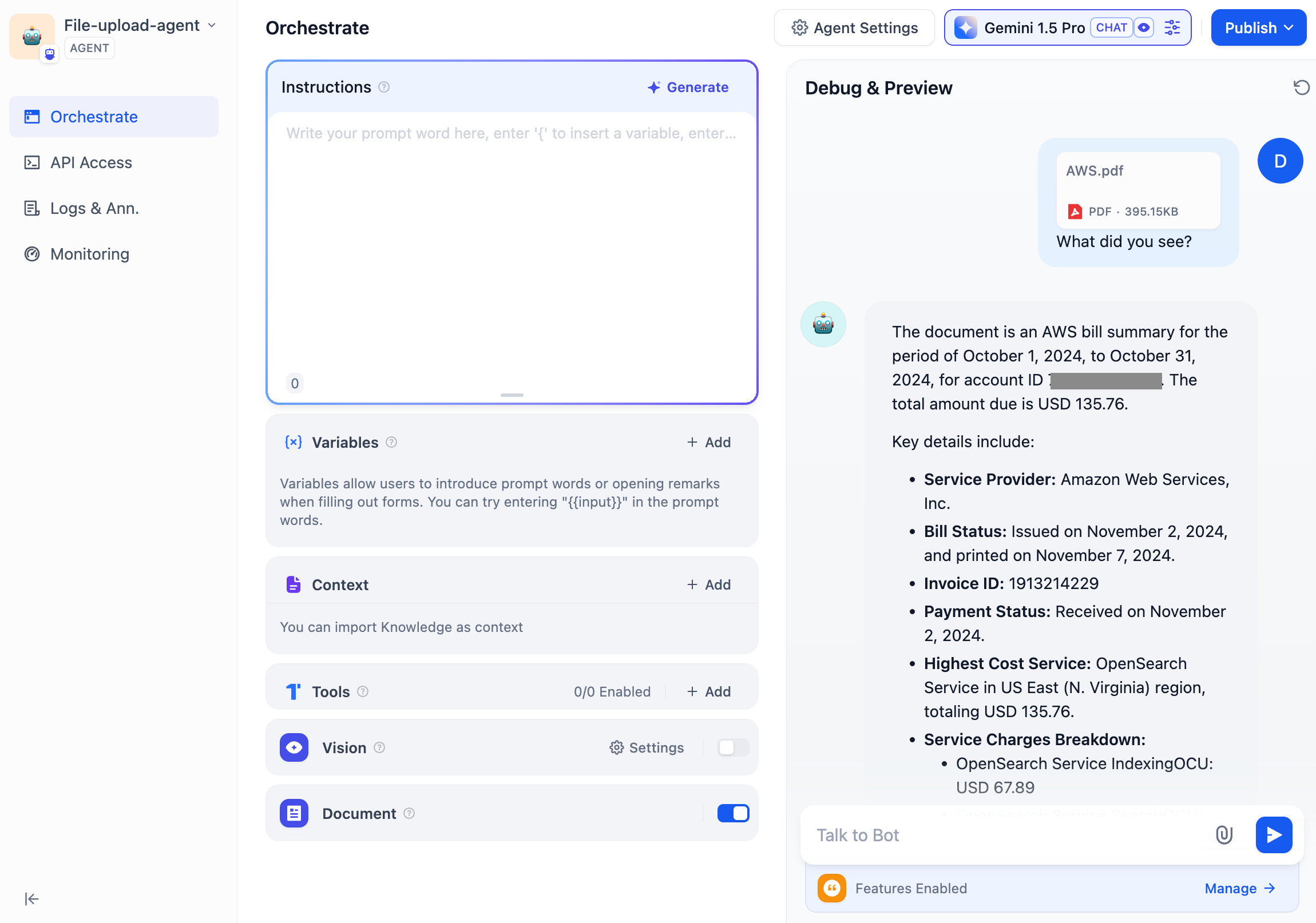

When creating an application for the first time, you might need to first understand the [basic concepts](./#application_type) of the five different types of applications on Dify: Chatbot, Text Generator, Agent, Chatflow and Workflow.

+

+

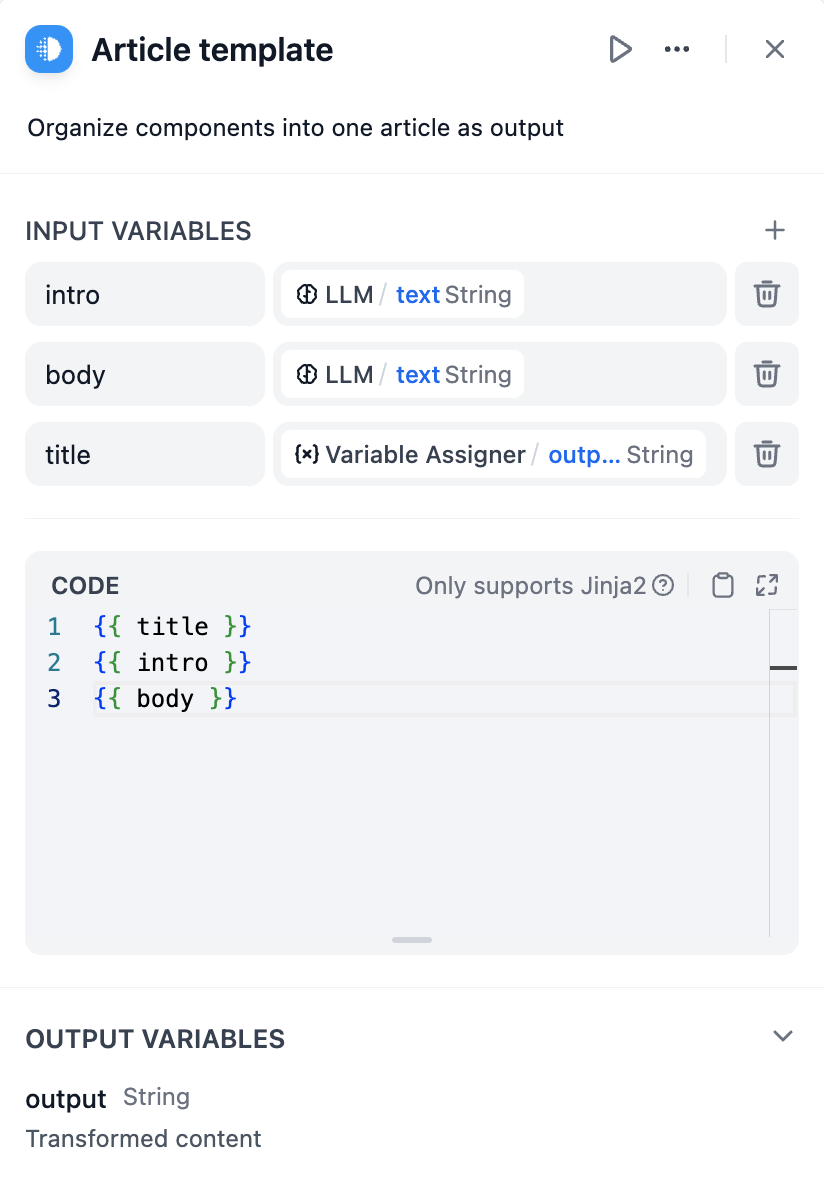

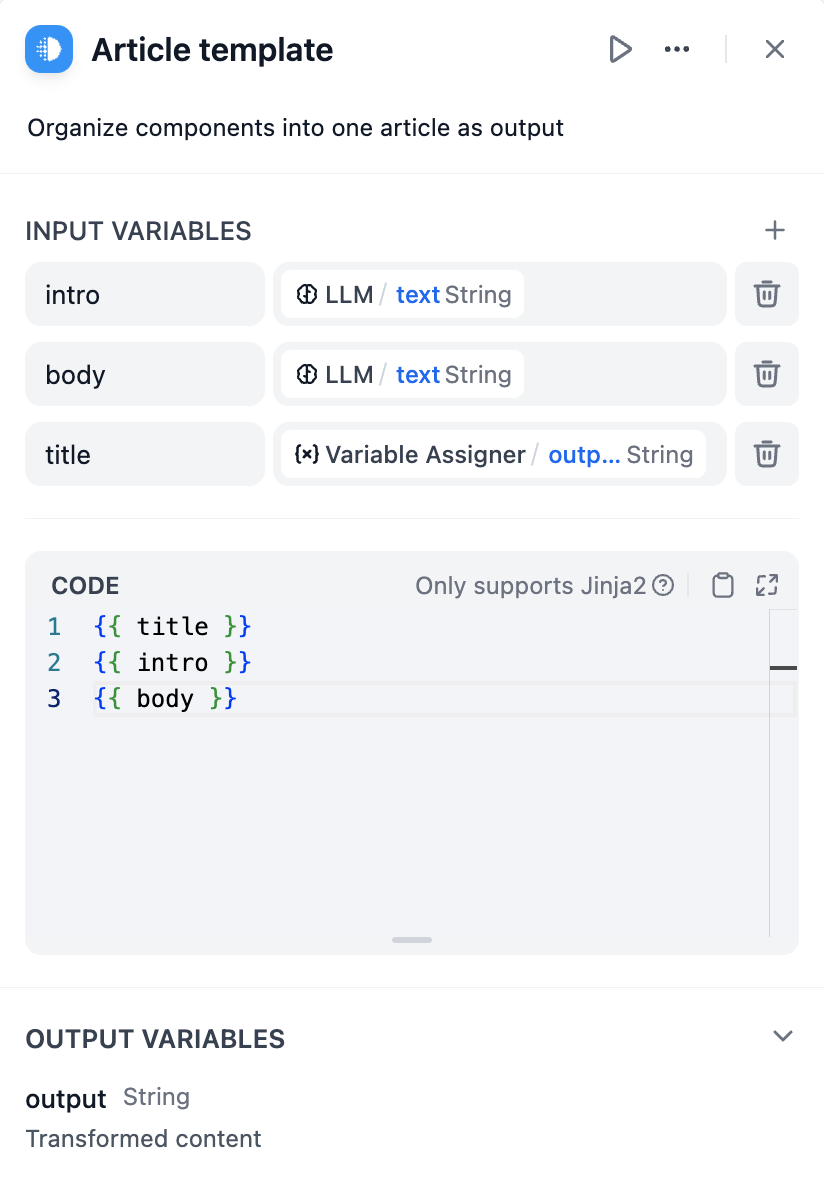

When selecting a specific application type, you can customize it by providing a name, choosing an appropriate icon (or uploading your favorite image as an icon), and writing a clear and concise description of its purpose. These details will help team members easily understand and use the application in the future.

diff --git a/en/guides/application-orchestrate/multiple-llms-debugging.mdx b/en/guides/application-orchestrate/multiple-llms-debugging.mdx

new file mode 100644

index 00000000..2d3c6877

--- /dev/null

+++ b/en/guides/application-orchestrate/multiple-llms-debugging.mdx

@@ -0,0 +1,25 @@

+---

+title: Multiple Model Debugging

+---

+

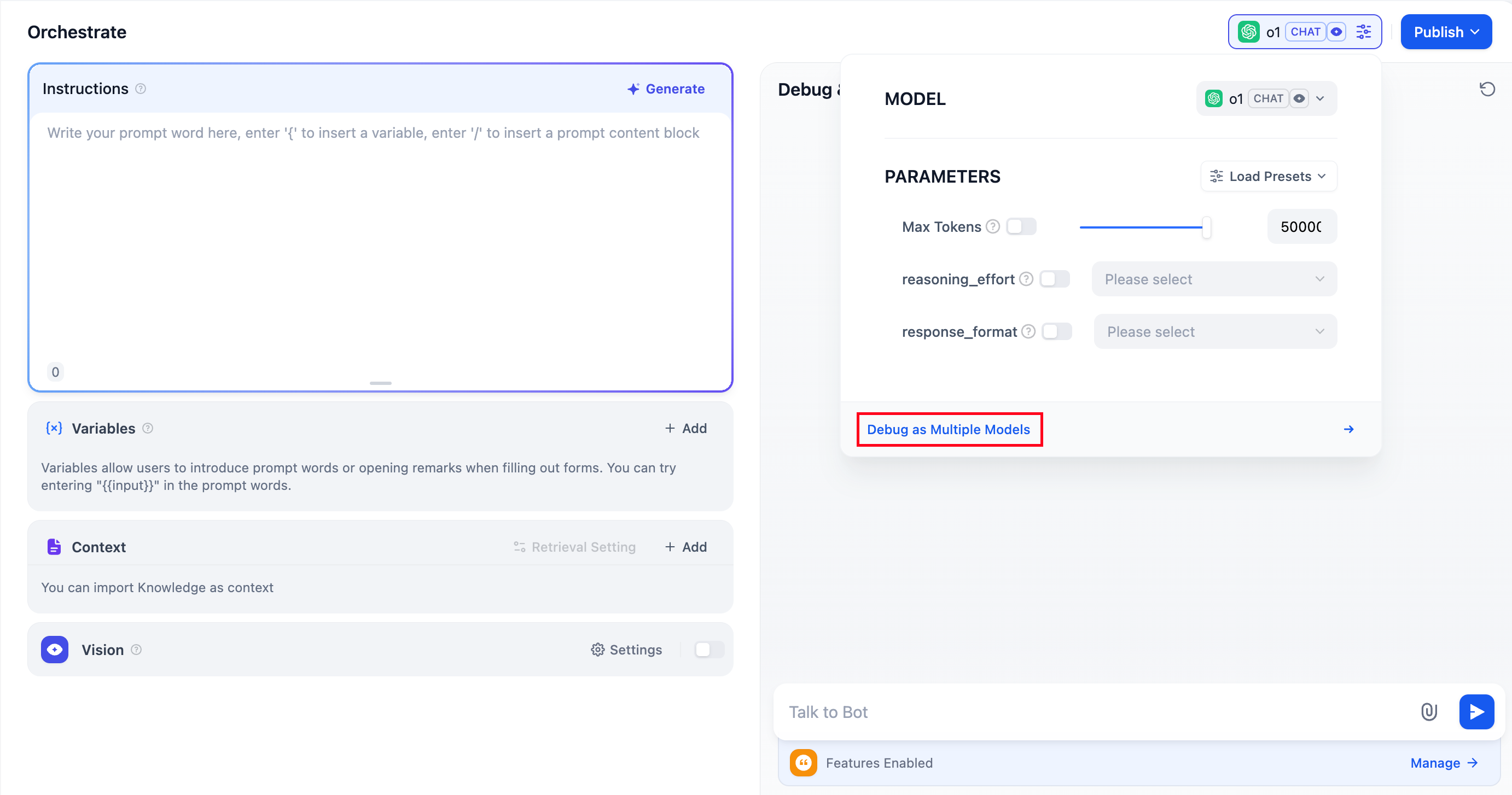

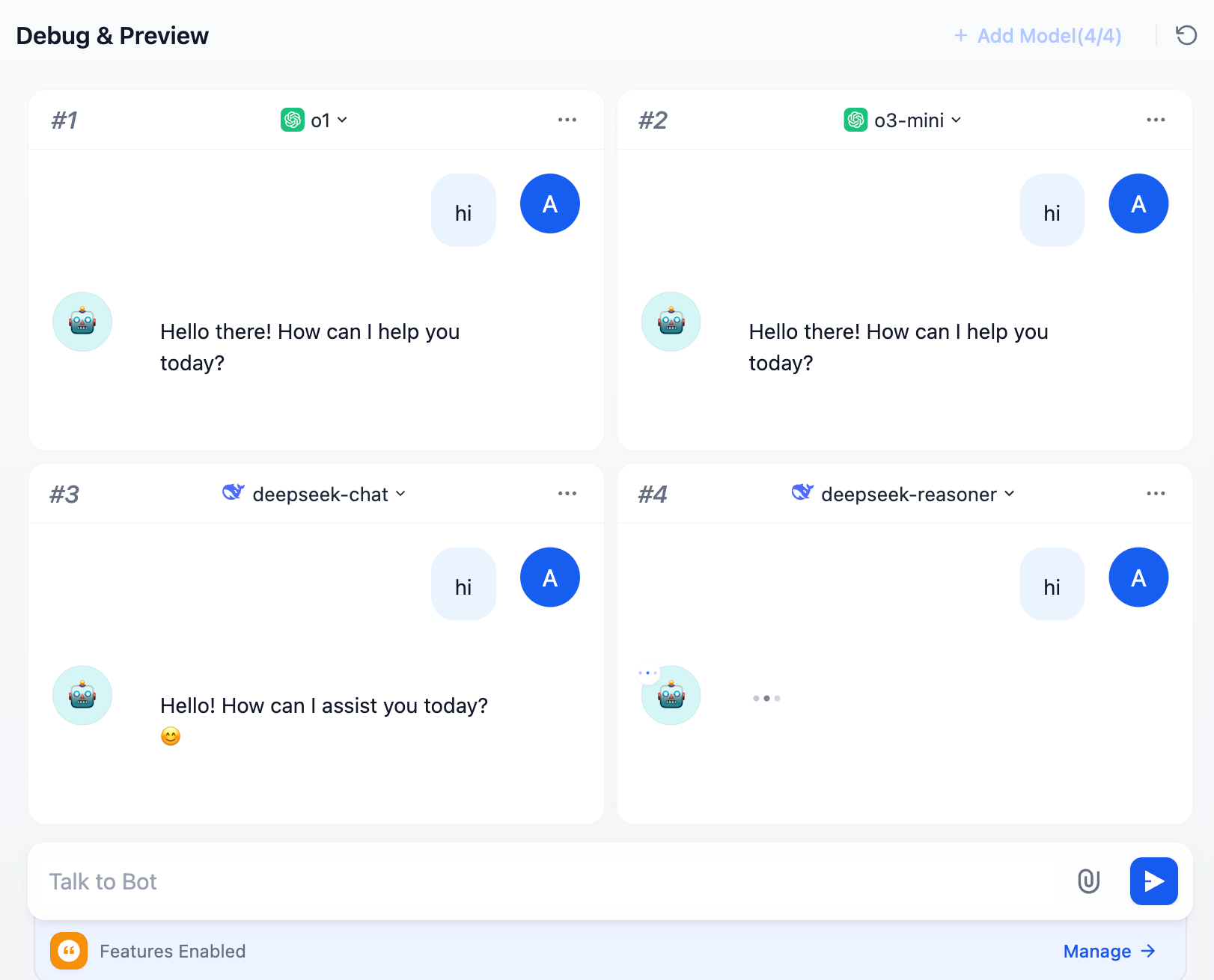

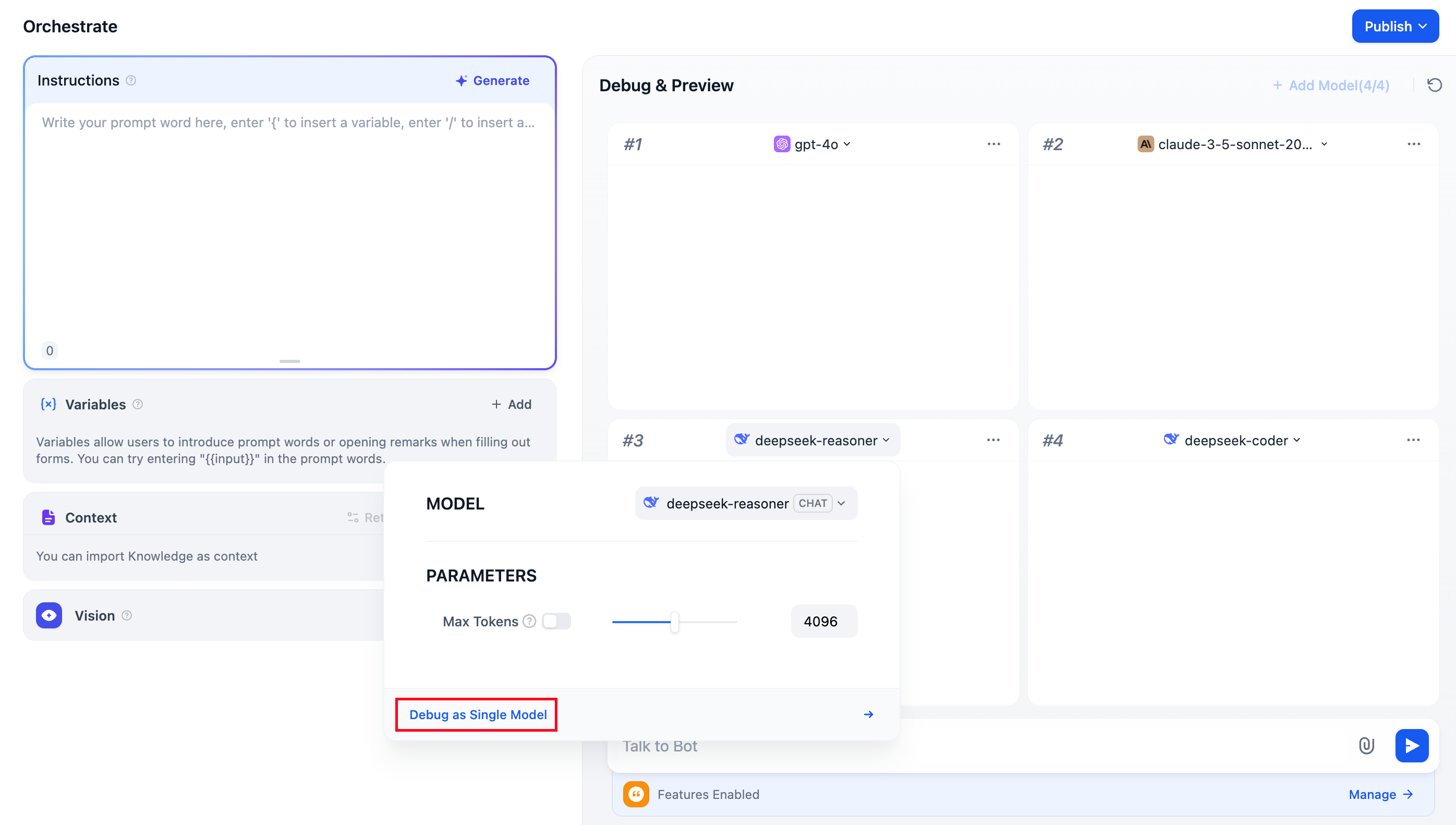

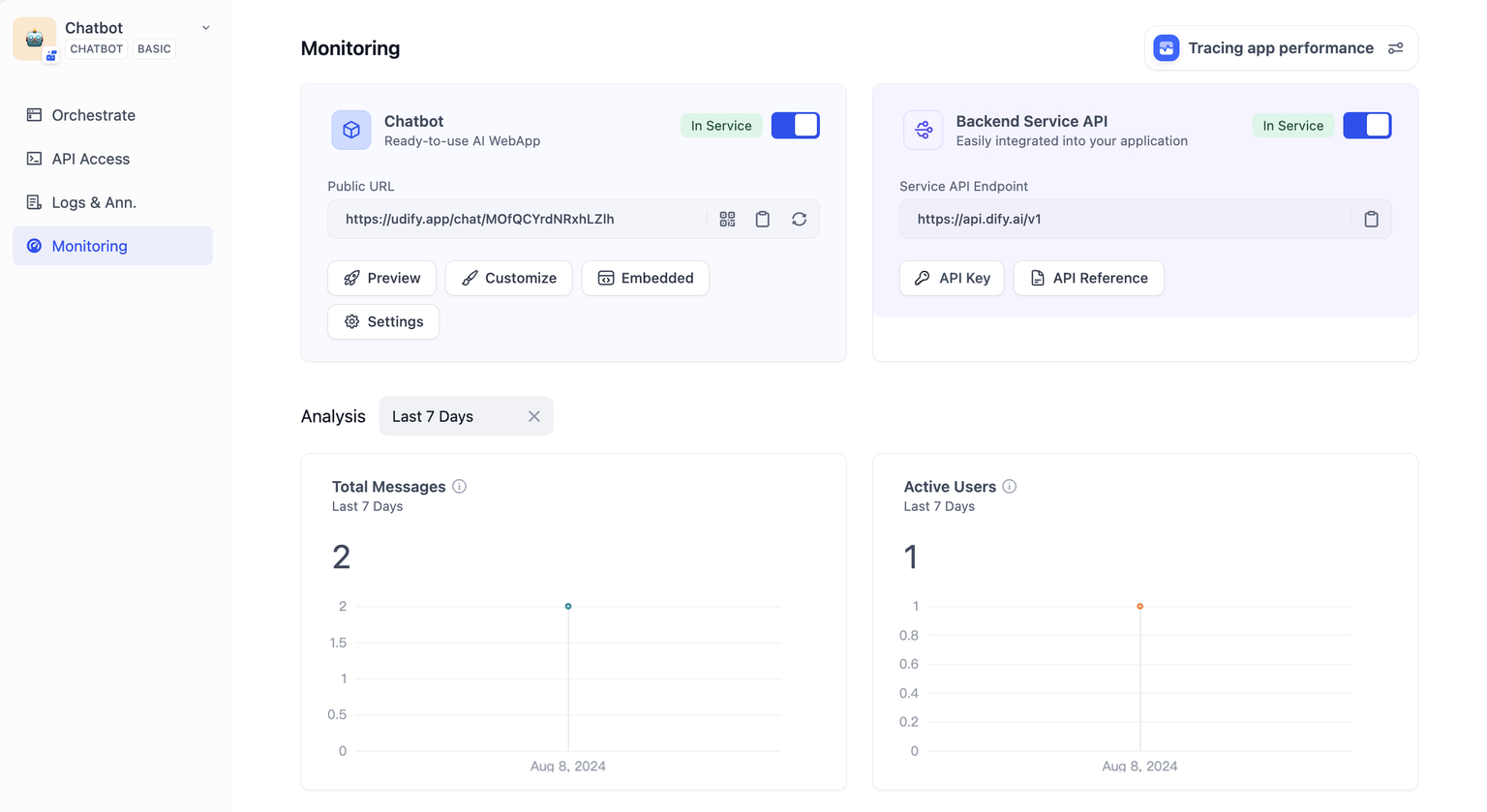

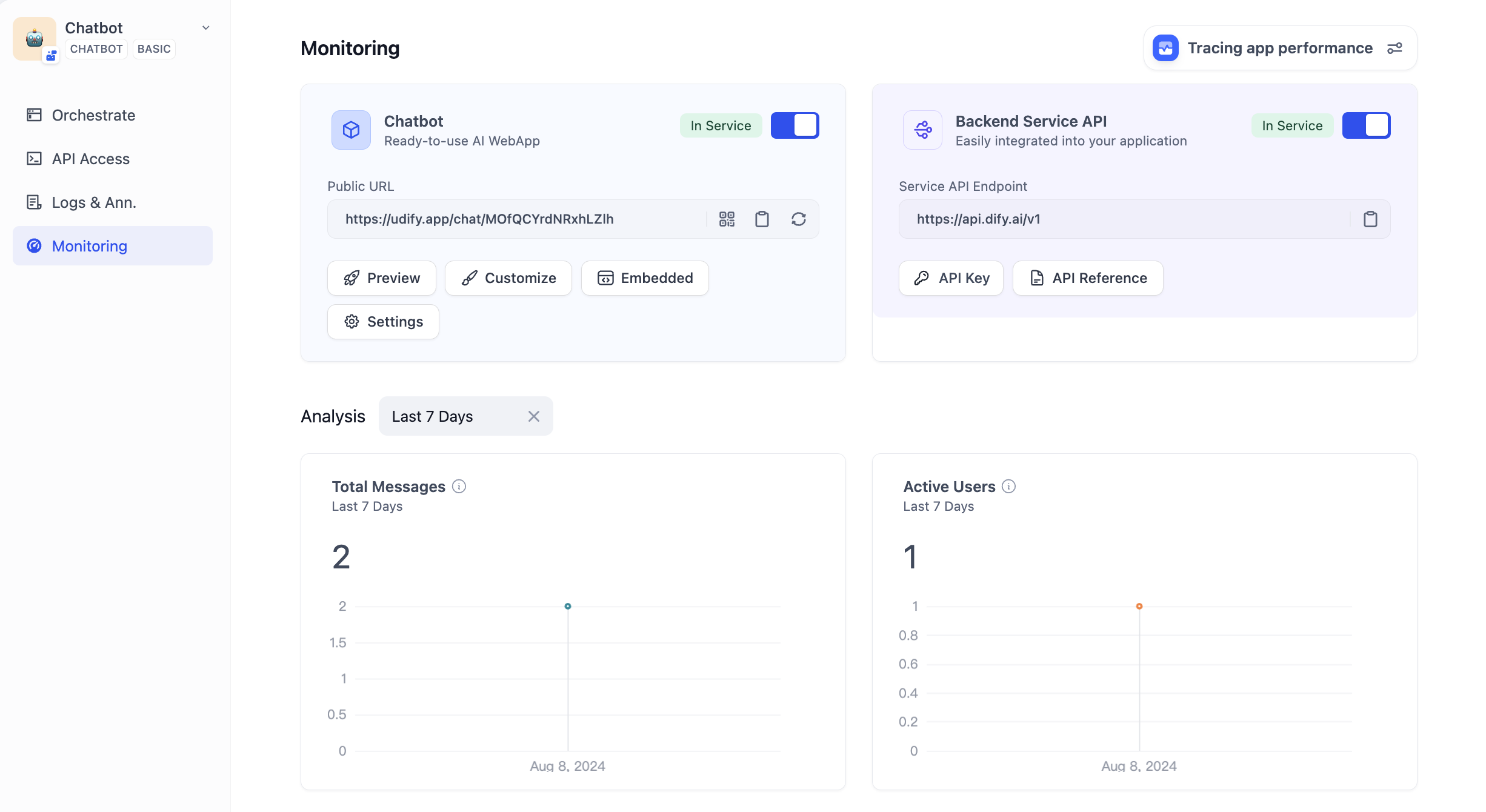

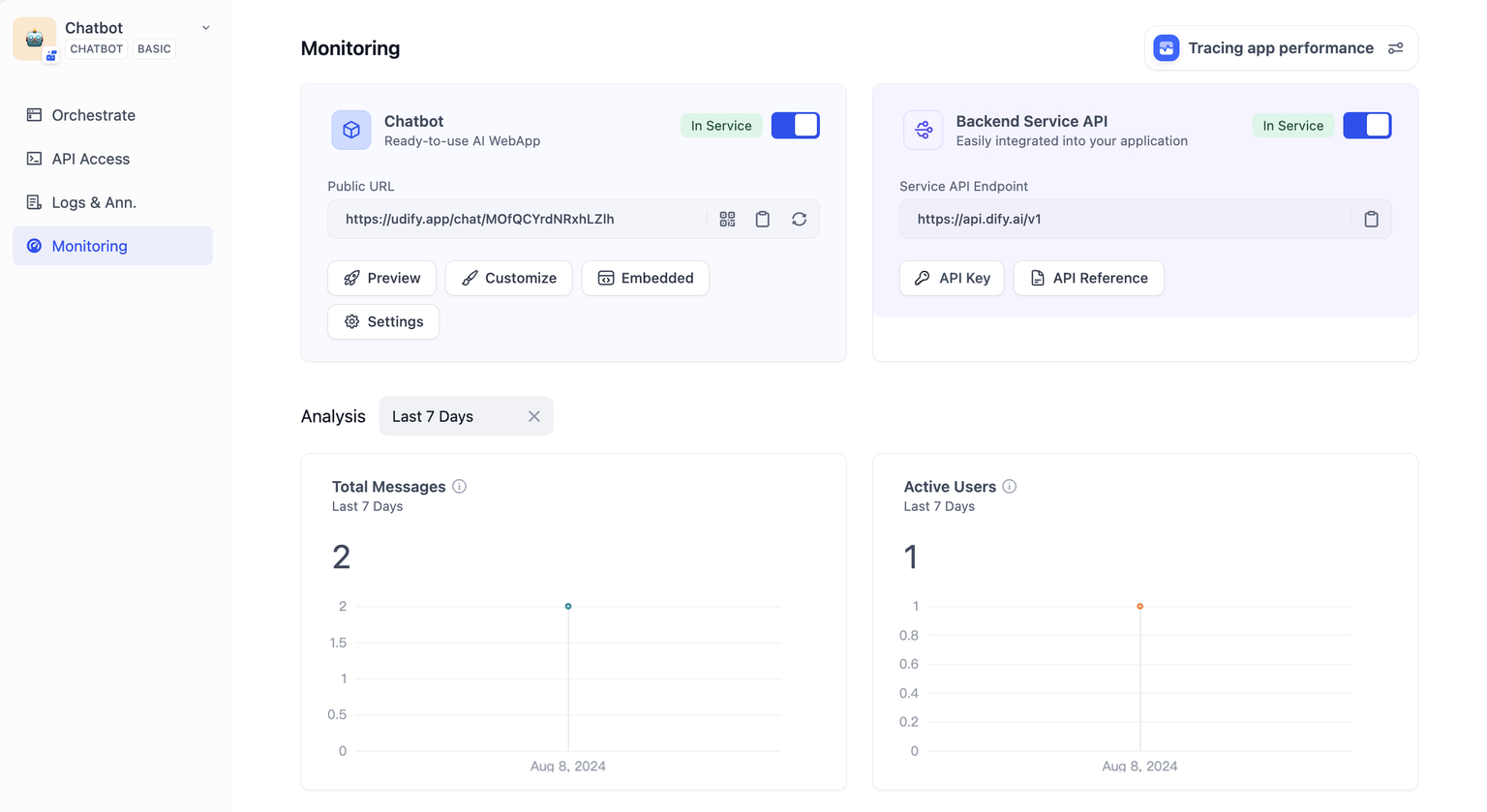

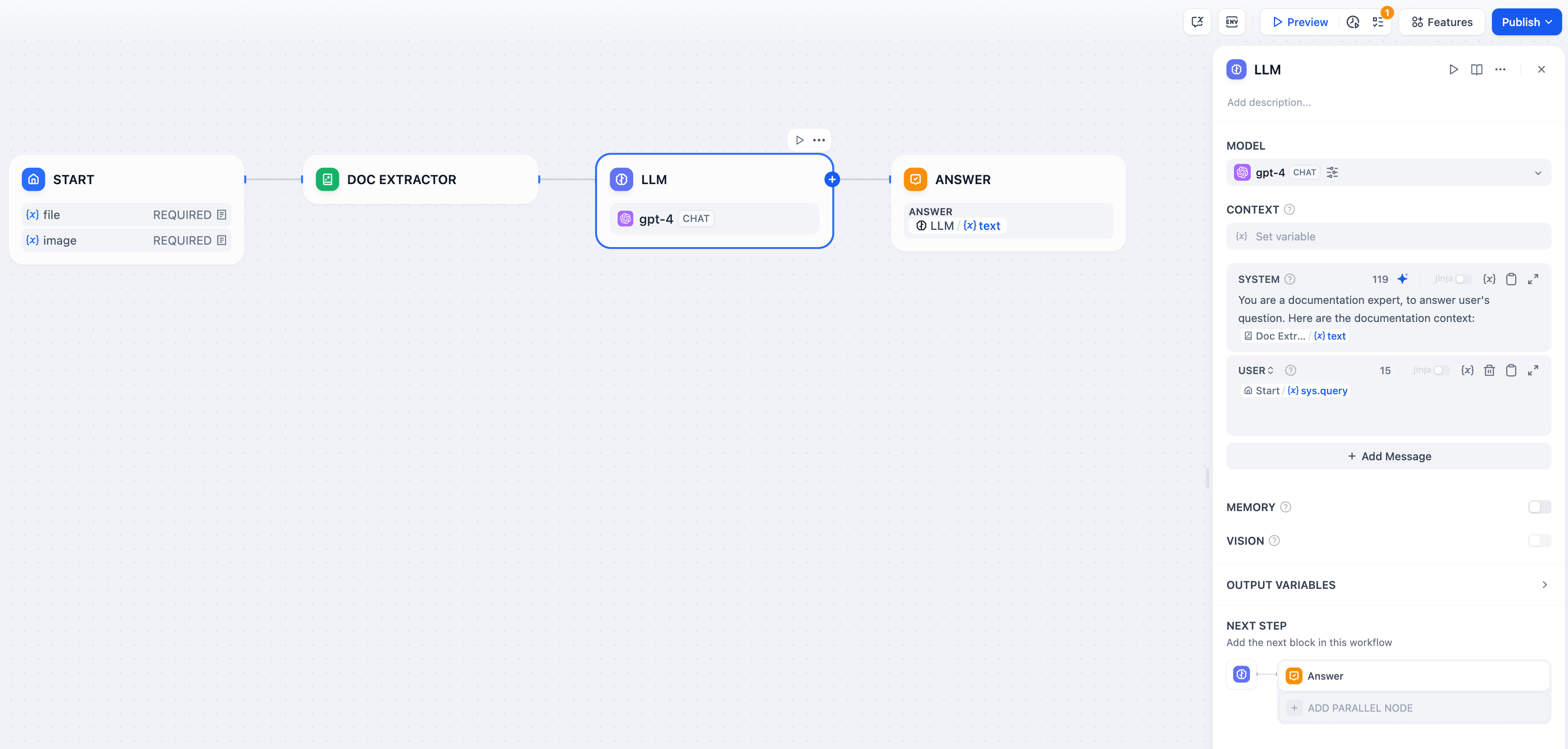

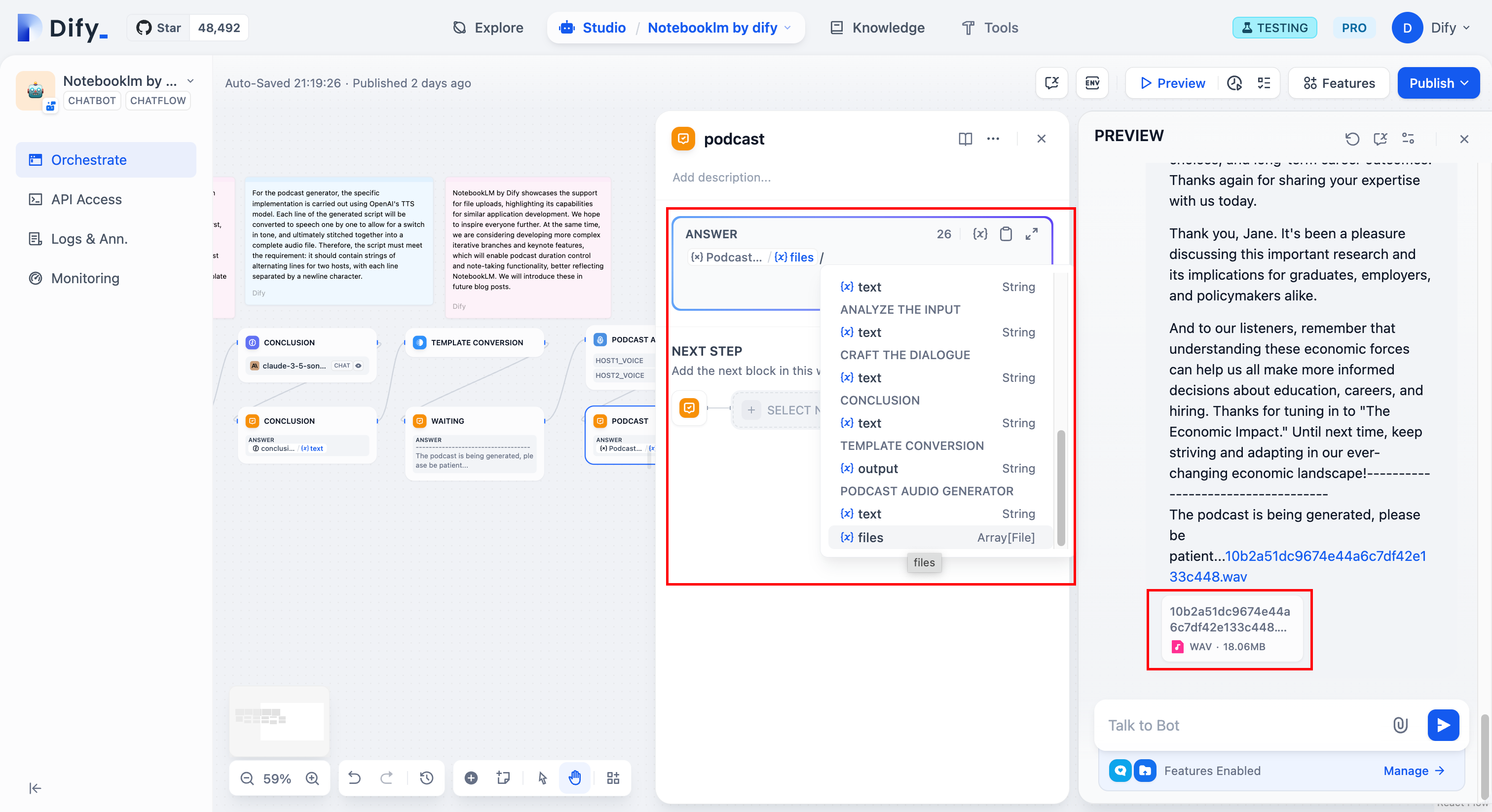

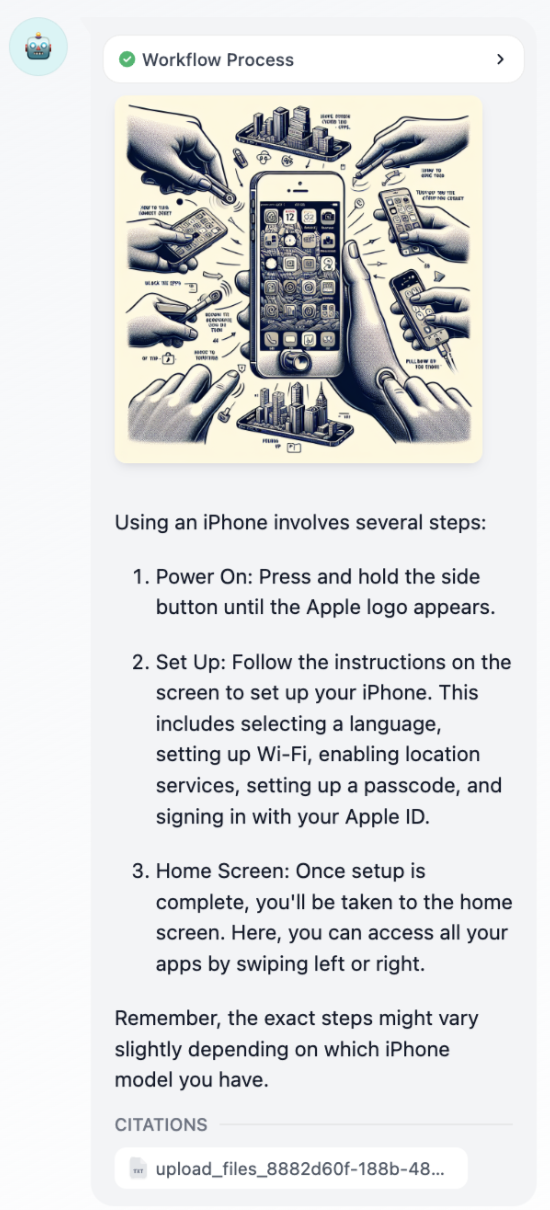

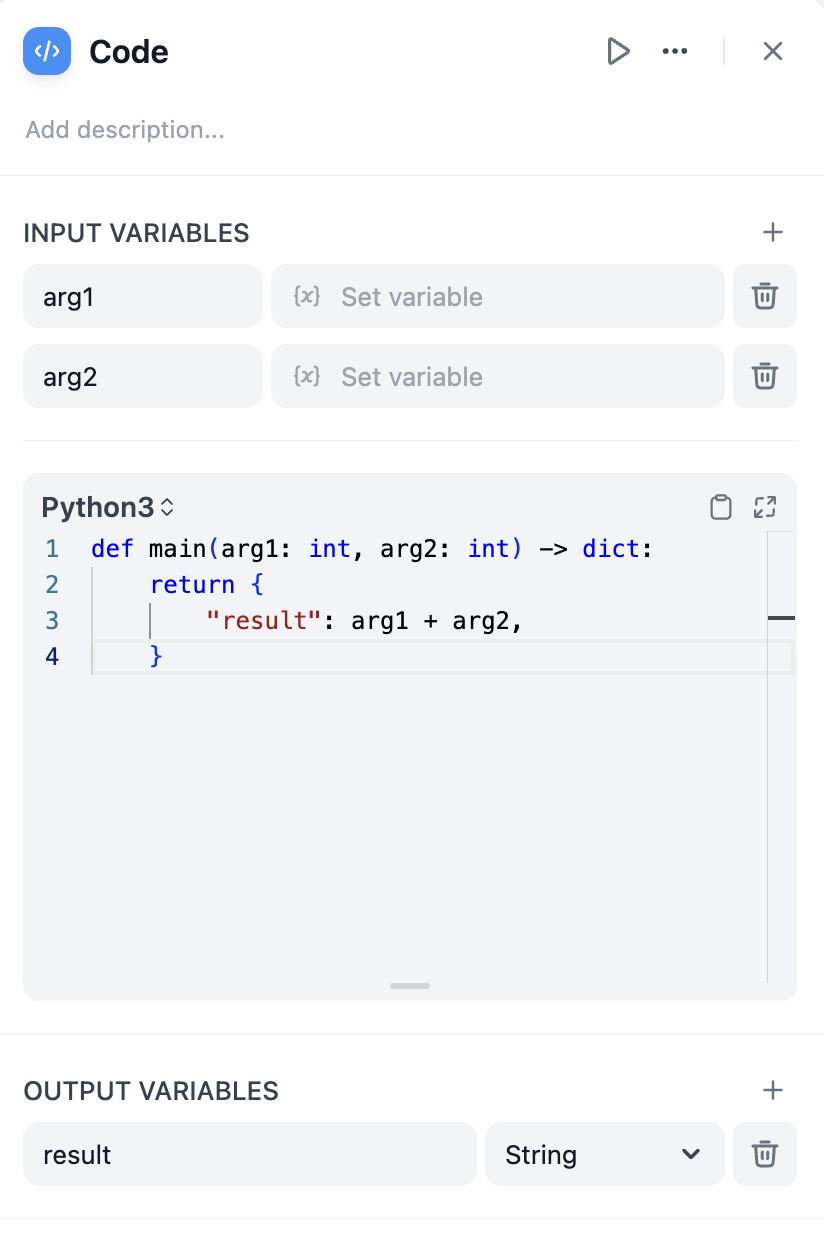

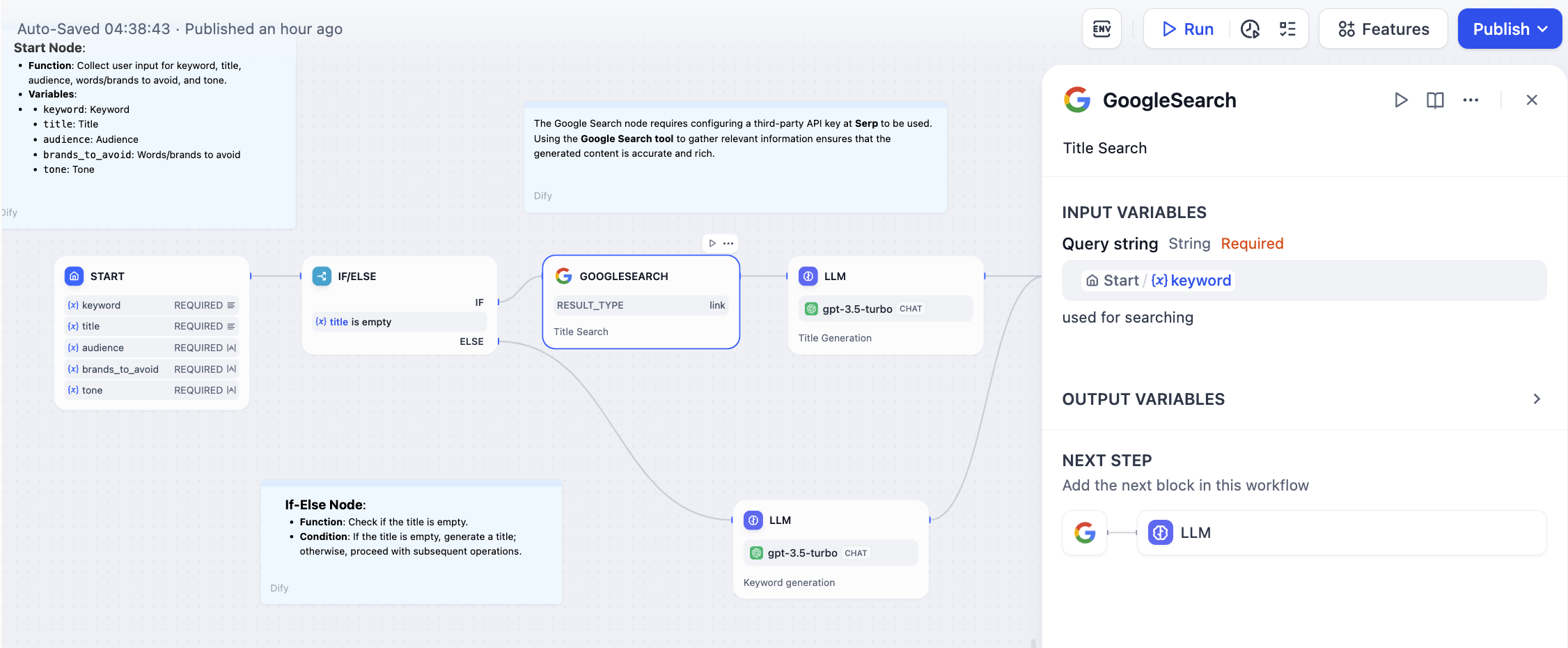

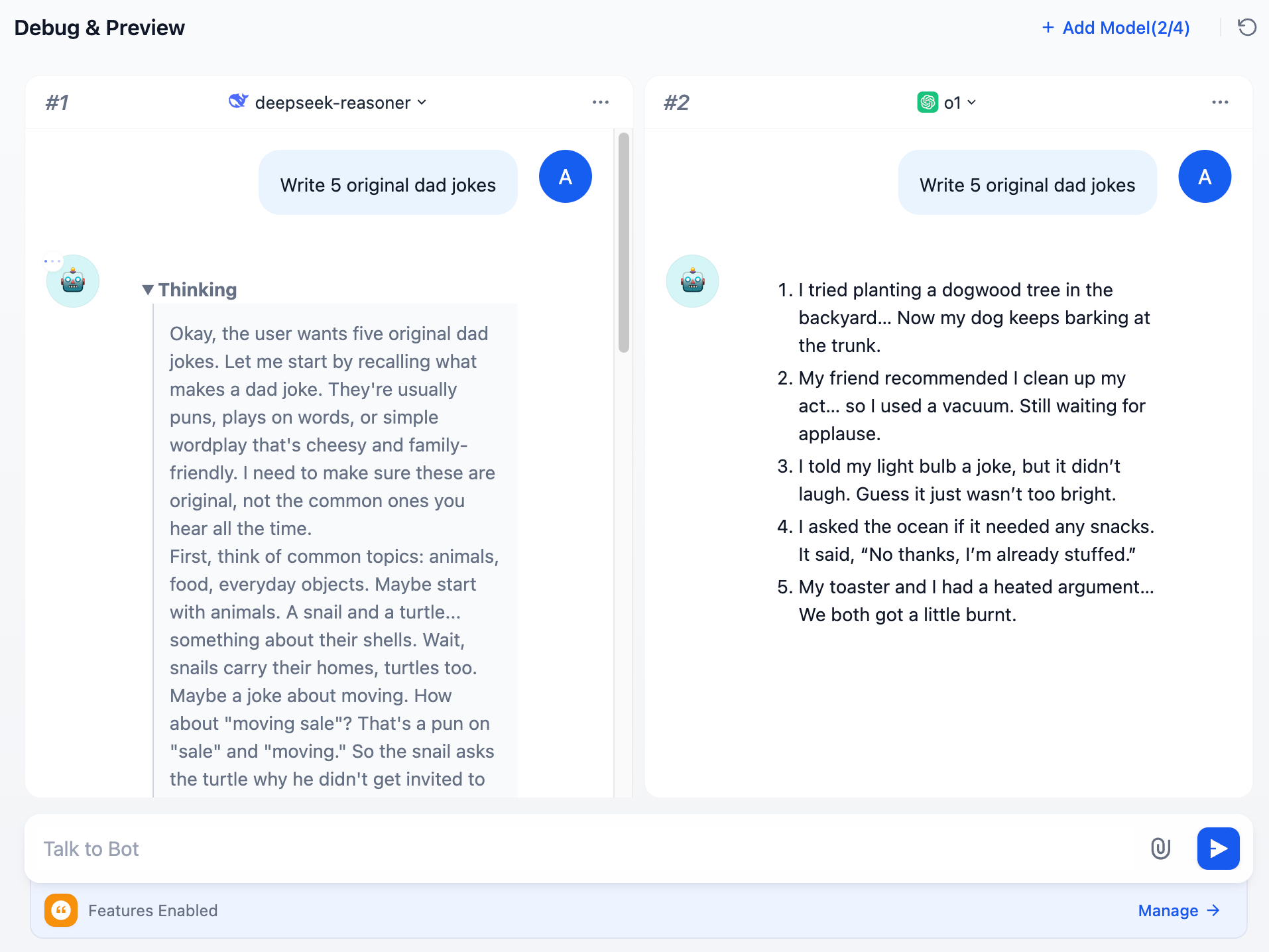

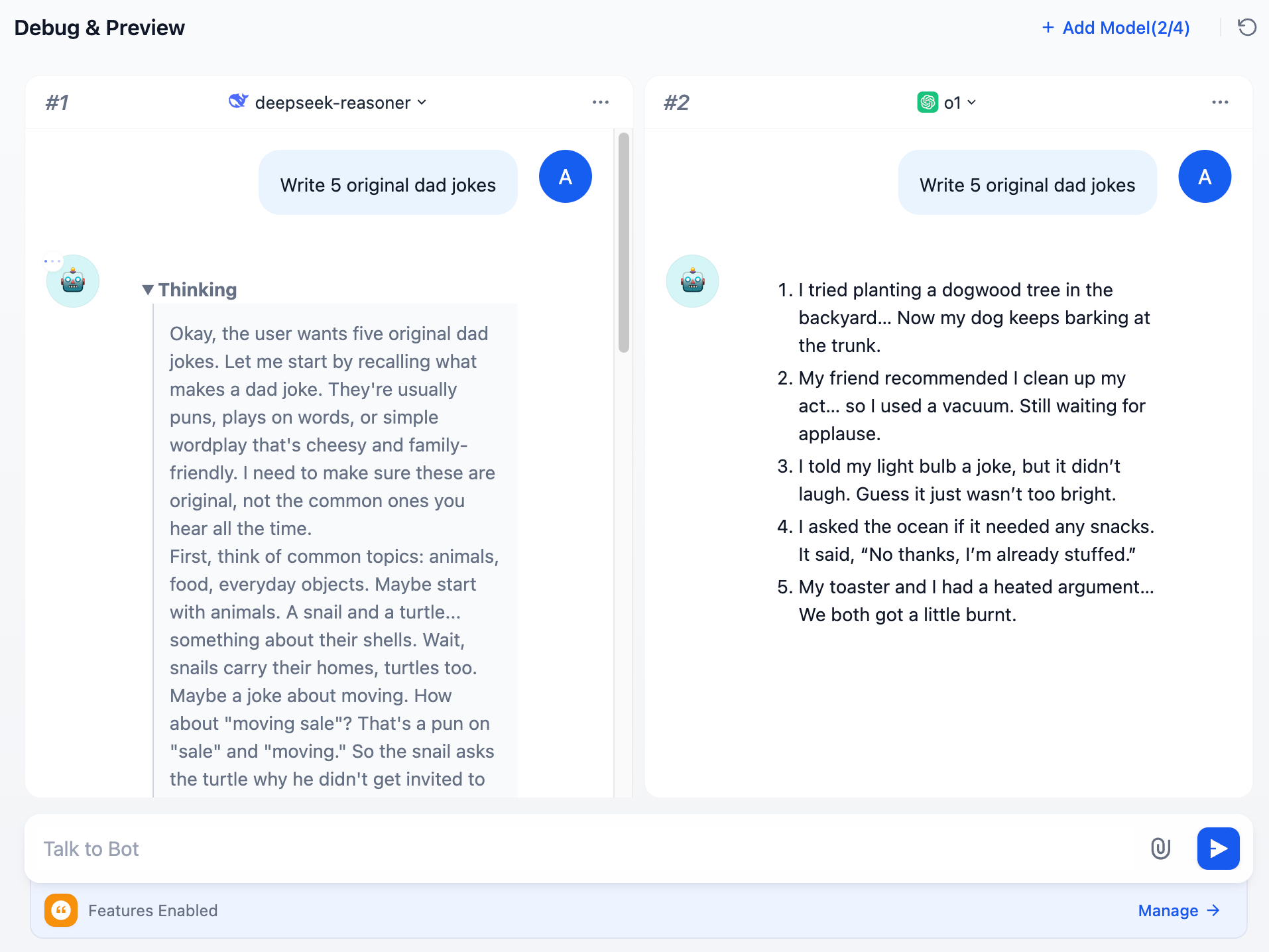

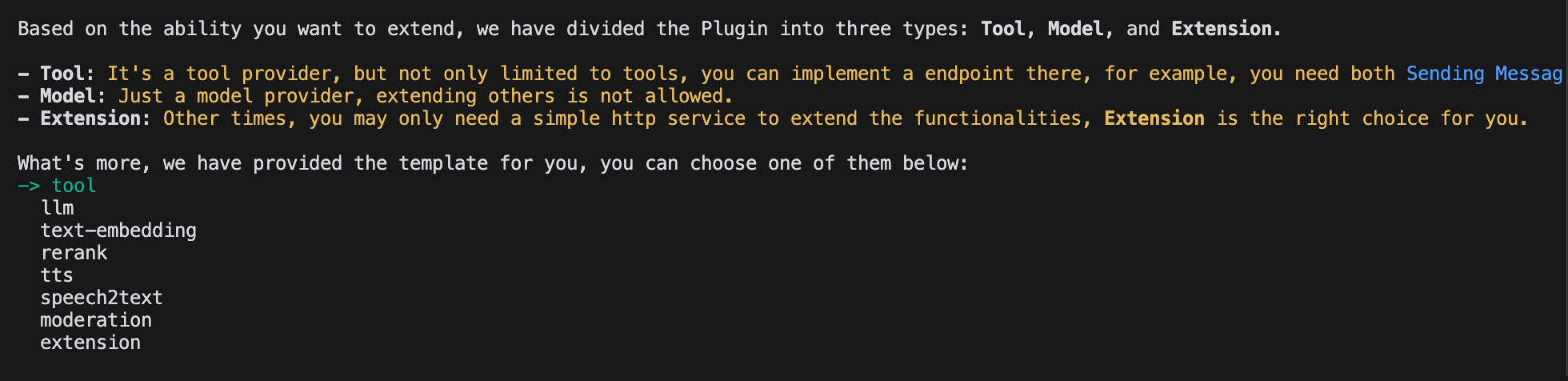

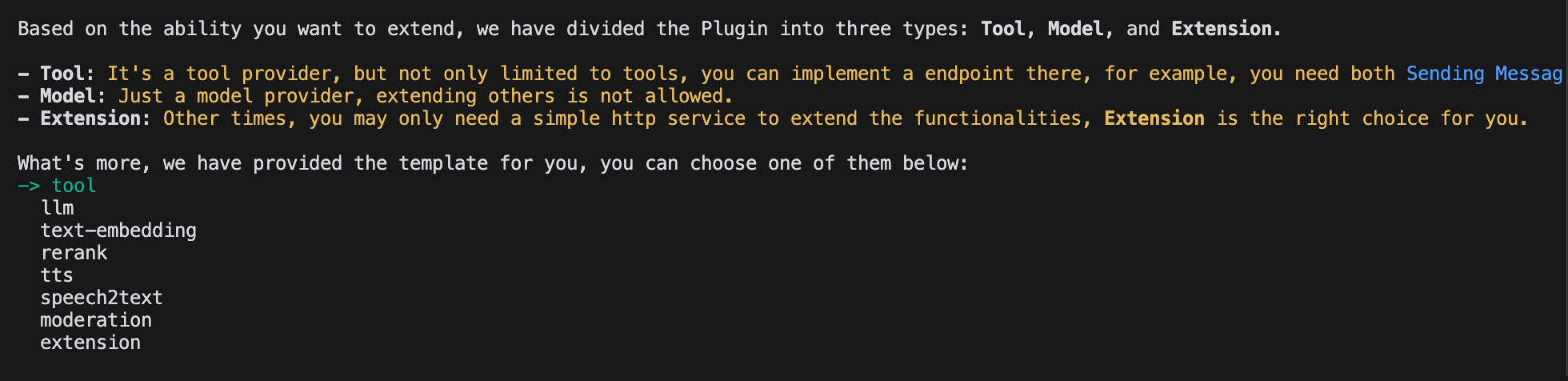

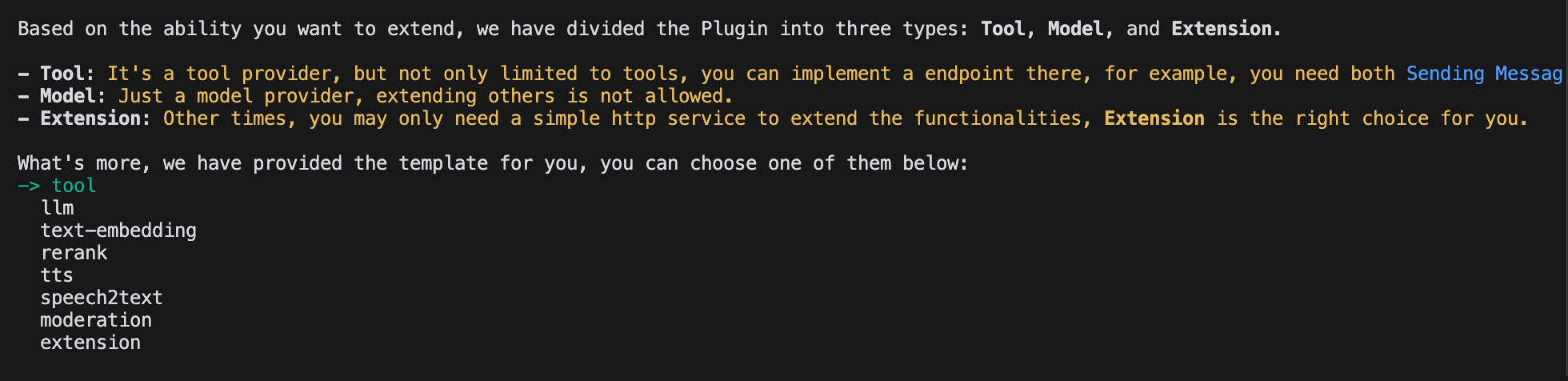

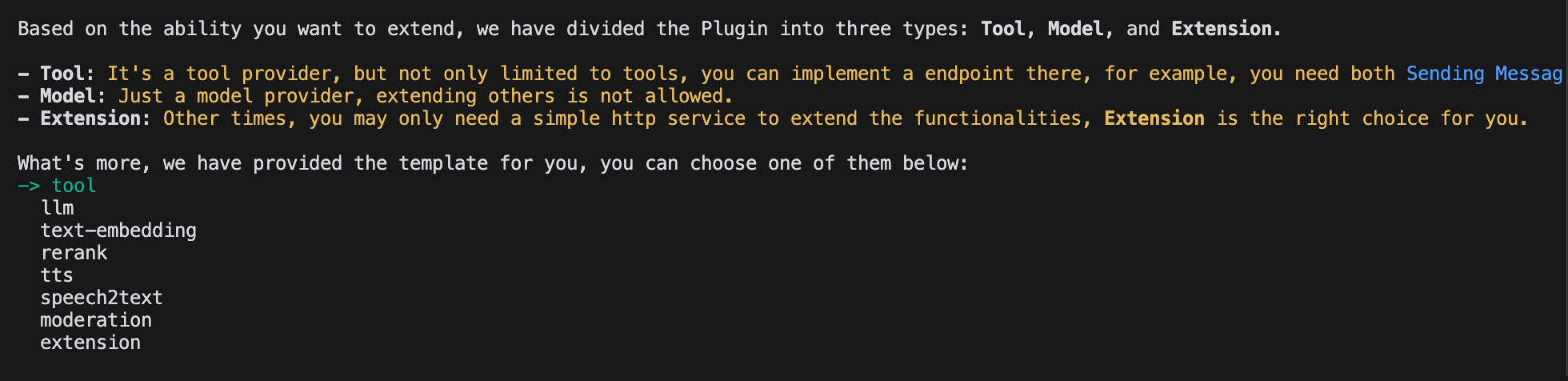

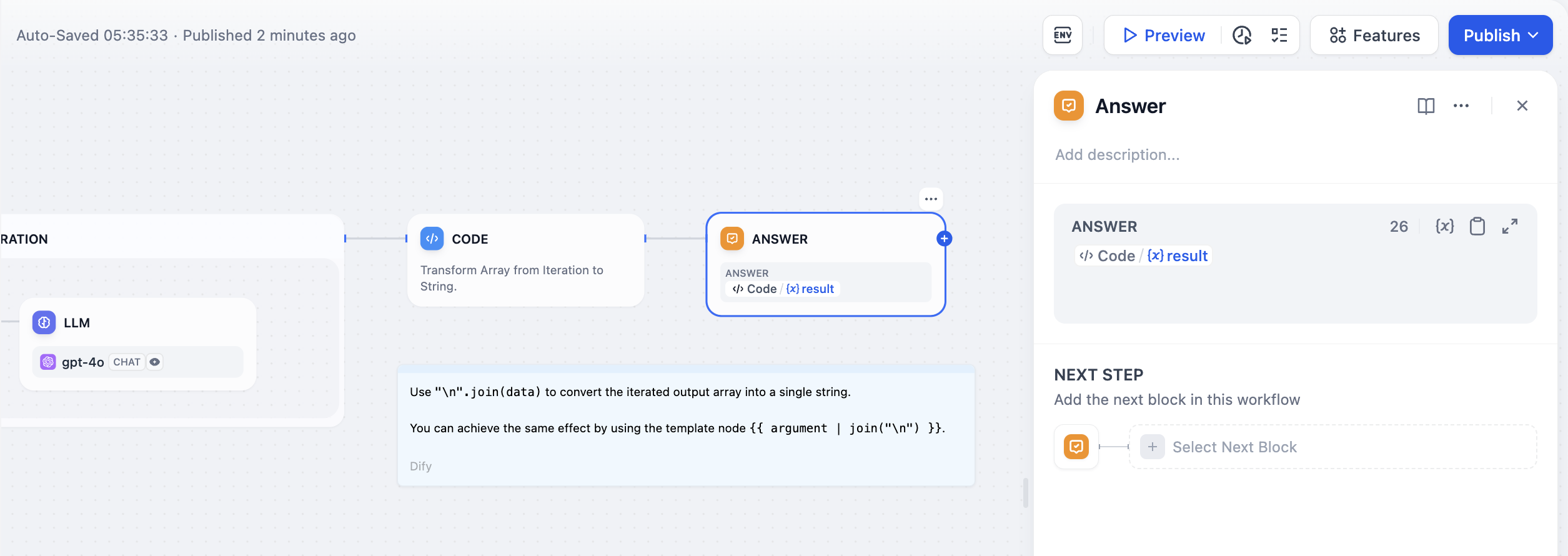

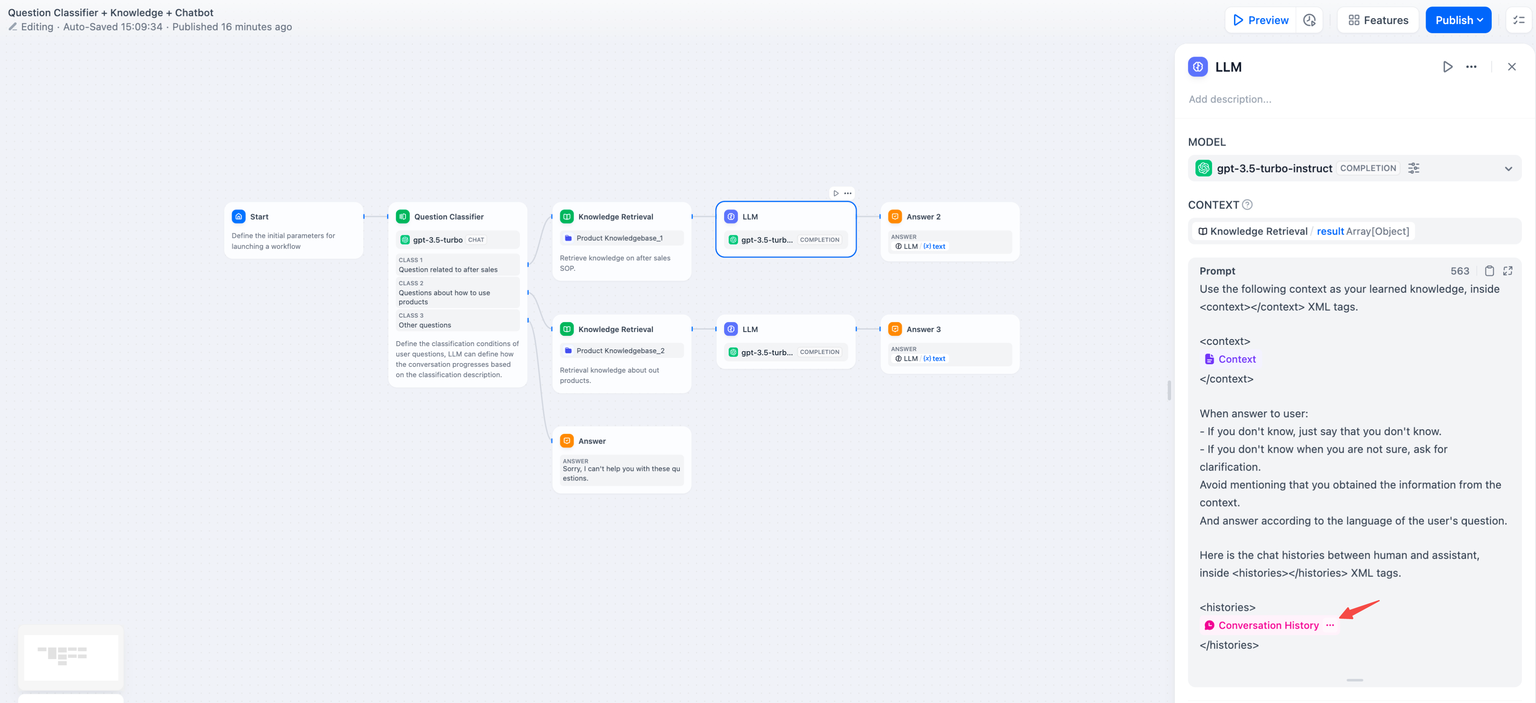

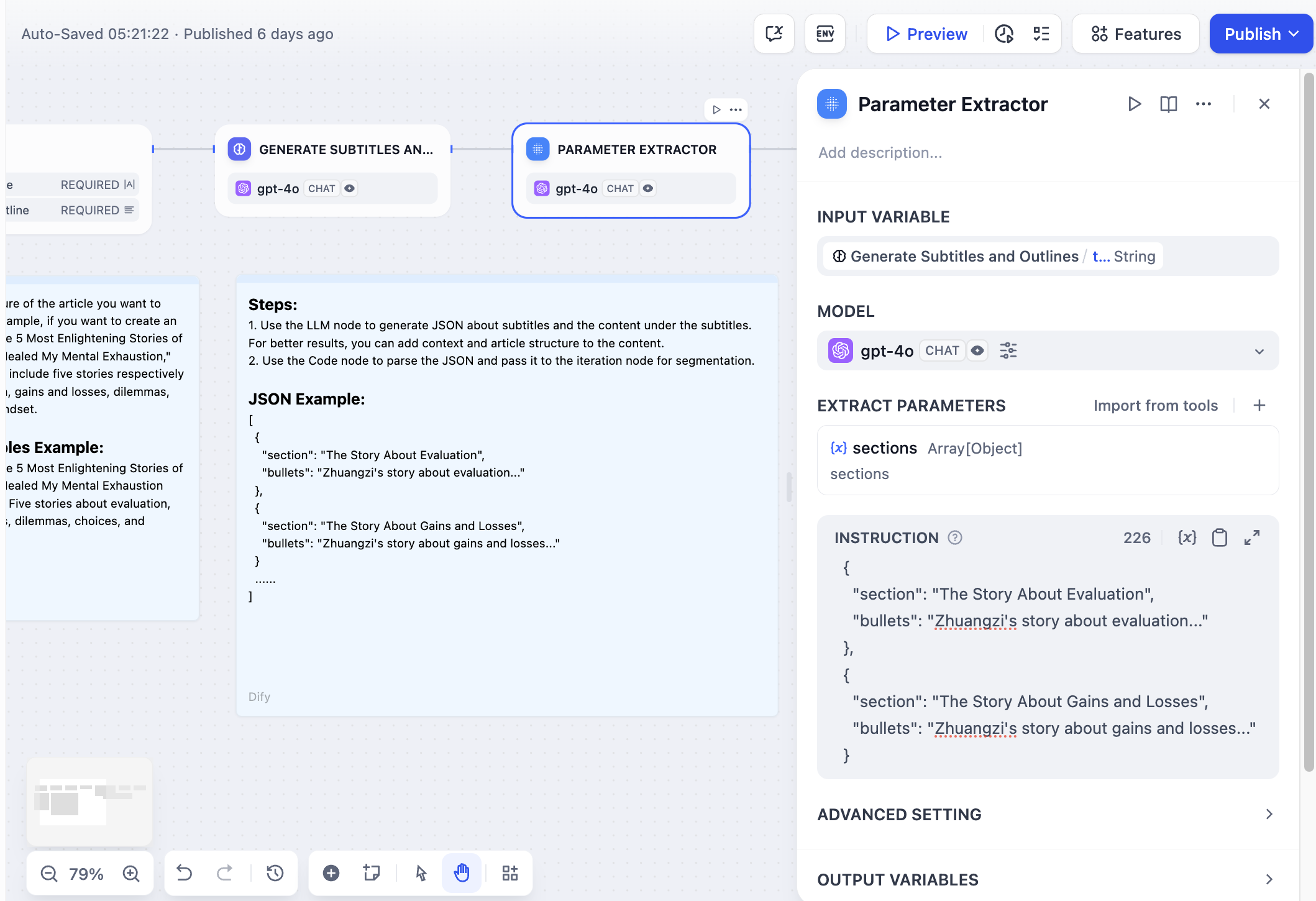

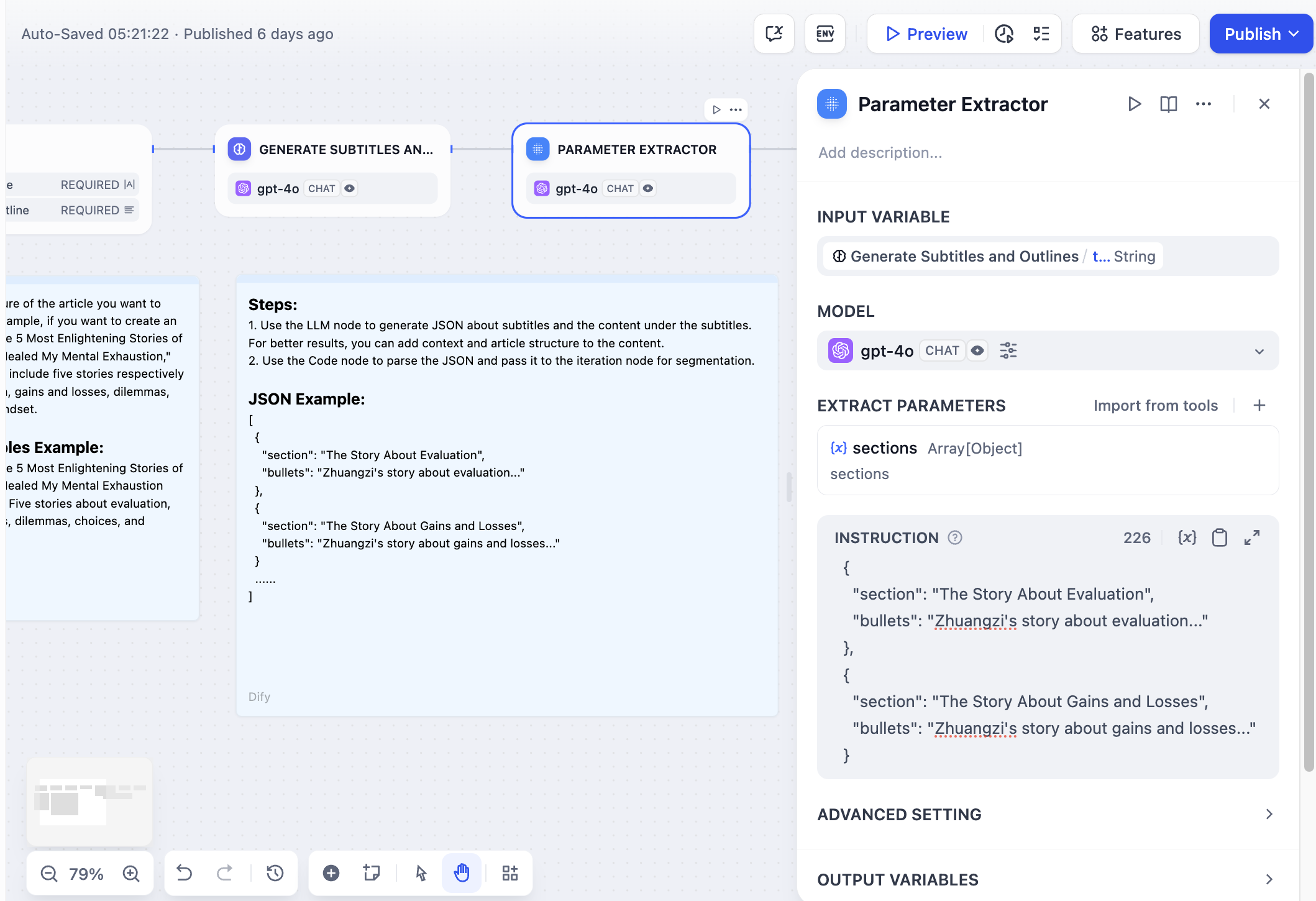

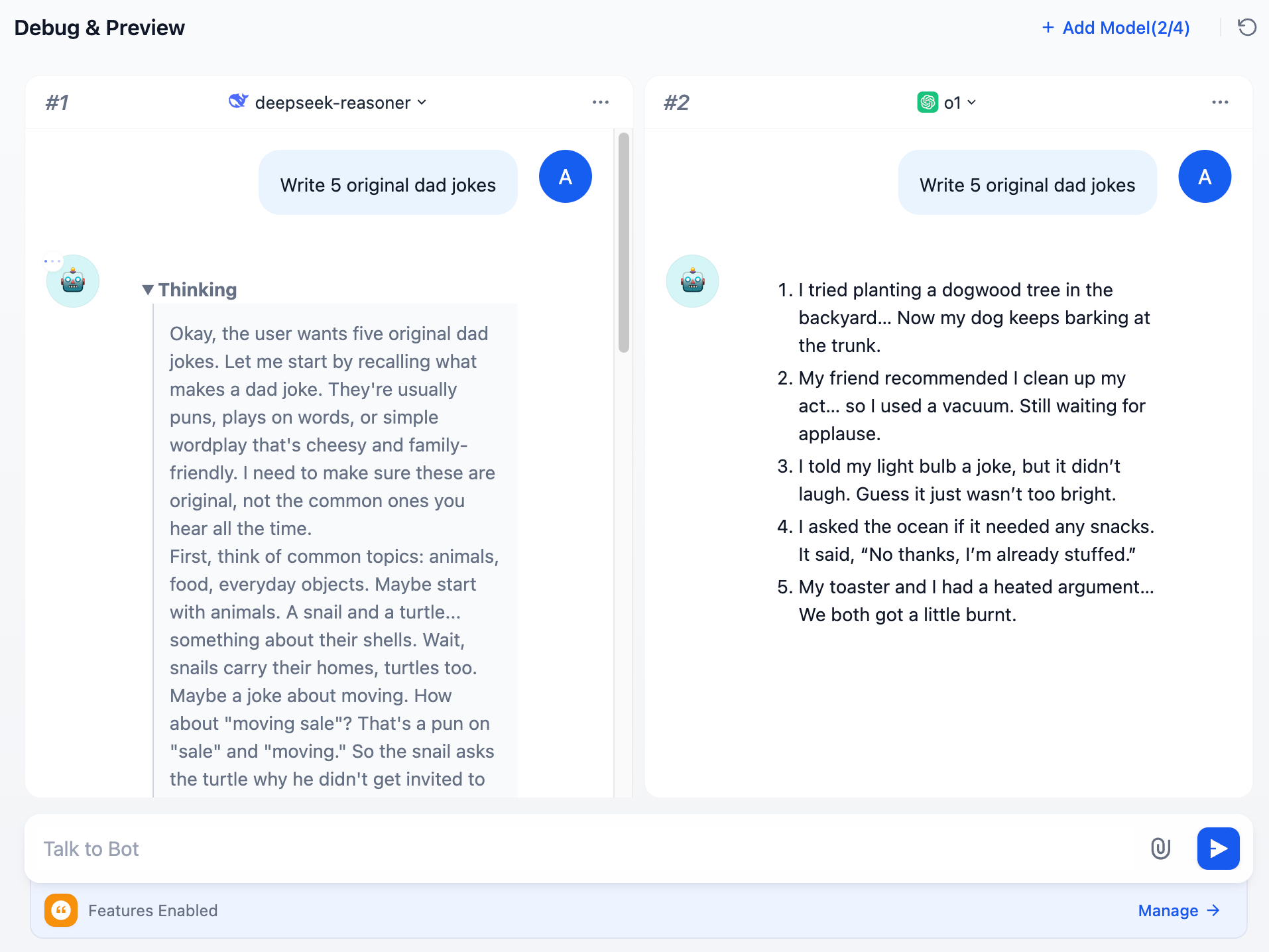

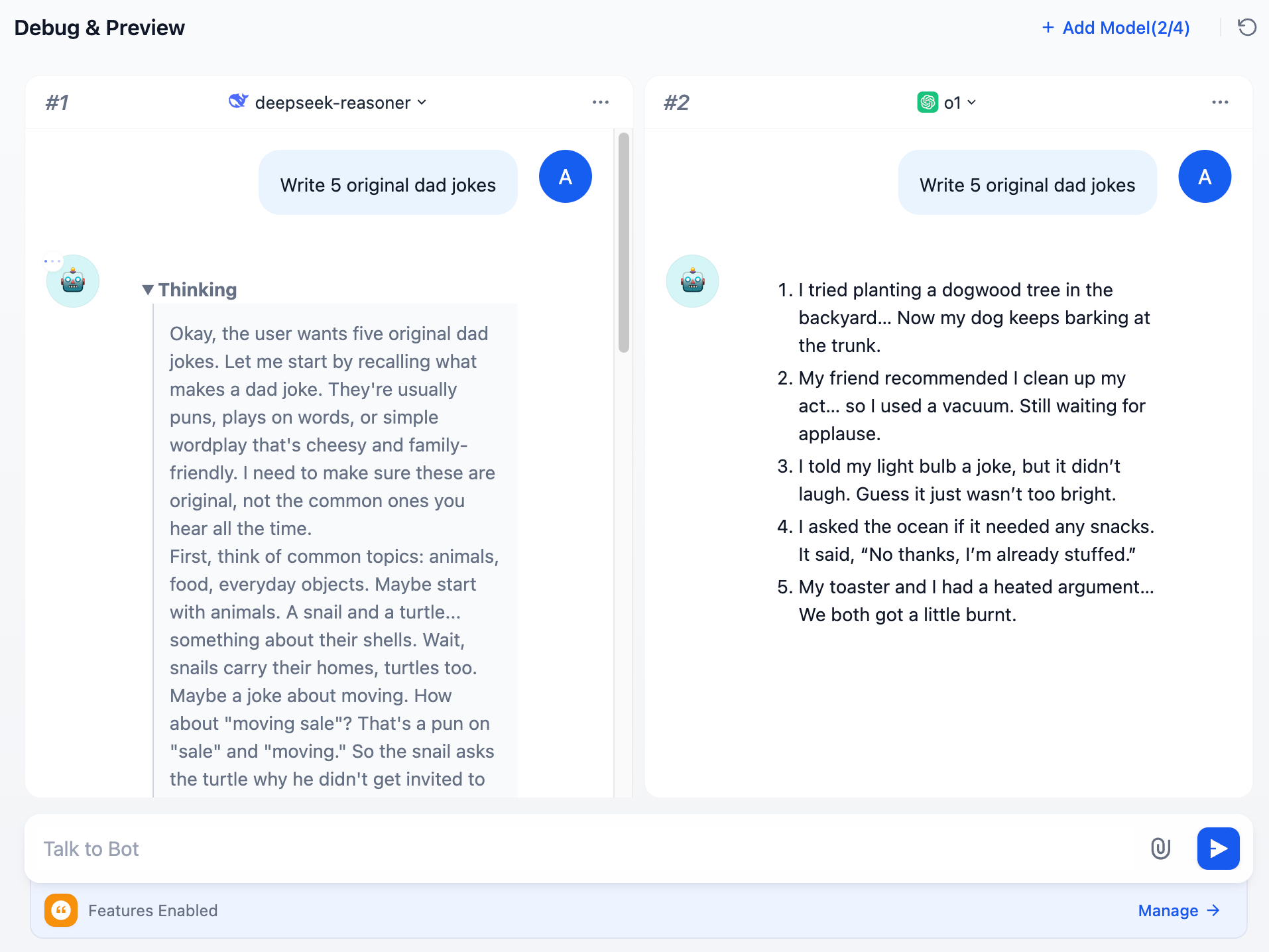

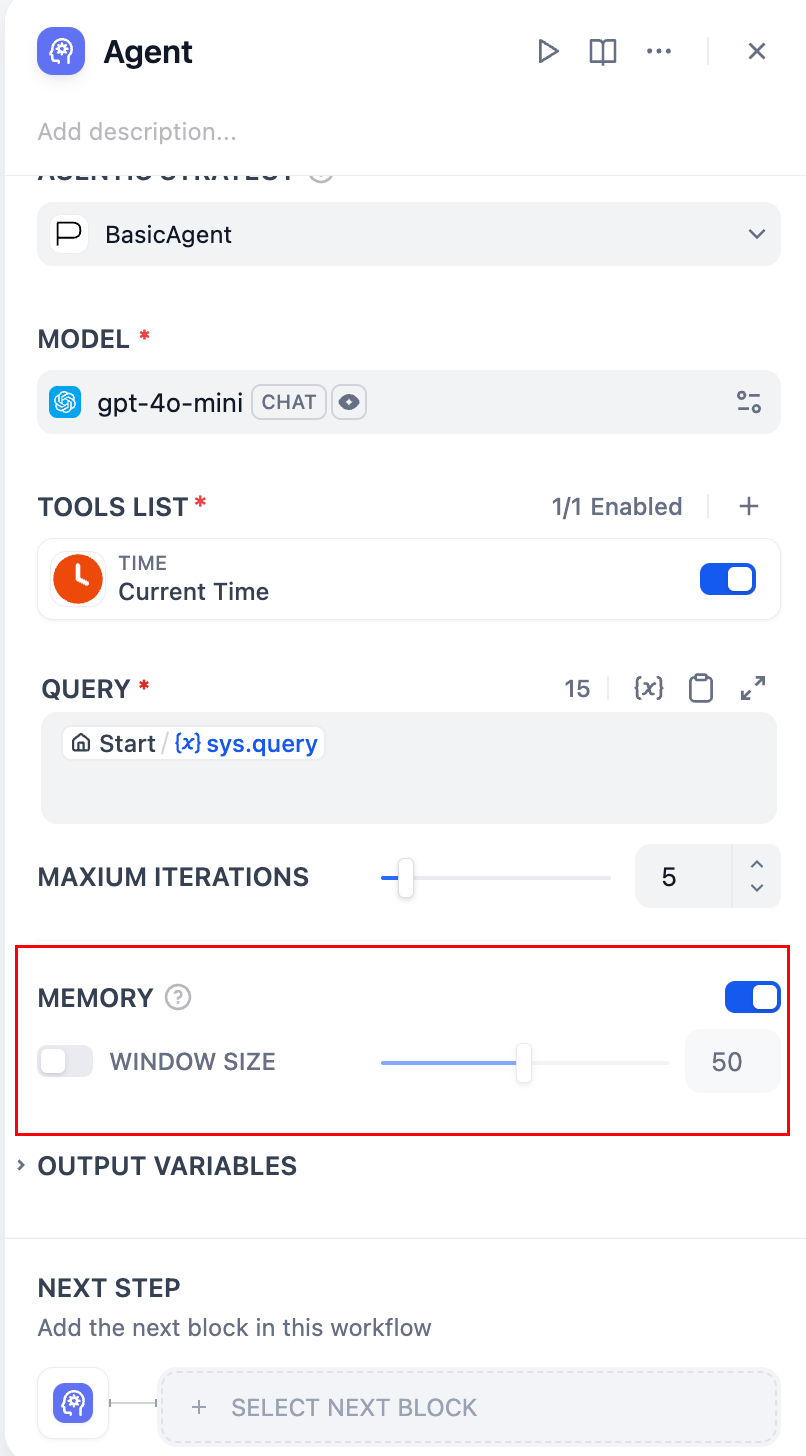

+The **Chatbot** application supports the **“Debug as Multiple Models”** feature, allowing you to simultaneously compare how different models respond to the same question.

+

+

+

+You can add up to **4** large language models at once.

+

+

+

+During debugging, if you find a model that performs well, click **“Debug as Single Model”** to switch to a dedicated preview window for the model.

+

+

+

+## FAQ

+

+### 1. Why aren't additional models visible when adding LLMs?

+

+Only models whose provider keys have already been added are listed. Go to [“Model Providers”](/guides/model-configuration), and follow the instructions to add the keys for the other models manually.

+

+### 2. How to exit Multiple Model Debugging mode?

+

+Select any model and click **“Debug as Single Model”** to exit multiple model debugging mode.

diff --git a/en/guides/application-orchestrate/readme.mdx b/en/guides/application-orchestrate/readme.mdx

index d7ddeb22..644690d4 100644

--- a/en/guides/application-orchestrate/readme.mdx

+++ b/en/guides/application-orchestrate/readme.mdx

@@ -1,5 +1,5 @@

---

-title: Introduction

+title: Application Orchestration

---

In Dify, an "application" refers to a practical scenario application built on large language models like GPT. By creating an application, you can apply intelligent AI technology to specific needs. It encompasses both the engineering paradigm for developing AI applications and the specific deliverables.

diff --git a/en/guides/application-publishing/based-on-frontend-templates.mdx b/en/guides/application-publishing/based-on-frontend-templates.mdx

index 77bf9a39..ae95fd7e 100644

--- a/en/guides/application-publishing/based-on-frontend-templates.mdx

+++ b/en/guides/application-publishing/based-on-frontend-templates.mdx

@@ -1,8 +1,7 @@

---

-title: Based on WebApp Template

+title: Re-develop Based on Frontend Templates

---

-

If you are developing a new product from scratch or are in the product prototype design phase, you can quickly launch AI sites using Dify. At the same time, Dify wants developers to be free to create different forms of front-end applications. For this reason, we provide:

* **SDK** for quick access to the Dify API in various languages

diff --git a/en/guides/application-publishing/launch-your-webapp-quickly/README.mdx b/en/guides/application-publishing/launch-your-webapp-quickly/README.mdx

index 4d60fc05..383fb405 100644

--- a/en/guides/application-publishing/launch-your-webapp-quickly/README.mdx

+++ b/en/guides/application-publishing/launch-your-webapp-quickly/README.mdx

@@ -2,7 +2,6 @@

title: Publish as a Single-page Web App

---

-

One of the benefits of creating AI applications with Dify is that you can publish a Single-page AI web app accessible to all users on the internet within minutes.

* If you're using the self-hosted open-source version, the application will run on your server

diff --git a/en/guides/application-publishing/launch-your-webapp-quickly/conversation-application.mdx b/en/guides/application-publishing/launch-your-webapp-quickly/conversation-application.mdx

index e82afc6b..5c986603 100644

--- a/en/guides/application-publishing/launch-your-webapp-quickly/conversation-application.mdx

+++ b/en/guides/application-publishing/launch-your-webapp-quickly/conversation-application.mdx

@@ -26,7 +26,9 @@ Fill in the necessary details and click the "Start Conversation" button to begin

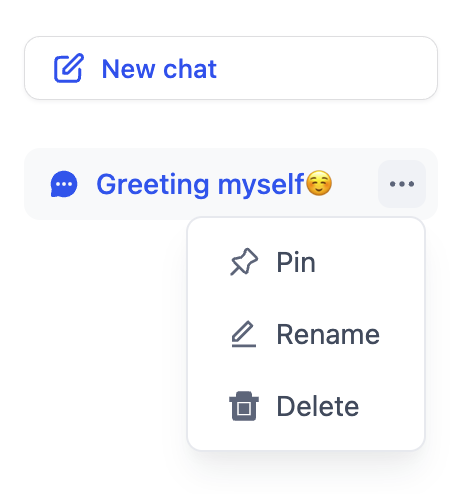

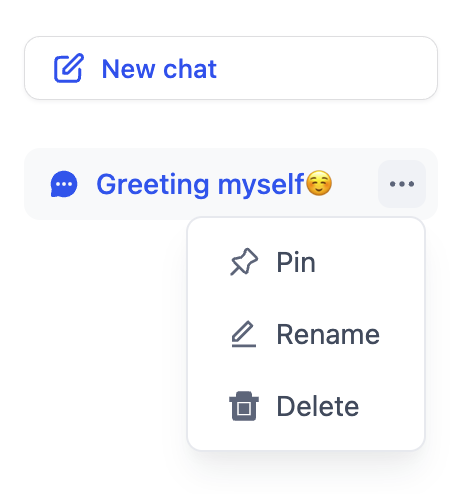

Click the "New Conversation" button to start a new conversation. Hover over a conversation to pin or delete it.

-

+

+  +

+

### Conversation Opener

diff --git a/en/guides/application-publishing/launch-your-webapp-quickly/web-app-settings.mdx b/en/guides/application-publishing/launch-your-webapp-quickly/web-app-settings.mdx

index 57512a61..c08f87e3 100644

--- a/en/guides/application-publishing/launch-your-webapp-quickly/web-app-settings.mdx

+++ b/en/guides/application-publishing/launch-your-webapp-quickly/web-app-settings.mdx

@@ -1,8 +1,7 @@

---

-title: Overview

+title: Web App Settings

---

-

Web applications are designed for application users. When an application developer creates an application on Dify, a corresponding web application is generated. Users of the web application can use it without logging in. The web application is adapted for various device sizes: PC, tablet, and mobile.

The content of the web application aligns with the configuration of the published application. When the application's configuration is modified and the "Publish" button is clicked on the prompt orchestration page, the web application's content will be updated according to the current configuration of the application.

diff --git a/en/guides/extension/api-based-extension/README.mdx b/en/guides/extension/api-based-extension/README.mdx

index bb6235b2..5b4e28e5 100644

--- a/en/guides/extension/api-based-extension/README.mdx

+++ b/en/guides/extension/api-based-extension/README.mdx

@@ -2,7 +2,6 @@

title: API-Based Extension

---

-

Developers can extend module capabilities through the API extension module. Currently supported module extensions include:

* `moderation`

@@ -38,14 +37,15 @@ POST {Your-API-Endpoint}

| `Authorization` |

- Bearer {api\_key} |

+ Bearer `{api_key}` |

The API Key is transmitted as a token. You need to parse the `api_key` and verify if it matches the provided API Key to ensure API security. |

+
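The header check described in the table can be sketched as follows. This is a minimal example, assuming the service already knows the expected key; the function name `is_authorized` is hypothetical, and `hmac.compare_digest` is used here only as a common way to compare secrets in constant time.

```python
import hmac


def is_authorized(authorization_header, expected_api_key):
    """Check an `Authorization: Bearer {api_key}` header against the expected key."""
    if not authorization_header:
        return False
    # Split the header into the scheme ("Bearer") and the token (the api_key).
    scheme, _, token = authorization_header.partition(" ")
    if scheme.lower() != "bearer" or not token:
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(token.strip().encode(), expected_api_key.encode())
```

A request handler would typically reject the call with an authorization error whenever this check returns `False`.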

### Request Body

-```JSON

+```json

{

"point": string, // Extension point, different modules may contain multiple extension points

"params": {

@@ -56,7 +56,7 @@ POST {Your-API-Endpoint}

### API Response

-```JSON

+```json

{

... // For the content returned by the API, see the specific module's design specifications for different extension points.

}

@@ -69,14 +69,14 @@ When configuring API-based Extension in Dify, Dify will send a request to the AP

### Header

-```JSON

+```BASH

Content-Type: application/json

Authorization: Bearer {api_key}

```

### Request Body

-```JSON

+```json

{

"point": "ping"

}

@@ -84,13 +84,12 @@ Authorization: Bearer {api_key}

### Expected API response

-```JSON

+```json

{

"result": "pong"

}

```
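The `ping` check above can be reproduced from a script before registering an endpoint in Dify. The sketch below uses only the Python standard library; the function names `build_ping_request`, `is_pong`, and `ping` are hypothetical helpers for this example, and the request shape is taken from the header and body shown above.

```python
import json
import urllib.request


def build_ping_request(endpoint, api_key):
    """Build the POST request that Dify is described as sending to verify an endpoint."""
    body = json.dumps({"point": "ping"}).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
    )


def is_pong(response_body):
    """Return True if the response body matches the expected {"result": "pong"}."""
    try:
        payload = json.loads(response_body)
    except (TypeError, ValueError):
        return False
    return isinstance(payload, dict) and payload.get("result") == "pong"


def ping(endpoint, api_key, timeout=5):
    """Send the ping and report whether the service answered with pong."""
    request = build_ping_request(endpoint, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return is_pong(response.read())
```

For instance, `ping("http://127.0.0.1:8000/api/dify/receive", "123456")` would exercise a service listening at the example address and API Key used later in this guide, assuming that service is running.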

-\\

## For Example

@@ -102,14 +101,14 @@ Here we take the external data tool as an example, where the scenario is to retr

### **Header**

-```JSON

+```BASH

Content-Type: application/json

Authorization: Bearer 123456

```

### **Request Body**

-```JSON

+```json

{

"point": "app.external_data_tool.query",

"params": {

@@ -125,7 +124,7 @@ Authorization: Bearer 123456

### **API Response**

-```JSON

+```json

{

"result": "City: London\nTemperature: 10°C\nRealFeel®: 8°C\nAir Quality: Poor\nWind Direction: ENE\nWind Speed: 8 km/h\nWind Gusts: 14 km/h\nPrecipitation: Light rain"

}

@@ -137,14 +136,16 @@ The code is based on the Python FastAPI framework.

#### **Install dependencies.**

-pip install 'fastapi[all]' uvicorn

-

+```bash

+pip install 'fastapi[all]' uvicorn

+```

#### Write code according to the interface specifications.

-from fastapi import FastAPI, Body, HTTPException, Header

-from pydantic import BaseModel

-

+```python

+from fastapi import FastAPI, Body, HTTPException, Header

+from pydantic import BaseModel

+

app = FastAPI()

@@ -202,14 +203,15 @@ def handle_app_external_data_tool_query(params: dict):

}

else:

return {"result": "Unknown city"}

-

+```

#### Launch the API service.

The default port is 8000. The complete address of the API is `http://127.0.0.1:8000/api/dify/receive`, with the configured API Key '123456'.

-uvicorn main:app --reload --host 0.0.0.0

-

+```bash

+uvicorn main:app --reload --host 0.0.0.0

+```

#### Configure this API in Dify.

@@ -221,7 +223,7 @@ The default port is 8000. The complete address of the API is: `http://127.0.0.1:

When debugging the App, Dify will request the configured API and send the following content (example):

-```JSON

+```json

{

"point": "app.external_data_tool.query",

"params": {

@@ -237,7 +239,7 @@ When debugging the App, Dify will request the configured API and send the follow

API Response:

-```JSON

+```json

{

"result": "City: London\nTemperature: 10°C\nRealFeel®: 8°C\nAir Quality: Poor\nWind Direction: ENE\nWind Speed: 8 km/h\nWind Gusts: 14 km/h\nPrecipitation: Light rain"

}

@@ -249,11 +251,11 @@ Since Dify's cloud version can't access internal network API services, you can u

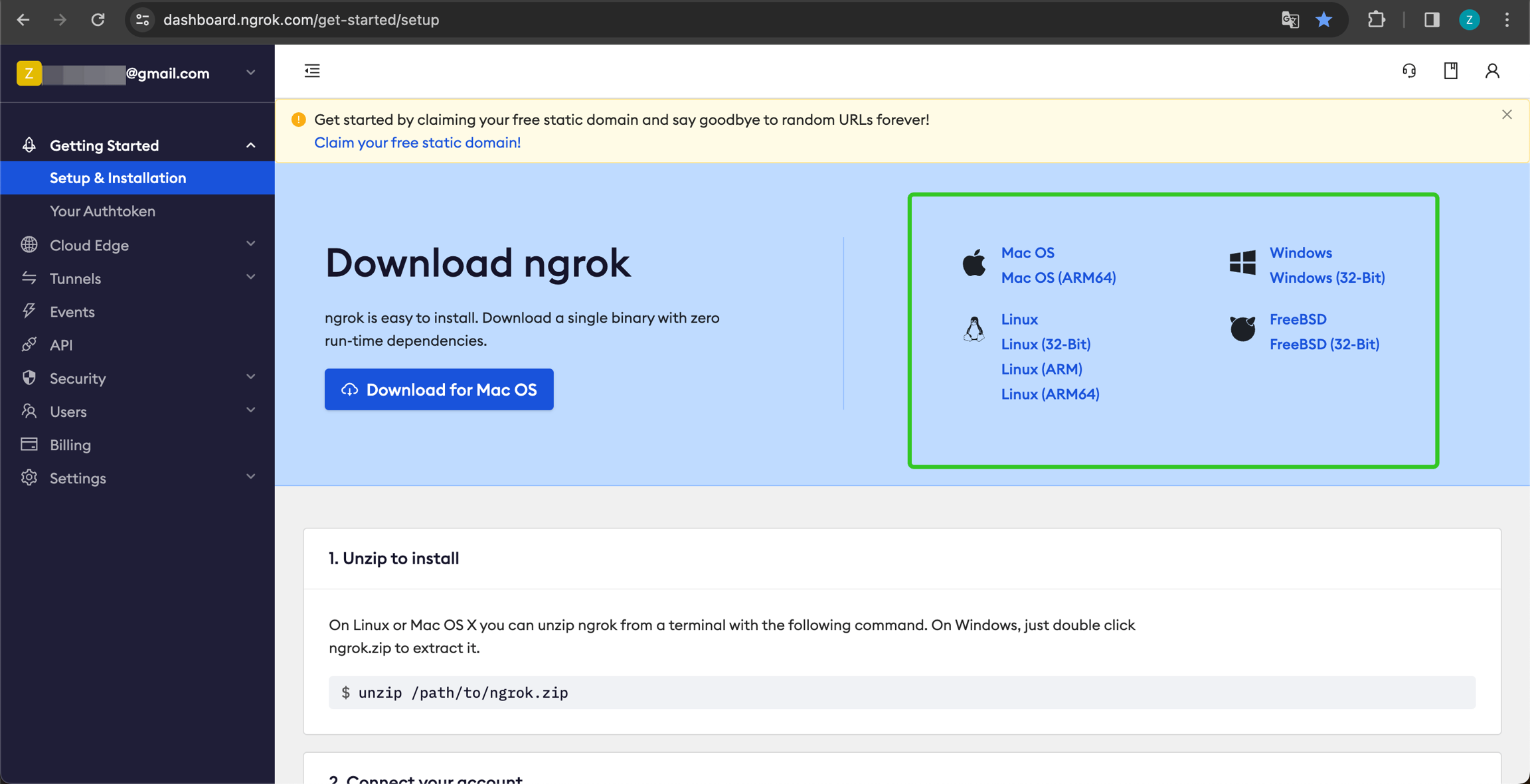

1. Visit the Ngrok official website at [https://ngrok.com](https://ngrok.com/), register, and download the Ngrok file.

-

+

2. After downloading, go to the download directory. Unzip the package and run the initialization script as instructed:

-```

+```bash

$ unzip /path/to/ngrok.zip

$ ./ngrok config add-authtoken <your-token>

```

@@ -264,7 +266,7 @@ $ ./ngrok config add-authtoken <your-token>

Run the following command to start:

-```

+```bash

$ ./ngrok http [port number]

```

@@ -282,4 +284,4 @@ Now, this API endpoint is accessible publicly. You can configure this endpoint i

We recommend that you use Cloudflare Workers to deploy your API extension, because Cloudflare Workers can easily provide a public address and can be used for free.

-[cloudflare-workers](cloudflare-workers)

+[Deploy API Tools with Cloudflare Workers](cloudflare-workers)

diff --git a/en/guides/extension/api-based-extension/cloudflare-workers.mdx b/en/guides/extension/api-based-extension/cloudflare-workers.mdx

index 5dade47d..cb92a107 100644

--- a/en/guides/extension/api-based-extension/cloudflare-workers.mdx

+++ b/en/guides/extension/api-based-extension/cloudflare-workers.mdx

@@ -7,7 +7,7 @@ title: Deploy API Tools with Cloudflare Workers

Since the Dify API Extension requires a publicly accessible internet address as an API Endpoint, we need to deploy our API extension to a public internet address. Here, we use Cloudflare Workers for deploying our API extension.

-We clone the [Example GitHub Repository](https://github.com/crazywoola/dify-extension-workers), which contains a simple API extension. We can modify this as a base.

+Clone the [Example GitHub Repository](https://github.com/crazywoola/dify-extension-workers), which contains a simple API extension. We can modify this as a base.

```bash

git clone https://github.com/crazywoola/dify-extension-workers.git

@@ -59,6 +59,32 @@ alt=""

/>

+Additionally, you can use the `npm run dev` command to deploy locally for testing.

+

+```bash

+npm install

+npm run dev

+```

+

+Related output:

+

+```bash

+$ npm run dev

+> dev

+> wrangler dev src/index.ts

+

+ ⛅️ wrangler 3.99.0

+-------------------

+

+Your worker has access to the following bindings:

+- Vars:

+ - TOKEN: "ban****ool"

+⎔ Starting local server...

+[wrangler:inf] Ready on http://localhost:58445

+```

+

+After this, you can use tools like Postman to debug the local interface.

+

## Other Logic TL;DR

### About Bearer Auth

diff --git a/en/guides/extension/api-based-extension/external-data-tool.mdx b/en/guides/extension/api-based-extension/external-data-tool.mdx

index 3f6da5c1..dfea1091 100644

--- a/en/guides/extension/api-based-extension/external-data-tool.mdx

+++ b/en/guides/extension/api-based-extension/external-data-tool.mdx

@@ -2,7 +2,6 @@

title: External Data Tools

---

-

External data tools are used to fetch additional data from external sources after the end user submits data, and then assemble this data into prompts as additional context information for the LLM. Dify provides a default tool for external API calls; see [External Data Tool](https://docs.dify.ai/guides/knowledge-base/external-data-tool) for details.

For developers deploying Dify locally, to meet more customized needs or to avoid developing an additional API Server, you can directly insert custom external data tool logic in the form of a plugin based on the Dify service. After extending custom tools, your custom tool options will be added to the dropdown list of tool types, and team members can use these custom tools to fetch external data.

@@ -17,7 +16,7 @@ Here is an example of extending an external data tool for `Weather Search`, with

4. Preview the frontend interface

5. Debug the extension

-### 1. **Initialize the Directory**

+### 1. Initialize the Directory

To add a custom type `Weather Search`, you need to create the relevant directory and files under `api/core/external_data_tool`.

@@ -32,7 +31,7 @@ To add a custom type `Weather Search`, you need to create the relevant directory

└── schema.json

```

-### 2. **Add Frontend Component Specifications**

+### 2. Add Frontend Component Specifications

* `schema.json`, which defines the frontend component specifications, detailed in [.](./ "mention")

@@ -79,7 +78,7 @@ To add a custom type `Weather Search`, you need to create the relevant directory

`weather_search.py` code template, where you can implement the specific business logic.

-Note: The class variable `name` must be the custom type name, consistent with the directory and file name, and must be unique.

+ The class variable `name` must be the custom type name, consistent with the directory and file name, and must be unique.

```python

@@ -130,17 +129,12 @@ class WeatherSearch(ExternalDataTool):

return f'Weather in {city} is 0°C'

```

-

-

-### 4. **Debug the Extension**

+### 4. Debug the Extension

Now, you can select the custom `Weather Search` external data tool extension type in the Dify application orchestration interface for debugging.

-## Implementation Class Template

+#### Implementation Class Template

```python

from typing import Optional

@@ -181,7 +175,7 @@ class WeatherSearch(ExternalDataTool):

### Detailed Introduction to Implementation Class Development

-### def validate_config

+#### def validate_config

`schema.json` form validation method, called when the user clicks "Publish" to save the configuration.

@@ -193,4 +187,4 @@ class WeatherSearch(ExternalDataTool):

User-defined data query implementation. The returned result is substituted into the specified variable.

* `inputs`: Variables passed by the end user

-* `query`: Current conversation input content from the end user, a fixed parameter for conversational applications.

\ No newline at end of file

+* `query`: Current conversation input content from the end user, a fixed parameter for conversational applications.

diff --git a/en/guides/extension/api-based-extension/moderation-extension.mdx b/en/guides/extension/api-based-extension/moderation-extension.mdx

index 1a7eb9e7..9fb59d68 100644

--- a/en/guides/extension/api-based-extension/moderation-extension.mdx

+++ b/en/guides/extension/api-based-extension/moderation-extension.mdx

@@ -2,7 +2,6 @@

title: Moderation

---

-

This module is used to review the content input by end-users and the output from LLMs within the application, divided into two types of extension points.

Please read [.](./ "mention") to complete the development and integration of basic API service capabilities.

diff --git a/en/guides/extension/api-based-extension/moderation.mdx b/en/guides/extension/api-based-extension/moderation.mdx

index 5839fdd3..724e1eab 100644

--- a/en/guides/extension/api-based-extension/moderation.mdx

+++ b/en/guides/extension/api-based-extension/moderation.mdx

@@ -2,7 +2,6 @@

title: Sensitive Content Moderation

---

-

This module is used to review the content input by end-users and the output content of the LLM within the application. It is divided into two types of extension points.

### Extension Points

@@ -17,7 +16,7 @@ This module is used to review the content input by end-users and the output cont

#### Request Body

-```

+```json

{

"point": "app.moderation.input", // Extension point type, fixed as app.moderation.input here

"params": {

diff --git a/en/guides/extension/code-based-extension/external-data-tool.mdx b/en/guides/extension/code-based-extension/external-data-tool.mdx

index 1d06366f..66fd52d6 100644

--- a/en/guides/extension/code-based-extension/external-data-tool.mdx

+++ b/en/guides/extension/code-based-extension/external-data-tool.mdx

@@ -2,7 +2,6 @@

title: External Data Tools

---

-

External data tools are used to fetch additional data from external sources after the end user submits data, and then assemble this data into prompts as additional context information for the LLM. Dify provides a default tool for external API calls, check [api-based-extension](/en/guides/extension/api-based-extension) for details.

For developers deploying Dify locally, to meet more customized needs or to avoid developing an additional API Server, you can directly insert custom external data tool logic in the form of a plugin based on the Dify service. After extending custom tools, your custom tool options will be added to the dropdown list of tool types, and team members can use these custom tools to fetch external data.

@@ -34,7 +33,7 @@ To add a custom type `Weather Search`, you need to create the relevant directory

### 2. **Add Frontend Component Specifications**

-* `schema.json`, which defines the frontend component specifications, detailed in [Code Based Extension](.)

+* `schema.json`, which defines the frontend component specifications, detailed in [.](./ "mention")

```json

{

@@ -78,9 +77,9 @@ To add a custom type `Weather Search`, you need to create the relevant directory

`weather_search.py` code template, where you can implement the specific business logic.

-

+

Note: The class variable `name` must be the custom type name, consistent with the directory and file name, and must be unique.

-

+

```python

from typing import Optional

@@ -130,12 +129,6 @@ class WeatherSearch(ExternalDataTool):

return f'Weather in {city} is 0°C'

```

-

-

-

-

### 4. **Debug the Extension**

Now, you can select the custom `Weather Search` external data tool extension type in the Dify application orchestration interface for debugging.

@@ -193,4 +186,4 @@ class WeatherSearch(ExternalDataTool):

User-defined data query implementation, the returned result will be replaced into the specified variable.

* `inputs`: Variables passed by the end user

-* `query`: Current conversation input content from the end user, a fixed parameter for conversational applications.

\ No newline at end of file

+* `query`: Current conversation input content from the end user, a fixed parameter for conversational applications.
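
Reviewer sketch for the `validate_config` / `query` contract described in this file. It is a minimal, self-contained illustration, not the Dify implementation: the `ExternalDataTool` class below is a stand-in for the real base class, the `temperature_unit` form field is hypothetical, and the real method signatures in the Dify codebase may take additional arguments.

```python
from typing import Optional


class ExternalDataTool:
    """Stand-in for Dify's base class so that this sketch runs on its own."""

    name: str = ""

    def __init__(self, config: Optional[dict] = None) -> None:
        self.config = config or {}


class WeatherSearch(ExternalDataTool):
    # Must match the custom type name, the directory name and the file name.
    name: str = "weather_search"

    @classmethod
    def validate_config(cls, config: dict) -> None:
        """Check the schema.json form values when the user saves the configuration."""
        # `temperature_unit` is a hypothetical form field used for illustration.
        if not config.get("temperature_unit"):
            raise ValueError("temperature unit is required")

    def query(self, inputs: dict, query: Optional[str] = None) -> str:
        """Return the text that replaces the configured variable in the prompt."""
        # `inputs` holds variables passed by the end user; `query` is the
        # current conversation input (conversational applications only).
        city = inputs.get("city", "")
        return f"Weather in {city} is 0°C"
```

Calling `WeatherSearch({"temperature_unit": "Centigrade"}).query({"city": "London"})` returns the placeholder string from the template; a real tool would call a weather service at that point.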

diff --git a/en/guides/extension/code-based-extension/moderation.mdx b/en/guides/extension/code-based-extension/moderation.mdx

index 7a16678b..6aae34de 100644

--- a/en/guides/extension/code-based-extension/moderation.mdx

+++ b/en/guides/extension/code-based-extension/moderation.mdx

@@ -2,7 +2,6 @@

title: Sensitive Content Moderation

---

-

In addition to the system's built-in content moderation types, Dify also supports user-defined content moderation rules. This method is suitable for developers customizing their own private deployments. For instance, in an enterprise internal customer service setup, it may be required that users, while querying or customer service agents while responding, not only avoid entering words related to violence, sex, and illegal activities but also avoid specific terms forbidden by the enterprise or violating internally established moderation logic. Developers can extend custom content moderation rules at the code level in a private deployment of Dify.

## Quick Start

@@ -19,7 +18,7 @@ Here is an example of extending a `Cloud Service` content moderation type, with

To add a custom type `Cloud Service`, create the relevant directories and files under the `api/core/moderation` directory.

-```Plain

+```plaintext

.

└── api

└── core

@@ -32,7 +31,7 @@ To add a custom type `Cloud Service`, create the relevant directories and files

### 2. Add Frontend Component Specifications

-* `schema.json`: This file defines the frontend component specifications. For details, see [.](./ "mention").

+* `schema.json`: This file defines the frontend component specifications.

```json

{

@@ -106,9 +105,9 @@ To add a custom type `Cloud Service`, create the relevant directories and files

`cloud_service.py` code template where you can implement specific business logic.

-

+

Note: The class variable name must be the same as the custom type name, matching the directory and file names, and must be unique.

-

+

```python

from core.moderation.base import Moderation, ModerationAction, ModerationInputsResult, ModerationOutputsResult

@@ -205,12 +204,6 @@ class CloudServiceModeration(Moderation):

return False

```

-

-

-

-

### 4. Debug the Extension

At this point, you can select the custom `Cloud Service` content moderation extension type for debugging in the Dify application orchestration interface.

@@ -278,7 +271,7 @@ class CloudServiceModeration(Moderation):

The `schema.json` form validation method is called when the user clicks "Publish" to save the configuration.

* `config` form parameters

- * `{{variable}}` custom variable of the form

+ * `{variable}` custom variable of the form

* `inputs_config` input moderation preset response

* `enabled` whether it is enabled

* `preset_response` input preset response

@@ -314,4 +307,5 @@ Output validation function

* `direct_output`: directly output the preset response

* `overridden`: override the passed variable values

* `preset_response`: preset response (returned only when action=direct_output)

- * `text`: overridden content of the LLM response (returned only when action=overridden).

\ No newline at end of file

+ * `text`: overridden content of the LLM response (returned only when action=overridden).

+
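
Reviewer sketch for the two output actions listed above (`direct_output` and `overridden`). This is a minimal keyword-based illustration under stated assumptions: `OutputsResult` is a stand-in type rather than Dify's `ModerationOutputsResult`, the `flagged` field and the masking strategy are hypothetical, and `moderate_output` is not a function from the Dify codebase.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class OutputsResult:
    """Stand-in result type for this sketch (not Dify's ModerationOutputsResult)."""

    flagged: bool
    action: str
    preset_response: str = ""  # only set when action == "direct_output"
    text: str = ""             # only set when action == "overridden"


def moderate_output(text: str, keywords: List[str], action: str,
                    preset_response: str) -> OutputsResult:
    """Flag LLM output that contains any keyword, case-insensitively."""
    hits = [k for k in keywords if k and k.lower() in text.lower()]
    if not hits:
        return OutputsResult(flagged=False, action=action)
    if action == "direct_output":
        # Replace the whole answer with the preset response.
        return OutputsResult(flagged=True, action=action,
                             preset_response=preset_response)
    # "overridden": return the answer with each keyword masked.
    cleaned = text
    for keyword in hits:
        cleaned = re.sub(re.escape(keyword), "*" * len(keyword), cleaned,
                         flags=re.IGNORECASE)
    return OutputsResult(flagged=True, action="overridden", text=cleaned)
```

The split mirrors the field rules in the list above: `preset_response` is populated only for `direct_output`, and `text` only for `overridden`.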

diff --git a/en/guides/knowledge-base/api-documentation/external-knowledge-api-documentation.mdx b/en/guides/knowledge-base/api-documentation/external-knowledge-api-documentation.mdx

index 431b18ae..279b6a3e 100644

--- a/en/guides/knowledge-base/api-documentation/external-knowledge-api-documentation.mdx

+++ b/en/guides/knowledge-base/api-documentation/external-knowledge-api-documentation.mdx

@@ -164,12 +164,5 @@ HTTP Status Code: 500

You can learn how to develop external knowledge base plugins through the following video tutorial using LlamaCloud as an example:

-

For more information about how it works, please refer to the plugin's [GitHub repository](https://github.com/langgenius/dify-official-plugins/tree/main/extensions/llamacloud).

diff --git a/en/guides/knowledge-base/api-documentation/external-knowledge-api.mdx b/en/guides/knowledge-base/api-documentation/external-knowledge-api.mdx

index 11f4f944..279b6a3e 100644

--- a/en/guides/knowledge-base/api-documentation/external-knowledge-api.mdx

+++ b/en/guides/knowledge-base/api-documentation/external-knowledge-api.mdx

@@ -1,415 +1,168 @@

---

-title: Chatbot

-version: 'English'

+title: External Knowledge API

---

-## Overview

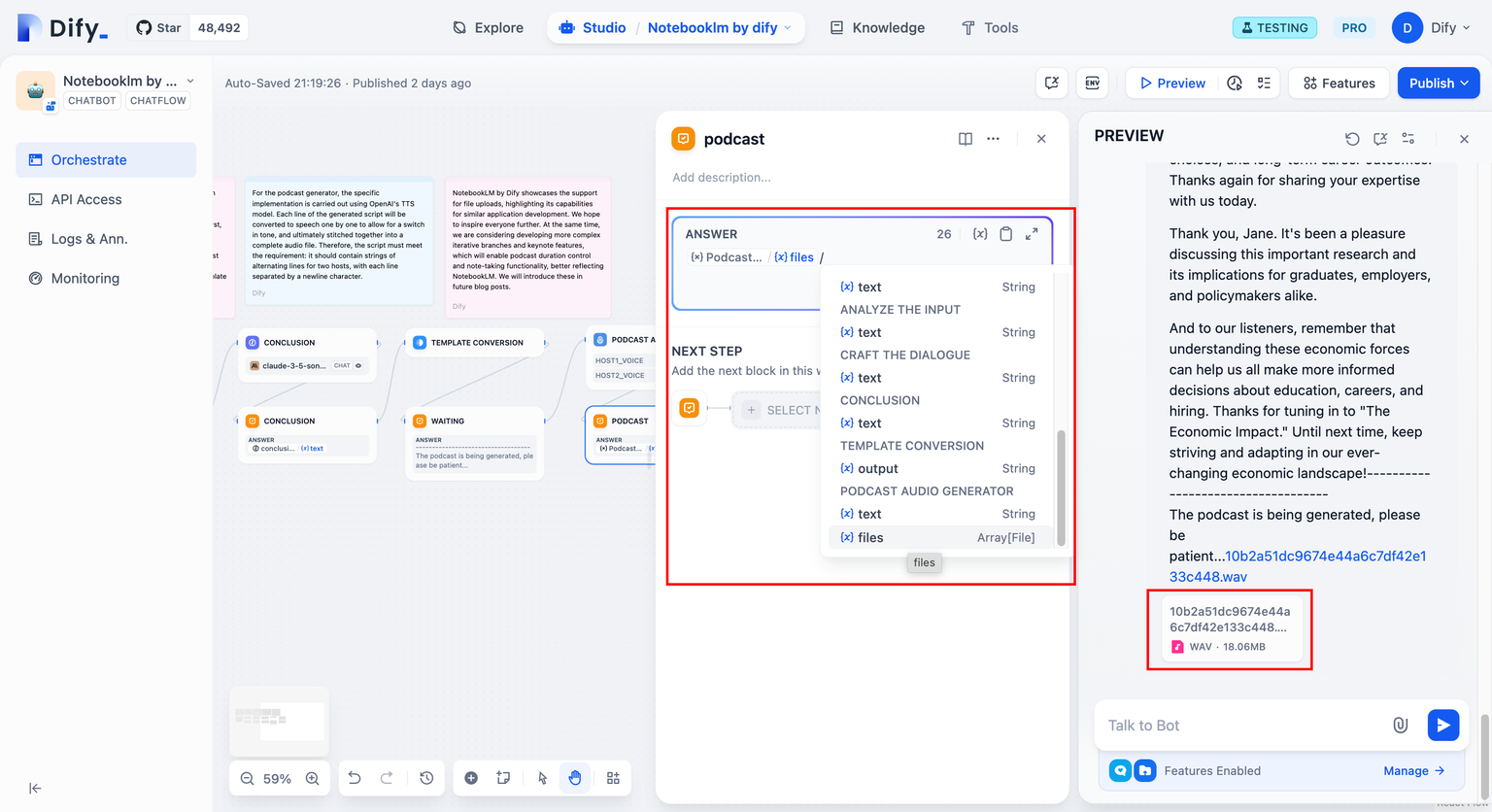

-Chat applications support session persistence, allowing previous chat history to be used as context for responses. This can be applicable for chatbots, customer service AI, etc.

+## Endpoint

-## Base URL

```

-https://api.dify.ai/v1

+POST /retrieval

```

-## Authentication

-The Service API uses API-Key authentication.

+## Header

-> **Important**: Strongly recommend storing your API Key on the server-side, not shared or stored on the client-side, to avoid possible API-Key leakage that can lead to serious consequences.

+This API is used to connect to a knowledge base that is independent of Dify and maintained by developers. For more details, please refer to [Connecting to an External Knowledge Base](https://docs.dify.ai/guides/knowledge-base/connect-external-knowledge-base). You can use `API-Key` in the `Authorization` HTTP Header to verify permissions. The authentication logic is defined by you in the retrieval API, as shown below:

-For all API requests, include your API Key in the Authorization HTTP Header:

```

Authorization: Bearer {API_KEY}

```

-## API Endpoints

+## Request Body Elements

-### Send Chat Message

-`POST /chat-messages`

+The request accepts the following data in JSON format.

-Send a request to the chat application.

+| Property | Required | Type | Description | Example value |

+|----------|----------|------|-------------|---------------|

+| knowledge_id | TRUE | string | Your knowledge's unique ID | AAA-BBB-CCC |

+| query | TRUE | string | User's query | What is Dify? |

+| retrieval_setting | TRUE | object | Knowledge's retrieval parameters | See below |

+| metadata_condition | FALSE | object | Metadata filtering conditions | See below |

-#### Request Body

+The `retrieval_setting` property is an object containing the following keys:

-- **query** (string) Required

- - User Input / Question Content

+| Property | Required | Type | Description | Example value |

+|----------|----------|------|-------------|---------------|

+| top_k | TRUE | int | Maximum number of retrieved results | 5 |

+| score_threshold | TRUE | float | The score limit of relevance of the result to the query, scope: 0~1 | 0.5 |

-- **inputs** (object) Required

- - Allows the entry of various variable values defined by the App

- - Contains multiple key/value pairs, each key corresponding to a specific variable

- - At least one key/value pair required

+The `metadata_condition` property is an object containing the following keys:

-- **response_mode** (string) Required

- - Modes supported:

- - `streaming`: Streaming mode (recommended), implements typewriter-like output through SSE

- - `blocking`: Blocking mode, returns result after execution completes

- - Note: Due to Cloudflare restrictions, requests will timeout after 100 seconds

- - Note: blocking mode is not supported in Agent Assistant mode

+| Attribute | Required | Type | Description | Example Value |

+|-----------|----------|------|-------------|--------------|

+| logical_operator | No | String | Logical operator, values can be `and` or `or`, default is `and` | and |

+| conditions | Yes | Array (Object) | List of conditions | See below |

-- **user** (string) Required

- - User identifier for retrieval and statistics

- - Should be uniquely defined within the application

+Each object in the `conditions` array contains the following keys:

-- **conversation_id** (string) Optional

- - Conversation ID to continue based on previous chat records

+| Attribute | Required | Type | Description | Example Value |

+|-----------|----------|------|-------------|--------------|

+| name | Yes | Array (String) | Names of the metadata to filter | `["category", "tag"]` |

+| comparison_operator | Yes | String | Comparison operator | `contains` |

+| value | No | String | Comparison value, can be omitted when the operator is `empty`, `not empty`, `null`, or `not null` | `"AI"` |

-- **files** (array[object]) Optional

- - File list for image input with text understanding

- - Available only when model supports Vision capability

- - Properties:

- - `type` (string): Supported type: image

- - `transfer_method` (string): 'remote_url' or 'local_file'

- - `url` (string): Image URL (for remote_url)

- - `upload_file_id` (string): Uploaded file ID (for local_file)

+Supported `comparison_operator` operators:

-- **auto_generate_name** (bool) Optional

- - Auto-generate title, default is false

- - Can achieve async title generation via conversation rename API

+- `contains`: Contains a certain value

+- `not contains`: Does not contain a certain value

+- `start with`: Starts with a certain value

+- `end with`: Ends with a certain value

+- `is`: Equals a certain value

+- `is not`: Does not equal a certain value

+- `empty`: Is empty

+- `not empty`: Is not empty

+- `=`: Equals

+- `≠`: Not equal

+- `>`: Greater than

+- `<`: Less than

+- `≥`: Greater than or equal to

+- `≤`: Less than or equal to

+- `before`: Before a certain date

+- `after`: After a certain date

-#### Response Types

+## Request Syntax

-**Blocking Mode Response (ChatCompletionResponse)**

-Returns complete App result with Content-Type: application/json

-

-```typescript

-interface ChatCompletionResponse {

- message_id: string;

- conversation_id: string;

- mode: string;

- answer: string;

- metadata: {

- usage: Usage;

- retriever_resources: RetrieverResource[];

- };

- created_at: number;

-}

-```

-

-**Streaming Mode Response (ChunkChatCompletionResponse)**

-Returns stream chunks with Content-Type: text/event-stream

-

-Events:

-- `message`: LLM text chunk event

-- `agent_message`: LLM text chunk event (Agent Assistant mode)

-- `agent_thought`: Agent reasoning process

-- `message_file`: New file created by tool

-- `message_end`: Stream end event

-- `message_replace`: Content replacement event

-- `workflow_started`: Workflow execution start

-- `node_started`: Node execution start

-- `node_finished`: Node execution completion

-- `workflow_finished`: Workflow execution completion

-- `parallel_branch_started`: Parallel branch start

-- `parallel_branch_finished`: Parallel branch completion

-- `iteration_started`: Iteration start

-- `iteration_next`: Next iteration

-- `iteration_completed`: Iteration completion

-- `error`: Exception event

-- `ping`: Keep-alive event (every 10s)

-

-### File Upload

-`POST /files/upload`

-

-Upload files (currently only images) for multimodal understanding.

-

-#### Supported Formats

-- png

-- jpg

-- jpeg

-- webp

-- gif

-

-#### Request Body

-Requires multipart/form-data:

-- `file` (File) Required

-- `user` (string) Required

-

-#### Response

-```typescript

-interface FileUploadResponse {

- id: string;

- name: string;

- size: number;

- extension: string;

- mime_type: string;

- created_by: string;

- created_at: number;

-}

-```

-

-### Stop Generate

-`POST /chat-messages/:task_id/stop`

-

-Stop ongoing generation (streaming mode only).

-

-#### Request Body

-- `user` (string) Required

-

-#### Response

```json

+POST /retrieval HTTP/1.1

+-- header

+Content-Type: application/json

+Authorization: Bearer your-api-key

+-- data

{

- "result": "success"

+ "knowledge_id": "your-knowledge-id",

+ "query": "your question",

+ "retrieval_setting":{

+ "top_k": 2,

+ "score_threshold": 0.5

+ }

}

```

-### Message Feedback

-`POST /messages/:message_id/feedbacks`

+## Response Elements

-Submit user feedback on messages.

+If the action is successful, the service sends back an HTTP 200 response.

-#### Request Body

-- `rating` (string) Required: "like" | "dislike" | null

-- `user` (string) Required

+The following data is returned in JSON format by the service.

+

+| Property | Required | Type | Description | Example value |

+|----------|----------|------|-------------|---------------|

+| records | TRUE | List[Object] | A list of records from querying the knowledge base. | See below |

+

+Each object in the `records` list contains the following keys:

+

+| Property | Required | Type | Description | Example value |

+|----------|----------|------|-------------|---------------|

+| content | TRUE | string | Contains a chunk of text from a data source in the knowledge base. | Dify:The Innovation Engine for GenAI Applications |

+| score | TRUE | float | The score of relevance of the result to the query, scope: 0~1 | 0.5 |

+| title | TRUE | string | Document title | Dify Introduction |

+| metadata | FALSE | json | Contains metadata attributes and their values for the document in the data source. | See example |

+

+## Response Syntax

-#### Response

```json

+HTTP/1.1 200

+Content-type: application/json

{

- "result": "success"

+ "records": [{

+ "metadata": {

+ "path": "s3://dify/knowledge.txt",

+ "description": "dify knowledge document"

+ },

+ "score": 0.98,

+ "title": "knowledge.txt",

+ "content": "This is the document for external knowledge."

+ },

+ {

+ "metadata": {

+ "path": "s3://dify/introduce.txt",

+ "description": "dify introduce"

+ },

+ "score": 0.66,

+ "title": "introduce.txt",

+ "content": "The Innovation Engine for GenAI Applications"

+ }

+ ]

}

```

-### Get Conversation History

-`GET /messages`

+## Errors

-Returns historical chat records in reverse chronological order.

+If the action fails, the service sends back the following error information in JSON format:

-#### Query Parameters

-- `conversation_id` (string) Required

-- `user` (string) Required

-- `first_id` (string) Optional: First chat record ID

-- `limit` (int) Optional: Default 20

+| Property | Required | Type | Description | Example value |

+|----------|----------|------|-------------|---------------|

+| error_code | TRUE | int | Error code | 1001 |

+| error_msg | TRUE | string | The description of API exception | Invalid Authorization header format. Expected 'Bearer ' format. |

-#### Response

-```typescript

-interface ConversationHistory {

- data: Array<{

- id: string;

- conversation_id: string;

- inputs: Record;

- query: string;

- message_files: Array<{

- id: string;

- type: string;

- url: string;

- belongs_to: 'user' | 'assistant';

- }>;

- agent_thoughts: Array<{

- id: string;

- message_id: string;

- position: number;

- thought: string;

- observation: string;

- tool: string;

- tool_input: string;

- created_at: number;

- message_files: string[];

- }>;

- answer: string;

- created_at: number;

- feedback?: {

- rating: 'like' | 'dislike';

- };

- retriever_resources: RetrieverResource[];

- }>;

- has_more: boolean;

- limit: number;

-}

-```

+The `error_code` property has the following types:

-### Get Conversations

-`GET /conversations`

+| Code | Description |

+|------|-------------|

+| 1001 | Invalid Authorization header format. |

+| 1002 | Authorization failed |

+| 2001 | The knowledge does not exist |

-Retrieve conversation list for current user.

+### HTTP Status Codes

-#### Query Parameters

-- `user` (string) Required

-- `last_id` (string) Optional

-- `limit` (int) Optional: Default 20

-- `pinned` (boolean) Optional

+**AccessDeniedException**

+The request is denied because of missing access permissions. Check your permissions and retry your request.

+HTTP Status Code: 403

-#### Response

-```typescript

-interface ConversationList {

- data: Array<{

- id: string;

- name: string;

- inputs: Record;

- introduction: string;

- created_at: number;

- }>;

- has_more: boolean;

- limit: number;

-}

-```

+**InternalServerException**

+An internal server error occurred. Retry your request.

+HTTP Status Code: 500

-### Delete Conversation

-`DELETE /conversations/:conversation_id`

+## Development Example

-Delete a conversation.

+You can learn how to develop external knowledge base plugins through the following video tutorial using LlamaCloud as an example:

-#### Request Body

-- `user` (string) Required

-#### Response

-```json

-{

- "result": "success"

-}

-```

-

-### Rename Conversation

-`POST /conversations/{conversation_id}/name`

-

-#### Request Body

-- `name` (string) Optional

-- `auto_generate` (boolean) Optional: Default false

-- `user` (string) Required

-

-#### Response

-```typescript

-interface RenamedConversation {

- id: string;

- name: string;

- inputs: Record;

- introduction: string;

- created_at: number;

-}

-```

-

-### Speech to Text

-`POST /audio-to-text`

-

-Convert audio to text.

-

-#### Request Body (multipart/form-data)

-- `file` (File) Required

- - Supported formats: mp3, mp4, mpeg, mpga, m4a, wav, webm

- - Size limit: 15MB

-- `user` (string) Required

-

-#### Response

-```typescript

-interface AudioToTextResponse {

- text: string;

-}

-```

-

-### Get Application Parameters

-`GET /parameters`

-

-Retrieve application configuration and settings.

-

-#### Query Parameters

-- `user` (string) Required

-

-#### Response

-```typescript

-interface ApplicationParameters {

- opening_statement: string;

- suggested_questions_after_answer: {

- enabled: boolean;

- };

- speech_to_text: {

- enabled: boolean;

- };

- retriever_resource: {

- enabled: boolean;

- };

- annotation_reply: {

- enabled: boolean;

- };

- user_input_form: Array<{

- 'text-input' | 'paragraph' | 'select': {

- label: string;

- variable: string;

- required: boolean;

- default: string;

- options?: string[];

- };

- }>;

- file_upload: {

- image: {

- enabled: boolean;

- number_limits: number;

- transfer_methods: string[];

- };

- };

- system_parameters: {

- image_file_size_limit: string;

- };

-}

-```

-

-### Get Application Meta Information

-`GET /meta`

-

-Retrieve tool icons and metadata.

-

-#### Query Parameters

-- `user` (string) Required

-

-#### Response

-```typescript

-interface ApplicationMeta {

- tool_icons: Record;

-}

-```

-

-## Type Definitions

-

-### Usage

-```typescript

-interface Usage {

- prompt_tokens: number;

- prompt_unit_price: string;

- prompt_price_unit: string;

- prompt_price: string;

- completion_tokens: number;

- completion_unit_price: string;

- completion_price_unit: string;

- completion_price: string;

- total_tokens: number;

- total_price: string;

- currency: string;

- latency: number;

-}

-```

-

-### RetrieverResource

-```typescript

-interface RetrieverResource {

- position: number;

- content: string;

- score: string;

- dataset_id: string;

- dataset_name: string;

- document_id: string;

- document_name: string;

- segment_id: string;

-}

-```

-

-## Error Codes

-

-Common error codes you may encounter:

-- 404: Conversation does not exist

-- 400: invalid_param - Abnormal parameter input

-- 400: app_unavailable - App configuration unavailable

-- 400: provider_not_initialize - No available model credential configuration

-- 400: provider_quota_exceeded - Model invocation quota insufficient

-- 400: model_currently_not_support - Current model unavailable

-- 400: completion_request_error - Text generation failed

-- 500: Internal server error

-

-For file uploads:

-- 400: no_file_uploaded - File must be provided

-- 400: too_many_files - Only one file accepted

-- 400: unsupported_preview - File does not support preview

-- 400: unsupported_estimate - File does not support estimation

-- 413: file_too_large - File is too large

-- 415: unsupported_file_type - Unsupported extension

-- 503: s3_connection_failed - Unable to connect to S3

-- 503: s3_permission_denied - No permission for S3

-- 503: s3_file_too_large - Exceeds S3 size limit

\ No newline at end of file

+For more information about how it works, please refer to the plugin's [GitHub repository](https://github.com/langgenius/dify-official-plugins/tree/main/extensions/llamacloud).
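
Reviewer sketch for the `metadata_condition` object documented in this file. It shows one way a retrieval service might evaluate the filter against a record's metadata. Two assumptions are made that the spec does not state: a condition holds if any field listed in `name` satisfies the operator, and only the text operators are covered (the numeric and date operators are omitted). The function names are hypothetical.

```python
from typing import Any, Callable, Dict

# Text operators from the `comparison_operator` list. Each takes the
# metadata field value and the comparison value from the condition.
OPERATORS: Dict[str, Callable[[Any, Any], bool]] = {
    "contains": lambda field, value: field is not None and str(value) in str(field),
    "not contains": lambda field, value: field is None or str(value) not in str(field),
    "start with": lambda field, value: field is not None and str(field).startswith(str(value)),
    "end with": lambda field, value: field is not None and str(field).endswith(str(value)),
    "is": lambda field, value: field == value,
    "is not": lambda field, value: field != value,
    "empty": lambda field, value: field in (None, ""),
    "not empty": lambda field, value: field not in (None, ""),
}


def condition_holds(metadata: Dict[str, Any], condition: Dict[str, Any]) -> bool:
    """Assumption: the condition holds if any named field satisfies the operator."""
    operator = OPERATORS[condition["comparison_operator"]]
    value = condition.get("value")  # may be omitted for `empty` / `not empty`
    return any(operator(metadata.get(name), value) for name in condition["name"])


def matches(metadata: Dict[str, Any], metadata_condition: Dict[str, Any]) -> bool:
    """Combine all conditions with `logical_operator`, which defaults to `and`."""
    results = [condition_holds(metadata, c) for c in metadata_condition["conditions"]]
    if metadata_condition.get("logical_operator", "and") == "or":
        return any(results)
    return all(results)
```

A service would typically apply `matches` to each candidate record before ranking by score and truncating to `top_k`.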

diff --git a/en/guides/knowledge-base/connect-external-knowledge-base.mdx b/en/guides/knowledge-base/connect-external-knowledge-base.mdx

index 1cedfee0..3f2c83fc 100644

--- a/en/guides/knowledge-base/connect-external-knowledge-base.mdx

+++ b/en/guides/knowledge-base/connect-external-knowledge-base.mdx

@@ -43,7 +43,7 @@ With the LlamaCloud plugin, you can directly use LlamaCloud's powerful retrieval

For more information about how it works, please refer to the plugin's [GitHub repository](https://github.com/langgenius/dify-official-plugins/tree/main/extensions/llamacloud).

-##### Video Tutorial

+#### Video Tutorial

The following video demonstrates in detail how to use the LlamaCloud plugin to connect to external knowledge bases:

diff --git a/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/readme.mdx b/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/readme.mdx

index ed4dda72..f0b3ef93 100644

--- a/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/readme.mdx

+++ b/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/readme.mdx

@@ -21,11 +21,11 @@ Drag and drop or select files to upload. The number of files allowed for **batch

When creating a **Knowledge**, you can import data from online sources. The knowledge supports the following two types of online data:

-

+

Learn how to import data from Notion

-

+

Learn how to sync data from websites

diff --git a/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/sync-from-notion.mdx b/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/sync-from-notion.mdx

index 0ad9c5d5..82f9f042 100644

--- a/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/sync-from-notion.mdx

+++ b/en/guides/knowledge-base/create-knowledge-and-upload-documents/import-content-data/sync-from-notion.mdx

@@ -15,25 +15,27 @@ Dify datasets support importing from Notion and setting up **synchronization** s

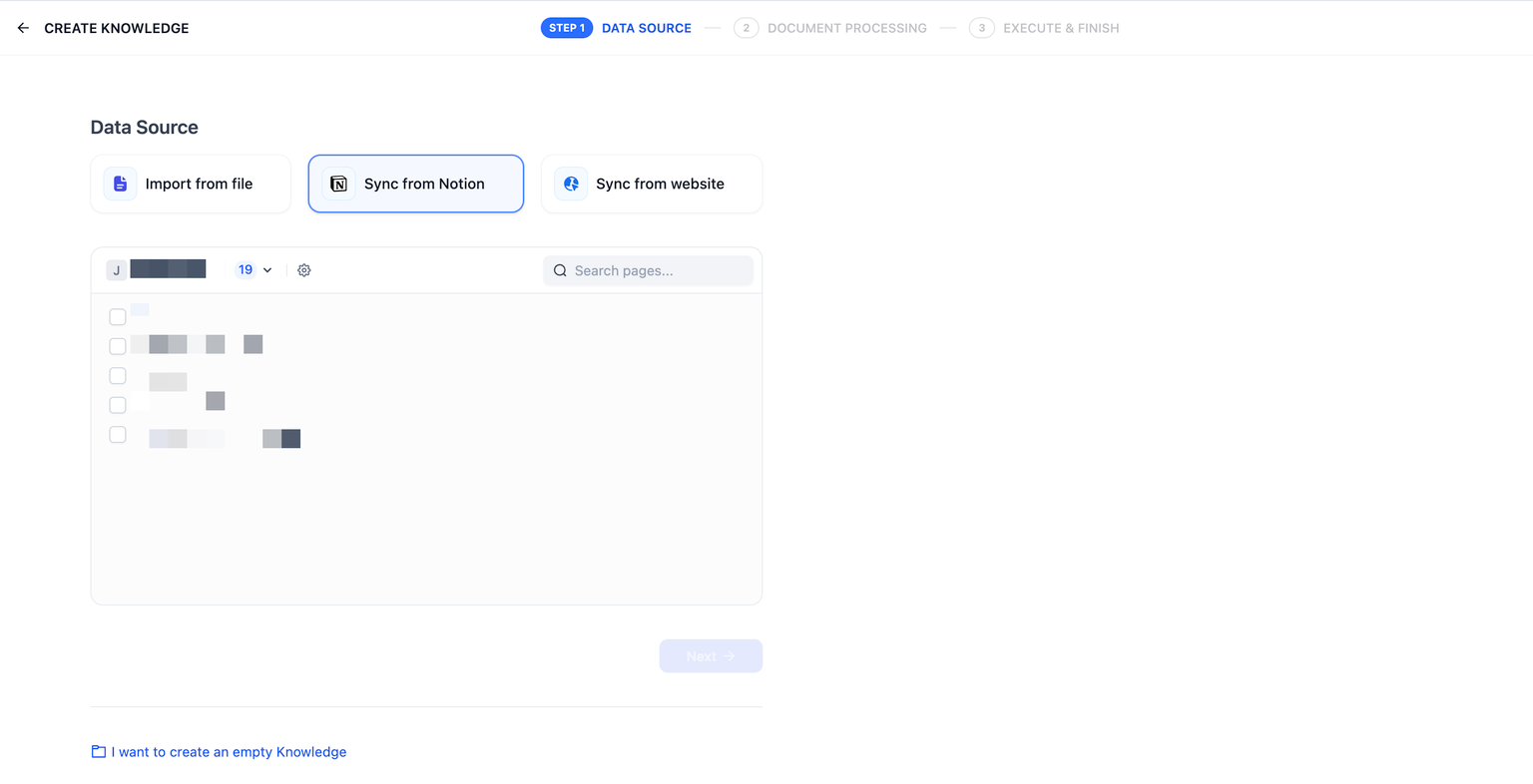

After completing the authorization verification, go to the create dataset page, click **Sync from Notion Content**, and select the authorized pages you need to import.

-### Segmentation and Cleaning

+

-Next, choose your **segmentation settings** and **indexing method**, then **Save and Process**. Wait for Dify to process this data for you, which typically requires token consumption in the LLM provider. Dify supports importing not only standard page types but also aggregates and saves page properties under the database type.

+### Chunking and Cleaning

-_**Please note: Images and files are not currently supported for import, and tabular data will be converted to text display.**_

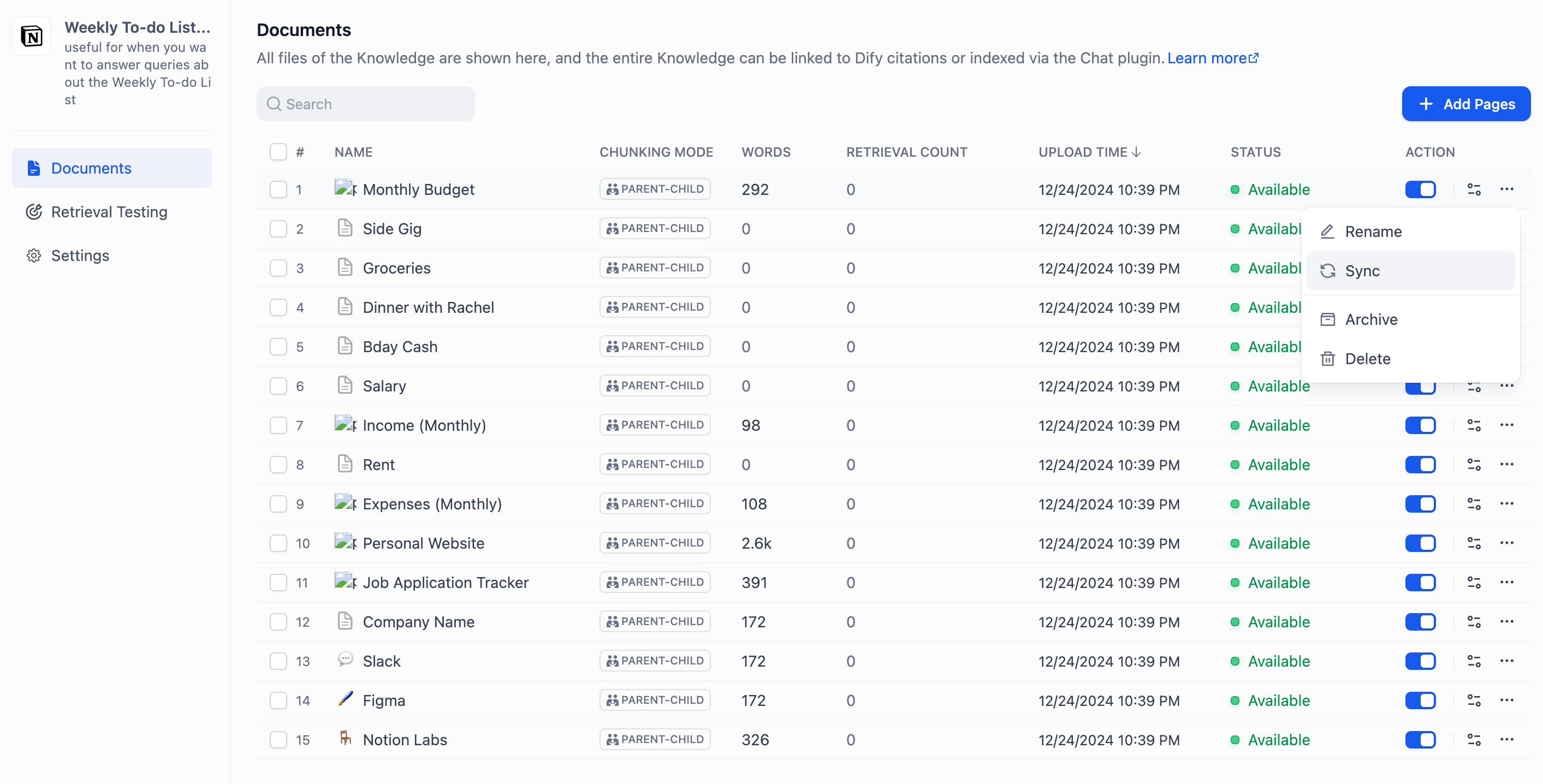

+Next, choose a [chunking mode](sync-from-notion.md#chunking-and-cleaning) and [indexing method](../setting-indexing-methods.md) for your knowledge base, then save it and wait for automatic processing to finish. Dify not only supports importing standard Notion pages but can also consolidate and save page attributes from database-type pages.

-

+_**Note: images and files cannot be imported, and data from tables will be converted to text.**_

+

+

### Synchronizing Notion Data

-If your Notion content is modified, you can directly click **Sync** in the Dify dataset **Document List Page** to perform a one-click data synchronization. This step requires token consumption.

+If your Notion content has been updated, you can sync the changes by clicking the **Sync** button for the corresponding page in the document list of your knowledge base. Syncing involves an embedding process, which will consume tokens from your embedding model.

### Integration Configuration Method for Community Edition Notion

-Notion integration can be done in two ways: **internal integration** and **public integration**. You can configure them as needed in Dify. For specific differences between the two integration methods, please refer to [Notion Official Documentation](https://developers.notion.com/docs/authorization).

+Notion offers two integration options: **internal integration** and **public integration**. For more details on the differences between these two methods, please refer to the [official Notion documentation](https://developers.notion.com/docs/authorization).

-### 1. **Using Internal Integration**

+#### 1. Using Internal Integration

First, create an integration in the integration settings page [Create Integration](https://www.notion.so/my-integrations). By default, all integrations start as internal integrations; internal integrations will be associated with the workspace you choose, so you need to be the workspace owner to create an integration.

@@ -45,13 +47,16 @@ Click the **New integration** button. The type is **Internal** by default (canno

After creating the integration, you can update its settings as needed under the Capabilities tab and click the **Show** button under Secrets to copy the secrets.

+

+

After copying, go back to the Dify source code, and configure the relevant environment variables in the **.env** file. The environment variables are as follows:

-**NOTION_INTEGRATION_TYPE**=internal or **NOTION_INTEGRATION_TYPE**=public

+```

+NOTION_INTEGRATION_TYPE=internal or NOTION_INTEGRATION_TYPE=public

+NOTION_INTERNAL_SECRET=your-internal-secret

+```

-**NOTION_INTERNAL_SECRET**=your-internal-secret

-

-### 2. **Using Public Integration**

+#### 2. Using Public Integration

**You need to upgrade the internal integration to a public integration.** Navigate to the Distribution page of the integration, and toggle the switch to make the integration public. When switching to the public setting, you need to fill in additional information in the Organization Information form below, including your company name, website, and redirect URL, then click the **Submit** button.

@@ -63,8 +68,10 @@ After successfully making the integration public on the integration settings pag

Go back to the Dify source code, and configure the relevant environment variables in the **.env** file. The environment variables are as follows:

-* **NOTION_INTEGRATION_TYPE**=public

-* **NOTION_CLIENT_SECRET**=your-client-secret

-* **NOTION_CLIENT_ID**=your-client-id

+```

+NOTION_INTEGRATION_TYPE=public

+NOTION_CLIENT_SECRET=your-client-secret

+NOTION_CLIENT_ID=your-client-id

+```

After configuration, you can operate the Notion data import and synchronization functions in the dataset.
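
Reviewer sketch for the environment variables listed in this file. It checks that the variables required by each integration type are present before starting Dify. The helper name `missing_notion_settings` is hypothetical and is not part of the Dify codebase; the variable names and their grouping come from the two `.env` blocks above.

```python
from typing import Dict, List, Mapping

# Variables each integration type needs, as listed in the two .env blocks.
REQUIRED_BY_TYPE: Dict[str, List[str]] = {
    "internal": ["NOTION_INTERNAL_SECRET"],
    "public": ["NOTION_CLIENT_SECRET", "NOTION_CLIENT_ID"],
}


def missing_notion_settings(env: Mapping[str, str]) -> List[str]:
    """Return the names of required Notion variables that are unset or empty."""
    integration_type = env.get("NOTION_INTEGRATION_TYPE", "")
    if integration_type not in REQUIRED_BY_TYPE:
        # The type itself is missing or is not `internal` / `public`.
        return ["NOTION_INTEGRATION_TYPE"]
    return [name for name in REQUIRED_BY_TYPE[integration_type] if not env.get(name)]
```

Passing `os.environ` (after loading the `.env` file) gives a quick pre-flight check; an empty list means the configuration is complete for the chosen type.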

diff --git a/en/guides/knowledge-base/create-knowledge-and-upload-documents/setting-indexing-methods.mdx b/en/guides/knowledge-base/create-knowledge-and-upload-documents/setting-indexing-methods.mdx

index 82cd146a..cc4c5e4a 100644

--- a/en/guides/knowledge-base/create-knowledge-and-upload-documents/setting-indexing-methods.mdx

+++ b/en/guides/knowledge-base/create-knowledge-and-upload-documents/setting-indexing-methods.mdx

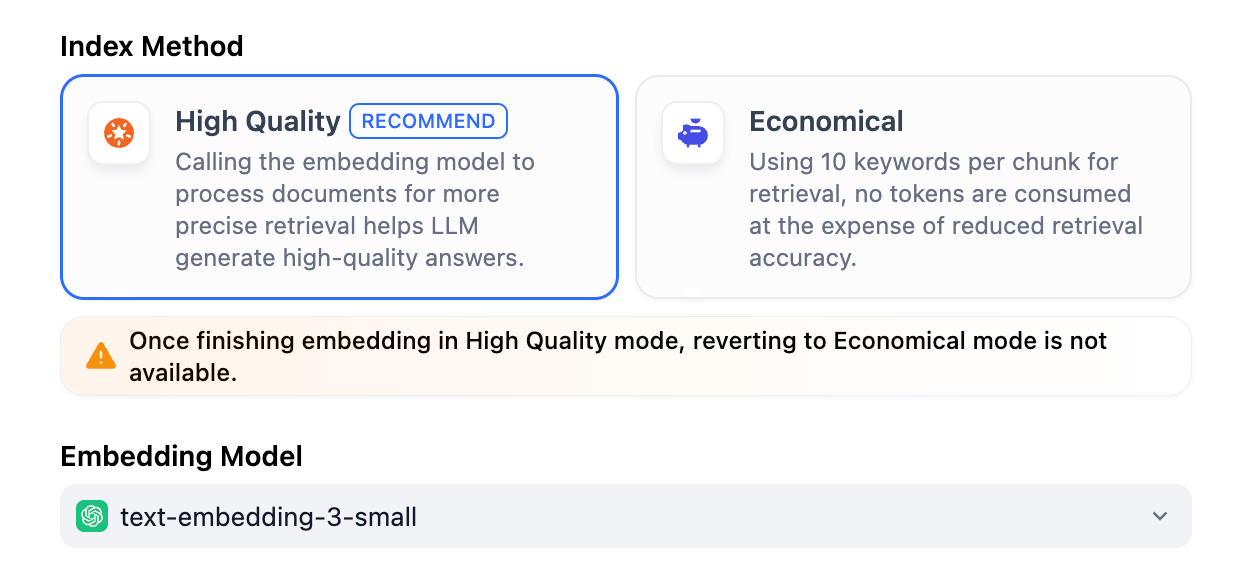

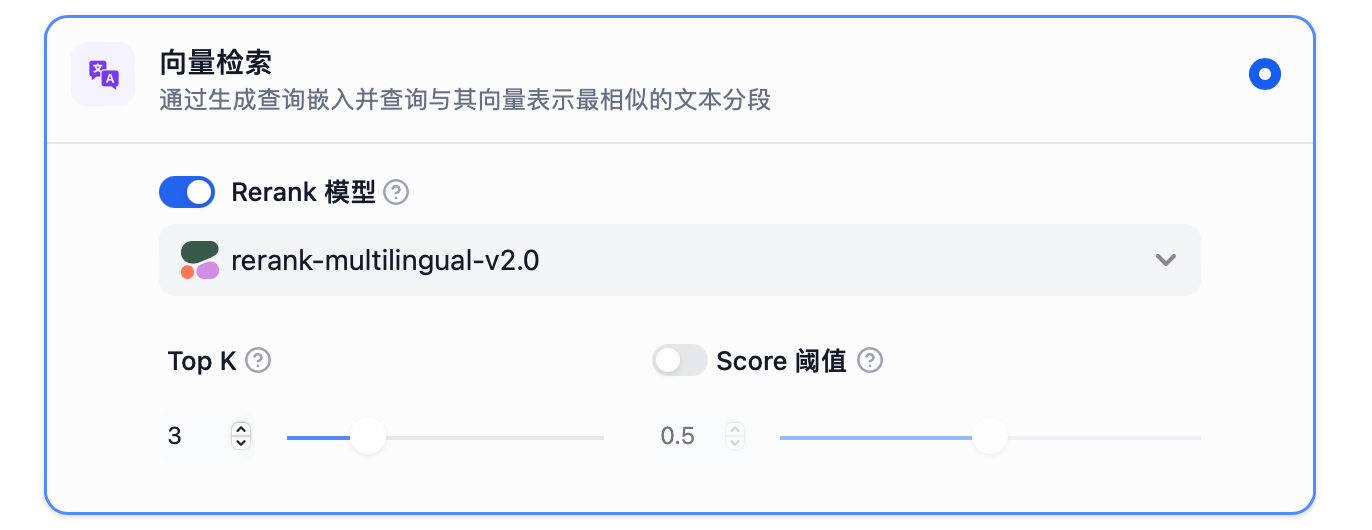

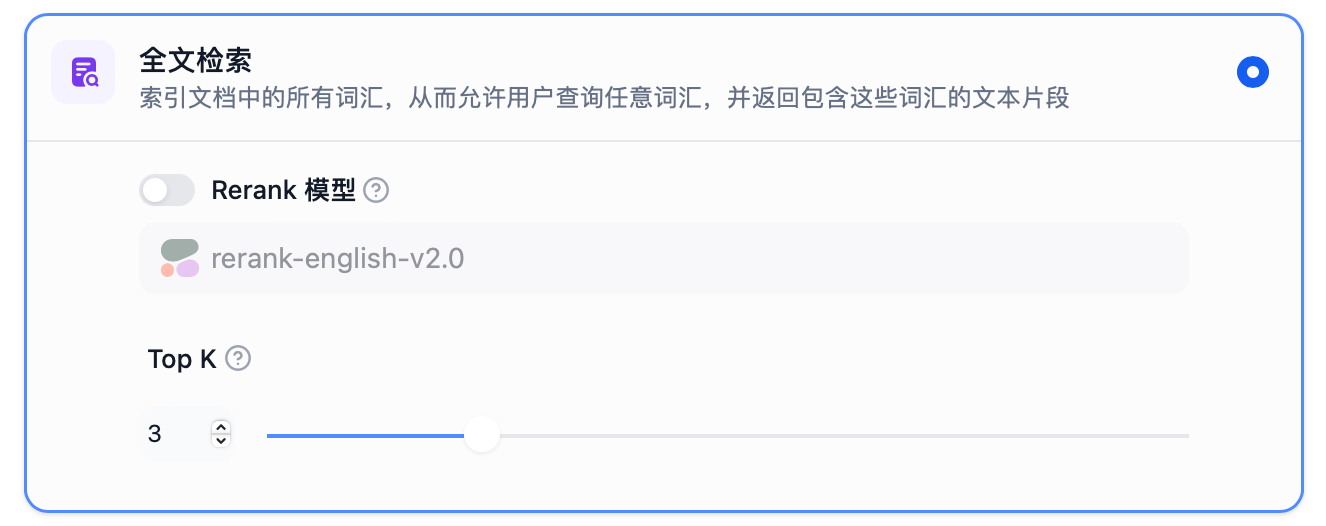

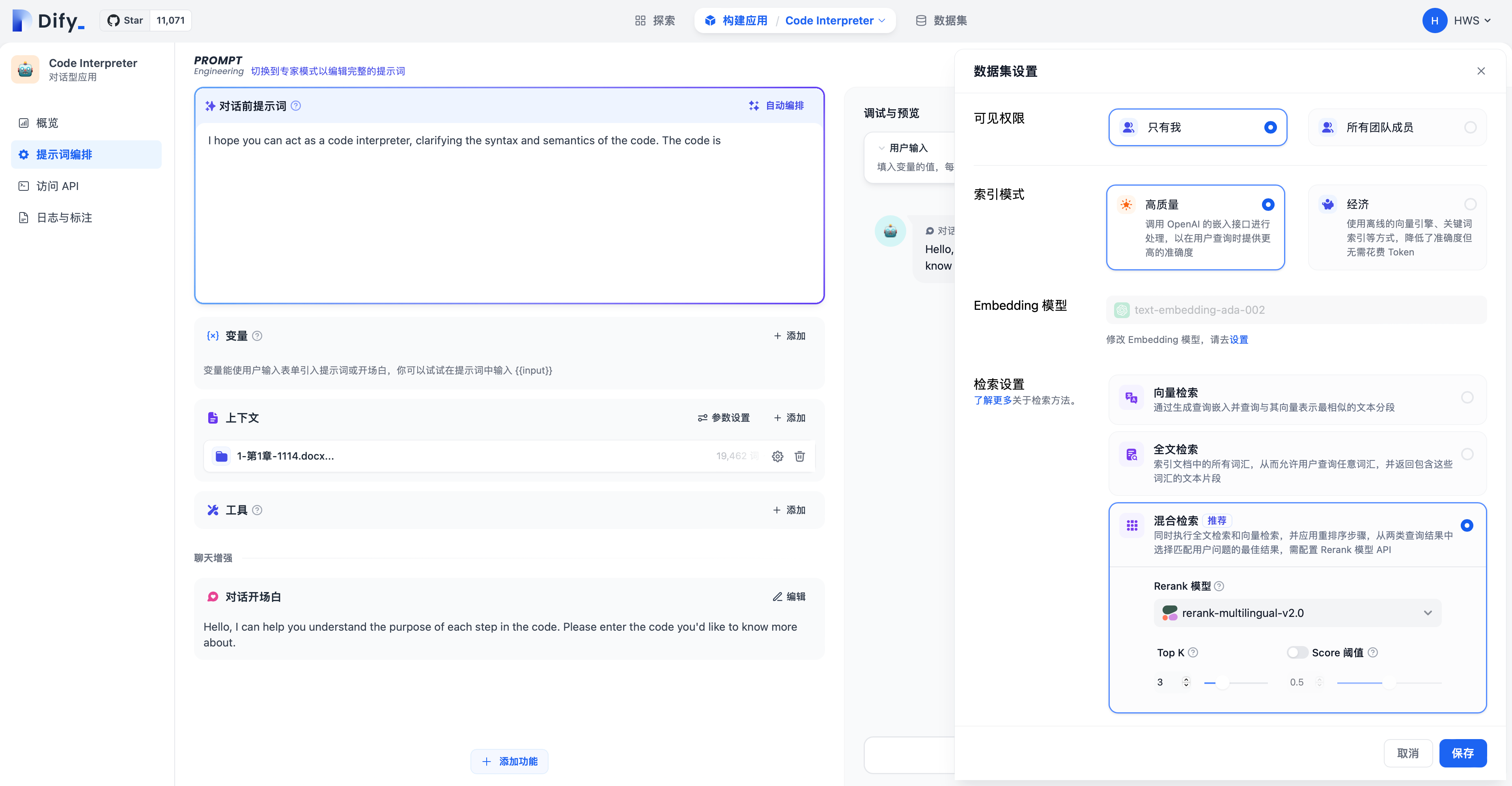

@@ -25,8 +25,8 @@ After selecting **High Quality** mode, the indexing method for the knowledge bas

-

-

+### Enable Q&A Mode (Optional, Community Edition Only)

+

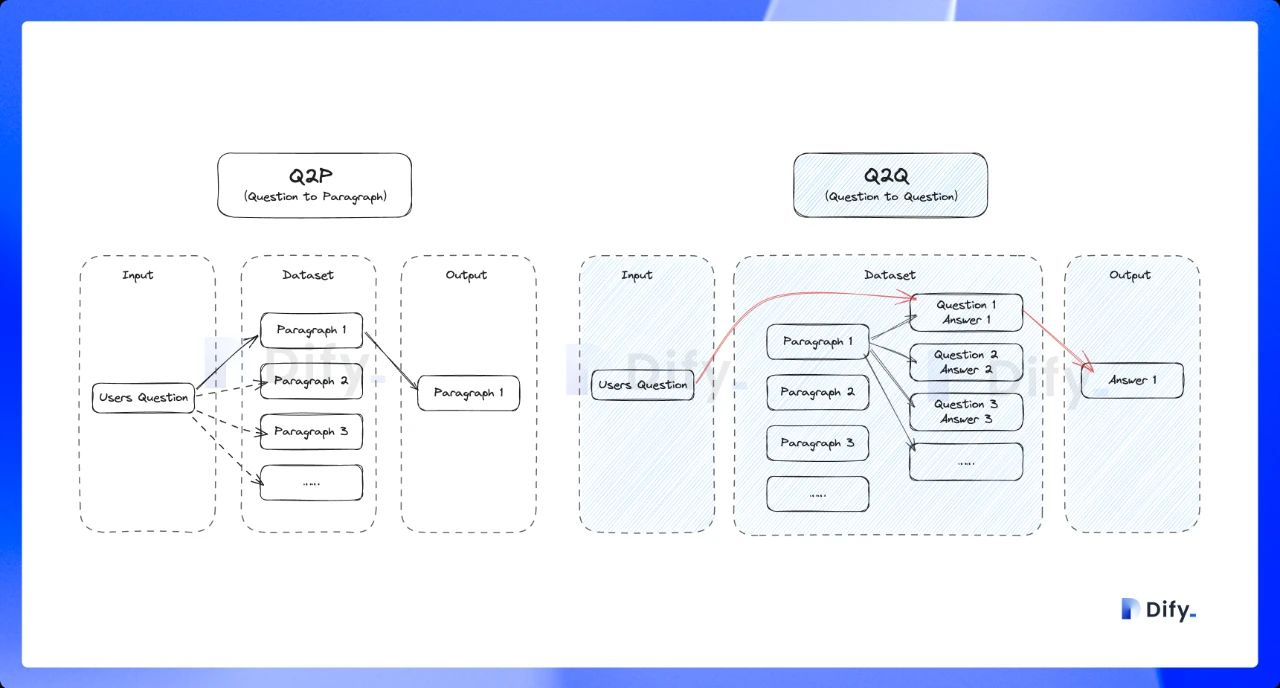

When this mode is enabled, the system segments the uploaded text and automatically generates Q\&A pairs for each segment after summarizing its content.

Compared with the common **Q to P** strategy (user questions matched with text paragraphs), the Q\&A mode uses a **Q to Q** strategy (questions matched with questions).

@@ -41,7 +41,7 @@ When a user asks a question, the system identifies the most similar question and

-

+

@@ -52,6 +52,7 @@ If the performance of the economical indexing method does not meet your expectat

+

## Setting the Retrieval Setting

@@ -152,6 +153,11 @@ The **"Weight Settings"** and **"Rerank Model"** settings support the following

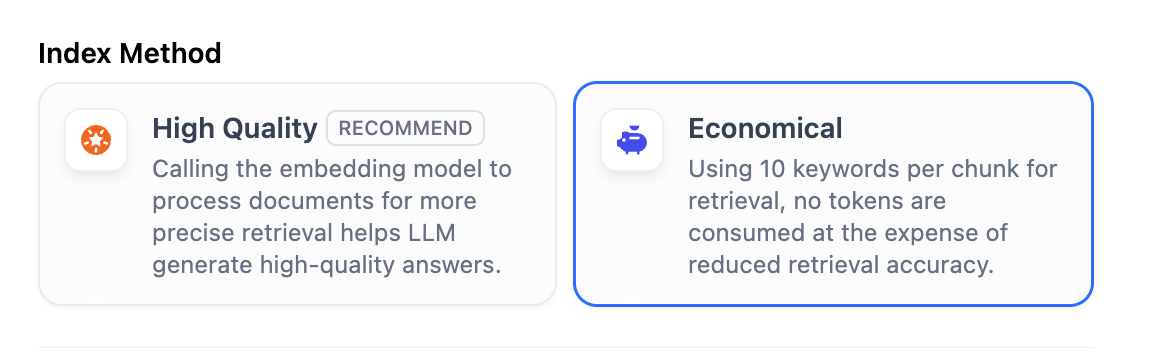

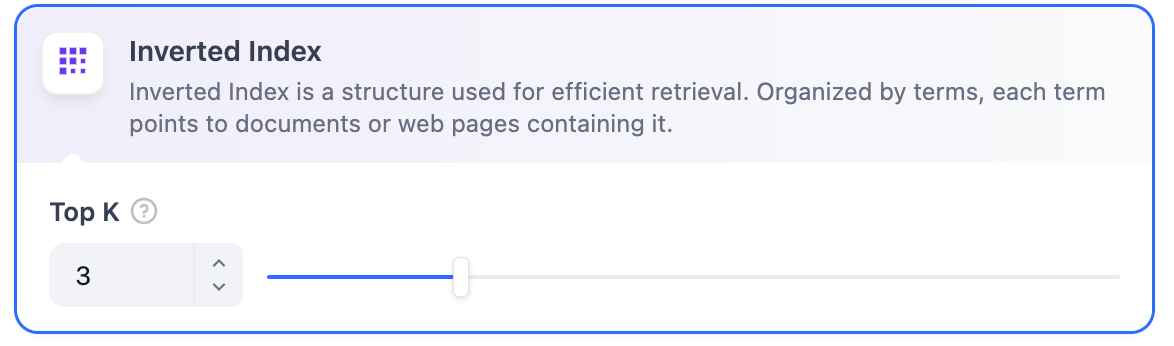

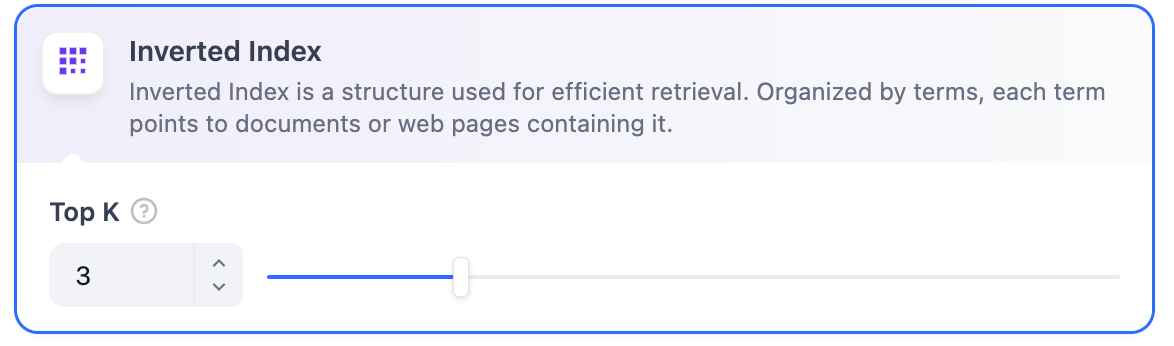

In **Economical Indexing** mode, only the inverted index approach is available. An inverted index is a data structure designed for fast keyword retrieval within documents, commonly used in online search engines. Inverted indexing supports only the **TopK** setting.

**TopK:** Determines how many text chunks, deemed most similar to the user’s query, are retrieved. It also automatically adjusts the number of chunks based on the chosen model’s context window. The default value is **3**, and higher numbers will recall more text chunks.

+

+

+

+

+
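
The inverted index and **TopK** behavior described above can be sketched in a few lines. This is an illustrative toy under stated assumptions, not Dify's implementation: tokens are split on whitespace, chunks are scored by the number of matching query keywords, and all function names are hypothetical.

```python
from collections import Counter, defaultdict


def build_inverted_index(chunks):
    """Map each lowercase token to the set of chunk ids that contain it."""
    index = defaultdict(set)
    for chunk_id, text in enumerate(chunks):
        for token in text.lower().split():
            index[token].add(chunk_id)
    return index


def retrieve_top_k(index, query, top_k=3):
    """Return up to top_k chunk ids, ranked by how many query tokens they contain."""
    scores = Counter()
    for token in set(query.lower().split()):
        for chunk_id in index.get(token, ()):
            scores[chunk_id] += 1
    # Highest score first; ties are broken by chunk id so the order is deterministic.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [chunk_id for chunk_id, _ in ranked[:top_k]]


chunks = [
    "dify supports notion import",
    "keyword retrieval uses an inverted index",
    "an inverted index maps keyword to documents",
    "rerank models reorder retrieved chunks",
]
index = build_inverted_index(chunks)
print(retrieve_top_k(index, "inverted index keyword", top_k=3))
```

Raising `top_k` can only return more chunks when more chunks match at least one keyword; chunks with no keyword in common with the query are never returned.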

diff --git a/en/guides/knowledge-base/external-knowledge-api.mdx b/en/guides/knowledge-base/external-knowledge-api.mdx

index 8c822144..2a682aa6 100644

--- a/en/guides/knowledge-base/external-knowledge-api.mdx

+++ b/en/guides/knowledge-base/external-knowledge-api.mdx

@@ -1,5 +1,5 @@

---

-title: 'External Knowledge API'

+title: External Knowledge API

---

## Endpoint

@@ -140,7 +140,7 @@ If the action fails, the service sends back the following error information in J

| Property | Required | Type | Description | Example value |

|----------|----------|------|-------------|---------------|

| error_code | TRUE | int | Error code | 1001 |

-| error_msg | TRUE | string | The description of API exception | Invalid Authorization header format. Expected 'Bearer ' format. |

+| error_msg | TRUE | string | The description of API exception | Invalid Authorization header format. Expected 'Bearer ``' format. |

The `error_code` property has the following types:

@@ -164,6 +164,5 @@ HTTP Status Code: 500

You can learn how to develop external knowledge base plugins through the following video tutorial using LlamaCloud as an example:

-{% embed url="https://www.youtube.com/embed/FaOzKZRS-2E" %}

For more information about how it works, please refer to the plugin's [GitHub repository](https://github.com/langgenius/dify-official-plugins/tree/main/extensions/llamacloud).

diff --git a/en/guides/knowledge-base/knowledge-and-documents-maintenance/introduction.mdx b/en/guides/knowledge-base/knowledge-and-documents-maintenance/introduction.mdx

index aa5c99dd..e36ae0ea 100644

--- a/en/guides/knowledge-base/knowledge-and-documents-maintenance/introduction.mdx

+++ b/en/guides/knowledge-base/knowledge-and-documents-maintenance/introduction.mdx

@@ -18,8 +18,9 @@ Here, you can modify the knowledge base's name, description, permissions, indexi

* **"Only Me"**: Restricts access to the knowledge base owner.

* **"All team members"**: Grants access to every member of the team.

* **"Partial team members"**: Allows selective access to specific team members.

+

+ Users without appropriate permissions cannot access the knowledge base. When granting access to team members (Options 2 or 3), authorized users are granted full permissions, including the ability to view, edit, and delete knowledge base content.

- Users without appropriate permissions cannot access the knowledge base. When granting access to team members (Options 2 or 3), authorized users are granted full permissions, including the ability to view, edit, and delete knowledge base content.

* **Indexing Mode**: For detailed explanations, please refer to the [documentation](/en/guides/knowledge-base/create-knowledge-and-upload-documents/setting-indexing-methods).

* **Embedding Model**: Allows you to modify the embedding model for the knowledge base. Changing the embedding model will re-embed all documents in the knowledge base, and the original embeddings will be deleted.

* **Retrieval Settings**: For detailed explanations, please refer to the [documentation](/en/learn-more/extended-reading/retrieval-augment/retrieval).

diff --git a/en/guides/knowledge-base/knowledge-and-documents-maintenance/maintain-knowledge-documents.mdx b/en/guides/knowledge-base/knowledge-and-documents-maintenance/maintain-knowledge-documents.mdx

index aaedcd7a..0cea0f8b 100644

--- a/en/guides/knowledge-base/knowledge-and-documents-maintenance/maintain-knowledge-documents.mdx

+++ b/en/guides/knowledge-base/knowledge-and-documents-maintenance/maintain-knowledge-documents.mdx

@@ -1,5 +1,5 @@

---

-title: Knowledge Base and Document Maintenance

+title: Maintain Documents

---

## Manage Documentations in the Knowledge Base

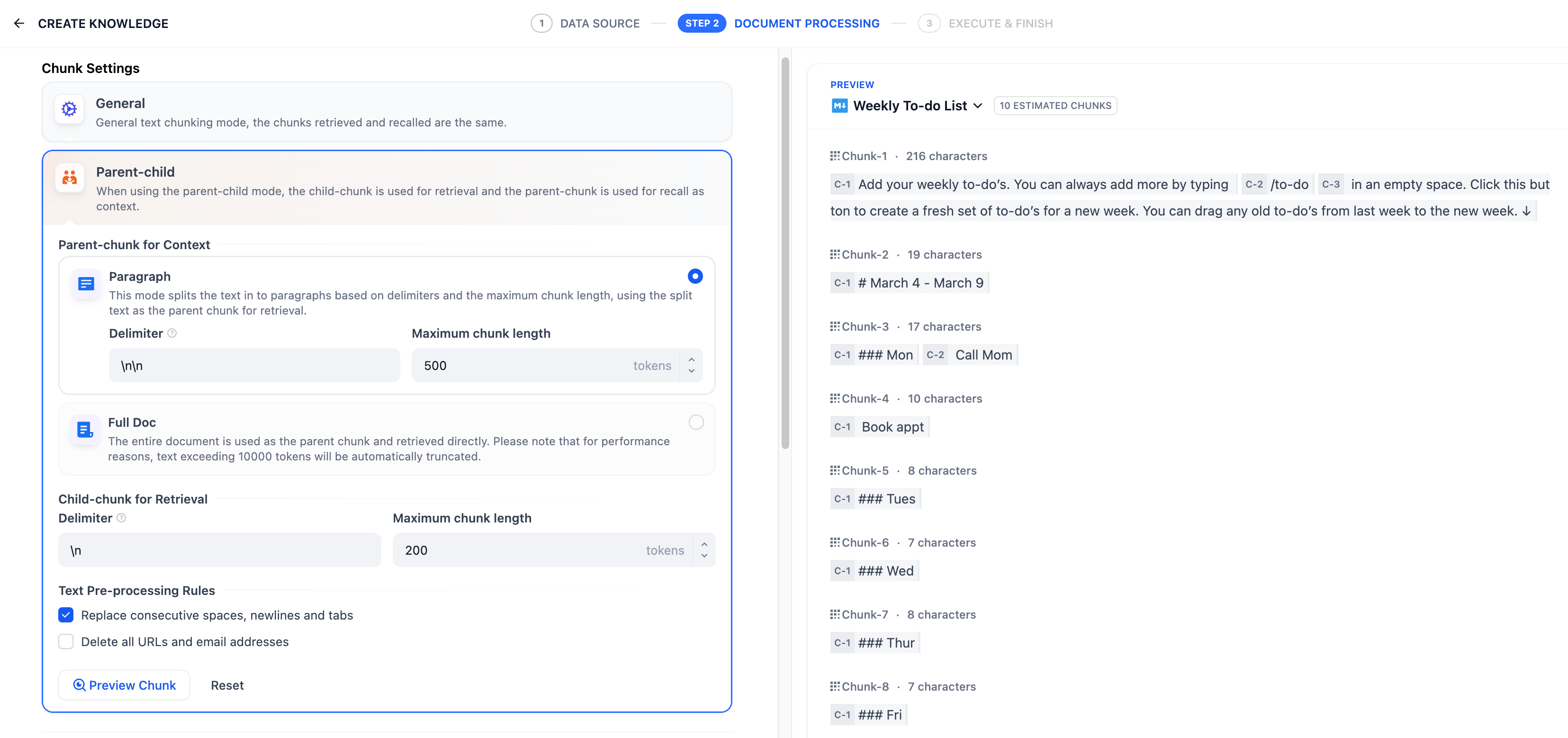

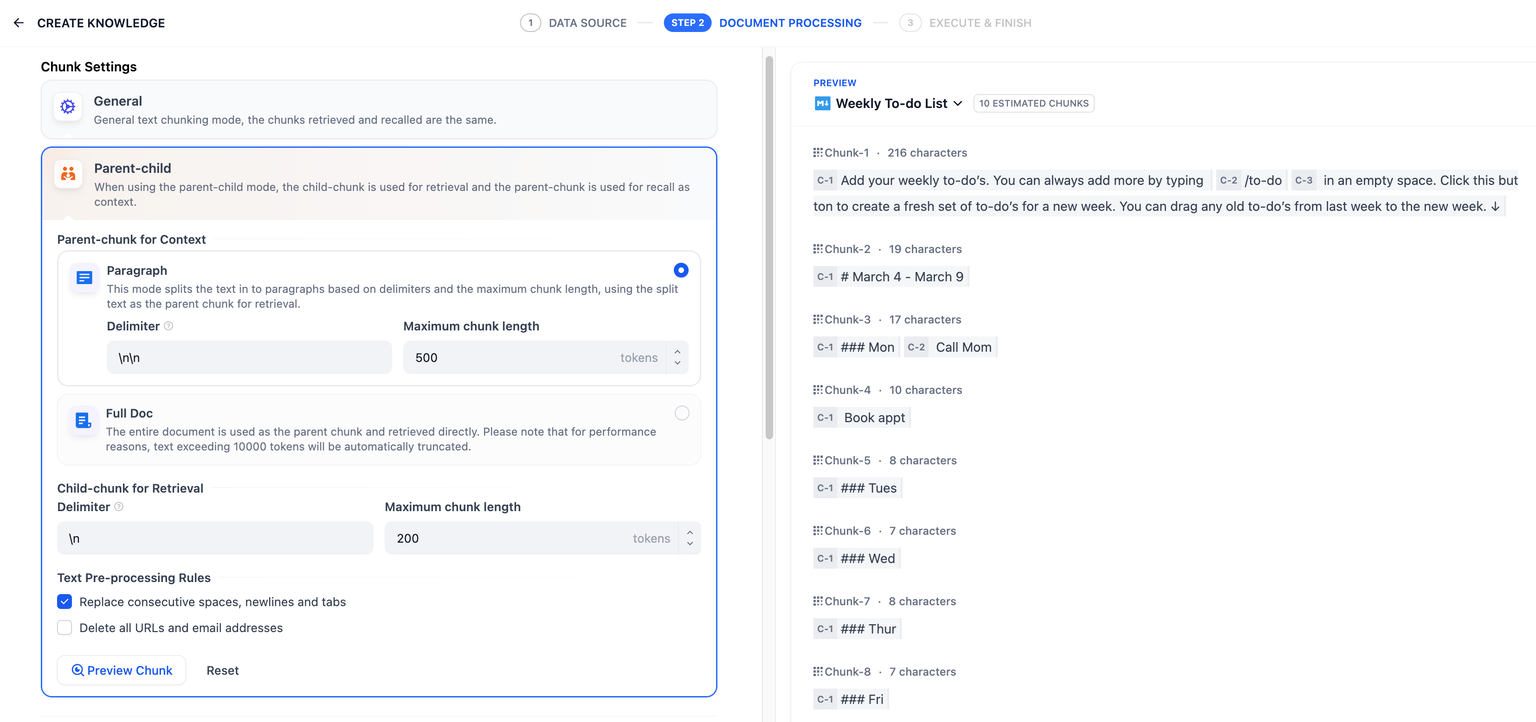

@@ -70,7 +70,7 @@ Different [chunking modes](/en/guides/knowledge-base/create-knowledge-and-upload

**Parent-child Mode**

- In[Parent-child](/en/guides/knowledge-base/create-knowledge-and-upload-documents/chunking-and-cleaning-text#parent-child-mode) mode, content is divided into parent chunks and child chunks.

+ In [Parent-child](/en/guides/knowledge-base/create-knowledge-and-upload-documents/chunking-and-cleaning-text#parent-child-mode) mode, content is divided into parent chunks and child chunks.

* **Parent chunks**

@@ -235,8 +235,6 @@ Go to **Chunk Settings**, adjust the settings, and click **Save & Process** to s

### Metadata

-In addition to capturing metadata (e.g., title, URL, keywords, or a web page description) from various source documents, metadata is also used as structured fields during the chunk retrieval process for filtering or displaying citation sources.

-

-

+For more details on metadata, see [_Metadata_](/en/guides/knowledge-base/metadata).

***

\ No newline at end of file

diff --git a/en/guides/knowledge-base/metadata.mdx b/en/guides/knowledge-base/metadata.mdx

index 738de16a..18006939 100644

--- a/en/guides/knowledge-base/metadata.mdx

+++ b/en/guides/knowledge-base/metadata.mdx

@@ -19,6 +19,7 @@ This guide aims to help you understand metadata and effectively manage your know

@@ -40,6 +41,7 @@ alt="metadata_field"

@@ -148,6 +150,7 @@ To create a new metadata field:

@@ -170,6 +173,7 @@ To edit a metadata field:

@@ -214,6 +218,7 @@ To add metadata in bulk:

@@ -222,6 +227,7 @@ To add metadata in bulk:

@@ -232,6 +238,7 @@ To add metadata in bulk:

@@ -240,6 +247,7 @@ To add metadata in bulk:

@@ -248,6 +256,7 @@ To add metadata in bulk:

@@ -256,6 +265,7 @@ To add metadata in bulk:

@@ -274,6 +284,7 @@ To update metadata in bulk:

@@ -282,6 +293,7 @@ To update metadata in bulk:

@@ -292,6 +304,7 @@ To update metadata in bulk:

@@ -308,6 +321,7 @@ Use **Apply to All Documents** to control changes:

@@ -330,6 +344,7 @@ On the document details page, click **Start labeling** to begin editing.

To add a single document's metadata fields and values:

1. Click **+Add Metadata** to:

+

- Create new fields via **+New Metadata**.

diff --git a/en/guides/knowledge-base/retrieval-test-and-citation.mdx b/en/guides/knowledge-base/retrieval-test-and-citation.mdx

index d20e633b..2fec4b82 100644

--- a/en/guides/knowledge-base/retrieval-test-and-citation.mdx

+++ b/en/guides/knowledge-base/retrieval-test-and-citation.mdx

@@ -57,13 +57,13 @@ If you want to permanently modify the retrieval method for the knowledge base, g

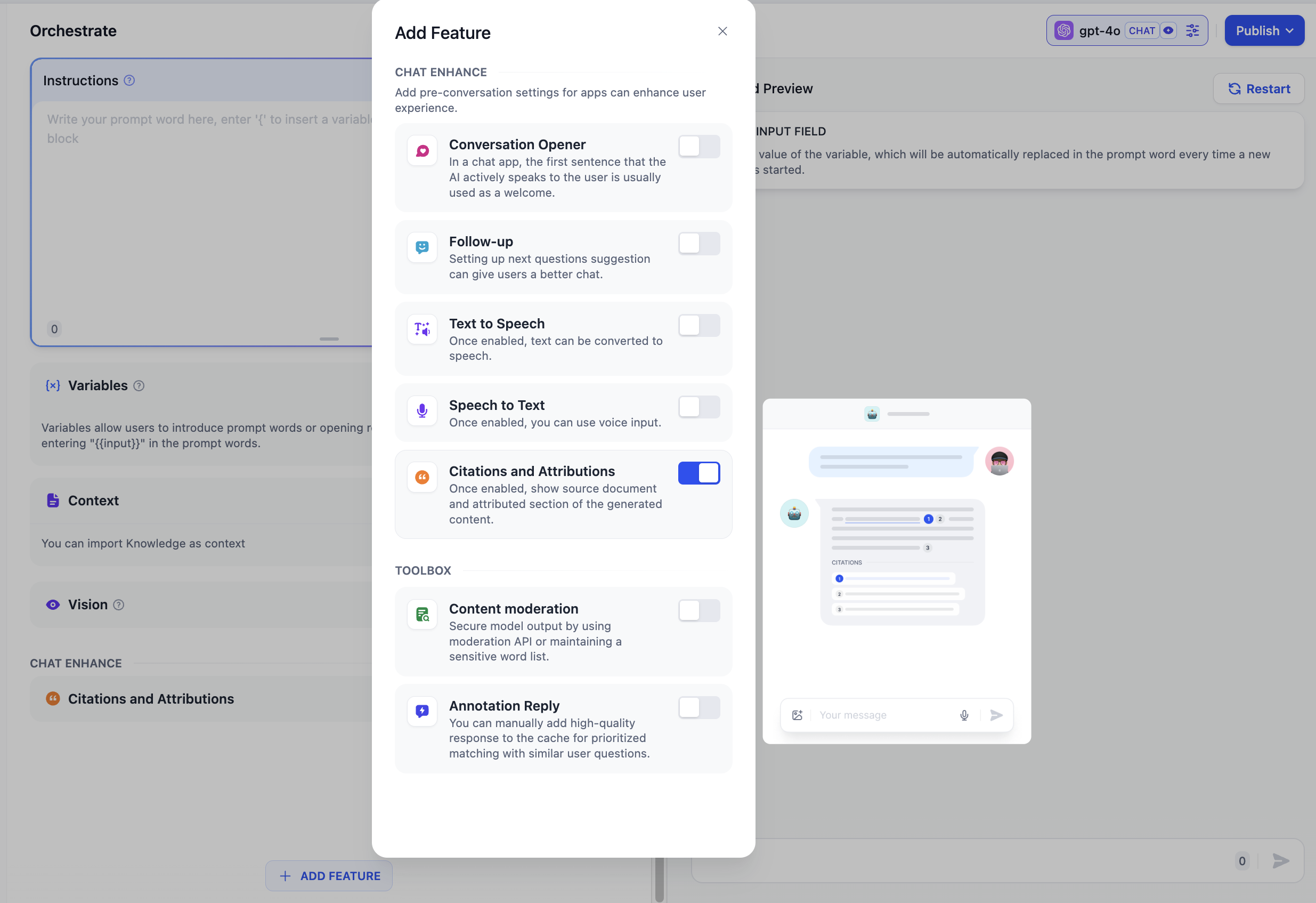

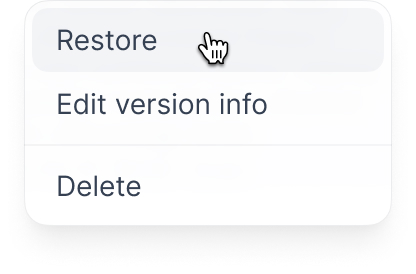

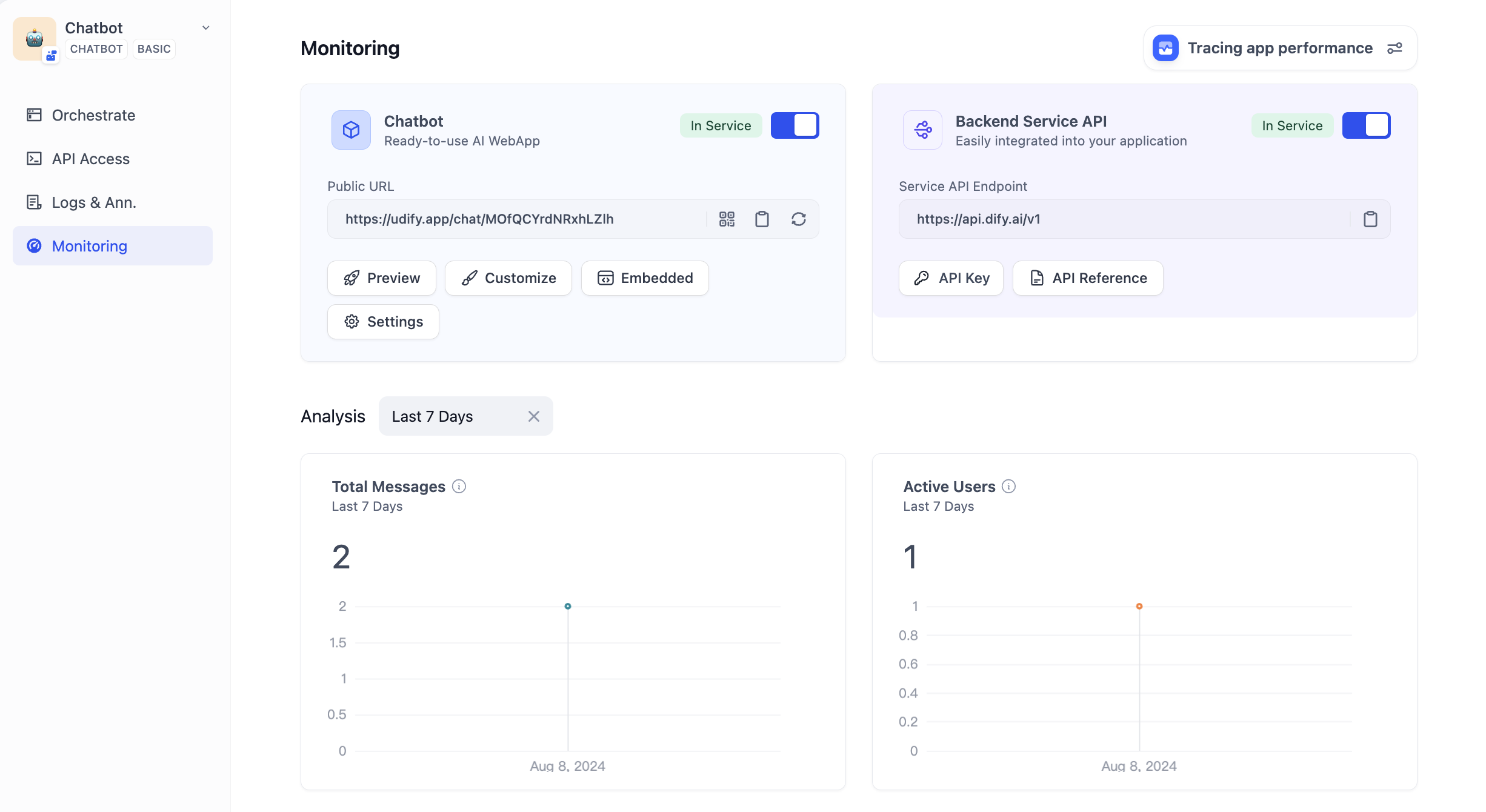

### 2. Citation and Attribution

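-When testing the knowledge base effect within the application, you can go to **Workspace -- Add Feature -- Citation and Attribution** to enable the citation attribution feature. After adding a knowledge base to the application's "Context", you can enable **"Citation and Attribution"** under **"Add Feature"**. After you enter a question in the application, if it involves linked knowledge base documents, the citation source of the content is labeled. You can use this to check whether the chunks recalled from the knowledge base meet expectations.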

+When testing the knowledge base effect within the application, you can go to **Workspace -- Add Feature -- Citation and Attribution** to enable the citation attribution feature.

After enabling the feature, when the large language model responds to a question by citing content from the knowledge base, you can view specific citation paragraph information below the response content, including **original segment text, segment number, matching degree**, etc. Clicking **Link to Knowledge** above the cited segment allows quick access to the segment list in the knowledge base, facilitating developers in debugging and editing.

-

+

### View Linked Applications in the Knowledge Base

diff --git a/en/guides/management/personal-account-management.mdx b/en/guides/management/personal-account-management.mdx

index 511a2d0c..f0934e47 100644

--- a/en/guides/management/personal-account-management.mdx

+++ b/en/guides/management/personal-account-management.mdx

@@ -53,7 +53,7 @@ To change the display language, click on your avatar in the upper right corner o

* Indonesian

* Ukrainian (Ukraine)

-Dify welcomes community volunteers to contribute additional language versions. Visit the [GitHub repository](https://github.com/langgenius/dify/blob/main/CONTRIBUTING.md) to contribute!

+Dify welcomes community volunteers to contribute additional language versions. Visit the [GitHub repository](https://github.com/langgenius/dify/blob/main/CONTRIBUTING) to contribute!

### View Apps Linked to Your Account

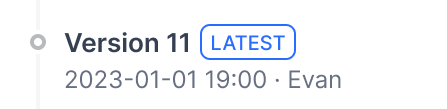

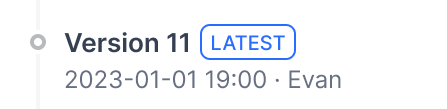

diff --git a/en/guides/management/version-control.mdx b/en/guides/management/version-control.mdx

index beea11bb..ceaa29c4 100644

--- a/en/guides/management/version-control.mdx

+++ b/en/guides/management/version-control.mdx

@@ -15,6 +15,7 @@ This article explains how to manage versions in Dify's Chatflow and Workflow.

diff --git a/en/guides/knowledge-base/retrieval-test-and-citation.mdx b/en/guides/knowledge-base/retrieval-test-and-citation.mdx

index d20e633b..2fec4b82 100644

--- a/en/guides/knowledge-base/retrieval-test-and-citation.mdx

+++ b/en/guides/knowledge-base/retrieval-test-and-citation.mdx

@@ -57,13 +57,13 @@ If you want to permanently modify the retrieval method for the knowledge base, g

### 2. Citation and Attribution