-After specifying the retrieval settings, you can refer to [Retrieval Test/Citation Attribution](/en-us/user-guide/knowledge-base/retrieval-test-and-citation) to check the matching between keywords and content chunks.

+After specifying the retrieval settings, you can refer to [Retrieval Test/Citation Attribution](/en/guides/knowledge-base/retrieval-test-and-citation) to check the matching between keywords and content chunks.

## 5. Complete Upload

-After configuring all the settings mentioned above, simply click "Save and Process" to complete the creation of your knowledge base. You can refer to [Integrate Knowledge Base Within Application](integrate-knowledge-within-application.md) to build an LLM application that can answer questions based on the knowledge base.

+After configuring all the settings mentioned above, simply click "Save and Process" to complete the creation of your knowledge base. You can refer to [Integrate Knowledge Base Within Application](/en/guides/knowledge-base/integrate-knowledge-within-application) to build an LLM application that can answer questions based on the knowledge base.

***

diff --git a/en/guides/management/app-management.mdx b/en/guides/management/app-management.mdx

index 24334558..9f991624 100644

--- a/en/guides/management/app-management.mdx

+++ b/en/guides/management/app-management.mdx

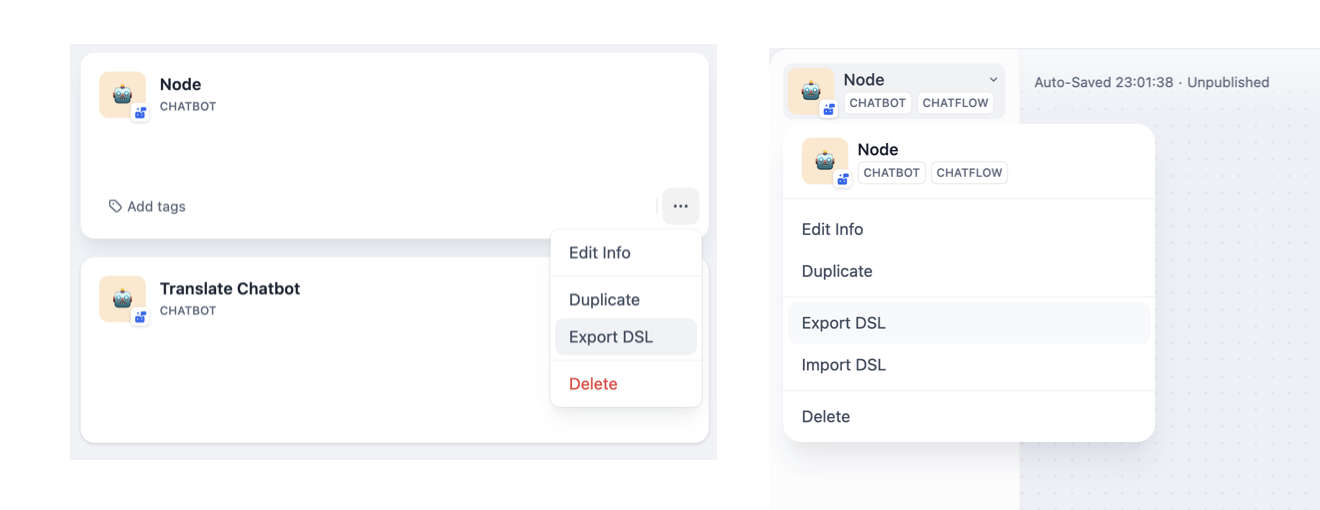

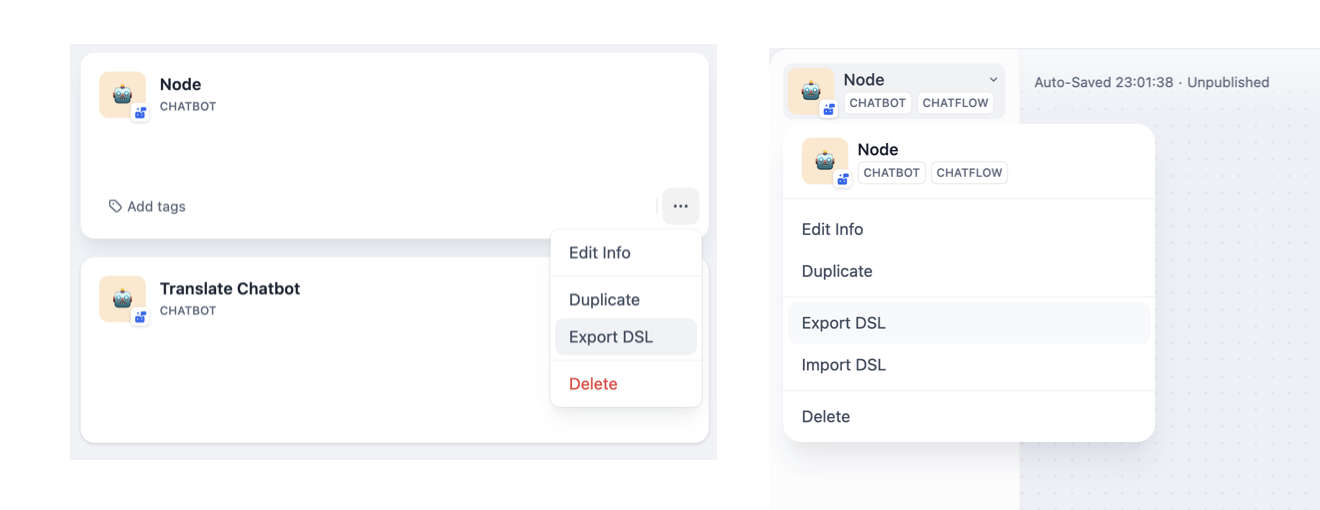

@@ -22,7 +22,7 @@ Applications created in Dify support export in DSL format files, allowing you to

-The DSL file does not include authorization information already filled in [Tool](../workflow/node/tools.md) nodes, such as API keys for third-party services.

+The DSL file does not include authorization information already filled in [Tool](/en/guides/workflow/nodes/tools) nodes, such as API keys for third-party services.

If the environment variables contain variables of the `Secret` type, a prompt will appear during file export asking whether to allow the export of this sensitive information.

@@ -37,7 +37,7 @@ Dify DSL is an AI application engineering file standard defined by Dify.AI in v0

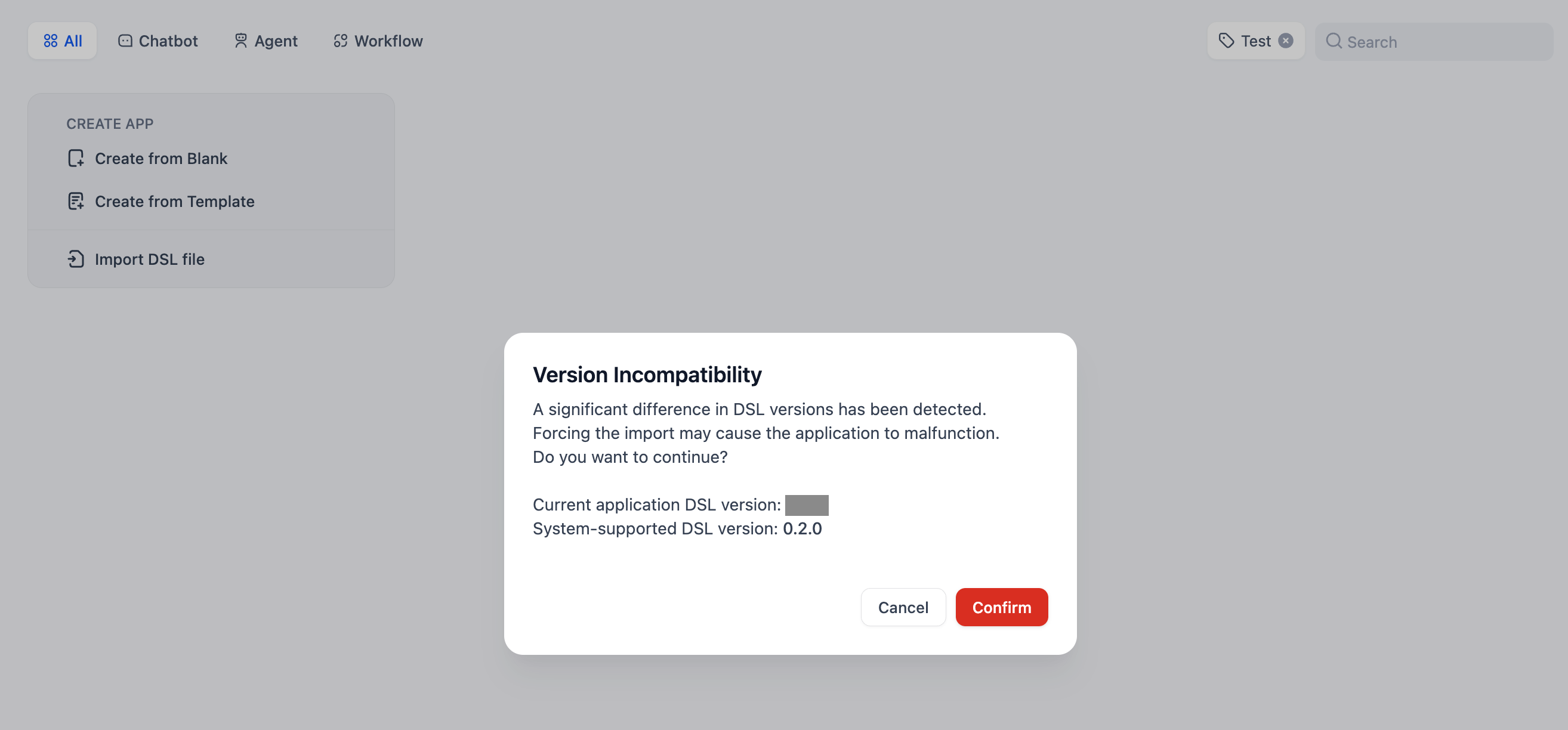

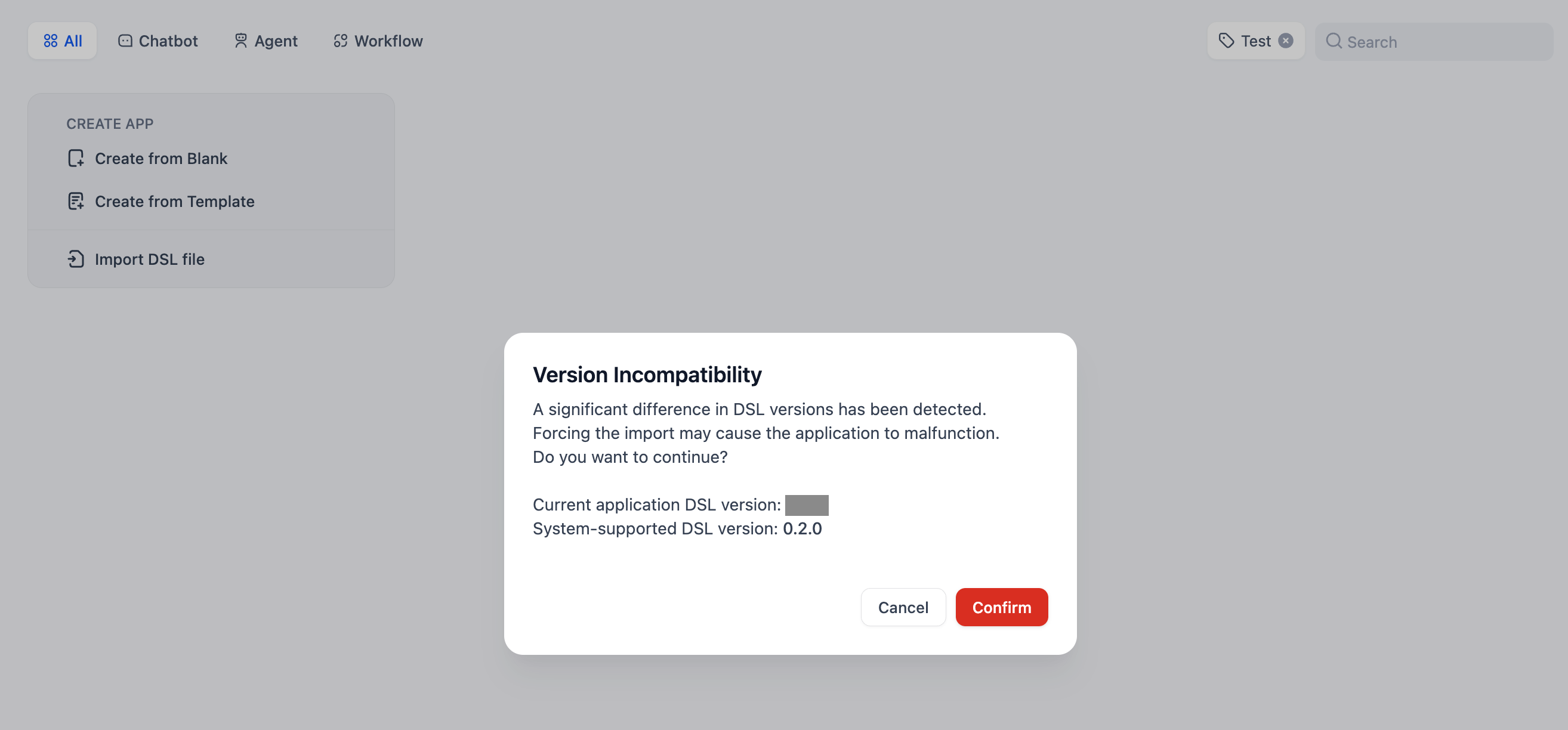

To import a Dify application, upload the DSL file to the Dify platform. A version check will be conducted during the import process, and a warning will be issued if a lower version of the DSL file is detected.

- For SaaS users, the DSL file exported from the SaaS platform will always be the latest version.

-- For Community users, it is recommended to consult [Upgrade Dify](https://docs.dify.ai/getting-started/install-self-hosted/docker-compose#upgrade-dify) to update the Community Edition and export an updated version of the DSL file, thus avoiding potential compatibility issues.

+- For Community users, it is recommended to consult [Upgrade Dify](/en/getting-started/install-self-hosted/docker-compose#upgrade-dify) to update the Community Edition and export an updated version of the DSL file, thus avoiding potential compatibility issues.
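The version check described above can be pictured with a minimal sketch. This is not Dify's actual import code: the function names, the dotted numeric version format, and the warning text are all assumptions made for illustration.

```python
from typing import Optional, Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version string such as '0.1.5' into (0, 1, 5).

    Assumes purely numeric, dot-separated components (hypothetical format).
    """
    return tuple(int(part) for part in text.strip().split("."))


def check_dsl_version(file_version: str, platform_version: str) -> Optional[str]:
    """Return a warning message if the DSL file is older than the platform, else None."""
    if parse_version(file_version) < parse_version(platform_version):
        return (
            f"DSL file version {file_version} is lower than the platform "
            f"version {platform_version}; consider re-exporting the file."
        )
    return None


if __name__ == "__main__":
    print(check_dsl_version("0.1.2", "0.1.5"))
    print(check_dsl_version("0.1.5", "0.1.5"))
```

Comparing tuples of integers rather than raw strings keeps `0.1.10` ordered after `0.1.9`, which a plain string comparison would get wrong.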

diff --git a/en/guides/management/subscription-management.mdx b/en/guides/management/subscription-management.mdx

index 3f1bd00f..c381d866 100644

--- a/en/guides/management/subscription-management.mdx

+++ b/en/guides/management/subscription-management.mdx

@@ -59,7 +59,7 @@ Upon cancellation of the subscription plan, **the team will automatically transi

| File Type | Supported Formats |
|---|---|
| Documents | TXT, MARKDOWN, PDF, HTML, XLSX, XLS, DOCX, CSV, EML, MSG, PPTX, PPT, XML, EPUB |
| Images | JPG, JPEG, PNG, GIF, WEBP, SVG |
| Audio | MP3, M4A, WAV, WEBM, AMR |
| Video | MP4, MOV, MPEG, MPGA |
| Others | Custom file extension support |

| Start | Defines the initial parameters for starting a workflow process. | |

| End | Defines the final output content for ending a workflow process. | |

| Answer | Defines the response content in a Chatflow process. | |

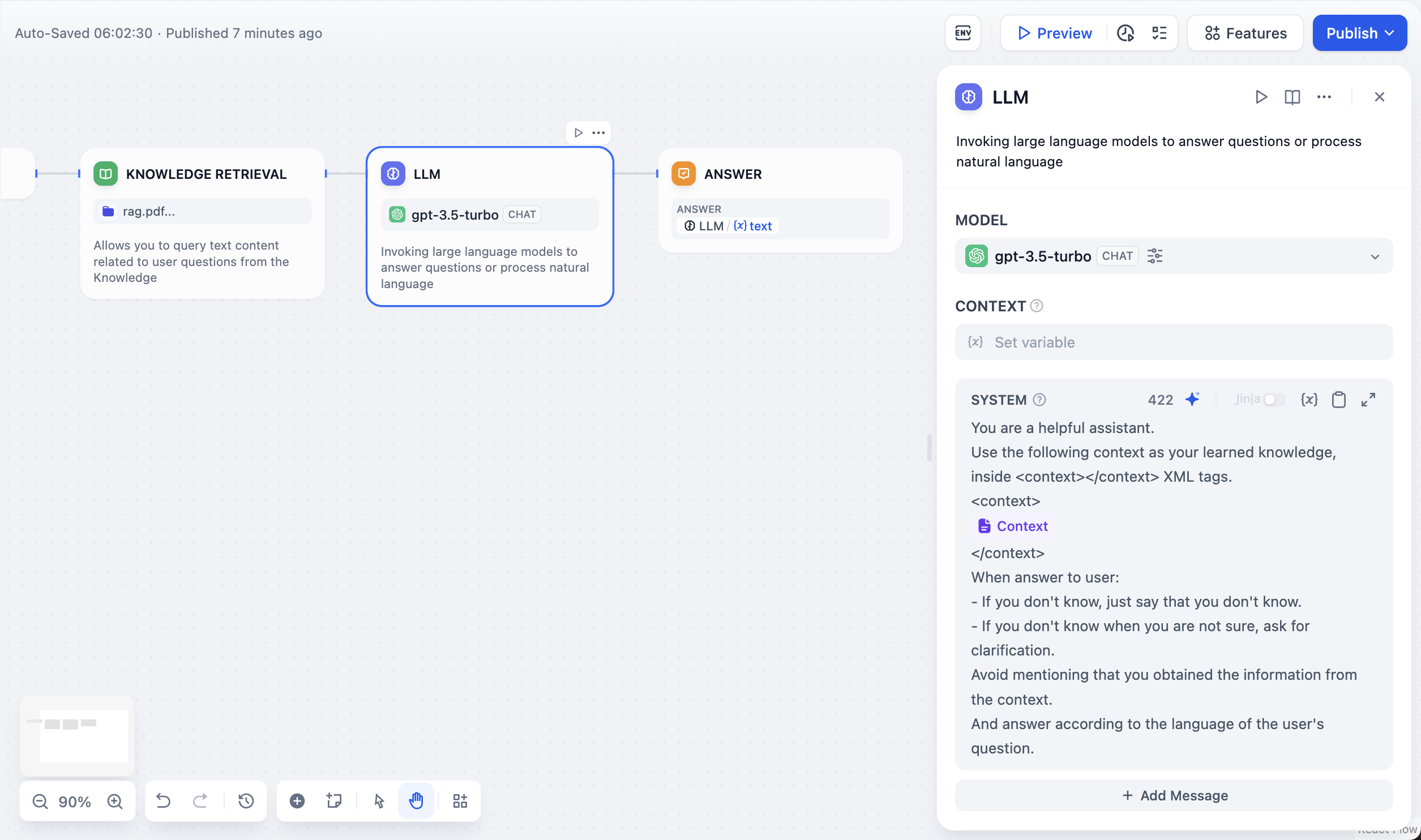

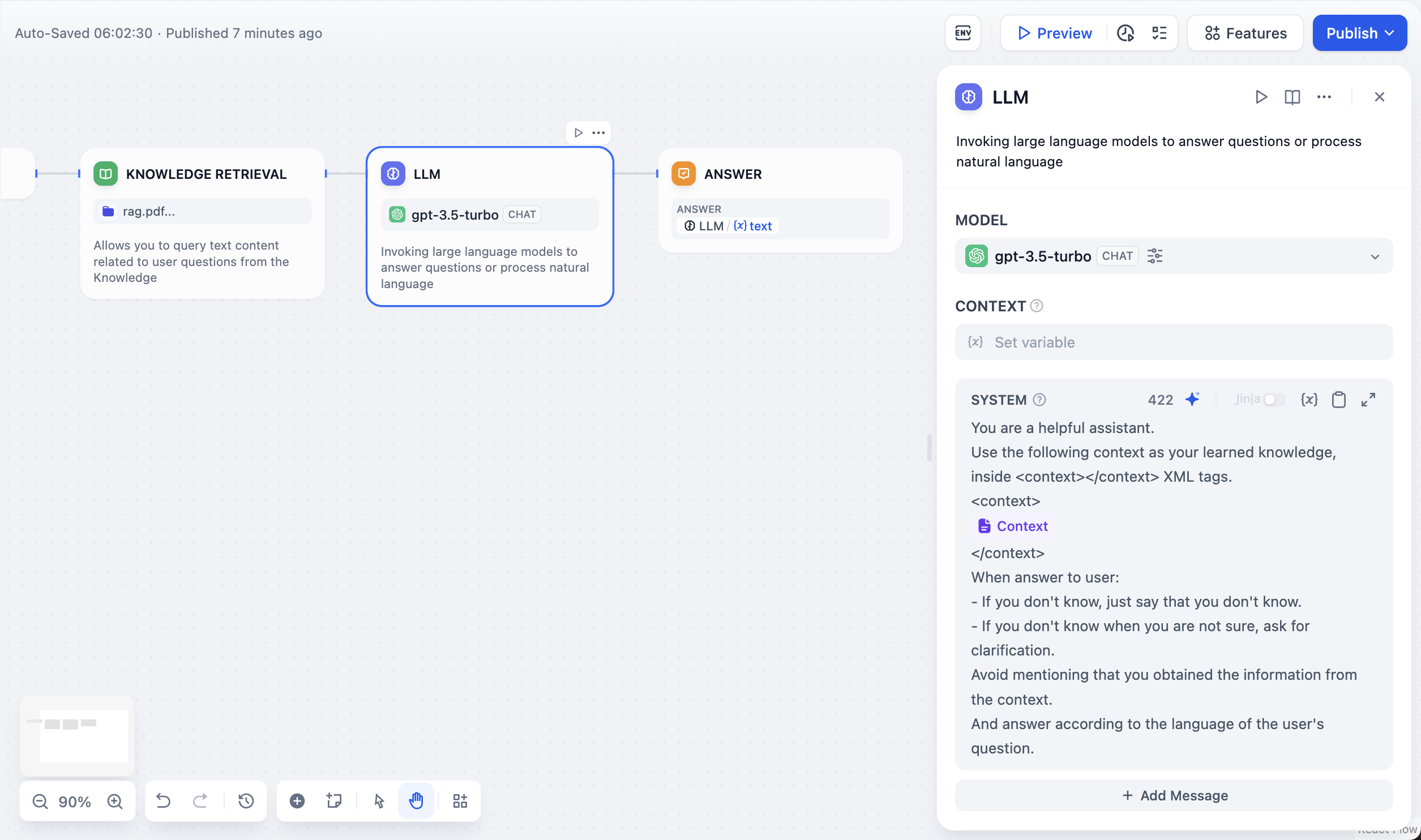

| Large Language Model (LLM) | Calls a large language model to answer questions or process natural language. | |

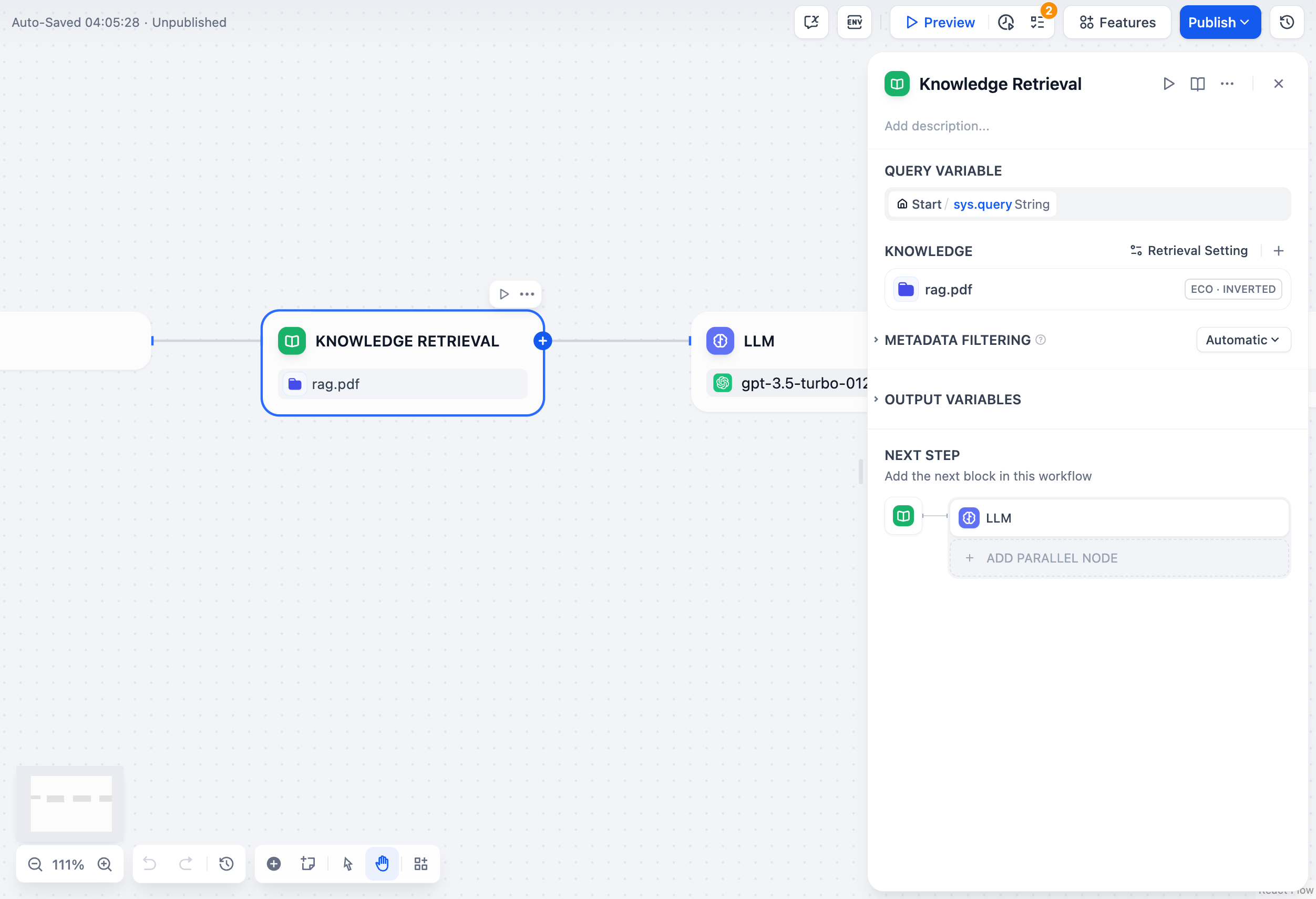

| Knowledge Retrieval | Retrieves text content related to user questions from a knowledge base, which can serve as context for downstream LLM nodes. | |

| Question Classifier | By defining classification descriptions, the LLM can select the matching classification based on user input. | |

| IF/ELSE | Allows you to split the workflow into two branches based on if/else conditions. | |

| Code Execution | Runs Python/NodeJS code to execute custom logic such as data transformation within the workflow. | |

| Template | Enables flexible data transformation and text processing using Jinja2, a Python templating language. | |

| Variable Aggregator | Aggregates variables from multiple branches into one variable for unified configuration of downstream nodes. | |

| Variable Assigner | The variable assigner node is used to assign values to writable variables. | |

| Parameter Extractor | Uses LLM to infer and extract structured parameters from natural language for subsequent tool calls or HTTP requests. | |

| Iteration | Executes multiple steps on list objects until all results are output. | |

| HTTP Request | Allows sending server requests via the HTTP protocol, suitable for retrieving external results, webhooks, generating images, and other scenarios. | |

| Tools | Enables calling built-in Dify tools, custom tools, sub-workflows, and more within the workflow. | |

| Loop | A Loop node executes repetitive tasks that depend on previous iteration results until exit conditions are met or the maximum loop count is reached. |
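The difference between the Iteration and Loop rows can be sketched in plain Python. This is only an analogy under stated assumptions, not how Dify executes these nodes: `run_iteration`, `run_loop`, and their parameters are hypothetical names.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def run_iteration(items: List[T], step: Callable[[T], R]) -> List[R]:
    """Iteration: apply the same step to every list element independently."""
    return [step(item) for item in items]


def run_loop(
    initial: T,
    step: Callable[[T], T],
    should_exit: Callable[[T], bool],
    max_count: int,
) -> T:
    """Loop: each round consumes the previous round's result.

    Stops when the exit condition holds or after max_count rounds.
    """
    state = initial
    for _ in range(max_count):
        state = step(state)
        if should_exit(state):
            break
    return state


if __name__ == "__main__":
    print(run_iteration([1, 2, 3], lambda x: x * 2))
    print(run_loop(1, lambda s: s * 2, lambda s: s >= 10, max_count=100))
```

In the iteration case no element depends on another, while in the loop case every round starts from the state the previous round produced.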

### Scenarios

@@ -138,25 +148,12 @@ Error handling examples:

Array variables can be generated via the following nodes as iteration node inputs:

-* [Code Node](code.md)

diff --git a/en/guides/workflow/nodes/list-operator.mdx b/en/guides/workflow/nodes/list-operator.mdx

index 04e55bc6..d5067187 100644

--- a/en/guides/workflow/nodes/list-operator.mdx

+++ b/en/guides/workflow/nodes/list-operator.mdx

@@ -70,7 +70,7 @@ Array elements that meet all filter conditions. Filter conditions, sorting, and

In file interaction Q\&A scenarios, application users may upload document files or image files simultaneously. LLMs only support the ability to recognize image files and do not support reading document files. At this time, the List Operation node is needed to preprocess the array of file variables and send different file types to corresponding processing nodes. The orchestration steps are as follows:

-1. Enable the [Features](../additional-features.md) function and check both "Images" and "Document" types in the file types.

+1. Enable the [Features](/en/guides/workflow/additional-features) function and check both "Images" and "Document" types in the file types.

2. Add two list operation nodes, setting to extract image and document variables respectively in the "List Operator" conditions.

3. Extract document file variables and pass them to the "Doc Extractor" node; extract image file variables and pass them to the "LLM" node.

4. Add a "Answer" node at the end, filling in the output variable of the LLM node.
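The orchestration steps above amount to splitting one array of uploaded files into two by type. A minimal sketch of that filtering logic follows; the `UploadedFile` class, its `type` values, and `filter_by_type` are hypothetical stand-ins, not Dify's file variable schema or the List Operator's implementation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UploadedFile:
    name: str
    type: str  # assumed to be "image" or "document" for this sketch


def filter_by_type(files: List[UploadedFile], wanted: str) -> List[UploadedFile]:
    """Keep only the files whose type matches, as one list operator node would."""
    return [f for f in files if f.type == wanted]


if __name__ == "__main__":
    uploads = [
        UploadedFile("chart.png", "image"),
        UploadedFile("report.pdf", "document"),
        UploadedFile("notes.docx", "document"),
    ]
    images = filter_by_type(uploads, "image")        # would go to the LLM node
    documents = filter_by_type(uploads, "document")  # would go to the Doc Extractor node
    print([f.name for f in images])
    print([f.name for f in documents])
```

Each call corresponds to one of the two list operation nodes in step 2, with the outputs routed to different downstream nodes as in step 3.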

diff --git a/en/guides/workflow/nodes/llm.mdx b/en/guides/workflow/nodes/llm.mdx

index dd31a288..7c6278c1 100644

--- a/en/guides/workflow/nodes/llm.mdx

+++ b/en/guides/workflow/nodes/llm.mdx

@@ -2,7 +2,6 @@

title: LLM

---

-

### Definition

Invokes the capabilities of large language models to process information input by users in the "Start" node (natural language, uploaded files, or images) and provide effective response information.

@@ -40,7 +39,7 @@ By selecting the appropriate model and writing prompts, you can build powerful a

4. **Advanced Settings**: You can enable memory, set memory windows, and use the Jinja-2 template language for more complex prompts.

-Dify offers two recall strategies for different knowledge base retrieval scenarios: "N-to-1 Recall" and "Multi-way Recall". In the N-to-1 mode, knowledge base queries are executed through function calling, requiring the selection of a system reasoning model. In the multi-way recall mode, a Rerank model needs to be configured for result re-ranking. For a detailed explanation of these two recall strategies, refer to the retrieval mode explanation in the [help documentation](https://docs.dify.ai/guides/knowledge-base/create-knowledge-and-upload-documents#id-5-indexing-methods).

+Dify offers two recall strategies for different knowledge base retrieval scenarios: "N-to-1 Recall" and "Multi-way Recall". In the N-to-1 mode, knowledge base queries are executed through function calling, requiring the selection of a system reasoning model. In the multi-way recall mode, a Rerank model needs to be configured for result re-ranking. For a detailed explanation of these two recall strategies, refer to the retrieval mode explanation in the [help documentation](/en/guides/knowledge-base/create-knowledge-and-upload-documents#id-5-indexing-methods).
